Deep Render entered 2024 with real technical progress on its AI-based codec. The team had a working model, live encoding and decoding in FFmpeg and VLC, published research, funding, and a belief that AI-based compression represented a step change rather than an academic experiment. What it lacked was sustained visibility with the streaming professionals who make codec decisions. The gap was not technology. It was recognition, credibility, and repetition in front of the right eyes.

Deep Render asked the Streaming Learning Center (SLC) to help close that perception gap through a campaign that did more than drop a single report or conference talk into a news cycle. The goal was clear: validate the technology independently, document performance honestly, and keep Deep Render in the codec conversation long enough for people to notice.

The companies agreed on a sequenced set of content pieces supported by LinkedIn promotion, newsletter mentions, and follow-up editorial work that kept AI codecs on the radar while the industry argued about AV1, VVC, and royalties.

This case study documents the content and related promotion. First, Deep Render CTO Arsalan Zafar recorded an instructional video explaining how AI codecs differ from traditional block-based compression. That was followed by the actual engagement, which delivered two core outputs.

The first was a live technical walkthrough with Arsalan and Deep Render engineer Sebastjan Cizel showing the Deep Render codec running in FFmpeg and VLC. The second was an independent performance evaluation published as a written report and a YouTube demonstration.

After that came supplemental editorial coverage, including an article on why metrics misread neural codecs. Over about five months, Deep Render was featured in articles, posts, newsletters, and videos consumed by codec experts and decision-makers, rather than being briefly announced and then forgotten.

What follows here is the work itself: what got published, where it was shared, who interacted with it, and how the story was kept alive long enough to matter.

Several months after the engagement concluded, InterDigital acquired Deep Render. We are making no claims of causation. But Deep Render was clearly visible. The right people saw the content, engaged with it, and discussed it. This document simply shows the trail of communication and visibility that preceded the acquisition.

This technical marketing case study is designed as a practical example of how to bring complex technology to market through sequencing, independent validation, and consistent multi-channel visibility. Deep Render is the example, but the underlying model applies to any company trying to move a sophisticated technical product from research circles into the hands of real users and decision makers. The goal is to show how a structured campaign builds recognition, credibility, and repetition long enough for the right people to actually notice.


Pre-Engagement Content: AI Codec Instructional Video

Before formal testing began, Deep Render commissioned an instructional video with CTO Arsalan Zafar explaining how AI-based compression works, why neural methods differ from block-based codecs, and how NPUs change the hardware conversation. The video was directed, recorded, and produced by the Streaming Learning Center.

The goal was awareness and technical education, not proof of performance. It worked. The video, entitled Foundations of AI-Based Codec Development: An Introductory Course, has over 1,701 views as of this article’s publication date, making it one of the most-watched neural codec explainers available at the time and confirming genuine interest in the subject beyond research circles.

The lesson was promoted via a LinkedIn post on Jan Ozer’s account. Entitled Foundations of AI-Based Codec Technology, the post reached 6,567 impressions, drawing engagement from compression researchers, streaming engineers, and companies evaluating next-generation codecs. The response signaled that AI-based compression was more than a novelty topic and gave Deep Render an audience to build on before validation began. It also validated that the Streaming Learning Center was a viable channel for streaming-related promotion.

Engagement Content: First FFmpeg/VLC Demo

The engagement began with a live technical walkthrough, published on YouTube, in which the Streaming Learning Center's Jan Ozer guided Deep Render CTO Arsalan Zafar and engineer Sebastjan Cizel through installation, encoding, decoding, and playback of the Deep Render codec inside FFmpeg and VLC. At a time when most AI-based codecs existed only in research papers or controlled demos, this session showed the technology running in real tools used throughout the streaming workflow. As of this writing, the video has 2,804 views.

The video was promoted via a LinkedIn post on Ozer’s account entitled Deep Render AI Codec Running in FFmpeg and VLC. To date, the post has gathered 10,074 impressions from 4,508 unique members. Post analytics showed that the audience included codec engineers and streaming specialists from Dolby, Microsoft, Meta, Google, AWS, and several research groups. The discussion confirmed that the people evaluating next-generation compression technology were paying attention.

This initial content established that Deep Render’s codec was a working implementation, not a lab-only model, and it laid the foundation for the independent performance evaluation that followed. As before, the video was directed, recorded, and produced by the Streaming Learning Center.

Engagement Content: Independent Evaluation Report

The centerpiece of the engagement was an independent technical evaluation of the Deep Render codec against state-of-the-art alternatives. Ozer reviewed Deep Render’s test methodology, command strings, and quality results, then compared performance against SVT-AV1 and other reference codecs.

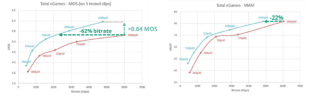

The analysis highlighted a key issue for neural codecs. Formal subjective testing showed Deep Render with roughly a 45 percent BD rate advantage over SVT-AV1, while VMAF showed an advantage closer to 3 percent. The report explained why this discrepancy occurs, how traditional metrics were trained, and why they struggle to capture the artifacts and improvements produced by AI-based compression. It also documented workflow considerations so that engineers could replicate the tests in their own environments.

The results were published on the Streaming Learning Center site as Deep Render: An AI Codec That Encodes in FFmpeg, Plays in VLC, and Outperforms SVT-AV1. As of this writing, the article has 3,960 page views, and the underlying report has been downloaded more than 2,398 times. For a technical white paper on a new codec, those are substantial numbers and indicate that the evaluation did not sit on a shelf.

Five LinkedIn pieces promoted the article over several weeks, using different formats and highlighting different aspects of the work rather than repeating the same message. The first post announced the evaluation itself, summarizing the main findings and linking directly to the article on SLC. This post served as the primary launch and has generated 9,417 impressions to date.

A second promotion appeared in Ozer's LinkedIn newsletter as an item titled First Look at an AI Codec in FFmpeg/VLC, which recapped the Deep Render results. That newsletter mention gathered 2,953 impressions and reached a slightly different segment of the audience, including executives and product managers who follow newsletter content more closely than standard posts.

A third post, Deep Render AI Codec in Action: FFmpeg Encoding and VLC Playback, shifted focus from charts and BD rate numbers to practical workflow, reinforcing that the codec ran in familiar tools and could be evaluated without exotic infrastructure. This post has drawn 4,260 impressions and appealed to hands-on practitioners who care more about “can I run this” than “what is the exact metric delta.”

Two additional posts returned to the testing and evaluation theme from different angles. One highlighted how the workflow and test assets were structured so that others could replicate the work. The other revisited the findings in the context of the broader codec landscape and the growing role of AI-based approaches. These posts generated 2,916 and 1,578 impressions, respectively.

All told, these five LinkedIn entries produced 21,124 impressions for the Deep Render evaluation. More importantly, they drove sustained attention to the underlying report, which, as noted, has been downloaded more than 2,398 times. The combination of a detailed technical article and a structured LinkedIn promotion plan ensured that the work reached not just a large audience, but the right one.

YouTube Walkthrough of the Deep Render Evaluation

Along with the written report, Ozer produced a YouTube walkthrough showing Deep Render's smooth integration with FFmpeg, VLC, and MediaInfo. The video demonstrated what the report described: excellent deployability and real-time performance without GPUs or custom hardware.

Ozer promoted the video in a LinkedIn post titled Deep Render Codec Workflow Walkthrough, which has garnered 1,679 impressions to date. The walkthrough was also featured in an SLC newsletter that produced 2,763 impressions, and in a second LinkedIn post focused on integration, An AI Codec That Just Works: Deep Render in FFmpeg and VLC, which drew 1,580 impressions.

Supplemental Content: Article Extending the Discussion Beyond Deep Render

After the evaluation report and walkthrough video were published, Ozer produced a follow-up article that extended the conversation beyond Deep Render and into a broader industry question. The piece, titled When Metrics Mislead: Evaluating AI-Based Video Codecs Beyond VMAF, examined the disconnect between Deep Render’s subjective and objective results and placed that experience in the context of published research on learned image and video compression. The article, which discusses the Deep Render findings in detail, has received 4,391 page views to date.

While not a Deep Render-specific deliverable, it kept the topic of neural compression active for readers of the Streaming Learning Center and reinforced the issue identified during the evaluation. The article was supported by three LinkedIn posts, each highlighting a different perspective on the metric reliability problem. The first post, titled We don’t just need better codecs, we need better metrics, reached 1,465 impressions.

A second post, If you are evaluating AI codecs using PSNR or VMAF, you might make confident decisions based on inaccurate data, summarized the research findings and gathered 706 impressions. A third post focused specifically on the Deep Render results. Titled "VMAF said 3 percent. Subjective tests said 45 percent. Which do you believe?", it reached 641 impressions and provided another point of discussion about the limitations of existing video quality tools.

Together, these posts produced 2,812 impressions and kept codec evaluators engaged long after the primary deliverables were released. This supplemental editorial coverage extended the visibility window for Deep Render by connecting its evaluation to a larger topic that was already gaining attention in the industry.

Conclusion

Across five months of coordinated content and promotion, the Streaming Learning Center delivered independent verification of performance, clear demonstrations of deployability, and repeated visibility in front of the engineers, architects, researchers, and decision-makers who shape codec adoption. The work was a structured sequence of articles, videos, LinkedIn posts, and newsletter mentions that kept Deep Render present in the codec conversation over an extended period.

The results reflected that consistency. The evaluation report and related content reached tens of thousands of targeted impressions. The report itself was downloaded more than 2,398 times, and the supporting videos and posts drew engagement from professionals at companies including Dolby, Microsoft, Meta, Google, AWS, and others who influence technical strategy in streaming and compression. Supplemental editorial coverage, including the metrics article, extended interest beyond Deep Render specifically by connecting the work to a broader industry issue.

Deep Render CTO Arsalan Zafar summarized the impact this way: "Jan's work helped boost our visibility within the codec-relevant community on LinkedIn and significantly increased awareness of what we were doing and what we had accomplished."

The engagement provided Deep Render with technical validation, market visibility, and a sustained presence during a period when AI-based codecs were beginning to enter serious discussion. Several months after the campaign concluded, Deep Render was acquired by InterDigital. This case study does not claim a causal connection. It simply documents the activity that preceded that outcome: a consistent flow of content, a clear technical story, and technical validation from a highly trusted source.

The Streaming Learning Center helps companies develop strategic communication plans and showcase their products in the codec and codec-adjacent streaming markets. If you’re interested in working together, contact Jan Ozer at jan.ozer@streaminglearningcenter.com.

Streaming Learning Center Where Streaming Professionals Learn to Excel