When I published When Metrics Mislead: Evaluating AI-Based Video Codecs Beyond VMAF earlier this year, the takeaway was blunt: traditional video quality metrics like PSNR, MS-SSIM, and VMAF simply can’t be trusted for AI-based codecs. My tests with Deep Render, and supporting research from JPEG AI and Microsoft’s MLCVQA project, showed that those metrics consistently underrate the perceived quality of learned compression. In my tests, VMAF credited Deep Render with just a 3 percent advantage where subjective testing showed 45 percent.

So, when a new paper titled Evaluating Video Quality Metrics for Neural and Traditional Codecs using 4K/UHD-1 Videos surfaced from the Audiovisual Technology Group, Technische Universität Ilmenau, and Institute for Communications Engineering (IENT), RWTH Aachen, I expected more of the same: another postmortem for legacy metrics. Instead, it complicated the picture. The authors found that for certain kinds of neural codecs, the old standbys still hold up remarkably well. The real insight isn’t that metrics are broken. It’s that they’re bound. Specifically, they work as long as the distortions stay within the space they were trained on.

The Study That Put It to the Test

The Ilmenau team set out to answer a simple question: Do existing objective metrics still track human perception when evaluating neural video codecs? To find out, they designed a formal subjective study that would be at home in any standards lab.

They compared four encoders: two traditional (AV1 and VVC) and two neural (DCVC-FM and DCVC-RT). Each codec compressed six 4K/60p videos, including clips like Big Buck Bunny, Daydreamer, and Water, at nine encoding points spread across four resolutions. That added up to 216 test sequences (4 codecs × 6 sources × 9 encodes), each rated by 30 participants in a controlled environment on a 43-inch UHD display, for 6,480 subjective scores in total. After outlier filtering, 26 subjects’ ratings made the final dataset.

The neural codecs came from Microsoft’s DCVC family, one tuned for feature modulation (FM) and one for real-time operation (RT). AV1 and VVC used realistic, production-style settings, AOM’s cpu-used=4 and VVenC’s medium preset, rather than artificially slow, high-efficiency modes.

DCVC was limited to low-delay P-frame configurations, which reduced efficiency but kept it aligned with its real-world design goals. At the time of testing, DCVC-FM and DCVC-RT didn’t support random-access or hierarchical GOP structures, which are typically developed later in the codec cycle. The authors note that this constraint inherently reduces compression efficiency compared to codecs like AV1 and VVC, which use full random-access configurations.

Figure caption (truncated): … using VCA [17] on the left, with stills from the videos on the right.

Testing Parameters

Testing disparate clips is always a challenge. Where some researchers fall into the trap of testing at a fixed bitrate for each clip, these researchers took the more time-consuming but correct approach of matching quality instead. They targeted specific PSNR levels so that quality, not bitrate, stayed roughly comparable across codecs and clips.

Each codec was tested at four resolutions (360p, 720p, 1080p, and 2160p). For each resolution, the authors defined several PSNR targets, typically 35, 38, 41, and 44 dB, and interpolated the encoder setting that hit those values. Table I in the paper lists the chosen parameters, which ranged from about QP 17 to 63 depending on codec and resolution. This approach produced overlapping quality ranges for all codecs, ensured that viewers saw clips from clearly impaired to visually lossless, and made the correlation analysis statistically meaningful.
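To make the interpolation step concrete, here is a minimal sketch of how you might pick the QP for a PSNR target from a handful of trial encodes. The function name, the probe values, and the choice of plain linear interpolation are my assumptions for illustration; the paper doesn't spell out its exact procedure in the passages quoted here.

```python
from bisect import bisect_left


def qp_for_target_psnr(probes, target_psnr):
    """Linearly interpolate the QP expected to produce target_psnr.

    probes: list of (qp, psnr_db) pairs measured from trial encodes.
    Returns None when the target lies outside the measured PSNR range,
    because this sketch deliberately does not extrapolate.
    """
    # Sort by PSNR (ascending) so the target can be bracketed.
    pts = sorted(probes, key=lambda p: p[1])
    psnrs = [p[1] for p in pts]
    if not psnrs or target_psnr < psnrs[0] or target_psnr > psnrs[-1]:
        return None
    i = bisect_left(psnrs, target_psnr)
    if psnrs[i] == target_psnr:
        return float(pts[i][0])
    (qp_lo, psnr_lo), (qp_hi, psnr_hi) = pts[i - 1], pts[i]
    frac = (target_psnr - psnr_lo) / (psnr_hi - psnr_lo)
    return qp_lo + frac * (qp_hi - qp_lo)


# Hypothetical probe encodes for one clip at one resolution (not paper data).
probes = [(22, 44.8), (32, 41.0), (42, 37.0), (52, 33.0)]
print(qp_for_target_psnr(probes, 38.0))  # 39.5
```

Most encoders accept only integer QP values, so in practice you would round the result and re-measure PSNR to confirm that the encode lands close enough to the target.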

The subjective scores covered the full five-point MOS scale, with a roughly normal distribution centered around 3. Figure 7 in the paper shows MOS versus bitrate; scores ranged from barely watchable (around 1) to near-transparent (around 5). VMAF followed the same trend, stretching from the mid-30s at the low end for 360p clips to the high-90s at the top. The test spanned almost the entire range of perceptual quality, which makes the correlation statistics far more reliable.

Had the team tested at fixed bitrates, motion-heavy clips like Water and Sparks15 would have dominated the results, since those sequences naturally compress less efficiently. Aligning tests by PSNR kept every source clip contributing about equally. It also mirrors how most streaming engineers tune their ladders in practice, by perceived quality rather than fixed bitrates. I would have aligned by VMAF, but the results would have been similar.

What They Found

The results were strikingly consistent across all codecs.

When the authors compared each metric’s predictions against mean opinion scores (MOS), VMAF and its “no enhancement gain” variant (VMAF-neg) came out on top, with Pearson correlations around 0.9. The hybrid model AVQBits|H0|f landed right next to them at 0.89. PSNR, despite its simplicity, showed the best rank-order consistency when comparing encodes of the same source clip.
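If you want to run the same kind of check on your own data, the two statistics are simple to compute. The sketch below uses only the Python standard library; the MOS and VMAF values are made up for illustration and are not taken from the paper. Pearson measures how linear the relationship is, while Spearman (Pearson applied to ranks) measures only whether the ordering agrees.

```python
from math import sqrt


def pearson(x, y):
    """Pearson linear correlation coefficient between two sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            out[order[k]] = avg
        i = j + 1
    return out


def spearman(x, y):
    """Spearman rank-order correlation: Pearson computed on the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical per-sequence values (illustrative only).
mos = [1.4, 2.1, 2.9, 3.6, 4.3, 4.7]
vmaf = [38.0, 52.0, 68.0, 80.0, 91.0, 96.0]
print(round(pearson(vmaf, mos), 3), round(spearman(vmaf, mos), 3))
```

To mirror the per-source comparison mentioned above, you would compute the rank-order statistic separately for the encodes of each source clip and then summarize across clips.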

Among the no-reference models, FasterVQA was the clear winner with a correlation of 0.8, followed by MUSIQ at 0.67. Even more important, there was no measurable drop in correlation when switching from traditional to neural codecs. The ΔNvT analysis, which is essentially a check for bias, showed no tendency for any metric to over- or under-rate neural content relative to traditional compression.
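A simple way to probe for this kind of bias in your own results is to fit one line from metric score to MOS across all sequences, then compare the average residual for each codec family. To be clear, this is my own stand-in for illustration and is not the paper's ΔNvT definition; the function names and data below are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return slope, my - slope * mx


def mean_residual_by_group(scores, mos, groups):
    """Mean of (MOS - predicted MOS) per group, using one shared fit.

    A positive value means viewers rated that group higher than the
    metric predicted, i.e. the metric underrates it.
    """
    slope, intercept = fit_line(scores, mos)
    sums, counts = {}, {}
    for s, m, g in zip(scores, mos, groups):
        residual = m - (slope * s + intercept)
        sums[g] = sums.get(g, 0.0) + residual
        counts[g] = counts.get(g, 0) + 1
    return {g: sums[g] / counts[g] for g in sums}


# Hypothetical data in which the metric underrates the neural encodes.
scores = [40, 60, 80, 40, 60, 80]
mos = [2.0, 3.0, 4.0, 2.5, 3.5, 4.5]
groups = ["traditional"] * 3 + ["neural"] * 3
bias = mean_residual_by_group(scores, mos, groups)
print(bias)
```

In this made-up example the neural group sits a quarter of a MOS point above the shared fit and the traditional group a quarter point below it. A result like the one the paper reports would show both groups near zero.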

Table II of the paper tells the tale (Table 1 below). Whether the sequence came from AV1, VVC, or one of the DCVC variants, the numbers barely move. For the first time, someone had empirical proof that VMAF still works just fine for at least some neural codecs.

Codec Efficiency: Still a Reality Check

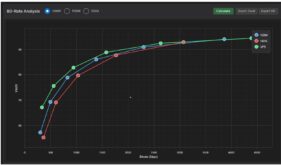

For those who care about relative codec quality, Figure 3 plots PSNR against bitrate for each resolution. As you can see, the DCVC-FM and DCVC-RT curves sit slightly below AV1 and VVC, showing lower PSNR at the same bitrate. That’s because, as noted earlier, the authors tested DCVC in low-delay P-frame mode, while AV1 and VVC used random-access hierarchical GOPs. Without bidirectional prediction or long reference chains, DCVC’s compression efficiency will naturally lag, and Figure 3 makes that difference visible.

The authors went beyond the rate–distortion curves and also examined visual differences between the traditional and AI-based codecs. They write that “visible blocking artifacts [are] present with AV1 and VVC, while the DCVCs produce smoother images. Some details, like the car’s logo, are better preserved by DCVC, while others, such as the grille, are preserved by AV1 and VVC but lost using DCVC.” In short, DCVC trades sharpness for smoothness, which produces different artifacts rather than improved efficiency. The key takeaway is that the visual differences were conventional compression artifacts, which helps explain why metrics like VMAF still correlated well across all codecs.

Why Metrics Held Up Here but Failed Elsewhere

This is where the two lines of research finally meet.

When Metrics Mislead focused on AI-native codecs like Deep Render and JPEG AI. These are end-to-end learned systems, often built on Generative Adversarial Networks (GANs) or diffusion models, that don’t predict or transform in the traditional sense; they synthesize content. They can reconstruct detail by hallucination, sharpen edges perceptually, and sometimes generate structure that wasn’t in the original. Those transformations break pixel- and feature-similarity metrics because they violate the assumption that “difference” equals “error.” A generated texture might look better to humans but worse numerically.

DCVC and other neural-hybrid codecs, by contrast, remain predictive systems. They use neural models to improve motion compensation, entropy coding, or residual prediction but still operate within the conventional hybrid framework: reference frames, motion vectors, transforms, quantization, and reconstruction. Their artifacts include blurring, ringing, and quantization noise, which are all familiar.

That’s why Ilmenau’s results don’t contradict earlier findings. They apply to a different species of “AI codec.” The metrics held up because DCVC’s distortions still look like compression, not synthesis. In a sense, the neural model sits inside the traditional pipeline; it’s not reinventing it.

This distinction may sound semantic, but it’s practical. It’s the line between “metric-friendly” neural codecs and those that need a new perceptual framework entirely. Hybrid learned codecs extend the old rules; generative ones rewrite them.

The Broader Implication for Metric Design

The Ilmenau paper’s conclusion is cautious but clear: no significant difference in metric reliability between traditional and neural codecs. However, it concludes with a call for further testing, particularly as neural codecs evolve toward more generative behavior.

That’s the real inflection point. If the next wave of codecs, including Deep Render’s successors or diffusion-based architectures, continues to move away from predictive coding, the industry will need new metrics trained directly on those distortions. VMAF may survive in form but not in function; its SVR-based regression model can easily be retrained with new features, but its current feature set was tuned for transform-based compression, not generative synthesis.

Ilmenau’s decision to release its full dataset (sources, encoded clips, subjective ratings, and metric results) under an open-science model is a big deal. It’s the first benchmark that connects traditional and neural codecs within the same controlled subjective framework, giving researchers a bridge dataset for the next generation of metric development.

Practical Takeaways for Evaluators

For developers and streaming professionals trying to make sense of codec evaluations, the lessons are straightforward.

If you’re testing a hybrid learned codec like DCVC, DHVC, or an AV1/VVC variant with neural in-loop filtering, VMAF, PSNR, and AVQBits|H0|f are still reliable guides. They won’t perfectly match subjective scores, but they’ll rank and track quality directionally well.

If you’re evaluating a fully learned, AI-native codec that’s been end-to-end trained on reconstruction loss or GAN feedback, those same metrics can mislead. They’ll penalize detail synthesis, reward structural similarity over perceptual quality, and often underrate results that look better to human eyes.

In these cases, use multiple metrics. Compare their movement, not just their absolute values. Divergence among metrics is often a signal that you’ve left the “safe zone” where they were trained to operate. And when the results really matter—new architecture, new business claim—there’s no substitute for a visual check or a small-scale subjective test.
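One lightweight way to watch for that divergence is to compare the direction each metric moves between a baseline encode and a candidate. The sketch below assumes every metric is higher-is-better; the metric names, values, and per-metric dead zones are hypothetical, not recommendations.

```python
def direction(delta, eps):
    """+1 if the metric improved, -1 if it dropped, 0 if within the dead zone."""
    if delta > eps:
        return 1
    if delta < -eps:
        return -1
    return 0


def metrics_diverge(candidate, baseline, eps=None):
    """Flag disagreement in the direction of change across metrics.

    candidate, baseline: dicts mapping metric name -> score (higher is better).
    eps: optional dict of per-metric dead zones; defaults to 0 for each metric.
    Returns (diverged, signs), where signs maps metric name -> -1, 0, or +1.
    """
    eps = eps or {}
    signs = {
        name: direction(candidate[name] - baseline[name], eps.get(name, 0.0))
        for name in baseline
    }
    nonzero = {s for s in signs.values() if s != 0}
    return len(nonzero) > 1, signs


# Hypothetical scores for two encodes of the same source clip.
baseline = {"psnr": 38.2, "ms_ssim": 0.972, "vmaf": 88.0}
candidate = {"psnr": 37.1, "ms_ssim": 0.975, "vmaf": 91.5}
diverged, signs = metrics_diverge(candidate, baseline)
print(diverged, signs)
```

Here PSNR drops while MS-SSIM and VMAF rise, so the check flags the pair. A flag doesn't tell you which metric is right; it tells you which comparisons deserve a visual check.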

Conclusion

The lesson from Ilmenau isn’t that VMAF has been redeemed; it’s that it never really failed in the domains it was built for. As we saw with PSNR and SSIM, metrics don’t break; they age out as they are applied to content they weren’t designed for. As codecs evolve, their distortions drift, and so does the reliability of the tools we use to measure them.

When Metrics Mislead revealed that conventional metrics fail to capture the performance of generative AI codecs. TU Ilmenau’s work shows they still work perfectly well for learned hybrids.

For now, that’s good news for anyone evaluating practical, hybrid neural codecs. The old instruments still play in tune. But as the next generation of generative codecs comes into view, we’ll need new instruments entirely.

Streaming Learning Center Where Streaming Professionals Learn to Excel


Hilariously, the only thing more complex than comparing these codecs is the codecs themselves! It’s like trying to judge a book by its cover, but the cover is moving and was painted by an AI that doesn’t care about plot holes. Who knew VMAF could be this timeless, still predicting human preference even when the content is basically Photoshop-ifying reality? But hey, at least the neural codecs aren’t *too* different from their traditional relatives: no sudden leaps into generating entire scenes out of thin air yet. We’ll just stick to our old metrics for now, unless the next codec starts generating its own subjective rating based on how good it *thinks* it looks. Stay classy, compression!