For most of the live encoding era, the operator’s main job was picking a bitrate. You’d set a target, the encoder would hit it, and quality was whatever came out the other end. Simple content looked great. Complex content looked terrible. The encoder didn’t care because you told it to deliver bits, not quality.

Then came a generation of smarter modes that flipped the question. Instead of “how many bits?”, you’d specify “how good?” and let the encoder figure out the bits. That was a genuine improvement. But the quality signal was always internal and opaque: a proprietary number, a “quality level” knob, or a perceptual model you couldn’t inspect or independently verify.

Now a third wave is arriving. Several vendors are computing explicit perceptual metrics, specifically VMAF and its real-time variants, during encoding. In some cases, those metrics feed directly back into rate control. The quality signal isn’t hidden inside the encoder anymore. It’s named, it’s measurable, and in the most advanced implementations, it’s driving bitrate allocation frame by frame.

From Bitrate Knobs to Quality Knobs

This post traces that evolution through three phases and looks at what it means for your next encoder decision.

Phase 1: Capped CRF, the Original Quality-First Control

Before vendor-specific quality modes existed, the open-source world gave us CRF (Constant Rate Factor). The core idea: instead of targeting a bitrate, target a quality level and let the encoder vary the bitrate to hit it. Here’s how one write-up describes it:

“The Constant Rate Factor (CRF) is the default quality (and rate control) setting for the x264 and x265 encoders… This is a one-pass mode that is optimal if the user does not desire a specific bitrate, but specifies quality instead.” https://huyunf.github.io/blogs/2017/12/05/x264_rate_control/

In practice, this means the encoder looks at each scene and decides how many bits it needs:

“The x264 encoder uses Constant Rate Factor (CRF) as a rate control mode that prioritizes consistent visual quality over fixed bitrate. The encoder dynamically allocates more bits to complex scenes while using fewer for simpler ones, resulting in variable bitrate output.” https://ffmpeg.party/guides/x264/

When you pair CRF with a maximum bitrate constraint, you get what’s commonly called “capped CRF.” This is the original “set quality, not bitrate” control, and it still underlies a lot of production workflows. It was a real improvement over CBR and basic VBR, but the quality signal is entirely internal. You’re trusting the encoder’s own complexity model, not an explicit perceptual metric. There’s no VMAF score in the loop, no way to say “I want 93 VMAF on this channel.” You set a CRF number and the encoder does its best.
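As a concrete reference point, here is a minimal sketch of what capped CRF looks like when driving FFmpeg’s libx264 encoder. The `-crf`, `-maxrate`, and `-bufsize` options are standard FFmpeg/x264 controls; the helper name, file names, and the specific values (CRF 23, 4 Mbps cap, 8 Mb buffer) are illustrative assumptions, not recommendations from any vendor.

```python
from typing import List


def capped_crf_args(src: str, dst: str, crf: int = 23,
                    maxrate_kbps: int = 4000,
                    bufsize_kbps: int = 8000) -> List[str]:
    """Build an ffmpeg argument list for capped-CRF H.264 encoding (libx264).

    Hypothetical helper for illustration; the defaults are placeholders.
    """
    if not 0 <= crf <= 51:
        raise ValueError("8-bit x264 CRF values range from 0 to 51")
    return [
        "ffmpeg", "-i", src,
        "-c:v", "libx264",
        "-crf", str(crf),                 # quality target; lower means higher quality
        "-maxrate", f"{maxrate_kbps}k",   # the cap: peak rate allowed by the VBV model
        "-bufsize", f"{bufsize_kbps}k",   # VBV buffer over which the cap is enforced
        dst,
    ]


if __name__ == "__main__":
    # Print the command rather than running it, so the sketch works without ffmpeg.
    print(" ".join(capped_crf_args("input.mp4", "output.mp4")))
```

Notice what is missing: nothing in that argument list mentions VMAF or any other perceptual metric. The CRF value is the only quality input the operator has.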

Phase 2: QVBR, EyeQ, and Vendor Quality Modes

The next generation kept the same basic idea (let the encoder decide how many bits each scene needs) but wrapped it in more sophisticated, vendor-specific quality models.

QVBR (AWS Elemental)

AWS formalized the quality-defined approach with QVBR, Quality-Defined Variable Bitrate:

“When you use QVBR, you specify the quality level for your output and the maximum peak bitrate. The encoder determines the right number of bits to use for each part of the video to maintain the video quality that you specify.” https://docs.aws.amazon.com/elemental-server/latest/ug/using-qvbr.html

“With quality-defined variable bitrate mode (QVBR), you specify a maximum bitrate and a quality level. Video quality will match the specified quality level…” https://docs.aws.amazon.com/elemental-live/latest/ug/qvbr-and-rate-control-mode.html

QVBR clearly drives bitrate based on quality, and it’s a real step up from pure CBR or basic VBR. But the quality signal is an internal AWS metric exposed only as a numeric “quality level.” You don’t get to say “target VMAF 93.” You say “quality level 8” and trust that AWS has tuned that number to mean something perceptually reasonable. It works well in practice, but the signal is proprietary and opaque.

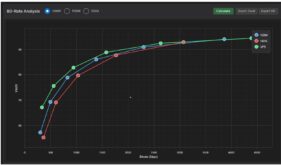

Figure 1. Harmonic’s EyeQ

EyeQ (Harmonic)

Harmonic takes a different approach, leaning heavily on perceptual modeling:

“EyeQ content-aware encoding utilizes AI to dynamically adjust encoding parameters based on what truly matters to the viewer — how the human eye perceives detail, motion, and contrast. By optimizing video quality perceptually, EyeQ achieves visually superior results at significantly lower bitrates. The compression technology intelligently distinguishes between regions of visual importance and less critical details, allocating bits only where they contribute most to perceived quality.” https://www.digitalmediaworld.tv/awards/harmonic-ai-powered-eyeq-content-aware-encoding

That’s from an awards submission, and it reads like one. What it does establish is that Harmonic describes EyeQ as a content-aware system that uses perceptual models to allocate bits. Whether that’s meaningfully different from other content-aware VBR modes, and what “AI” means in this context, the quote doesn’t say. What EyeQ shares with QVBR is the key limitation: the quality signal is proprietary and unexposed. You can’t set a VMAF target, and you can’t independently verify what the encoder thinks “perceptually optimal” means.

The Phase 2 Pattern

QVBR, EyeQ, and similar content-aware encoding modes from other vendors share a common architecture: they’ve moved from “you pick the bitrate” to “you pick the quality, we pick the bitrate.” That’s genuinely useful. But the quality signal is a black box in every case. You can’t independently verify what the encoder thinks “good” means, you can’t compare it across vendors, and you can’t set a target in terms of a metric you can measure yourself after the fact.

Phase 3: Explicit Metric-Driven Systems (2024-2026)

This is where things get interesting. A new set of technologies is making the quality signal explicit, named, and measurable. In the most advanced cases, a VMAF-class metric is computed in real time and fed directly back into rate control. The encoder doesn’t just promise quality. It measures quality using a metric you can independently verify.

Synamedia: pVMAF and QC-VBR

Synamedia has been the most explicit about putting a named perceptual metric inside the rate control loop. Their pVMAF (predictive VMAF) is designed specifically for real-time use:

“pVMAF’s ability to deliver highly accurate VQM with only a negligible increase in CPU usage (~0.06% increase in CPU cycles for FHD encoding) — making it ideal for real-time video quality assessment.” https://www.synamedia.com/blog/real-time-video-quality-assessment-with-pvmaf/

That 0.06% CPU overhead number matters. Standard VMAF is too expensive to run inside a live encoding loop. pVMAF is fast enough to run in-line without meaningfully affecting encoder performance. And Synamedia doesn’t just use it for monitoring. They’ve integrated it directly into rate control:

“Proactive rate control: pVMAF can also play an active role in rate control, acting as a feedback mechanism during constant-quality encoding. This functionality is already integrated into Synamedia’s QC-VBR (Quality-Controlled Variable Bitrate) rate control algorithm, ensuring that the target quality is consistently met without sacrificing efficiency.” https://www.synamedia.com/blog/real-time-video-quality-assessment-with-pvmaf/

On the operational side, Synamedia exposes pVMAF as a transparency and targeting tool:

“Unlike traditional ‘black box’ VBR implementations, Synamedia’s vDCM provides transparency through pVMAF-based quality insights… Operators can specify desired pVMAF values to prioritize video quality based on channel importance.” https://www.synamedia.com/blog/streamlining-video-encoding-operations/

This is metric-driven rate control in the most direct sense. The quality signal is a named, VMAF-class metric. It runs in real time inside the encoding loop. Operators can set target pVMAF values per channel. And because pVMAF correlates with standard VMAF, the quality targets are independently verifiable, not just trust-the-vendor numbers.
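To make “metric in the loop” concrete, here is a toy sketch of the general idea: measure a quality score, compare it to the operator’s target, and nudge the quantizer accordingly. This is emphatically not Synamedia’s QC-VBR algorithm, which isn’t public. The quality model (`toy_quality`), the proportional gain, and the QP limits are all assumptions invented for illustration.

```python
from typing import List, Tuple


def toy_quality(qp: float, complexity: float) -> float:
    """Hypothetical stand-in for a real-time VMAF-class score (0-100).

    Quality drops as QP rises, and drops faster on complex content.
    A real encoder would get this number from its in-line metric.
    """
    return max(0.0, min(100.0, 100.0 - complexity * max(0.0, qp - 10.0)))


def next_qp(qp: float, measured: float, target: float, gain: float = 0.25,
            qp_min: float = 10.0, qp_max: float = 51.0) -> float:
    """Proportional step: below target lowers QP (more bits), above raises it."""
    error = measured - target
    return max(qp_min, min(qp_max, qp + gain * error))


def run_loop(target: float, complexities: List[float],
             qp: float = 30.0) -> List[Tuple[float, float]]:
    """Simulate the feedback loop; returns (qp, measured score) per step."""
    history = []
    for complexity in complexities:
        score = toy_quality(qp, complexity)   # measure
        history.append((qp, score))
        qp = next_qp(qp, score, target)       # feed back into rate control
    return history


if __name__ == "__main__":
    # 40 steps of simple content, then a cut to content twice as complex.
    for qp, score in run_loop(93.0, [1.0] * 40 + [2.0] * 40)[::10]:
        print(f"qp={qp:.2f} score={score:.2f}")
```

The point of the sketch is the shape of the loop, not the numbers: the target is expressed in the metric’s own units, so when content complexity changes, the controller moves the quantizer until the measured score returns to that target.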


MainConcept: VMAF-E and vScore

MainConcept has taken a similar approach with VMAF-E, their real-time approximation of standard VMAF:

“VMAF-E, developed by MainConcept and part of the vScore video quality metrics suite, is an AI-powered breakthrough in video quality measurement. Performance closely matches standard VMAF, but is optimized for dynamic, time-sensitive streams… Built into the encoding process, VMAF-E analyzes in coding order, not presentation order, so your results are truly real-time.” https://www.mainconcept.com/vmaf-e

Two things stand out. First, VMAF-E is explicitly designed to match standard VMAF’s accuracy while running fast enough for live encoding. Second, it works in coding order rather than presentation order, which is a technical requirement for operating inside a live encoding pipeline where you can’t wait for future frames.
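If the coding-order point seems abstract, a small example helps. The sketch below uses a deliberately simplified model, assumed for illustration: each B-frame depends on the next I- or P-frame, so that reference must be encoded first. Real encoders use more elaborate structures (hierarchical B-frames, for example), and the function name is hypothetical.

```python
from typing import List, Tuple

Frame = Tuple[int, str]  # (presentation index, frame type: "I", "P", or "B")


def coding_order(frames: List[Frame]) -> List[Frame]:
    """Reorder frames from presentation order to a simplified coding order.

    Assumption: B-frames are held back until the next I/P reference frame
    has been emitted, then follow it in their original order.
    """
    ordered: List[Frame] = []
    pending_b: List[Frame] = []
    for frame in frames:
        if frame[1] == "B":
            pending_b.append(frame)      # must wait for the next reference
        else:
            ordered.append(frame)        # reference frame is coded first
            ordered.extend(pending_b)    # then the B-frames that needed it
            pending_b.clear()
    ordered.extend(pending_b)            # trailing B-frames in this toy input
    return ordered


if __name__ == "__main__":
    presentation = list(enumerate("IBBPBBP"))
    print("presentation:", " ".join(f"{t}{i}" for i, t in presentation))
    print("coding:      ", " ".join(f"{t}{i}" for i, t in coding_order(presentation)))
```

A metric that needs frames in presentation order has to wait for that reordering to be undone. One that works in coding order can score each frame as the encoder produces it, which is what makes it usable for feedback during encoding.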

MainConcept is also explicit about where VMAF-E sits in the encoding architecture:

“VMAF-E is fast enough to be utilized within the rate control loop of the encoding process and can be computed in coding order.” https://blog.mainconcept.com/constant-target-quality-encoding-driven-by-perceptual-fidelity

That’s a direct statement: within the rate control loop. Not monitoring. Not post-encode analysis. The metric feeds back into bitrate decisions during encoding. The broader vScore suite adds operational visibility:

“Real-time feedback empowers operators to optimize encoding settings on the fly, fostering quality control and rapid response at any scale.” https://www.mainconcept.com/vscore

Like Synamedia’s pVMAF/QC-VBR, MainConcept’s VMAF-E/vScore represents explicit metric-in-the-loop bitrate control: a named, VMAF-class metric used during encoding, not just after.

Meta + FFmpeg: In-Loop Metrics at Hyperscale

Meta’s recent work with upstream FFmpeg brings real-time quality metrics into the open-source ecosystem, though with an important distinction from Synamedia and MainConcept:

“Using upstreamed patches and refactorings we’ve been able to fill two important gaps that we had previously relied on our internal fork to fill: threaded, multi-lane transcoding and real-time quality metrics.” https://engineering.fb.com/2026/03/02/video-engineering/ffmpeg-at-meta-media-processing-at-scale/

“In the end, we can produce a quality metric for each encoded lane in real time using a single FFmpeg command line. Thanks to ‘in-loop’ decoding, which was enabled by FFmpeg developers including those from FFlabs and VideoLAN, beginning with FFmpeg 7.0, we no longer have to rely on our internal FFmpeg fork for this capability.” https://engineering.fb.com/2026/03/02/video-engineering/ffmpeg-at-meta-media-processing-at-scale/

What Meta describes is real-time, per-lane computation of quality metrics (PSNR, SSIM, and VMAF-type metrics) during encoding, at hyperscale, using upstream FFmpeg rather than a proprietary fork. That’s significant for the broader ecosystem because it means these capabilities are available to anyone building on FFmpeg 7.0+, not just Meta.

However, it’s important to note what Meta does not describe. The blog frames this as measurement and analysis, not closed-loop rate control. Meta is computing quality metrics inline during encoding, which is a prerequisite for metric-driven rate control, but the blog does not claim they’re feeding those metrics back into bitrate decisions. The primary stated benefits are de-forking (getting off their internal FFmpeg fork and onto upstream) and real-time quality visibility across their encoding fleet. Whether Meta uses these metrics to steer bitrate internally is something the blog doesn’t address.

Ateme: TITAN, VMAF, and Bitrate Savings

Ateme positions its TITAN platform and content-aware encoding around VMAF-validated quality:

“Our encoding solutions consistently deliver up to 20% bitrate savings across a wide range of formats and codecs… The result? … Superior VMAF scores, reflecting true perceptual performance.” https://www.ateme.com/reimagining-video-quality-metrics-and-efficiency/

This tells us that Ateme’s encoding pipeline is designed and tuned around VMAF. Engineering decisions, tuning parameters, and validation workflows clearly use VMAF as a primary quality measure, and the 20% bitrate savings claim is framed in terms of maintaining or improving VMAF scores.

What this does not establish is whether VMAF runs inside the rate control loop during encoding, the way Synamedia’s pVMAF and MainConcept’s VMAF-E explicitly do. Based on this published material, the strongest supported claim is that Ateme is VMAF-tuned and VMAF-validated, delivering documented bitrate savings at equal or better perceptual quality. Whether the rate control loop itself is metric-driven isn’t something the public documentation addresses.

Ateme recently got a valuable technology endorsement when Netflix signed a multi-year deal to deploy TITAN Live for its live streaming workflows. The Broadband TV News report on the deal notes that TITAN uses VMAF alongside subjective assessments to minimize visual artifacts, which reinforces the VMAF-validated positioning described above. https://www.broadbandtvnews.com/2026/03/03/ateme-signs-multi-year-titan-live-transcoder-deal-with-netflix/

Pulling the Trend Together

The progression is clear. Phase 1 (CRF) moved us from “you set the bitrate” to “you set the quality.” Phase 2 (QVBR, EyeQ) made quality-driven encoding more sophisticated but kept the quality signal proprietary. Phase 3 is making the quality signal explicit, named, and in the most advanced cases, directly driving rate control.

Two vendors, Synamedia and MainConcept, have published explicit statements that their VMAF-class metrics operate inside the rate control loop. Meta has brought real-time quality metrics into upstream FFmpeg at hyperscale, creating infrastructure that could support closed-loop control even if Meta hasn’t documented using it that way. Ateme has built its optimization and validation pipeline around VMAF, even if the rate control loop role isn’t publicly documented.

Taken together, these examples suggest a clear direction: perceptual quality metrics are moving from post-encode validation tools to real-time encoding signals, and in some cases, to active rate control drivers.

Here’s the landscape in a table:

Live quality control technologies

| Technology / Mode | Provider | Primary Quality Signal | Drives Bitrate? (How) |
|---|---|---|---|
| Capped CRF | Open-source (x264/x265) | Encoder-internal complexity heuristics | Yes: varies bitrate to maintain a constant CRF-defined quality target. |
| QVBR | AWS Elemental | Internal “Quality Level” (1-10) | Yes: quality-defined VBR that caps at a user-defined max bitrate. |
| EyeQ | Harmonic | AI-powered perceptual model of human vision | Yes: content-aware bit allocation; prioritizes regions of visual importance. |
| pVMAF + QC-VBR | Synamedia | pVMAF (Predictive VMAF) | Yes: explicit feedback loop; pVMAF informs the QC-VBR rate controller. |
| VMAF-E + vScore | MainConcept | VMAF-E (Real-time VMAF proxy) | Yes: VMAF-E runs within the rate control loop in coding order. |
| In-Loop Metrics | Meta + FFmpeg 7.0+ | PSNR / SSIM / VMAF | No (publicly): used for real-time monitoring and fleet-wide visibility. |
| TITAN / CAE | Ateme | VMAF used for tuning and validation | VMAF-tuned/validated; closed-loop role not publicly documented. |

What to Demand from Your Next Live Encoder

If you’re evaluating live encoders today, this trend has practical implications for what you should be asking vendors.

First, ask whether the encoder exposes a named perceptual metric during encoding. Not a proprietary quality level, not an opaque score, but a metric you can independently verify, like VMAF or a documented VMAF-correlated variant. Real-time visibility into a known metric lets you compare quality across vendors, across channels, and across time in a way that proprietary quality levels never can.

Second, ask whether that metric is used in the rate control loop. There’s a meaningful difference between an encoder that computes VMAF after encoding (useful for monitoring) and one that uses VMAF to drive bitrate decisions during encoding (useful for quality guarantees). Both Synamedia and MainConcept have documented this capability. If a vendor claims quality-driven encoding but can’t explain what metric drives it or where it sits in the encoding pipeline, that’s worth pressing on.

Third, ask about metric targeting. Can you set a per-channel VMAF target and have the encoder allocate bits to hit it? Synamedia’s pVMAF-based channel prioritization is the clearest public example of this capability. If your operation runs a mix of premium and economy channels, the ability to set explicit quality targets per tier, expressed in a metric you can independently verify, is more useful than a single global quality knob.
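Independent verification doesn’t require anything exotic. The sketch below assumes an FFmpeg build with libvmaf enabled and uses its `libvmaf` filter to score an encoded file against its source after the fact, then checks measured scores against per-tier targets. The tier names, the economy target of 85, the one-point tolerance, and the helper names are hypothetical; the premium target of 93 simply echoes the example used earlier in this post.

```python
from typing import Dict, List


def vmaf_measure_args(distorted: str, reference: str,
                      log_path: str = "vmaf.json") -> List[str]:
    """Build an ffmpeg command that scores `distorted` against `reference`.

    Assumes ffmpeg was built with libvmaf, and that both files have the same
    resolution and frame alignment. The first input is the encoded output.
    """
    return [
        "ffmpeg", "-i", distorted, "-i", reference,
        "-lavfi", f"libvmaf=log_fmt=json:log_path={log_path}",
        "-f", "null", "-",   # discard video output; only the metric log is kept
    ]


# Hypothetical per-tier targets, in VMAF points.
TIER_TARGETS: Dict[str, float] = {"premium": 93.0, "economy": 85.0}


def channels_below_target(measured: Dict[str, float], tiers: Dict[str, str],
                          targets: Dict[str, float] = TIER_TARGETS,
                          tolerance: float = 1.0) -> List[str]:
    """Return channels whose measured score misses its tier target by more than `tolerance`."""
    return sorted(
        channel for channel, score in measured.items()
        if score < targets[tiers[channel]] - tolerance
    )


if __name__ == "__main__":
    print(" ".join(vmaf_measure_args("encoded.mp4", "source.mp4")))
    # Illustrative numbers only, not measurements from any real channel.
    print(channels_below_target(
        {"sports1": 91.2, "news2": 86.0},
        {"sports1": "premium", "news2": "economy"},
    ))
```

This kind of cross-check only works when the encoder’s target is stated in a metric you can also compute yourself, which is the practical argument for asking all three questions.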

The broader point is this: quality-first encoding has been available for years, but the quality signal has been a black box. The most interesting development in live encoding right now isn’t a new codec or a faster chip. It’s the emergence of transparent, measurable, independently verifiable quality signals that sit inside the encoding loop. Your next live encoder should let you see them, set targets against them, and ideally use them to drive bitrate directly. That’s where the field is heading, and the vendors who’ve gotten there first are already publishing the results.

Streaming Learning Center Where Streaming Professionals Learn to Excel
