Before comparing your existing H.264 encodes to HEVC, AV1, or any other advanced codec, you need a baseline you can trust. In practice, many codec efficiency claims collapse once you examine how inefficient the underlying H.264 ladder actually is. This article focuses on fixing that baseline before any codec comparison begins.

Let’s take a step back. This is the second in a series of articles on the steps you should take before adopting a codec other than H.264. The first article, “Beyond H.264: Why Codec Choices Now Carry Legal and Financial Risk,” details how advanced codecs like HEVC, AV1, VP9, and VVC are likely subject to royalties for streaming services that deploy them.

As I discuss in the first article, if you’re distributing 4K/HDR content, you can’t use H.264, so it’s HEVC/AV1 or no HDR. In these cases, any associated royalties are an unavoidable cost of doing business.

On the other hand, if you’re considering an advanced codec primarily for bandwidth efficiency, content royalties significantly increase implementation cost and demand a more rigorous economic analysis. In my upcoming course, Beyond H.264, I provide tools, templates, and a structured workflow to quantify the real costs and savings of adding HEVC, AV1, or VP9 using your own content, ladders, and viewing distributions. The course is equally relevant if you’ve already deployed these codecs and now need to re-evaluate that decision in light of content-related royalties.


Towards an Accurate Analysis of H.264

Step 1, discussed in this article, is to ensure you’re encoding your H.264 streams as efficiently as possible. Obviously, any improvements here benefit your operation going forward, and full optimization provides the most accurate baseline for comparing any advanced codec. For the record, Beyond H.264 details similar optimizations for HEVC, AV1, and VP9 to ensure the most accurate comparison.

In the course, I address this complete list for H.264.

H.264 Efficiency Techniques

Major levers

- Per‑title / per‑scene content adaptation

- Per-genre encoding

- Encoder implementation

Medium levers

- Preset / speed–quality point (within an encoder)

- Bitrate‑control mode (CBR, capped VBR/CRF, etc.)

- GOP structure and prediction (size, B‑frames, refs, scene‑cut I‑frames)

Minor levers

- Psychovisual tools (AQ, psy‑RD, grain, denoise, etc.)

In this article, I’ll briefly explore the first two, per-title and per-genre encoding, which have by far the most potential impact, and introduce the tools that I’ve developed to assist this analysis.

The most impactful lever: per-title encoding

Per-title encoding, also called content-adaptive encoding, creates a custom encoding ladder for each video file. Netflix coined the term “per-title” in its seminal article titled “Per-Title Encode Optimization,” published in 2015. The high-level premise is that all video files are different, so using the same ladder for all encodes is inherently inefficient.

In subsequent tests using per-scene adaptations, Netflix claimed, “One can notice that the dynamic optimizer improves all three codecs by approximately 28–38%.” Those figures are directionally useful, but the more important takeaway is that static ladders leave a ton of efficiency on the table.

In addition, the advantages of per-title encoding go far beyond bandwidth efficiency and avoiding pool-related content royalties. It’s an encoding-only solution you can implement without extensive playback or other testing. Unlike adding a second codec, it doesn’t require encoding and storing each file twice, so storage costs decrease, and caching becomes even more efficient because more videos can be cached in the same amount of space. Despite these obvious advantages, the latest Bitmovin Video Developer Report found that only 27% of companies have implemented per-title.

Full stop, take a breath. If you recommend that your service deploy HEVC or AV1 before implementing some form of per-title, you’re committing transcoding malpractice.

How to Implement Per-Title

There are multiple ways to implement per-title encoding. First, you can implement capped CRF encoding, or a similar mode available for all codecs, including hardware transcoding for live use. I just finished a consulting project where the publisher distributed AVC/VP9 for VOD and AVC/AV1 for live using NVIDIA-based transcoding, and all live and VOD streams used a form of per-title encoding to good effect.
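To make the first option concrete, here’s a minimal Python sketch that assembles a capped CRF command for x264 via FFmpeg. CRF sets a quality target and lets the bitrate float with content complexity, while `-maxrate` and `-bufsize` cap the peaks, so easy clips come in well below the cap. `capped_crf_args` is a hypothetical helper, the CRF value, cap, and resolution are placeholder assumptions rather than recommendations, and setting `-bufsize` equal to `-maxrate` simply mirrors the command string in Appendix I.

```python
import shlex
from typing import List


def capped_crf_args(src: str, dst: str, crf: int = 23, maxrate_kbps: int = 6000,
                    width: int = 1920, height: int = 1080) -> List[str]:
    """Build an FFmpeg/x264 capped CRF command (illustrative values only)."""
    return [
        "ffmpeg", "-y", "-i", src, "-an",
        "-c:v", "libx264", "-preset", "veryslow",
        "-crf", str(crf),                     # quality target; bitrate varies with content
        "-maxrate", f"{maxrate_kbps}k",       # cap on peak bitrate
        "-bufsize", f"{maxrate_kbps}k",       # VBV buffer, equal to maxrate as in Appendix I
        "-vf", f"scale={width}:{height}:flags=lanczos",
        dst,
    ]


if __name__ == "__main__":
    # Print the command rather than running it; pass the list to subprocess.run to encode.
    print(shlex.join(capped_crf_args("input.mp4", "output_1080p.mp4")))
```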

Second, you can use an encoding service that offers per-title. AWS, Beamr, Bitmovin, Brightcove, Tencent, and many others all offer per-title encoding. Upload your file, and you can download a ladder customized for the file. Most encoding vendors, including Capella Systems and Harmonic, offer it as well.

Or you can create your own per-title, which is what I did for the course. For the analysis in this article and the course, I needed a method that was transparent, repeatable, and codec-agnostic.

As you’ll see in future articles, to understand the bandwidth benefits that advanced codecs deliver, you must create equivalent ladders with each codec. So, if you’re starting with H.264, you need to know the equivalent HEVC or AV1 ladder to compute bandwidth savings and QoE improvements.

To get there, I created two Python apps called Phase 1 and Phase 2. Phase 1 uses a target VMAF value, like 93 or 95, to identify the bitrate required for the top rung of your encoding ladder. You set the VMAF value and identify the file and the codec, and Phase 1 triangulates until it meets the target VMAF. It’s a brute-force technique that’s less efficient than AI-based approaches, but it works.
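The article doesn’t spell out how Phase 1 triangulates, so here’s a sketch of one common approach, a bisection search on bitrate, assuming VMAF rises monotonically with bitrate. This is not the Phase 1 code. `score_at` is a hypothetical callback that would encode the file at a given bitrate and return its VMAF score; the search bounds, tolerance, and seven-encode budget are assumptions.

```python
from typing import Callable, Tuple


def find_top_rung_bitrate(
    score_at: Callable[[int], float],  # hypothetical: encode at kbps, return VMAF
    target_vmaf: float,
    lo_kbps: int = 500,
    hi_kbps: int = 20000,
    tolerance: float = 0.5,
    max_encodes: int = 7,
) -> Tuple[int, float, int]:
    """Bisect on bitrate until VMAF lands within `tolerance` of `target_vmaf`.

    Returns the last probe as (kbps, vmaf, encodes_used). If the encode
    budget runs out first, that probe may still miss the tolerance window.
    """
    kbps, vmaf, encodes = hi_kbps, float("nan"), 0
    while encodes < max_encodes:
        encodes += 1
        kbps = (lo_kbps + hi_kbps) // 2
        vmaf = score_at(kbps)
        if abs(vmaf - target_vmaf) <= tolerance:
            break
        if vmaf < target_vmaf:
            lo_kbps = kbps  # quality too low: search higher bitrates
        else:
            hi_kbps = kbps  # quality above target: search lower bitrates
    return kbps, vmaf, encodes
```

In practice, `score_at` would wrap an FFmpeg encode plus a VMAF measurement; keeping it as a parameter lets you test the search logic against a synthetic quality model before spending encode time.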

Phase 2 uses a technique debuted by Tencent to create the encoding ladder. The top rung, of course, is produced by Phase 1. For rung 2, Phase 2 encodes at the top-rung resolution (say, 1080p) and the next-lowest resolution (say, 900p). Then it measures VMAF for both and chooses the encode with the higher VMAF score. Rungs 3 through n start at the resolution of the previous rung and repeat the process. You see the resolutions in Figure 2, along with the rung multiplier (.6), which controls the bitrate step between rungs, the minimum bitrate target, and the scaling method.

This is a convex hull “lite” approach. Instead of testing every resolution at every bitrate, as Netflix’s original technique did, it tests the rungs most likely to deliver the best quality. This approach produces consistent ladders while still exposing how different content responds to bitrate and resolution trade-offs, which becomes important when aggregating results across genres.
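Here’s a minimal Python sketch of the rung-selection loop described above: each new rung lowers the bitrate by the rung multiplier, tries the previous rung’s resolution and the next-lowest one, and keeps whichever scores higher. It is an illustration, not the Phase 2 code. `score_at` is a hypothetical encode-and-measure callback, and treating the multiplier as a straight bitrate ratio (next rung = previous rung × 0.6) and stopping below the minimum bitrate are my assumptions about how those settings interact.

```python
from typing import Callable, List, Tuple

Rung = Tuple[int, int, float]  # (height, kbps, vmaf)


def build_ladder(
    score_at: Callable[[int, int], float],  # hypothetical: encode at (height, kbps), return VMAF
    heights: List[int],                     # candidate resolutions, tallest first
    top_kbps: int,                          # top-rung bitrate found by Phase 1
    top_vmaf: float,                        # top-rung VMAF from Phase 1 (no re-encode)
    multiplier: float = 0.6,                # assumed: next kbps = previous kbps * multiplier
    min_kbps: int = 150,                    # stop once the next rung would fall below this
) -> List[Rung]:
    ladder: List[Rung] = [(heights[0], top_kbps, top_vmaf)]
    idx, kbps = 0, top_kbps
    while True:
        kbps = round(kbps * multiplier)
        if kbps < min_kbps:
            break
        # Candidates: the previous rung's resolution and the next-lowest one, if any.
        candidates = [idx] if idx + 1 == len(heights) else [idx, idx + 1]
        # Up to two encodes per rung; keep the higher VMAF (ties keep the higher resolution).
        scores = {i: score_at(heights[i], kbps) for i in candidates}
        idx = max(scores, key=scores.get)
        ladder.append((heights[idx], kbps, scores[idx]))
    return ladder
```

Because the resolution index never moves up, each rung costs at most two encodes, which is where the “lite” savings over a full resolution-by-bitrate search come from.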

Identifying the benefits of per-title and per-genre

I used Phases 1 and 2 to create per-title encoding ladders for 18 test files across five genres: sports, anime, news, music, and movies. I needed a fixed-bitrate ladder for comparison, so I used Netflix’s pre-per-title ladder from this article, excluding the three rungs shown below. While Netflix no longer uses this ladder, it remains a useful reference point for evaluating the cost of static assumptions.

I encoded the files via the fixed ladder using the same parameters shown in the command string below. Then, using another applet I wrote called Phase Analyzer, I input the results into Excel and analyzed them overall and by genre. For each file and genre, this produced the results shown in Figure 4.

Using the average values from the genre results shown on the left of Figure 4 for anime, I created genre-based ladders and re-encoded each file a third time. Note that the genres had between two and five files each, which likely affected the results; any publisher creating a genre-based average ladder should include more files.
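The article doesn’t detail how the genre averages were computed, so the sketch below shows one plausible approach under my own assumptions: match rungs by position across a genre’s per-title ladders, keep only positions that every ladder has, average the bitrates, and use the most common resolution at each position. `genre_ladder` is a hypothetical helper, not the Phase Analyzer logic.

```python
from collections import Counter
from statistics import mean
from typing import List, Tuple

Ladder = List[Tuple[int, int]]  # [(height, kbps), ...], top rung first


def genre_ladder(per_title: List[Ladder]) -> Ladder:
    """Average a genre's per-title ladders rung by rung (assumed method)."""
    depth = min(len(ladder) for ladder in per_title)  # rung positions shared by all ladders
    averaged: Ladder = []
    for pos in range(depth):
        heights = [ladder[pos][0] for ladder in per_title]
        rates = [ladder[pos][1] for ladder in per_title]
        height = Counter(heights).most_common(1)[0][0]  # most frequent resolution
        averaged.append((height, round(mean(rates))))
    return averaged
```

Matching by position is the simplest choice; with only two to five files per genre, as in this test, any averaging rule is sensitive to a single outlier title.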

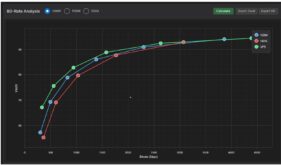

Figure 5 shows the overall results comparing per-title, fixed-ladder, and genre-based encoding, with BD-Rate results on the right. Overall, the per-title encode was about 35.34% more efficient than the fixed ladder and 2.3% more efficient than the genre-based approach. The genre-based encoding was 23.73% more efficient than the fixed ladder.

For those interested in such things, the aggregate BD-Rate table was computed by averaging the BD-Rate tables across all files, rather than by averaging the ladder data and computing BD-Rate from the average. I’m deliberately not showing aggregated RD curves since, particularly in this case, where there’s a significant disparity in rung count between the different techniques, aggregated RD curves distort the data.
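For readers who want to reproduce this kind of aggregation, here’s a sketch of the classic Bjøntegaard delta-rate calculation with VMAF as the quality metric: fit log bitrate as a cubic polynomial of VMAF for each curve, integrate both fits over the overlapping VMAF range, and convert the average log difference to a percentage. A second helper averages per-file BD-Rates as described above. This is the textbook cubic-fit variant, not necessarily what Phase Analyzer or the Excel workbook uses, and the function names are hypothetical. Each curve needs at least four points for the cubic fit.

```python
from typing import Sequence, Tuple

import numpy as np


def bd_rate(rates_a: Sequence[float], vmaf_a: Sequence[float],
            rates_b: Sequence[float], vmaf_b: Sequence[float]) -> float:
    """BD-Rate of curve B vs. reference A, in percent.

    Negative values mean B needs less bitrate than A for the same VMAF.
    """
    poly_a = np.polyfit(vmaf_a, np.log(rates_a), 3)  # log bitrate as cubic in VMAF
    poly_b = np.polyfit(vmaf_b, np.log(rates_b), 3)
    lo = max(min(vmaf_a), min(vmaf_b))               # overlapping quality range
    hi = min(max(vmaf_a), max(vmaf_b))
    int_a, int_b = np.polyint(poly_a), np.polyint(poly_b)
    avg_a = (np.polyval(int_a, hi) - np.polyval(int_a, lo)) / (hi - lo)
    avg_b = (np.polyval(int_b, hi) - np.polyval(int_b, lo)) / (hi - lo)
    return float((np.exp(avg_b - avg_a) - 1) * 100)


def aggregate_bd_rate(per_file: Sequence[Tuple]) -> float:
    """Average per-file BD-Rates instead of computing BD-Rate from averaged curves."""
    return float(np.mean([bd_rate(*curves) for curves in per_file]))
```

Averaging per-file results keeps each title’s own quality overlap, which matters when the ladders being compared have very different rung counts.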

Figure 6 presents RD curves and BD-Rate data for a 60p soccer clip, which is representative of most comparisons. You can see that Netflix’s top-quality target was likely in the 95 VMAF range, rather than the 93 I targeted, though both per-title and per-genre results are clearly more efficient than the fixed ladder.

As mentioned, with the Phase 1 tool, you can set the VMAF target appropriate for your service and, of course, encode using your current fixed ladder for comparison. That way, you can roughly isolate how much efficiency per-title and per-genre encoding deliver for your content.

I’m certain that genre-based ladders will fall further behind per-title ladders as the number of titles in each genre increases. I’ll have updated results in the course when it ships in late February.

Not to belabor the point, but Phases 1 and 2, and all associated tools, work with x264, x265, VP9, and SVT-AV1 via FFmpeg, so you’ll be able to identify the optimal per-title and per-genre encoding ladders for any advanced codec, enabling a fully apples-to-apples comparison.

Costs of Per-Title vs. Per-Genre

Between a single global ladder and fully per-title ladders sits a practical middle ground: genre-based ladders that trade some optimality for operational simplicity.

To implement per-title using the techniques shown above, you must encode each file about 15-20 times. This includes 4-7 attempts to find the target VMAF score in Phase 1, plus roughly two encodes for each ladder rung below the top rung. This sounds like a lot, but it’s a fraction of the encodes Netflix ran when it started deploying per-title back in 2016. That exhaustive approach obviously makes sense at Netflix’s view counts, though perhaps not for your service.

On the other hand, to implement per-genre encoding, you’ll have to run 10-20 files in each genre through this analysis to find a good average ladder. Once you have your genre-specific ladder, your encoding costs won’t change beyond the administrative cost of implementing and monitoring multiple encoding ladders. While this two-pass technique is obviously designed for VOD, the analysis should apply to live as well.
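To make the trade-off concrete, here’s a back-of-the-envelope Python sketch that counts encodes only. It applies the rule of thumb above (Phase 1 attempts plus about two encodes per rung below the top) and assumes that, after the one-time genre analysis, each new title is encoded once per rung. The helper names, rung counts, and file counts are hypothetical; real costs also depend on encode time per resolution, two-pass overhead, and VMAF measurement.

```python
def per_title_encodes(phase1_attempts: int, rungs: int) -> int:
    """Encodes per file: Phase 1 search plus two candidates per rung below the top."""
    return phase1_attempts + 2 * (rungs - 1)


def genre_break_even(files_analyzed: int, phase1_attempts: int, rungs: int) -> int:
    """Smallest number of new titles for which a genre ladder needs fewer total encodes.

    Genre setup: run the per-title analysis once on `files_analyzed` files.
    Afterward, each new title is encoded once per rung (an assumption).
    """
    per_title = per_title_encodes(phase1_attempts, rungs)
    setup = files_analyzed * per_title
    saved_per_title = per_title - rungs
    return setup // saved_per_title + 1


if __name__ == "__main__":
    print(per_title_encodes(5, 7))      # 17
    print(genre_break_even(15, 5, 7))   # 26
```

With these illustrative numbers, analyzing 15 files costs 255 encodes, and each subsequent title saves 10, so the genre ladder pulls ahead after 26 new titles.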

Summing Up

All that said, I present the above analysis here and in the course more to promote action than to advocate this specific approach. If you’re considering HEVC, AV1, VP9, or VVC and haven’t fully optimized H.264 with per-title or per-genre tools and other efficiency techniques, you’re working in reverse order. Any anticipated benefits that you calculate will almost certainly be overstated.

The course Beyond H.264 gives you a structured methodology, Python tooling examples, and workbook-style models to 1) maximize your encoding efficiency for all codecs, 2) quantify bandwidth and QoE gains from advanced codecs, and 3) factor in royalty and infrastructure costs before you commit. If you want to walk into your next codec discussion with hard numbers instead of anecdotes, that’s exactly what the course is designed to deliver.

What This Article Does Not Prove

I’m adding this section to narrow any unnecessary debate regarding this article.

First, this analysis is deliberately narrow. It does not prove that per-title encoding is always the right operational choice. Per-title minimizes bitrate for a given asset and quality target, but it also increases encoding complexity and ABR-ladder variability. Whether that tradeoff is acceptable depends on your scale, content mix, and operational maturity, not just compression efficiency.

It also does not prove that genre-based ladders will consistently rival per-title results. In this dataset, genre ladders recovered a substantial portion of per-title savings, but that outcome depends on how coherent the genre is and how well the ladder reflects its typical characteristics. Different genres, different libraries, or different quality targets could produce different results.

This analysis does not address playback performance or business outcomes. All comparisons here are codec-side measurements at equal quality, not end-to-end QoE or revenue impact.

Finally, it does not prove that deploying only H.264 is appropriate for all organizations, nor that deploying advanced codecs is inadvisable. The point is to show that codec comparisons are meaningless unless the baseline they are measured against is credible.

What this analysis does show is that if you don’t understand how much efficiency is left on the table in your existing H.264 workflow, any claimed savings from a new codec are, at best, uncalibrated.

Appendix I: Command String

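Here’s the promised command string for rung 2. The first command runs the analysis pass and discards its output to nul, the Windows null device (substitute /dev/null on Linux or macOS); the second produces the final encode. Note that I tuned for PSNR and set aq-mode to 0 to disable psychovisual optimizations that would reduce quality metric scores. I used similar default settings for all codecs, though all can be customized via the command line for each tool.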

```
ffmpeg -i football60_short.mp4 -an -c:v libx264 -preset veryslow -tune psnr -aq-mode 0 -b:v 2070k -maxrate 4140k -bufsize 4140k -g 120 -keyint_min 120 -sc_threshold 0 -threads auto -vf scale=1920:1080:flags=lanczos -pass 1 -f mp4 nul

ffmpeg -y -i football60_short.mp4 -an -c:v libx264 -preset veryslow -tune psnr -aq-mode 0 -b:v 2070k -maxrate 4140k -bufsize 4140k -g 120 -keyint_min 120 -sc_threshold 0 -threads auto -vf scale=1920:1080:flags=lanczos -pass 2 football60_short_r02_same_1920x1080_2070k.mp4
```
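If you script these encodes, the same two-pass pair can be generated for every rung. The Python sketch below is my own illustration, not code from the Phase tools. `two_pass_commands` is a hypothetical helper that rebuilds the flags above for any rung; the 2x relationship between `-b:v` and `-maxrate`/`-bufsize` is inferred from the rung 2 values (2070k and 4140k). It swaps in FFmpeg’s null muxer (`-f null`) for the discarded first pass, uses `os.devnull` so that pass works on Windows (nul) and Linux/macOS (/dev/null) alike, and adds `-passlogfile` so rungs encoded in parallel don’t overwrite each other’s first-pass statistics.

```python
import os
from typing import List, Tuple


def two_pass_commands(src: str, kbps: int, width: int, height: int, out: str,
                      gop: int = 120) -> Tuple[List[str], List[str]]:
    """Return (pass1, pass2) FFmpeg argument lists mirroring the appendix flags."""
    common = [
        "-c:v", "libx264", "-preset", "veryslow", "-tune", "psnr", "-aq-mode", "0",
        "-b:v", f"{kbps}k",
        "-maxrate", f"{2 * kbps}k", "-bufsize", f"{2 * kbps}k",  # 2x target, as in rung 2
        "-g", str(gop), "-keyint_min", str(gop), "-sc_threshold", "0",  # fixed GOP, no scene-cut I-frames
        "-threads", "auto",
        "-vf", f"scale={width}:{height}:flags=lanczos",
        "-passlogfile", os.path.splitext(out)[0],  # per-rung first-pass stats prefix
    ]
    pass1 = ["ffmpeg", "-y", "-i", src, "-an", *common, "-pass", "1", "-f", "null", os.devnull]
    pass2 = ["ffmpeg", "-y", "-i", src, "-an", *common, "-pass", "2", out]
    return pass1, pass2
```

Run each list with `subprocess.run(cmd, check=True)`, pass 1 first, so a failed analysis pass stops the script before the final encode.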

Streaming Learning Center Where Streaming Professionals Learn to Excel
