I recently consulted with a company that was running capped CRF-style encoding with four encoders simultaneously: x264, VP9, NVIDIA H.264, and NVIDIA AV1. The first two handled VOD encoding, the last two live.

I was genuinely impressed by the sophistication and practicality of this video engineering setup, so I asked the client if I could share what they were doing. The client graciously agreed, but only on the condition that they remain anonymous. So, here goes.

The capped‑CRF and QMAX strategies you’ll see here were all in production before I arrived; my role was to measure how well they worked and help tune them to a consistent VMAF target across genres. You’ll see why in the discussions below.

By way of background, capped CRF is sometimes dismissed as the poor man’s per-title encoding. Single pass, no complexity analysis, no shot detection, no AI, no machine learning. Just set a quality target, cap the bitrate, and let the encoder find its equilibrium.

But the genre data tells a more complicated story. The same QMAX that delivers pristine anime at 985 kbps will leave your sports content looking like it was streamed through a screen door. Sports at QMAX 30 demands nearly 5,000 kbps to hit a 95.60 VMAF. General content at the same QMAX needs 1,014 kbps to reach 89.57. Same parameter, completely different outcome.

That gap is why genre-specific tuning matters and why using a single parameter set across all content is a false economy.

This article walks through the implementation for all four encoders, including command strings and genre tables. Think of it as a field guide to making capped CRF work in a multi-codec world.

H.264 – Capped CRF for VOD

The basic capped CRF command used by the client was:

-crf 27 -minrate 1500k -maxrate 6000k -bufsize 3000k

This combination delivered an average VMAF score of 90, which felt a bit low for a premium content distributor, so I set a target VMAF of 93 for all codecs and encoders.
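If you script your encodes, here’s a minimal Python sketch that wraps the client’s rate-control flags in a complete FFmpeg command. The input and output names, the explicit `-c:v libx264`, and the function name `x264_capped_crf_args` are assumptions for illustration; only the four rate-control flags come from the client’s string.

```python
import shlex


def x264_capped_crf_args(src: str, dst: str, crf: int = 27,
                         minrate: str = "1500k", maxrate: str = "6000k",
                         bufsize: str = "3000k") -> list[str]:
    """Return an FFmpeg argv for single-pass capped CRF with libx264 (sketch)."""
    return [
        "ffmpeg", "-y", "-i", src,
        "-c:v", "libx264",     # assumed; the client string omits the encoder
        "-crf", str(crf),      # quality target
        "-minrate", minrate,   # copied from the client's string
        "-maxrate", maxrate,   # bitrate cap (VBV max rate)
        "-bufsize", bufsize,   # VBV buffer size
        dst,
    ]


if __name__ == "__main__":
    # Print a shell-safe command line; pass the list to subprocess.run() to encode.
    print(shlex.join(x264_capped_crf_args("input.mp4", "out_crf27.mp4")))
```

Passing a list rather than a single string to `subprocess.run()` avoids shell-quoting problems when file names contain spaces.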

Testing across multiple CRF values showed that CRF 24 delivered an average VMAF of 92.81 but required about 40% more bitrate. It would also raise the low-frame score, that is, the VMAF score of the lowest-quality frame in the file, by an average of 6.05 points. As you may know, low-frame scores can indicate transient quality issues that reduce QoE.
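Both the average and the low-frame score come from the same per-frame VMAF values. Here’s a short Python sketch that computes them from a VMAF JSON log, such as the one FFmpeg’s libvmaf filter writes with `log_fmt=json`. It assumes the libvmaf 2.x layout (a `frames` list whose entries hold a `metrics` dict with a `vmaf` key), so check your own log before relying on it; the function names are hypothetical.

```python
import json
from statistics import mean


def vmaf_mean_and_low(log: dict) -> tuple[float, float]:
    """Return (mean VMAF, low-frame VMAF) from a parsed libvmaf JSON log."""
    scores = [frame["metrics"]["vmaf"] for frame in log["frames"]]
    return mean(scores), min(scores)


def load_vmaf_log(path: str) -> dict:
    """Parse a libvmaf JSON log file from disk."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)
```

Typical usage would be `avg, low = vmaf_mean_and_low(load_vmaf_log("vmaf.json"))`, run once per test encode.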

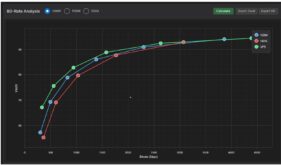

As you can see in Figure 1, the capped CRF was doing its job, adjusting bitrates significantly between the different content genres while maintaining relatively stable quality levels. As Figure 1 also shows, customizing the CRF value by content genre might provide some valuable fine-tuning, enabling more precision in achieving the target quality level at the most efficient bitrate. I’ll discuss my recommendations in this regard at the end of the article.
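Once you have a sweep per genre, picking the value is mechanical: keep the settings that hit the target and take the cheapest. Here’s a minimal Python sketch of that rule; `SweepPoint` and `pick_setting` are hypothetical names, and the sketch assumes you’ve measured average VMAF and bitrate for each setting. Because it only compares scores and bitrates, the same function works for CRF, QMAX, or NVENC CQ sweeps.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SweepPoint:
    setting: int   # CRF, QMAX, or CQ value used for the test encodes
    vmaf: float    # average VMAF across the genre's test files
    kbps: float    # average bitrate across the same files


def pick_setting(points: list[SweepPoint], target: float = 93.0,
                 tolerance: float = 0.0) -> Optional[SweepPoint]:
    """Return the lowest-bitrate point within tolerance of the target, or None."""
    passing = [p for p in points if p.vmaf >= target - tolerance]
    return min(passing, key=lambda p: p.kbps) if passing else None
```

Run it once per genre, for example `{genre: pick_setting(pts) for genre, pts in sweeps.items()}`. A `None` means the sweep needs to extend toward lower (higher-quality) values; the `tolerance` parameter lets you accept a result like 92.81 as close enough to 93.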

Reviewing the sports genre, however, revealed unusually poor low-frame scores. Checking the sports clip in Bitrate Viewer showed why: the 6 Mbps cap was imposing a hard limit over most of the file. As with CBR encoding, a hard bitrate limit starves the hardest-to-encode frames, which drags down low-frame scores.
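If you want to flag this condition automatically rather than eyeballing a bitrate graph, one approach is to bin packet sizes into one-second windows and count how many sit at the cap. This Python sketch assumes you’ve already extracted `(pts_time, size)` pairs, for example with `ffprobe -select_streams v:0 -show_entries packet=pts_time,size -of csv=p=0`; the 95% threshold and the function names are arbitrary choices for illustration.

```python
from collections import defaultdict


def per_second_kbps(packets: list[tuple[float, int]]) -> list[float]:
    """Sum packet sizes (bytes) into one-second bins and return kbps per bin."""
    bins: dict[int, int] = defaultdict(int)
    for pts_time, size_bytes in packets:
        bins[int(pts_time)] += size_bytes
    # Seconds with no packets are skipped rather than reported as zero.
    return [bins[s] * 8 / 1000 for s in sorted(bins)]


def cap_saturation(kbps: list[float], maxrate_kbps: float,
                   margin: float = 0.95) -> float:
    """Fraction of one-second windows at or above margin * maxrate."""
    if not kbps:
        return 0.0
    pinned = sum(1 for r in kbps if r >= margin * maxrate_kbps)
    return pinned / len(kbps)
```

A value near 1.0 means the cap, not the CRF value, is controlling the encode. One-second windows are coarse, so treat this as a screening check rather than a VBV compliance test.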

I then encoded the sports content at the same CRF values with caps of 9 Mbps and 12 Mbps. As you can see in Figure 3, this reduced the average bitrate slightly, from 5,292 kbps for CRF 24 with the 6 Mbps cap (Figure 1) to 4,965 kbps for CRF 26 with the 12 Mbps cap (Figure 3). It also boosted the low-frame score from 63.81 to 75.21.

What about bitrate variability, which is always a concern when raising maxrate? The maximum rate increased from 6,211 kbps to 7,284 kbps, a 1.1 Mbps increase that isn’t particularly worrisome. However, because the clip achieved VMAF 93 at CRF 26, the average bitrate dropped from 5,542 kbps to 4,593 kbps, a reduction of almost 1 Mbps.
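Putting the changes on the same percentage scale makes the trade-off clearer. This snippet simply does the arithmetic on the figures quoted in the text: the peak rate grew by roughly 17%, while the average bitrate fell by roughly 17%.

```python
def change(before: float, after: float) -> tuple[float, float]:
    """Return (absolute change, percent change) going from before to after."""
    delta = after - before
    return delta, 100.0 * delta / before


if __name__ == "__main__":
    # Figures from the text: bitrates in kbps, low-frame score in VMAF points.
    for label, before, after in [
        ("peak rate (kbps)", 6211, 7284),
        ("average bitrate (kbps)", 5542, 4593),
        ("low-frame VMAF", 63.81, 75.21),
    ]:
        delta, pct = change(before, after)
        print(f"{label}: {delta:+.2f} ({pct:+.1f}%)")
```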

The lesson? When adjusting CRF values, it also pays to experiment with maxrate and, sometimes, the VBV buffer size (bufsize).
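A CRF-by-maxrate sweep is easy to generate. This Python sketch builds one libx264 command per combination, defaulting to CRF 24 and 26 and the 6, 9, and 12 Mbps caps discussed above. Holding bufsize at half of maxrate (the ratio in the client’s 6000k/3000k string) and the output naming scheme are assumptions; the article doesn’t say how bufsize was set for the 9 and 12 Mbps tests.

```python
import itertools
import shlex


def sweep_commands(src: str, crfs=(24, 26), maxrates_k=(6000, 9000, 12000),
                   minrate: str = "1500k", bufsize_ratio: float = 0.5):
    """Yield (crf, maxrate_k, argv) for every CRF x maxrate combination."""
    for crf, maxrate_k in itertools.product(crfs, maxrates_k):
        bufsize_k = int(maxrate_k * bufsize_ratio)  # assumed: keep the 2:1 ratio
        argv = [
            "ffmpeg", "-y", "-i", src, "-c:v", "libx264",
            "-crf", str(crf), "-minrate", minrate,
            "-maxrate", f"{maxrate_k}k", "-bufsize", f"{bufsize_k}k",
            f"out_crf{crf}_max{maxrate_k}k.mp4",    # hypothetical naming scheme
        ]
        yield crf, maxrate_k, argv


if __name__ == "__main__":
    for _crf, _maxrate_k, argv in sweep_commands("sports.mp4"):
        print(shlex.join(argv))
```

Each output then goes through the same VMAF and bitrate measurement before you compare the grid.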

For more on capped CRF settings for H.264, check out my article Choosing the Optimal CRF Value for Capped CRF Encoding, one of the most frequently viewed articles on the Streaming Learning Center.

VP9 and QMAX

With VP9, the client surprised me with a technique I had never seen before. Rather than using capped CRF, which is a legitimate mode with VP9, the client set the target bitrate extraordinarily low and set the quality floor with the QMAX control, as shown below. Note that this was a two-pass implementation, while the x264 encodes discussed above and the two live examples discussed below were all single-pass.

-b:v 1800 -minrate 900 -maxrate 2610 -qmax 30

In theory, it’s a kind of reverse capped CRF. With capped CRF, you tell FFmpeg, “encode at this quality level (the CRF value), but don’t exceed this bitrate (the cap).” With QMAX, you tell FFmpeg, “encode at this very low bitrate, but don’t let quality drop below this level (the QMAX value, which is the highest quantizer the encoder may use).”
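The asymmetry is easiest to see in a toy model. In this Python sketch, which is a conceptual illustration and not how libx264 or libvpx actually implement rate control, capped CRF clamps the bits a frame may spend, while QMAX clamps the quantizer. When a hard frame arrives, the first approach gives up quality to hold the cap; the second gives up bitrate to hold the quality floor.

```python
def capped_crf_bits(bits_for_crf: float, cap_bits: float) -> float:
    """Capped CRF: spend what the quality target needs, but never above the cap."""
    return min(bits_for_crf, cap_bits)


def qmax_quantizer(q_for_target_rate: float, qmax: float) -> float:
    """QMAX: rate control picks the quantizer that hits the (low) target rate,
    but may not exceed qmax. Lower quantizers mean higher quality, so qmax
    acts as a quality floor and the bitrate rises instead."""
    return min(q_for_target_rate, qmax)


if __name__ == "__main__":
    # Toy numbers for an easy frame and a hard frame.
    print(capped_crf_bits(40_000, 200_000), capped_crf_bits(350_000, 200_000))
    print(qmax_quantizer(24, 30), qmax_quantizer(48, 30))
```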

My first instinct was to try capped CRF. However, after hours of testing, I found that capped CRF delivered roughly the same quality as the QMAX-based approach, but often at bitrates up to about 50% higher. That was obviously a non-starter. So I pivoted back to QMAX and used VMAF 93 as the target to map out per-genre QMAX values, identifying where the client could safely drop bitrate by 20% or more without hurting quality and where it needed to spend more.

Figure 5 shows the QMAX values required to achieve VMAF 93 across content genres. Again, you can see the per-title impact of the QMAX-driven command string, which adjusts the bitrate significantly across content genres while maintaining relatively similar quality. At a high level, the command string clearly worked well.

Interestingly, with sports, one of the most costly and high-value content types, the client could achieve the target VMAF at QMAX 33 or 34 while reducing the bitrate by more than 21%. Achieving the same quality level as anime would require a QMAX of 25 and a bitrate boost of 32.4%. Again, I discuss my recommendations in this regard below.
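If you script the per-genre values, only the `-qmax` number changes between genres. Here’s a minimal Python sketch of the full two-pass command pair built around the client’s VP9 flags. The libvpx-vp9 encoder name, the null-muxer first pass, and the file names are assumptions; the rate-control values are passed exactly as written in the client’s string. Note that FFmpeg reads a bitrate without a suffix as bits per second, so if kbps was intended, those values need a `k` suffix (for example, `1800k`).

```python
import os
import shlex

# The first pass only writes rate-control stats, so its video output is discarded.
NULL_SINK = "NUL" if os.name == "nt" else "/dev/null"


def vp9_qmax_two_pass(src: str, dst: str, b: str = "1800", minrate: str = "900",
                      maxrate: str = "2610", qmax: int = 30) -> tuple[list[str], list[str]]:
    """Return (pass1, pass2) FFmpeg argvs for two-pass VP9 with a QMAX ceiling."""
    common = [
        "-c:v", "libvpx-vp9",
        "-b:v", b, "-minrate", minrate, "-maxrate", maxrate,  # as in the client string
        "-qmax", str(qmax),  # highest quantizer allowed, i.e., the quality floor
    ]
    pass1 = ["ffmpeg", "-y", "-i", src, *common, "-pass", "1", "-an", "-f", "null", NULL_SINK]
    pass2 = ["ffmpeg", "-y", "-i", src, *common, "-pass", "2", dst]
    return pass1, pass2


if __name__ == "__main__":
    for argv in vp9_qmax_two_pass("input.mp4", "out_qmax30.webm"):
        print(shlex.join(argv))
```

Run the two commands in order from the same directory so the second pass finds the log file the first pass wrote.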

Those were the two VOD examples, which I analyzed first. Then it was time to focus on the live applications.

Capped CRF for Live Video

I’ll start by saying that during my time at NETINT, which manufactures ASIC-based transcoders, the company had enabled capped CRF transcoding, which I documented here and here. But I had never worked with NVIDIA transcoders in this mode before.

For NVIDIA H.264 and AV1, the client’s capped CRF framework was already sound; my contribution was to test CQ values by genre so the top rung clustered around VMAF 93 instead of overshooting into unnecessary bitrate.

NVIDIA H.264

The client transcoded live streams using the NVIDIA L4 GPU. One of the great things about most hardware transcoders is that their command strings are similar for all supported codecs. That was true of NVIDIA’s capped CRF implementation, which uses the same structure for H.264, AV1, and HEVC (the client didn’t deploy HEVC). Here’s the basic structure.

-c:v h264_nvenc -cq 32 -rc:v vbr -maxrate 6000k -bufsize 6000k

As you can see in the command string, the client’s default CQ value was 32, which delivered an average top-rung VMAF score of 94.76, well above the target. Testing at different CQ values showed that CQ 34 would achieve the 93 target at a much lower bitrate across all genres except 50 fps sports footage.

NVIDIA AV1

The command string for the client’s AV1 transcoding was:

-c:v av1_nvenc -cq 43 -rc:v vbr -maxrate 6000k -bufsize 6000k

As you can see in Figure 7, CQ-based transcoding proved effective, with bitrates ranging from 1 Mbps for news and drama to 4.7 Mbps for 50 fps sports, all from the same command string.

However, the CQ value needed to hit the 93 target varied much more with AV1 than with H.264, ranging from 41 for high-energy entertainment and 50 fps sports to 45 for news and 25 fps sports, two genres you would never expect to see in the same category.
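In a channel-based deployment, findings like these boil down to a lookup table. This Python sketch encodes only the endpoint values reported above; the genre keys are hypothetical labels, and falling back to the client’s default CQ 43 for every other genre is an assumption, since the intermediate per-genre values aren’t listed here.

```python
AV1_DEFAULT_CQ = 43  # the client's default from the AV1 command string

# Endpoint values reported in the text; unlisted genres fall back to the default.
AV1_CQ_BY_GENRE = {
    "high-energy entertainment": 41,
    "sports 50 fps": 41,
    "news": 45,
    "sports 25 fps": 45,
}


def av1_cq_for(genre: str) -> int:
    """Return the CQ value for a genre label, or the default if it isn't listed."""
    return AV1_CQ_BY_GENRE.get(genre, AV1_DEFAULT_CQ)
```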

For the record, though the client didn’t deploy HEVC, the same command string structure worked for HEVC on the L4. Different CQ values, of course, but the same basic command string.
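Because the structure is shared, one small helper can generate the option string for any of NVIDIA’s three encoders. This Python sketch reproduces the client’s flags; the function and dictionary names are hypothetical, and only `h264_nvenc` and `av1_nvenc` were deployed by the client.

```python
import shlex

# FFmpeg encoder names for NVIDIA's hardware encoders.
NVENC_ENCODERS = {"h264": "h264_nvenc", "hevc": "hevc_nvenc", "av1": "av1_nvenc"}


def nvenc_capped_cq(codec: str, cq: int, maxrate: str = "6000k",
                    bufsize: str = "6000k") -> list[str]:
    """Return the capped-CQ video options used in the client's strings."""
    return [
        "-c:v", NVENC_ENCODERS[codec],
        "-cq", str(cq),        # constant-quality target
        "-rc:v", "vbr",        # VBR rate control, the mode the CQ target works in
        "-maxrate", maxrate,   # the cap
        "-bufsize", bufsize,
    ]


if __name__ == "__main__":
    print(shlex.join(nvenc_capped_cq("h264", 32)))
    print(shlex.join(nvenc_capped_cq("av1", 43)))
```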

Putting Per-Genre Encoding into Action

So let’s discuss what to do about these genre-based findings, which have both theoretical and practical implications.

From a theoretical perspective, the question is how many files you need to test to determine whether a genre is different enough to be treated separately. There is no magic number, but I recommend 10 to 20 test files to demonstrate that the genre is different enough to warrant separate configurations.
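The article doesn’t prescribe a statistical test, but one simple way to check whether a genre really differs is to compare the per-file best settings (for example, the CRF or CQ that hit VMAF 93 on each file) against those of your other content. This Python sketch computes Welch’s t statistic, which doesn’t assume equal variances. As a rough rule of thumb with 10 to 20 files per group, an absolute value above about 2 suggests the difference isn’t noise; the function name and that threshold are illustrative choices, not part of the original analysis.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a: list[float], b: list[float]) -> float:
    """Welch's t statistic for the difference in means of two samples.

    Each sample needs at least two values, and at least one sample must
    have non-zero variance.
    """
    se = sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (mean(a) - mean(b)) / se
```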

From a practical perspective, how easy is it to deploy different values for different content? If you’re a FAST service with news, sports, entertainment, and similar channels, it’s pretty simple. If you’re a traditional broadcaster, with news at 6:00, variety shows from 7 – 8, and then action series from 9 – 11, not so much.
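For the broadcaster case, the switching logic itself is simple; the operational work is getting new settings into the encoder at program boundaries. This Python sketch maps a time of day to a genre using the example schedule. Reading the times as evening hours, giving the news a one-hour slot, and using a `general` fallback for unscheduled time are all assumptions for illustration.

```python
from datetime import time

# Hypothetical evening schedule based on the example: (start, end, genre).
SCHEDULE = [
    (time(18, 0), time(19, 0), "news"),     # one-hour news slot assumed
    (time(19, 0), time(20, 0), "variety"),
    (time(21, 0), time(23, 0), "action"),
]


def genre_at(t: time, default: str = "general") -> str:
    """Return the scheduled genre at time t (end times are exclusive)."""
    for start, end, genre in SCHEDULE:
        if start <= t < end:
            return genre
    return default
```

The returned genre would then select that genre’s encoder settings, such as its CQ value, for the next program.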

The broader finding is worth stating plainly. If you’re running multi-codec delivery and haven’t done genre-based tuning, you’re likely leaving both quality and bandwidth savings on the table. The genre gaps documented here, such as sports versus news and anime versus general entertainment, are real and measurable, and the right parameter values aren’t obvious without systematic testing. That’s where engagements like this one typically start. If you’d like to explore what that looks like for your stack, reach out.

Streaming Learning Center Where Streaming Professionals Learn to Excel
