On July 3, I spoke with Hari Kalva and Velibor Adzic from Florida Atlantic University about Feature Coding for Machines (FCM), a new MPEG standard being developed for machine-to-machine video applications. You can watch the full interview on YouTube and it’s embedded below.

This blog presents that conversation in a lecture-style format. It follows the slides shown during the session and paraphrases the key insights shared by the speakers. Sit back, learn, and enjoy.

Meet the Speakers

First, let’s meet the speakers. Hari Kalva is a professor and department chair in Electrical Engineering and Computer Science at Florida Atlantic University. He’s worked in video compression since the days of MPEG-2 and helped develop technologies in MPEG-4, MPEG-7, and the MP4 file format. He now collaborates with OP Solutions on FCM and VVC-related projects. Velibor Adzic is an assistant professor of teaching at FAU and has been active in video and image compression for more than a decade, including previous research with the Multimedia Lab at FAU.

Key Terms and Acronyms

Now that we’ve introduced the speakers, let’s start with the key terms. This first slide outlines some foundational concepts that will recur throughout the discussion.

As Velibor explained, M2M video refers to machine-to-machine video — any system where video is captured for AI analysis rather than human viewing. Common applications include autonomous vehicles, drones, industrial robotics, smart cities, and telehealth. In these scenarios, the video may never be seen by a person; it’s intended for real-time algorithmic decisions.

He also clarified the meaning of features. In the context of neural networks, features are the internal data representations generated by the network’s layers — a compressed, task-specific view of the input. Rather than transmitting raw video, systems can transmit just these features, dramatically reducing data volume while preserving the information the machine actually needs.

Inference is the final step: the point at which the AI system uses those features to make a decision or classification. Once trained, the model runs inference on new inputs to complete a task, whether that’s detecting a pedestrian, identifying an object, or triggering a response.

The next slide expands the acronym list and introduces two specific MPEG initiatives.

As Velibor explained, MPEG-AI is the standards framework within MPEG for video applications that involve artificial intelligence. Within that framework, there are two separate codec efforts: VCM and FCM.

VCM stands for Video Coding for Machines. It’s a traditional video codec that takes video in and outputs video, but it’s optimized for machine analysis instead of human viewing. The output is still a playable video stream but structured to support AI tasks.

FCM, or Feature Coding for Machines, skips the pixels entirely. It works with feature tensors—internal outputs from a neural network—and compresses those instead of raw frames. This approach dramatically reduces bandwidth and processing requirements, especially in systems where no human ever needs to see the video.

Why Do We Need M2M Video Codecs?

With the basics out of the way, the next slide tackles the real question: why do we even need a new kind of codec for machine-to-machine video?

M2M Use Cases

As Hari explained, traditional video codecs were built for human eyes. They preserve pixels, detail, and resolution because that’s what people care about. But machines don’t. A machine only needs the data required to perform a specific task, whether that’s object detection, classification, or tracking. That disconnect opens the door for a very different kind of efficiency.

By extracting and encoding just the relevant features, FCM dramatically reduces bandwidth usage and power consumption. It also enables compute flexibility. Instead of doing everything on one device or pushing all the work to the cloud, the system can distribute the workload across cameras, edge processors, or central servers. The architecture can be tuned to match the deployment.

Privacy is another built-in advantage. In many public or regulated environments, the ability to transmit only abstracted features rather than recognizable video helps address concerns about surveillance and personal identification.

While security isn’t a codec feature by itself, removing raw visual data from the pipeline reduces exposure and limits how the content could be misused.

Now that we’ve covered the core reasons behind M2M codecs, the next slide introduces a practical use case.

Hari described how a swarm of drones could be deployed for a mission like search and rescue. In traditional architectures, each drone would either need to process video locally, which adds weight and power requirements, or stream raw video back to a central server, which quickly overwhelms available bandwidth.

With FCM, each drone can extract just the relevant features from the video and transmit only that condensed data. The result is a system that uses less power, consumes far less bandwidth, and still delivers what’s needed to make real-time decisions.

This architecture also opens the door to collaborative compute models. Drones can share partial results with each other or with a remote server, distributing the processing load instead of concentrating it in one place.

Security remains a concern in these scenarios, but by sending features instead of raw frames, the risk of exposing sensitive visual data is reduced.

M2M in Autonomous Vehicles

The next use case focuses on autonomous vehicles, where the scale of video data becomes even more extreme.

Hari pointed out that a typical connected car already generates around 25 gigabytes of data per hour, most of it video. Autonomous vehicles take that much further. With multiple onboard cameras, they can generate up to 4 terabytes of data per day. Some estimates put real-time data generation at over 3,000 megabits per second. That data has to be processed locally, remotely, or both, with no tolerance for latency.
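To put those figures on a common scale, here is a rough back-of-the-envelope conversion into sustained bitrates. It assumes decimal units (1 GB = 10^9 bytes, 1 TB = 10^12 bytes), and the helper name `to_mbps` is mine, not something from the talk.

```python
# Convert the data volumes quoted above into average bitrates.
# Assumption: decimal units (1 GB = 10**9 bytes, 1 TB = 10**12 bytes).

def to_mbps(num_bytes: float, seconds: float) -> float:
    """Average bitrate in megabits per second for num_bytes sent over `seconds`."""
    return num_bytes * 8 / seconds / 1e6

# 25 GB per hour for a connected car
connected_car_mbps = to_mbps(25e9, 3600)

# 4 TB per day for an autonomous vehicle, averaged over a full 24 hours
autonomous_avg_mbps = to_mbps(4e12, 24 * 3600)

# Hours of operation at the quoted 3,000 Mbps rate needed to produce 4 TB
hours_at_peak = (4e12 * 8) / (3000 * 1e6) / 3600

print(f"Connected car: {connected_car_mbps:.1f} Mbps")
print(f"Autonomous vehicle, 24 h average: {autonomous_avg_mbps:.1f} Mbps")
print(f"Hours at 3,000 Mbps to reach 4 TB: {hours_at_peak:.2f}")
```

Under these assumptions, the daily total and the 3,000 Mbps figure are consistent with roughly three hours of driving per day at that rate, rather than a constant 24-hour stream.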

This isn’t just about navigation. Autonomous systems often support multiple streams of communication: vehicle-to-vehicle, vehicle-to-infrastructure, and even vehicle-to-grid. Managing all of that traffic at scale means bandwidth matters, compute efficiency matters, and minimizing delay is critical.

Feature-based compression provides a way to manage this load without compromising responsiveness. By transmitting only the data needed to support a specific inference task, vehicles can operate more efficiently and safely in environments that would overwhelm traditional streaming or centralized processing models.

Security is a concern here as well. Compressing and transmitting features rather than video helps reduce the visibility of sensitive raw footage, which is especially important in commercial fleet operations or public transit deployments.

M2M in Object Detection

The next slide explains how a neural network detects objects in a frame and why machines need something different from pixels.

Hari walked through this example of an airplane. On the left is a typical input frame — a regular image captured by the camera. But once it enters the convolutional neural network, the raw frame is transformed into multiple feature maps, shown at the top right. Each of these maps corresponds to one channel in the neural network and captures a specific pattern or region of interest.

The network doesn’t use all of them equally. Only a few maps contain the information relevant to detecting that the object is, in fact, an airplane. Below those maps, the “feature map activations” show which areas light up across different channels. These are the regions the machine focuses on to make a decision.

On the far right, the output detection confirms the result: the network identifies the airplane and highlights it in green.

What matters here is that only a subset of the feature maps are needed for the task. The rest can be dropped or heavily compressed without hurting accuracy. FCM takes advantage of that by transmitting only what’s useful, just like how perceptual video codecs throw away high-frequency data the eye won’t notice. Except in this case, it’s the neural network’s “perception” that drives what gets preserved.
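The idea of keeping only the useful feature maps can be sketched in a few lines of NumPy. This is an illustration only: ranking channels by mean absolute activation is a stand-in criterion I chose for the example, and `select_channels` is a hypothetical helper, not the selection method used in FCM.

```python
import numpy as np

def select_channels(features: np.ndarray, keep: int) -> np.ndarray:
    """Return the indices of the `keep` most strongly activated channels.

    `features` has shape (channels, height, width). Channels are ranked by
    mean absolute activation, which is an assumed criterion for this sketch.
    """
    energy = np.abs(features).mean(axis=(1, 2))
    strongest = np.argsort(energy)[::-1][:keep]
    return np.sort(strongest)

rng = np.random.default_rng(0)

# Eight synthetic feature maps with weak background activations
features = rng.normal(0.0, 0.01, size=(8, 16, 16))

# Make two channels respond strongly, as if they captured the object
features[2] += 1.0
features[5] -= 0.8

kept = select_channels(features, keep=2)
pruned = features[kept]  # only these maps would be passed on for coding

print("kept channels:", kept.tolist())
print("pruned tensor shape:", pruned.shape)
```

In this toy case, six of the eight maps carry almost no signal, so dropping them shrinks the tensor to a quarter of its size before any compression is applied.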

Feature Coding for Machines Compared to Traditional Codecs

This slide compares the traditional approach to inference with the FCM approach and shows where the key differences lie.

Velibor explained that in the traditional setup, shown in the top diagram, video is captured and encoded on the device, transmitted to the server, decoded, and then run through the entire neural network. This is called remote inference. All the compute happens on the server, and the full video needs to be sent over the network.

In the lower diagram, we see the split inference model enabled by FCM. Instead of sending video, the device runs the first part of the neural network locally, producing intermediate features. Those features are encoded by the FCM encoder and sent across the network. On the server side, the FCM decoder restores those features and passes them through the remaining layers of the neural network to complete the task.

This allows the system to offload part of the work to the device while transmitting only a fraction of the data. The end result is the same: an accurate detection or classification, but with lower bandwidth requirements and more flexibility in where computing happens.
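The split itself is easy to picture with a toy network. The sketch below uses a three-layer model with random weights as a stand-in for a real detector. The names `head`, `tail`, and `full_network` are mine, and the FCM encoder and decoder are left out: in a real pipeline they would sit between `head` and `tail`.

```python
import numpy as np

rng = np.random.default_rng(1)

# Toy network weights (random, purely illustrative)
W1 = rng.normal(size=(256, 32))  # first layer: runs on the device
W2 = rng.normal(size=(32, 16))   # remaining layers: run on the server
W3 = rng.normal(size=(16, 4))

def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)

def full_network(frame: np.ndarray) -> np.ndarray:
    """Remote inference: the whole network runs in one place."""
    return relu(relu(frame @ W1) @ W2) @ W3

def head(frame: np.ndarray) -> np.ndarray:
    """Device side: first part of the network, producing intermediate features."""
    return relu(frame @ W1)

def tail(features: np.ndarray) -> np.ndarray:
    """Server side: remaining layers, completing the task from the features."""
    return relu(features @ W2) @ W3

frame = rng.normal(size=256)          # stand-in for a flattened input frame

features = head(frame)                # computed on the device
server_output = tail(features)        # computed on the server
reference_output = full_network(frame)

print("values in frame:", frame.size)
print("values in features:", features.size)
print("same result:", np.allclose(server_output, reference_output))
```

Without a lossy codec in the middle, the split result matches remote inference exactly. What FCM adds is compression of `features` on the way across the network, with the goal of keeping the final output as close to this reference as possible.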

FCM in Action: A Visual Comparison

The next slides show how object detection performance holds up under different encoding methods. Rather than focusing on visual quality, the comparison looks at how well a neural network detects and classifies objects in each version of the sequence.

In all four panels, the image is the same: two cyclists riding past a tree, with a third person farther in the background. The top left quadrant shows the uncompressed source, where the network correctly identifies all three people and both bicycles. That serves as the reference.

In the top right, VVC encodes the video at 319 kbps. Detection for the main cyclists is still accurate, but the third person in the background is missed entirely. Compression noise has degraded the input enough that the network no longer sees the smaller object.

The bottom left shows VCM at 175 kbps. It performs slightly better, recovering the third person with lower confidence, but it still drops detail.

FCM, shown in the bottom right at just 154 kbps, recovers all detections, including the distant person. According to Velibor, the confidence level for that third detection under FCM is 98 percent, nearly matching the 99 percent score for the uncompressed version.
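For a sense of scale, the three bitrates in this example can be compared directly. This is a simple single-point calculation on the numbers quoted for this one frame, not the methodology behind any benchmark table, and `bitrate_saving_percent` is a helper name of my own.

```python
def bitrate_saving_percent(anchor_kbps: float, test_kbps: float) -> float:
    """Percentage by which test_kbps is lower than anchor_kbps."""
    return 100.0 * (anchor_kbps - test_kbps) / anchor_kbps

# Bitrates quoted for the cyclist example
vvc_kbps, vcm_kbps, fcm_kbps = 319, 175, 154

vcm_vs_vvc = bitrate_saving_percent(vvc_kbps, vcm_kbps)
fcm_vs_vvc = bitrate_saving_percent(vvc_kbps, fcm_kbps)
fcm_vs_vcm = bitrate_saving_percent(vcm_kbps, fcm_kbps)

print(f"VCM vs VVC: {vcm_vs_vvc:.1f}% lower bitrate")
print(f"FCM vs VVC: {fcm_vs_vvc:.1f}% lower bitrate")
print(f"FCM vs VCM: {fcm_vs_vcm:.1f}% lower bitrate")
```

In this example, FCM uses roughly half the bitrate of VVC while recovering the detection that VVC missed.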

To clarify what you’re seeing here, these are not outputs from the FCM decoder. Because FCM doesn’t reconstruct video, the overlays are applied to the original uncompressed frames for visualization. The detections come from running the neural network on the decoded outputs of each method.

Velibor also explained that FCM includes preprocessing steps like spatial and temporal subsampling to reduce complexity before encoding. VVC, by contrast, simply increases quantization to hit the target bitrate. That helps explain why FCM can deliver better detection accuracy at lower bitrates; it keeps the features the network needs, not the pixels a viewer might want.
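To illustrate what spatial and temporal subsampling does to the amount of data, here is a minimal sketch using plain decimation on a feature tensor. The factor of two in each dimension and the function name `subsample` are assumptions for the example. The actual preprocessing in FCM may filter or learn the reduction rather than simply dropping samples.

```python
import numpy as np

def subsample(features: np.ndarray, temporal_step: int = 2,
              spatial_step: int = 2) -> np.ndarray:
    """Keep every n-th frame and every m-th sample in height and width.

    `features` has shape (frames, channels, height, width).
    Plain decimation is an assumption made for this sketch.
    """
    return features[::temporal_step, :, ::spatial_step, ::spatial_step]

# Eight frames of four 32x32 feature maps (zeros are fine for a size check)
features = np.zeros((8, 4, 32, 32), dtype=np.float32)
reduced = subsample(features)

reduction_factor = features.size // reduced.size

print(features.shape, "->", reduced.shape)
print("values reduced by a factor of", reduction_factor)
```

Halving the frame rate and both spatial dimensions cuts the number of values by a factor of eight before the encoder spends a single bit, which is a very different lever from raising the quantization step.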

Key Benefits of FCM

Hari next outlined the architectural and practical advantages of FCM.

He pointed out that schematically, FCM shifts video coding from a traditional one-to-many broadcast model toward many-to-one or one-to-one architectures where each sensor acts as a feature stream source. Instead of pushing all inference to the cloud, compute is distributed, leveraging on-device AI accelerators to extract features before transmission. This enables scalability, especially in low-bandwidth or remote environments, by reducing both bitrate and processing load on the server side. Hari emphasized that these accelerators are becoming cheaper and will soon be standard in next-gen sensors.

This approach also enhances privacy by transmitting only task-specific features, not full video. For example, a system counting pedestrians can ensure it captures no facial detail. Unlike traditional video, which allows unlimited reuse, FCM enables tighter control over what downstream applications can infer, making it inherently more privacy-preserving.

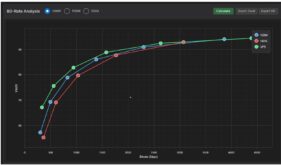

The next question relates to how the performance of FCM is benchmarked. This slide compares object detection performance across bitrates, using mean average precision (mAP) instead of traditional metrics like PSNR.

Velibor explained that while visual codecs focus on human perception, the key metric here is how accurately a neural network detects objects after compression. The chart shows that FCM outperforms VVC at every bitrate tested. At 100 kbps, for example, VVC yields about 26 percent mAP, while FCM is already above 40 percent.

Looking at it from the other direction, if your application requires a certain minimum mAP for reliable detection, say 50 percent, FCM can reach that threshold at about 250 kbps. VVC would require more than twice that bitrate to hit the same level.

The table below the graph shows percentage bitrate savings for FCM versus VVC across different content classes and operating modes. In random access mode, FCM reduces bitrate by over 72 percent overall. Even in low delay mode, which is typically harder to compress efficiently, it still saves more than 57 percent.

Velibor also noted that this performance isn’t just theoretical. The team has already implemented real-time FCM encoding on GPU, well before the final version of the standard is released.

FCM Block Diagram

Velibor revisited the FCM codec architecture to explain what each stage of the pipeline does.

On the encoder side, intermediate features from a neural network are first passed through a temporal downsampling step to reduce redundancy across frames. These are then processed using a multi-scale fusion module that condenses spatial information, essentially an autoencoder trained on feature maps.

Next, the reduced features are packed and quantized, similar to traditional video encoding. This is followed by a feature refinement stage, one of the main innovations introduced by FAU, which prepares the data for entropy coding using VVC. The decoder runs the same steps in reverse: decoding the compressed stream, dequantizing, unpacking, and reconstructing the intermediate features via upsampling and fusion reversal.
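The packing and quantization steps can be sketched as follows. This is an illustration under my own assumptions, not the reference software: feature maps are tiled into a single 2D frame, then mapped to 10-bit integers with a uniform quantizer, and the decoder side reverses both steps. The function names, the tile layout, and the 10-bit depth are all hypothetical choices. Fusion, refinement, and the VVC coding stage are omitted.

```python
import numpy as np

def pack(features: np.ndarray, cols: int) -> np.ndarray:
    """Tile (channels, h, w) maps into one 2D frame, `cols` maps per row.

    Assumes the channel count is divisible by `cols`.
    """
    c, h, w = features.shape
    rows = c // cols
    tiled = features.reshape(rows, cols, h, w).transpose(0, 2, 1, 3)
    return tiled.reshape(rows * h, cols * w)

def unpack(frame: np.ndarray, cols: int, h: int, w: int) -> np.ndarray:
    """Inverse of pack: recover the (channels, h, w) tensor from the frame."""
    rows = frame.shape[0] // h
    tiled = frame.reshape(rows, h, cols, w).transpose(0, 2, 1, 3)
    return tiled.reshape(rows * cols, h, w)

def quantize(x: np.ndarray, bits: int = 10):
    """Uniform quantization to unsigned integers, returning the range used."""
    lo, hi = float(x.min()), float(x.max())
    scale = (hi - lo) or 1.0          # avoid division by zero on flat input
    levels = 2 ** bits - 1
    q = np.round((x - lo) / scale * levels).astype(np.uint16)
    return q, lo, hi

def dequantize(q: np.ndarray, lo: float, hi: float, bits: int = 10) -> np.ndarray:
    """Map quantized integers back to approximate floating-point values."""
    levels = 2 ** bits - 1
    return q.astype(np.float32) / levels * (hi - lo) + lo

rng = np.random.default_rng(2)
features = rng.normal(size=(16, 8, 8)).astype(np.float32)

# Encoder side: pack, then quantize
frame = pack(features, cols=4)
q, lo, hi = quantize(frame)

# Decoder side: dequantize, then unpack
restored = unpack(dequantize(q, lo, hi), cols=4, h=8, w=8)
max_error = float(np.max(np.abs(restored - features)))

print("packed frame shape:", frame.shape)
print("max reconstruction error:", max_error)
```

Packing is lossless, so the only error in this round trip comes from quantization, and it is bounded by half a quantization step. Laying the maps out as a 2D frame is what lets a conventional video coder such as VVC take over from there.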

Velibor noted that while VVC is currently used for entropy coding, the long-term plan includes replacing it with a neural entropy coder. He emphasized that FCM is specifically designed to exploit GPU and NPU acceleration on edge devices, allowing part of the network to run locally with minimal added compute load.

FAU’s Contributions to FCM

The speakers next focused on FAU’s contributions to FCM.

FAU has been deeply involved in the development of FCM since the beginning, starting with a joint proposal submitted alongside InterDigital in response to MPEG’s call for proposals. Since then, FAU has contributed multiple technical tools to the standard, including core technology now adopted into the reference software. Their team continues to develop improvements that are currently under evaluation as Core Experiments. FAU is also one of the primary contributors to the anchor results and serves as a main cross-checking group for validating the standard’s performance.

FCM Implementation Timeline: Real-Time FCM

As the speakers concluded the technical deep dive, they shifted their focus to timing and implementation.

Hari explained that the MPEG timeline for FCM calls for a Committee Draft in October 2025, a Draft International Standard in January 2026, and finalization by July. FAU has already built a working real-time implementation and continues to evolve it in parallel with the standard. Because the team participates in every MPEG meeting, they’re able to integrate changes as they happen, keeping the implementation closely aligned with the spec.

Commercialization will be led by OP Solutions, the industry partner sponsoring FAU’s research. According to Hari, FAU’s role is to provide the technology foundation and help support OP Solutions as they bring FCM-based products to market. OP Solutions is expected to raise funding in the second half of 2025 and begin building out the business and sales channel. Hari also noted that early partners may influence the direction of the standard by helping define new use cases and feature requirements for future versions of FCM.

When asked about near-term applications, Hari pointed to several sectors already feeling the pressure of video-scale problems. These include industrial automation, public safety, defense, and autonomous vehicles — all areas where real-time video is used to make decisions, not just capture events. FCM’s ability to offload computation and reduce bandwidth makes it a good fit. He also mentioned infrastructure monitoring, agriculture, and healthcare as areas with strong privacy requirements where a feature-based approach could offer clear advantages. For example, a system designed to count pedestrians could do so without capturing any facial identity, limiting both privacy risk and data liability.

As for licensing, Hari said a royalty-bearing model is likely. Because FCM is expected to be implemented by both device makers and service providers, a structure similar to VVC or HEVC would make sense. He noted that pool-based approaches have worked reasonably well in the past and would be a logical fit here as well.

FAU’s Award-Winning Paper

To close, Velibor called attention to a recent paper presented by their team at the 2025 IEEE International Conference on Consumer Electronics, which takes place during CES.

Titled Enabling Next-Generation Consumer Experiences with Feature Coding for Machines, the paper earned a best presentation award and will be shared alongside this recording. Both speakers credited their PhD students for playing a significant role in the standardization effort and expressed appreciation for the chance to present their work to the Streaming Media audience.

Streaming Learning Center Where Streaming Professionals Learn to Excel
