A few weeks ago, I wrote about the VMAF phone model, which predicts VMAF scores for viewers watching on a mobile phone. Since then, I gave a presentation on VMAF at NAB and ran some calculations comparing the phone and default models. I share the results below.

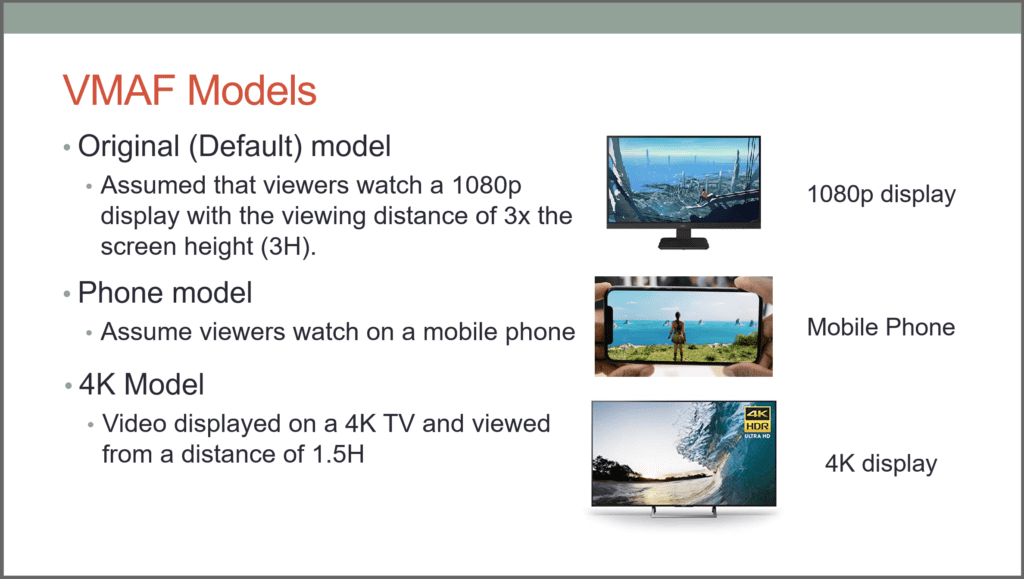

First, have a quick look at the three VMAF models shown on the slide below. The models are self-explanatory. In practice, each model produces its own score, computed by whichever objective quality metric program you’re using.

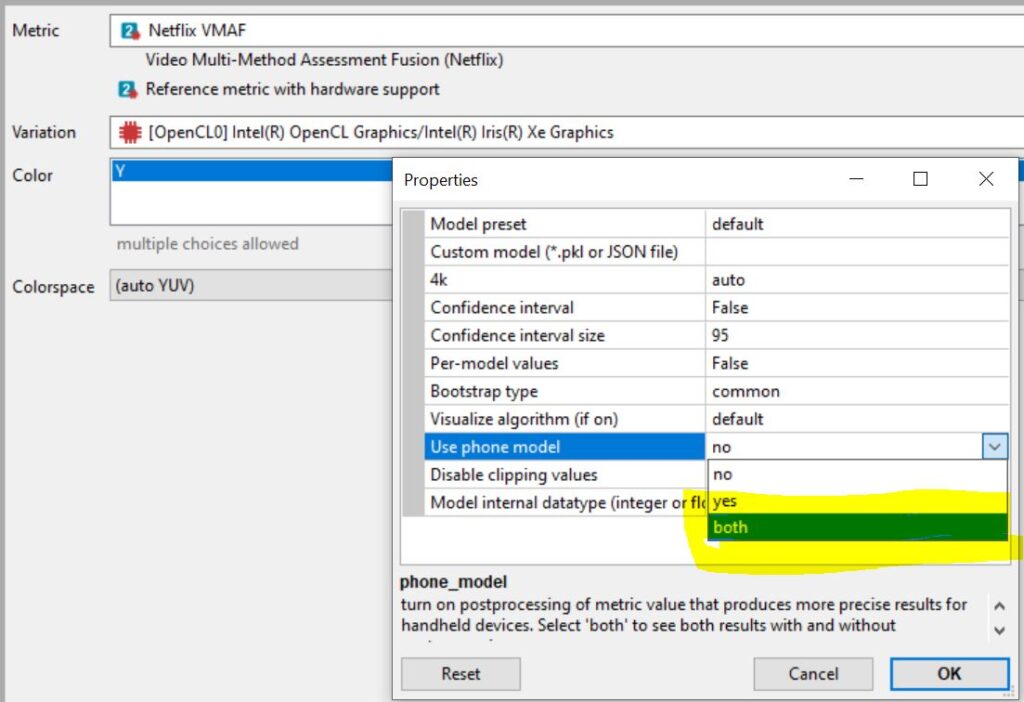

I explain how to use Netflix’s free VMAF Master to compute these scores in this post. With the Moscow State University Video Quality Measurement Tool, my preferred desktop tool, you can designate the Phone model, or run both.

Whichever tool you use, you end up with one score for the phone model and a different score for the default model. How do the two compare? Well, remember that VMAF scores map to subjective ratings and that scores higher than 80 predict an excellent rating. Remember also that RealNetworks researchers found that a score above 93 is “either indistinguishable from original or with noticeable but not annoying distortion.” Finally, note that a differential of 6 VMAF points equals a Just Noticeable Difference, defined as a difference that viewers would notice 75% of the time.
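To make those three reference points concrete, here is a minimal Python sketch that applies them to a score or a pair of scores. The constant and function names are my own for illustration, and treating a gap of exactly 6 points as noticeable is an assumption; the thresholds themselves come from the paragraph above.

```python
# Thresholds quoted in the text above; the names are hypothetical.
EXCELLENT_FLOOR = 80.0  # scores higher than 80 predict an excellent rating
REALNETWORKS_FLOOR = 93.0  # above 93: indistinguishable or not-annoying distortion
JND_POINTS = 6.0  # 6 VMAF points is roughly one Just Noticeable Difference


def describe_score(score: float) -> str:
    """Return which reference band a single VMAF score falls into."""
    if score > REALNETWORKS_FLOOR:
        return "above 93"
    if score > EXCELLENT_FLOOR:
        return "above 80"
    return "80 or below"


def is_noticeable(score_a: float, score_b: float) -> bool:
    """True if the gap between two scores reaches one JND (assumed inclusive)."""
    return abs(score_a - score_b) >= JND_POINTS


if __name__ == "__main__":
    # Placeholder scores for illustration only, not measurements.
    print(describe_score(95.0))
    print(is_noticeable(96.0, 91.0))
```

The same two checks work for either model, since both the phone and default scores are read against the same 93-point and 6-point yardsticks in this article.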

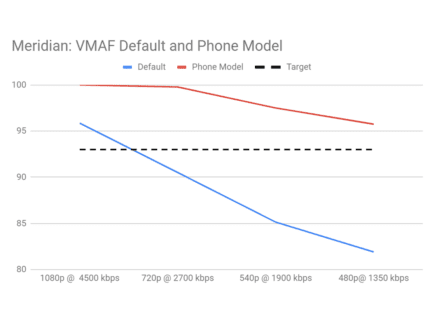

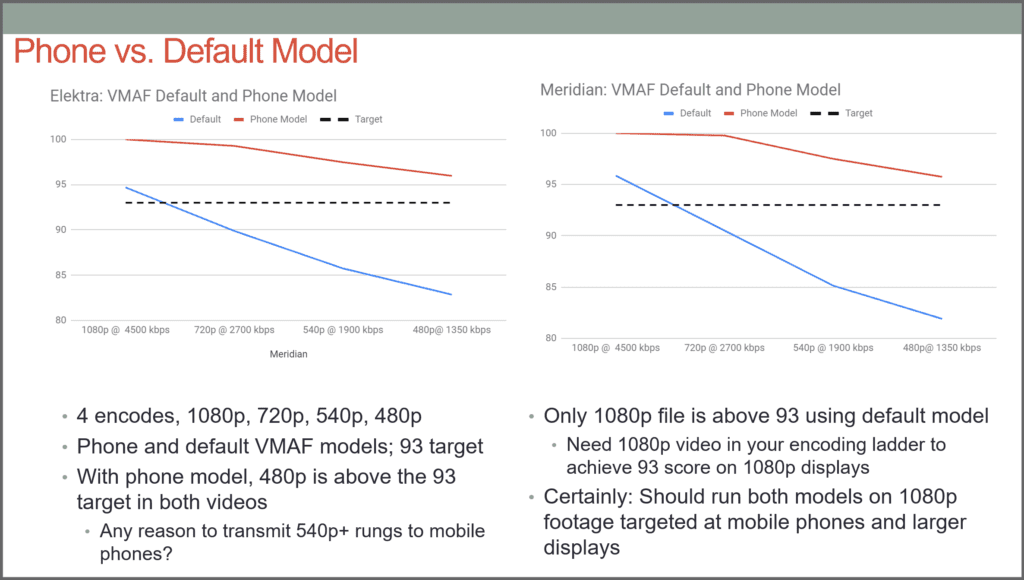

As the two graphs show, I measured the difference between the default and phone models with two two-minute test clips, one from the movie Elektra, the other from the Netflix test clip Meridian. Specifically, for each clip I created four files, at 1080p, 720p, 540p, and 480p, and measured the scores with both models. In both graphs, red is the phone model and blue the default model, and the dotted line is a VMAF score of 93.

For both movies, all four files score above 93 using the phone model, and the difference between the 1080p and 480p files is less than 6 VMAF points, so most viewers wouldn’t notice any difference. This seems to indicate that if you’re sending 540p or higher resolution files to mobile phones, you could be wasting bandwidth dollars (and more of my monthly allotment) to deliver additional quality that your viewers won’t notice.
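The reasoning in that paragraph can be sketched as a small selection rule: pick the lowest resolution that still scores above 93 and sits less than one JND (6 points) below the top rung. The function name and the scores in the usage example are hypothetical placeholders, not my measurements; plug in your own numbers.

```python
def lowest_sufficient_rung(scores, floor=93.0, jnd=6.0):
    """Return the smallest resolution height that scores above `floor`
    and is less than `jnd` points below the highest-resolution file.

    `scores` maps resolution height (e.g. 1080) to a VMAF score.
    Falls back to the highest resolution if no rung qualifies.
    """
    top = max(scores)  # tallest resolution in the ladder
    candidates = [
        height
        for height, score in scores.items()
        if score > floor and scores[top] - score < jnd
    ]
    return min(candidates) if candidates else top


if __name__ == "__main__":
    # Made-up scores for illustration only.
    phone_scores = {1080: 99.0, 720: 98.0, 540: 96.5, 480: 95.0}
    default_scores = {1080: 95.0, 720: 90.0, 540: 84.0, 480: 80.0}
    print(lowest_sufficient_rung(phone_scores))
    print(lowest_sufficient_rung(default_scores))
```

This is only a rule of thumb built from the two thresholds; it says nothing about bitrate, so treat the result as a starting point for your own tests.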

At the other end of the spectrum, the 1080p file was the only resolution that posted a default score over 93, so if you’re sending 720p video or below to TV, computer, notebook, or tablet viewers, you might consider upping the resolution to 1080p.

As I understand it, Netflix calibrates VMAF with actual subjective trials, which means that these numbers are confirmed with human eyes. You’d certainly want to run your own confirmation tests, but if you’re sending 540p videos or higher to smartphone viewers, you may be able to save some cash without degrading viewer QoE.

Streaming Learning Center Where Streaming Professionals Learn to Excel
