This article discusses the evolution of the encoding ladder from the fixed ladder presented by Apple in Tech Note TN2224 to context-aware encoding, which creates a ladder that considers not only the encoding complexity of the content but also the producer’s QoE and QoS metrics. The encoding ladder embodies the most significant encoding decisions made by encoding professionals, and understanding this evolution is critical to optimal encoding and delivery.

Introduction

The encoding ladder is the set of configurations used to make live and VOD videos available over a spectrum of data rates and resolutions for display on a range of devices over a range of connection speeds. Every adaptive bitrate (ABR) technology uses encoding ladders to create these multiple files.

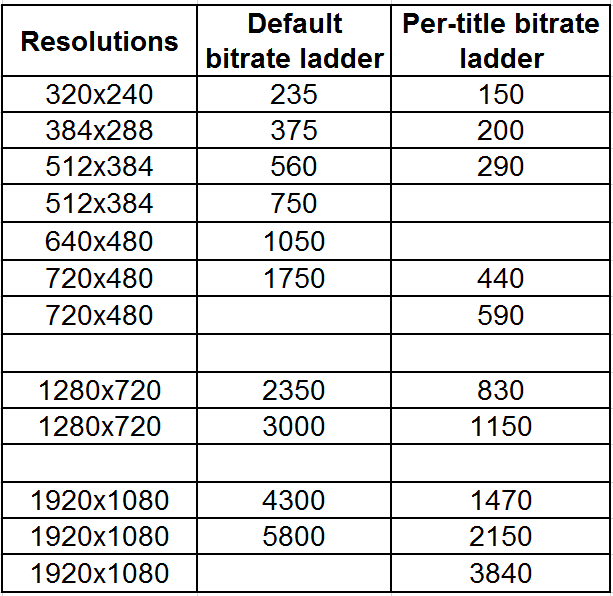

The first major encoding ladder configuration was presented by Apple in Tech Note TN2224, which has since been supplanted by the HLS Authoring Specification for Apple Devices. Table 1 is the encoding ladder from TN2224.

Table 1. The encoding ladder presented in Apple Tech Note TN2224.

To explain, using this encoding ladder, each VOD or live video file would be encoded into ten different streams, ranging in quality from an audio-only stream at 64 kbps to a 1080p audio/video stream at 8564 kbps. It’s hard to overstate the importance of Apple’s encoding ladder as many large producers used the ladder as is or with only minor modifications.

Optimization

The first major modifications to the fixed bitrates shown in Table 1 came from frame-by-frame optimization technologies like those marketed by Beamr and EuclidIQ, or available via FFmpeg and some encoding tools as Capped CRF. These techniques are applied to individual rungs in the encoding ladder and adjust the data rate up and down according to the underlying complexity of the content. They don’t change the number of rungs in the ladder or the resolution of those rungs, just the data rate of each rung in the ladder.

Beyond Beamr and EuclidIQ, several vendors offer optimization technologies, including Elemental’s QVBR, Harmonic’s EyeQ, and Crunch Media Works.
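As a rough illustration of the Capped CRF approach mentioned above, the following Python sketch builds an FFmpeg command line for a single rung. The helper name `capped_crf_args`, the CRF value, the cap, and the 2x buffer size are all assumptions chosen for illustration, not recommendations from any vendor; the FFmpeg options themselves (`-crf`, `-maxrate`, `-bufsize`) are standard libx264 controls.

```python
from typing import List


def capped_crf_args(src: str, dst: str, height: int, crf: int, cap_kbps: int) -> List[str]:
    """Build an FFmpeg argument list for one ladder rung using capped CRF.

    CRF lets the encoder spend fewer bits on easy content, while
    -maxrate/-bufsize cap the data rate on hard content.
    """
    return [
        "ffmpeg", "-y", "-i", src,
        "-vf", f"scale=-2:{height}",      # keep aspect ratio, even width
        "-c:v", "libx264",
        "-crf", str(crf),                 # quality target (illustrative value)
        "-maxrate", f"{cap_kbps}k",       # the "cap" on the rung's data rate
        "-bufsize", f"{2 * cap_kbps}k",   # assumed 2x cap; tune for your player
        "-c:a", "aac", "-b:a", "128k",
        dst,
    ]


if __name__ == "__main__":
    # Hypothetical file names and settings.
    print(" ".join(capped_crf_args("input.mp4", "out_1080p.mp4", 1080, 23, 6000)))
```

Note that the resolution and the number of rungs stay fixed here; only the data rate of the rung floats beneath the cap, which is what separates optimization from per-title encoding.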

Introducing Per-Title Encoding

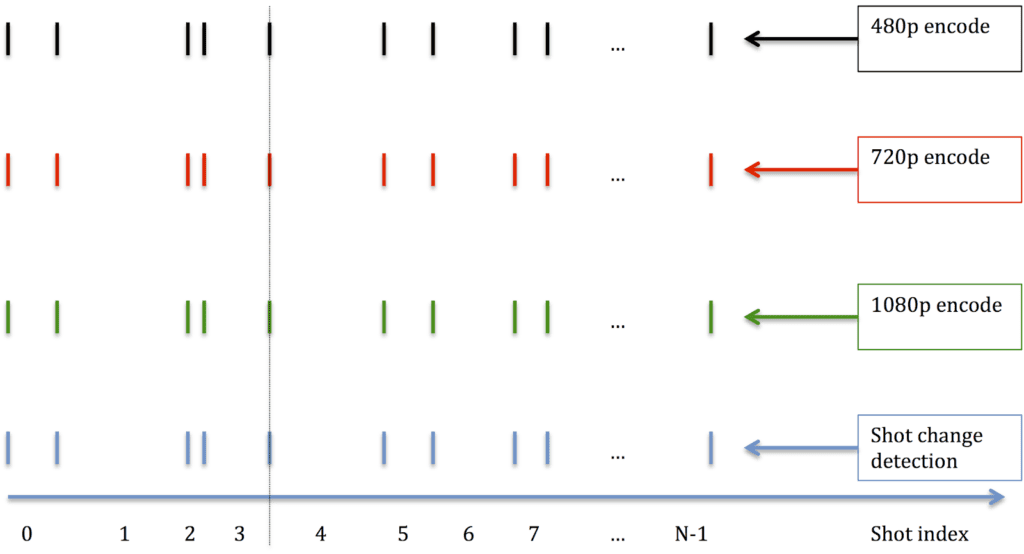

In December 2015, Netflix introduced per-title encoding. To determine the optimal ladder, Netflix encodes each video in multiple resolutions and data rates to identify the rungs that deliver the best quality at each relevant data rate. Unlike optimization technologies, Netflix’s technique can vary both the number of rungs in the ladder and their resolutions. You see this in Table 2, which shows the default ladder used by Netflix before implementing per-title, and the customized ladder for Netflix’s animated original BoJack Horseman.

Table 2. Netflix’s per-title can change both the number of rungs and their resolution.

Changing the number of rungs and their resolution can save both encoding and storage costs. That is, an easy-to-encode video that looks great at 1080p at 3 Mbps doesn’t need the same number of rungs as an action movie that requires 8 Mbps for acceptable 1080p quality. Also, as you can see in the ladder in Table 2, animated videos (and many others) retain higher quality at lower data rates when encoded at a larger resolution. For example, at 1750 kbps, the default ladder encoded at 720×480 resolution, whereas the customized ladder encoded at 1920×1080 resolution.
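The selection idea described above, encoding at multiple resolutions and data rates and keeping the best-quality option at each data rate, can be sketched in Python as follows. This is a simplified illustration, not Netflix’s actual algorithm; the `TrialEncode` type, the `pick_rungs` helper, and the quality scores in the example are hypothetical, and the quality field can be any higher-is-better metric.

```python
from typing import Dict, List, NamedTuple


class TrialEncode(NamedTuple):
    height: int      # vertical resolution of the test encode
    kbps: int        # data rate of the test encode
    quality: float   # any higher-is-better quality score


def pick_rungs(encodes: List[TrialEncode]) -> List[TrialEncode]:
    """Keep the best-quality resolution at each data rate, then drop
    rungs that cost more bits without improving on the rung below."""
    best: Dict[int, TrialEncode] = {}
    for e in encodes:
        current = best.get(e.kbps)
        if current is None or e.quality > current.quality:
            best[e.kbps] = e

    ladder: List[TrialEncode] = []
    for kbps in sorted(best):
        candidate = best[kbps]
        if ladder and candidate.quality <= ladder[-1].quality:
            continue  # no quality gain for the extra data rate
        ladder.append(candidate)
    return ladder


if __name__ == "__main__":
    # Hypothetical scores for an easy-to-encode animated title.
    trials = [
        TrialEncode(480, 1750, 80.0),
        TrialEncode(1080, 1750, 88.0),
        TrialEncode(1080, 3000, 95.0),
        TrialEncode(1080, 5800, 95.0),
    ]
    for rung in pick_rungs(trials):
        print(rung)
```

With these made-up scores, the 1750 kbps rung comes out at 1080p rather than 480p, and the 5800 kbps rung is dropped because it adds data rate without adding quality.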

Note that Netflix uses a “brute-force” technique that produces hundreds of test encodes for each video to identify the absolutely optimal ladder, which you can do when your videos are watched by millions of viewers. Most companies that have implemented per-title encoding (rather than optimization) analyze the file using a variety of techniques to gauge complexity and, based upon this measure, identify the necessary rungs and their resolution. Companies in this space include Bitmovin (Per-Title Encoding), which won the per-title technology bakeoff at the most recent Streaming Media East, and Mux (Per-Title Encoding). One of the few encoders you can actually buy with per-title features is the Cambria encoder from Capella Systems, whose Source Adaptive Bitrate Ladder (SABL) I review here.

While this method is generally more efficient than pure optimization, there are several caveats. For example, you’ll probably want to set a bitrate maximum and may want to specify specific resolutions to be included in the ladder, perhaps to match different windows in your webpages. You may also want to specify a minimum data rate included in the encoding ladder, even if the video is complex and hard to encode. Not all per-title technologies support these options.
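To make these caveats concrete, here is a small Python sketch that checks a generated ladder against a bitrate maximum, a required low-bitrate rung, and a set of required resolutions. The `check_ladder` helper and all of the thresholds are hypothetical; a real per-title product would expose these controls in its own way, if at all.

```python
from typing import List, Set, Tuple

Rung = Tuple[int, int]  # (height in pixels, data rate in kbps)


def check_ladder(ladder: List[Rung], max_kbps: int, floor_kbps: int,
                 required_heights: Set[int]) -> List[str]:
    """Return human-readable problems; an empty list means the
    ladder satisfies all three constraints."""
    if not ladder:
        return ["ladder is empty"]

    problems: List[str] = []
    top = max(kbps for _, kbps in ladder)
    low = min(kbps for _, kbps in ladder)

    if top > max_kbps:
        problems.append(f"top rung {top} kbps exceeds maximum {max_kbps} kbps")
    if low > floor_kbps:
        problems.append(f"lowest rung {low} kbps is above required floor {floor_kbps} kbps")

    present = {height for height, _ in ladder}
    for height in sorted(required_heights - present):
        problems.append(f"missing required {height}p rung")
    return problems


if __name__ == "__main__":
    # Hypothetical ladder for a complex title.
    for problem in check_ladder([(1080, 9000), (720, 3000)], 8000, 500, {360, 720}):
        print(problem)
```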

Which Transitioned to Shot-Based Encoding

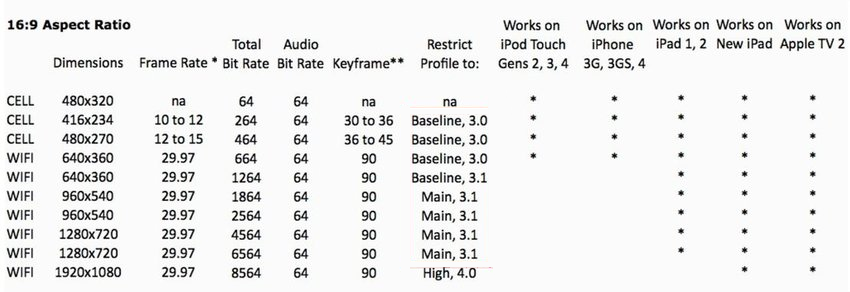

The next major advancement on per-title encoding was shot-based encoding, which was also invented by Netflix. Rather than divide the video into arbitrarily defined chunks sectioned off with regular keyframes, the encoder divides the video into separate shots using scene detection (Figure 1). Then the encoder gauges the complexity of each shot and encodes accordingly.

Figure 1. Shot-based encoding divides the video into shots rather than arbitrarily-sized segments.

Rather than divide the video into 6-second chunks with a 2-second keyframe interval, a five-second shot would be encoded as a separate segment, perhaps using a keyframe interval of 5 seconds. As shown in Figure 1, so long as each rung is segmented the same way, the player should have no problem changing rungs to react to changing delivery conditions. According to Netflix, this “Dynamic Optimization” produced bitrate savings of around 30% depending upon the codec and metric used for the analysis.
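The requirement that every rung be segmented the same way can be sketched in Python. The example assumes the scene-cut timestamps have already been produced by some scene detector, and it only shows how one shared list of cuts could drive identical keyframe placement on every rung through FFmpeg’s `-force_key_frames` option. The helper names and values are hypothetical, and this is not Netflix’s Dynamic Optimization, which also adjusts encoding parameters per shot.

```python
from typing import List, Tuple


def shot_segments(cuts: List[float], duration: float) -> List[Tuple[float, float]]:
    """Turn scene-cut timestamps into (start, end) segments covering the video."""
    bounds = sorted({0.0, duration, *(c for c in cuts if 0.0 < c < duration)})
    return list(zip(bounds[:-1], bounds[1:]))


def force_key_frames_arg(cuts: List[float]) -> str:
    """Format cut times as the comma-separated list FFmpeg expects."""
    return ",".join(f"{t:.3f}" for t in sorted(c for c in cuts if c > 0))


def rung_args(src: str, dst: str, height: int, kbps: int, cuts: List[float]) -> List[str]:
    """FFmpeg arguments for one rung; every rung receives the same cut list,
    so keyframes (and therefore segment boundaries) can line up across rungs.
    The encoder may still add its own keyframes unless configured otherwise."""
    return [
        "ffmpeg", "-y", "-i", src,
        "-vf", f"scale=-2:{height}",
        "-c:v", "libx264", "-b:v", f"{kbps}k",
        "-force_key_frames", force_key_frames_arg(cuts),
        "-an", dst,
    ]


if __name__ == "__main__":
    cuts = [5.0, 12.5]  # hypothetical scene-detection output, in seconds
    print(shot_segments(cuts, 20.0))
    for height, kbps in [(1080, 4500), (720, 2500)]:
        print(" ".join(rung_args("input.mp4", f"out_{height}p.mp4", height, kbps, cuts)))
```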

As far as I know, Netflix is the only vendor that uses shot-based encoding at this point, and, of course, they don’t offer it as a service to third parties. However, I’m guessing multiple vendors will start to offer this as a feature of their per-title encoding in the near term.

And Now Context-Aware Encoding

Where per-title encoding examines only the complexity of the underlying video, context-aware encoding also considers network and device playback statistics to create a unique ladder that optimizes quality according to how your viewers are actually watching your videos. This data can feed in from quality of experience (QoE) beacons in your player or network logs and includes details like the effective bandwidth of your viewers, the devices they’re using to watch the videos, and the distribution of viewing over your encoding ladder.

Brightcove’s Context-Aware Encoding (CAE) was the first to consider this data, and you can read about CAE in a white paper entitled Optimizing Mass-Scale Multi-Screen Video Delivery. You can also watch a highly technical interview with Brightcove’s Yuriy Reznik, one of the inventors of context-aware encoding, via a link presented below. At least two other companies, Epic Labs and Mux, revealed their versions of a similar idea at NAB 2019. Epic Labs offers its version in a product called LightFlow and has at least one high-volume user, while Mux added Audience Adaptive Encoding to its encoding stack in April.
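One way to picture how playback statistics might feed into ladder design is to estimate how viewing would distribute over a candidate ladder, given a sample of viewers’ effective bandwidth. The Python sketch below is a deliberately simple illustration and not the method used by Brightcove, Epic Labs, or Mux; the `rung_usage` helper and the sample numbers are hypothetical, and it assumes each viewer plays the highest rung that fits their bandwidth.

```python
from collections import Counter
from typing import Dict, List


def rung_usage(ladder_kbps: List[int], viewer_kbps: List[int]) -> Dict[int, float]:
    """Share of viewers landing on each rung, assuming each viewer plays the
    highest rung at or below their bandwidth (or the lowest rung if none fits)."""
    if not ladder_kbps or not viewer_kbps:
        raise ValueError("need at least one rung and one bandwidth sample")

    rungs = sorted(ladder_kbps)
    counts: Counter = Counter()
    for bandwidth in viewer_kbps:
        fitting = [r for r in rungs if r <= bandwidth]
        counts[fitting[-1] if fitting else rungs[0]] += 1

    total = len(viewer_kbps)
    return {r: counts[r] / total for r in rungs}


if __name__ == "__main__":
    # Hypothetical ladder and bandwidth samples from player QoE beacons.
    print(rung_usage([500, 1500, 3000], [400, 600, 2000, 5000]))
```

A rung that attracts almost no viewing is a candidate for removal, while a heavily used range of bandwidths may justify more closely spaced rungs; how a given product actually weighs these statistics is vendor-specific.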

Questions to Ask When Considering Per-Title Technologies

When evaluating per-title encoding technologies, ask the following questions.

- Does the technology optimize each rung separately (optimization) or change the number of rungs and their resolution?

- Is the technology shot-based or segment-based?

- Does the technology integrate QoE and QoS network statistics? If so, from where can it access this data?

- How does implementing the technology impact encoding costs?

- Can you specify specific resolutions that must be represented in the encoding ladder, as well as other details like minimum and maximum data rates?

- Is the technology codec agnostic or specific to H.264 or a different codec?

To Learn More

Here are some resources you can read/watch to learn more about per-title technologies and optimizing your encoding ladder.

NAB 2019: Epic Labs Talks LightFlow Per-Title Encoding, Streaming Media, April 2019

NAB 2019: Brightcove Talks Cost Savings and QoE Improvements From Context-Aware Encoding, Streaming Media, April 2019

NAB 19 Encoding and QoE Highlights: Here’s What Mattered, Streaming Media, April 2019

Buyers’ Guide to Per-Title Encoding, Streaming Learning Center, May 2019

Per-Title Encoding Resources, Streaming Learning Center, June 2018

Five Checks for your Encoding Ladder, Streaming Learning Center, August 2017

To Implement

If you’re interested in analyzing your encoding ladder or implementing per-title, here is a list of resources.

Saving on Encoding and Streaming: Deploy Capped CRF, Streaming Learning Center, August 2018.

Encoding and Packaging for Multiple Screen Delivery 2019 – this $49.95 course has three relevant modules on analyzing and creating your encoding ladder.

A Survey Of Per-Title Encoding Technologies, Streaming Learning Center, May 2019

I (Jan Ozer) have also helped several companies implement per-title encoding via consulting arrangements. Contact me at Janozer@gmail.com if you’d like to discuss this further.

Streaming Learning Center Where Streaming Professionals Learn to Excel
