After three years or so in gestation, per-title encoding is becoming a required feature on most encoding platforms, whether in-house software or SaaS cloud providers. In this buyers’ guide, we’ll review a list of features to look for in per-title encoding offerings and present a testing structure to evaluate the contenders that make it to your short list. If you’re willing to get your hands a bit dirty, metaphorically speaking of course, you’ll be able to dig beneath the marketing hype and identify the unique strengths and weaknesses of each encoder that you consider.

By way of background, per-title encoding refers to technologies that assess the encoding complexity of each video and adjust encoding parameters accordingly. All per-title technologies adjust the encoding data rate, but as you’ll learn, many take it far beyond that.

Features and Characteristics

Let’s start with a list of features and characteristics to consider when evaluating per-title technologies. I’ll be brief because many of these are detailed, with examples, in the Spring 2018 “Buyer’s Guide to Per-Title Encoding.”

Bitrate control: Some per-title technologies assess the complexity of the entire video and still allow traditional constant bitrate (CBR) or variable bitrate (VBR) encoding. Others adjust the data rate of individual frames or scenes within the video, which rules out CBR and even an orderly constrained VBR. As an example, Netflix’s shot-based encoding encodes each shot separately, varying the data rate from shot to shot. While this technique suggests that it’s safe to abandon CBR for VOD delivery, many producers continue to insist on CBR encoding. If you’re one of them, make sure your per-title technology supports it.

Per-title or shot-based: Shot-based encoding is superior to older per-title encoding techniques because you can adjust GOP and segment size to fit the content, which makes more sense than arbitrarily fixed durations for both. Few commercial technologies currently offer this, but it is coming. Take some time to understand how each technology works, because its approach will affect overall effectiveness.

Changing rungs and resolutions: Some per-title technologies retain the number of rungs in the encoding ladder and their original resolution; others can adjust both. In the latter case, the encoder might produce a soccer match with eleven rungs spread from 180p to 1080p and peaking at 10Mbps, and a screencam with three rungs, one at 720p and two at 1080p with a max rate of 1Mbps. Encoders that can’t adjust rungs and resolution would produce eleven rungs for both, which for the screencam would waste encoding and storage cost and produce awful-looking video in the lower-resolution rungs.

Ability to set minimums and maximums: When you build a fixed bitrate ladder, you choose a minimum rate to deliver, say 400Kbps, because you want to serve viewers connecting at that bandwidth. With some per-title technologies, a hard-to-encode clip will trigger an increase in data rate for that lowest rung, say to 600Kbps. This means you can no longer deliver to that viewer connecting at 400Kbps. Similarly, you may have a maximum bitrate of 8Mbps because you can’t afford to deliver higher-quality streams. Again, with some technologies, you can’t set a maximum data rate, which could blow your economic model. Long story short, the ability to set minimum and maximum data rates is a highly desirable feature.
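To make the minimum and maximum idea concrete, here is a minimal Python sketch of the kind of constraint you would want a per-title system to honor. The function name `constrain_ladder` and its parameters are hypothetical, not any vendor's API; the sketch simply caps every rung at a maximum rate and pins the lowest rung at the rate you need to serve.

```python
def constrain_ladder(rungs_kbps, lowest_rung_kbps, max_kbps):
    """Apply a floor and a ceiling to a per-title ladder (illustrative only).

    rungs_kbps: bitrates proposed by the per-title system, in Kbps.
    lowest_rung_kbps: the rate the bottom rung must not exceed (e.g. 400).
    max_kbps: the highest rate you are willing to deliver (e.g. 8000).
    """
    # Cap every rung at the maximum, dropping duplicates created by the cap.
    rungs = sorted({min(rate, max_kbps) for rate in rungs_kbps})
    # Pull the bottom rung back down if the system pushed it too high.
    if rungs and rungs[0] > lowest_rung_kbps:
        rungs[0] = lowest_rung_kbps
    return rungs


# A hard-to-encode clip: bottom rung raised to 600Kbps, top rung to 10Mbps.
print(constrain_ladder([600, 1800, 4500, 10000], 400, 8000))
```

With the article's numbers (a 400Kbps floor and an 8Mbps ceiling), the example ladder comes back as 400, 1800, 4500, and 8000Kbps.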

Ability to output specific resolutions: Another function of an encoding ladder is to deliver streams of a certain resolution, say to match your website’s viewing window. If a per-title encoding technology can change the resolution of your rungs, check whether you can also specify a resolution that it must deliver.

Impact on encoding cost: Some per-title technologies work in a single pass, while others take as many as three passes, which can triple your encoding costs if you run your own encoding farm. This isn’t an issue if you’re using a cloud encoder with a specific price, but if you’re buying an encoder or per-title technology to deploy internally, be sure you understand the impact it will have on encoding time and cost.

Live vs. VOD: There are several live per-title encoding technologies, but I haven’t tested any of them, so I can’t discuss their effectiveness. So, if you’re primarily a live shop, per-title is now an option. However, if you also stream lots of VOD content, be aware that the best live per-title option might not be the best VOD option; depending upon comparative volume, it might make sense to re-encode live-encoded content with a VOD-oriented per-title technology.

Evaluating Top Rung Performance

So, you’ve done your homework, and you’ve narrowed down your options to two or three. What tests can you run to compare them? I would focus on top-rung performance, or how the per-title technology adjusts the configuration for the top rung in your encoding ladder.

Why? Because this is the most expensive stream and, in many high-bandwidth countries, may be the stream most frequently watched by your viewers. Even if it’s not, this analysis will tell you whether the per-title technology you’re evaluating is biased more toward bandwidth saving or quality protection, and how it falls into line with other proven per-title technologies.

Here’s how I would go about evaluating top-rung performance.

Set Your Expectations

Start with a look at your delivery logs and assess which streams your viewers are primarily watching. If your current max bitrate is 8Mbps and you’re primarily delivering your top quality stream, per-title should be able to deliver significant bandwidth savings with minimal perceived quality reduction. In addition, in this usage scenario, a system that doesn’t change stream resolution isn’t such a big deal because most viewers are watching 1080p anyway.

If your maximum rate is 4.5Mbps and you’re delivering a lot of 360p, 540p, and 720p streams in the 2Mbps to 4Mbps range, per-title probably won’t save much bandwidth. It could, however, dramatically improve your viewers’ quality of experience, particularly if the technology can adjust resolution and rungs to match complexity.

In addition, if your max bitrate is 8Mbps, you’re probably deploying per-title to reduce bandwidth costs without degrading top-rung quality. If your max bitrate is 4.5Mbps, you’re probably seeking to improve quality where necessary but also to reduce bandwidth on easier-to-encode clips, so your expectations will be completely different. The bottom line is to analyze where you are up front and set reasonable goals and expectations.

Choose Your Test Clips

Choose a small number of test clips, which should include a representative mix of easy-to-encode and hard-to-encode clips. The number of clips and duration is up to you; more and longer is always better, but also more time-consuming.

For guidance, when I evaluate per-title technologies for articles and presentations, I use about 20 clips between 1 and 4.5 minutes in duration, though most are 2 minutes long. However, because I’m evaluating for a wide range of uses, this sample includes clips ranging from screencams and PowerPoint-based videos to soccer matches and music videos. If you’re evaluating for a narrower range of content types, you can get away with fewer test videos.

Encode Your Clips at CRF 23

CRF stands for constant rate factor, which is an encoding mode available in x264, x265, VP9, and other codecs. Rather than encode to a target bitrate, CRF encodes to a certain quality level, which in x264 ranges from 0 to 51, with lower values delivering higher quality. In my experience, encoding a 1080p clip at CRF 23 delivers a file that will have a VMAF value of around 93, which was found in a RealNetworks study to be “either indistinguishable from original or with noticeable but not annoying distortion.” In other words, the quality should be good enough to distribute.

You can access CRF encoding in HandBrake or in FFmpeg via the command script below.

ffmpeg -i input.mp4 -vcodec libx264 -crf 23 -g 60 -keyint_min 60 -sc_threshold 0 output.mp4
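If you have more than a handful of test clips, you could script the command above rather than run it by hand. The following is a minimal Python sketch, assuming FFmpeg is installed and on your path and that your sources are MP4 files; the function names `crf_command` and `encode_folder` and the output naming scheme are my own placeholders.

```python
import subprocess
from pathlib import Path


def crf_command(src, dst, crf=23, gop=60):
    """Build the same FFmpeg argument list as the command shown above."""
    return [
        "ffmpeg", "-i", str(src),
        "-vcodec", "libx264",
        "-crf", str(crf),
        "-g", str(gop), "-keyint_min", str(gop),
        "-sc_threshold", "0",
        str(dst),
    ]


def encode_folder(src_dir, dst_dir, crf=23):
    """Encode every .mp4 in src_dir at the given CRF (hypothetical layout)."""
    out = Path(dst_dir)
    out.mkdir(parents=True, exist_ok=True)
    for src in sorted(Path(src_dir).glob("*.mp4")):
        dst = out / f"{src.stem}_crf{crf}.mp4"
        # check=True stops the batch if FFmpeg reports an error.
        subprocess.run(crf_command(src, dst, crf), check=True)


# Example (requires FFmpeg): encode_folder("sources", "crf23_outputs")
```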

Then, if you have the ability to compute VMAF ratings, do so on these clips.
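One way to compute VMAF is FFmpeg's `libvmaf` filter, which is only available if your FFmpeg build was compiled with libvmaf support. The sketch below builds that command and reads the mean score from the JSON log. It assumes the encoded file and the source share the same resolution and frame rate, and it assumes the log layout written by recent libvmaf versions (a `pooled_metrics` object), which may differ in your build; the function names are hypothetical.

```python
import json


def vmaf_command(encoded, source, log_path="vmaf.json"):
    """Build an FFmpeg libvmaf command: encoded file first, source second."""
    return [
        "ffmpeg", "-i", str(encoded), "-i", str(source),
        "-lavfi", f"libvmaf=log_path={log_path}:log_fmt=json",
        "-f", "null", "-",
    ]


def mean_vmaf(log):
    """Return the mean VMAF score from a parsed libvmaf JSON log.

    Assumes the log contains {"pooled_metrics": {"vmaf": {"mean": ...}}}.
    """
    return float(log["pooled_metrics"]["vmaf"]["mean"])


def read_mean_vmaf(log_path="vmaf.json"):
    """Load the JSON log written by the command above and return the mean."""
    with open(log_path, "r", encoding="utf-8") as handle:
        return mean_vmaf(json.load(handle))
```

If your log uses different field names, adjust `mean_vmaf` accordingly rather than trusting the layout assumed here.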

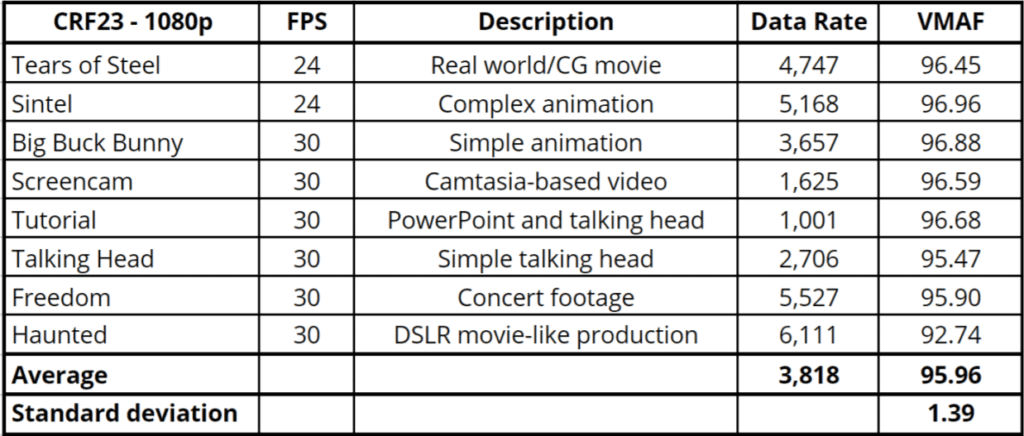

Table 1 shows the results of CRF 23 encoding and VMAF testing on several clips that I’ve used for broad-based testing in the past. Though the CRF 23 data rates vary from around 1 Mbps to over 6 Mbps, the VMAF values only range from 92.74 to 96.96, with a standard deviation of 1.39, a tight grouping which suggests that CRF 23 reliably delivers a VMAF rating of about 93 or slightly higher.

Table 1. CRF 23 output and VMAF ratings for eight test clips.

Upload Your Clips to YouTube and Test There

YouTube deploys a neural-network-based per-title encoding technology that you can use as a check on your CRF23/VMAF numbers. Upload your files to YouTube and download the top rung via a tool like the Wondershare Video Converter.

Then measure the data rate and VMAF score.
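If your tools don't report data rate directly, you can approximate it from file size and duration. This is a minimal sketch with a hypothetical function name; note that a figure computed from the whole file includes audio and container overhead, so it will run slightly higher than the video-only rate.

```python
def average_bitrate_kbps(size_bytes, duration_seconds):
    """Average data rate in Kbps: bytes * 8 bits, divided by seconds."""
    if duration_seconds <= 0:
        raise ValueError("duration must be positive")
    return size_bytes * 8 / duration_seconds / 1000


# A 90,000,000-byte file lasting two minutes averages 6,000 Kbps (6 Mbps).
print(average_bitrate_kbps(90_000_000, 120))
```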

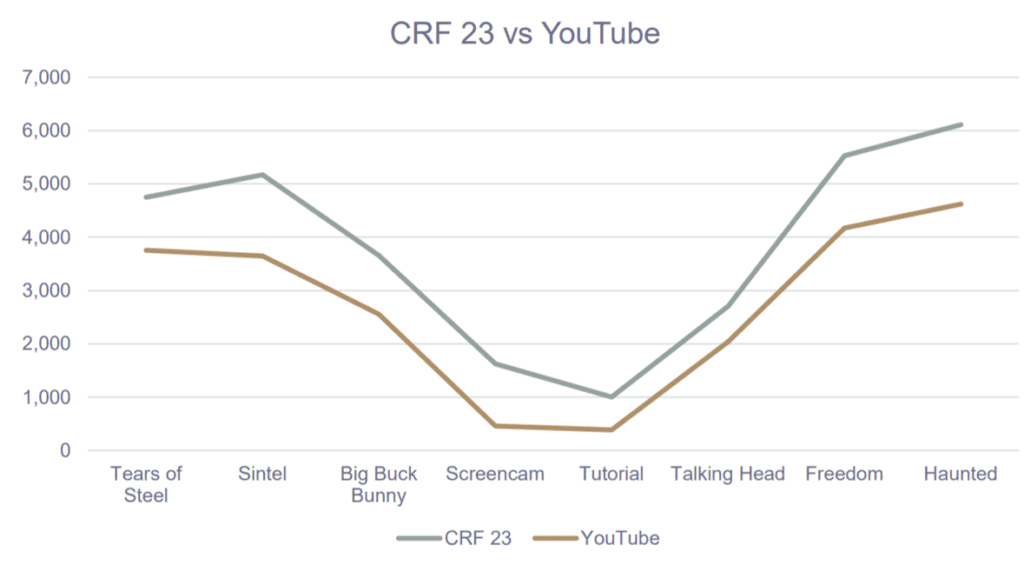

Figure 1 shows the data rates for the eight files in Table 1 as produced by YouTube (brown) and CRF 23 (green). On average, CRF 23 was about 1 Mbps higher and the VMAF score about 3 points higher, but the correlation is obvious; clearly, YouTube’s vision of encoding complexity aligns with that of CRF 23.

Figure 1. CRF23 data rates compared to YouTube at 1080p top rung.

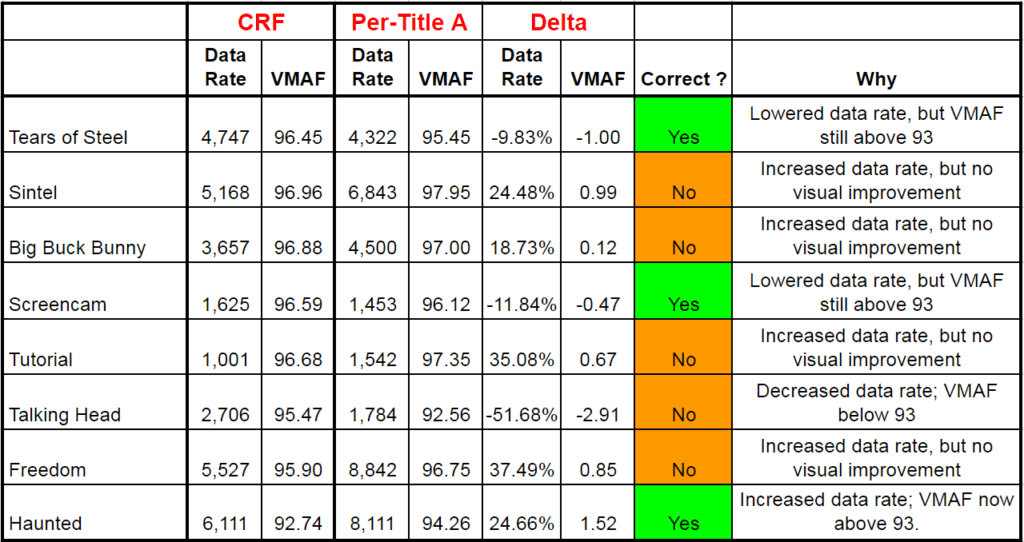

By performing these two exercises, you have the combined weight of the x264 development community, YouTube, and Netflix (via the VMAF rating) suggesting what a reasonable data rate is for the top rung of your encoding ladder. It’s time to test your candidate per-title systems and ascertain the data rate and VMAF value of their top-rung results. Then “grade” your per-title candidates as shown in Table 2, which shows the CRF numbers in the first two columns, the per-title technologies’ in the third and fourth, and the difference in the fifth and sixth. Then it evaluates whether the per-title technology is correct or incorrect.

Table 2. Evaluating per-title encoding technologies.

The grading rules are as follows:

- If the per-title technology decreases the data rate, as Per-Title A did for Tears of Steel, it’s the correct decision so long as it didn’t reduce the VMAF score below 93. In the case of Tears of Steel, the technology correctly perceived that it could produce acceptable quality at a lower bitrate.

- If the per-title technology decreases the data rate but also the VMAF score below 93, as with the talking head video, it’s an incorrect decision. You’re not implementing per-title to create ugly video, particularly not at the top of your ladder.

- If the per-title technology increases the data rate, it’s the correct decision if it increases the VMAF score from under 93 to over 93, as with the Haunted video. The technology correctly perceived that the video was hard to encode and needed a higher data rate for acceptable quality.

- If the per-title technology increases the data rate when the VMAF value is already over 93, as with the Freedom video, it’s an incorrect decision. Why boost the data rate by 37% when the video is already good enough for distribution and the 0.85 VMAF increase will be imperceptible to viewers? (A change of 6 VMAF points constitutes a Just Noticeable Difference that will be perceived by 75% of viewers.)
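The four rules above can be sketched as a small Python function. This is an illustration rather than the spreadsheet behind Table 2: the name `grade` is hypothetical, a score of exactly 93 is treated as acceptable (an assumption, since the rules only speak of "below" and "over" 93), and any case the rules don't cover, such as an unchanged data rate or an increase that still falls short of 93, is returned as "unclassified".

```python
def grade(crf_kbps, crf_vmaf, pt_kbps, pt_vmaf, target=93.0):
    """Grade a per-title top-rung decision against the CRF 23 baseline."""
    if pt_kbps < crf_kbps:
        # Rules 1 and 2: a lower data rate is fine only if quality holds up.
        return "correct" if pt_vmaf >= target else "incorrect"
    if pt_kbps > crf_kbps:
        # Rule 4: no reason to spend more when CRF 23 was already good enough.
        if crf_vmaf >= target:
            return "incorrect"
        # Rule 3: the increase lifted quality from under the target to over it.
        if pt_vmaf >= target:
            return "correct"
    # Not covered by the four rules above.
    return "unclassified"


# Hypothetical numbers, not taken from Table 2.
print(grade(5000, 95.0, 4000, 93.5))   # lower rate, quality still at target
print(grade(4000, 95.0, 5480, 95.85))  # higher rate, baseline already fine
```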

To be sure, there’s nothing magical about a VMAF score of 93; 92.9 isn’t dramatically ugly and 93.1 isn’t La La Land projected in Dolby Cinema. But when per-title technologies err, they typically err dramatically, as you see with the Freedom video, where the data rate increase clearly is unwarranted. Now you have the theoretical structure to call BS when the per-title technology’s machine learning, artificial intelligence, neural network, or whatever the special sauce is says the data rate should be 50% higher than that suggested by CRF23/VMAF/YouTube.

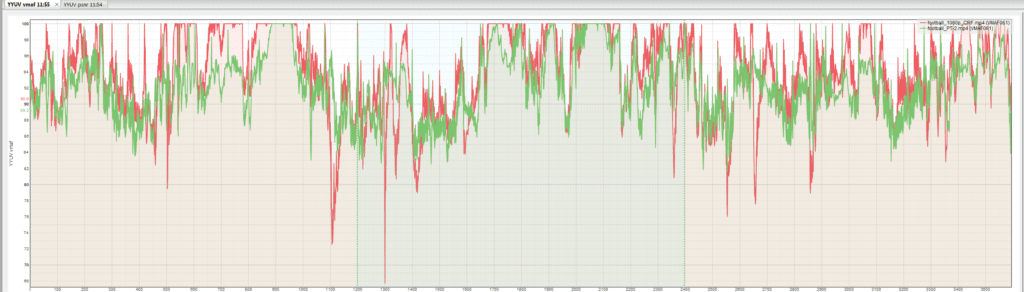

If you have a product like the Moscow State University Video Quality Measurement Tool (or Elecard VideoQuest), analyze the streams for transient quality problems, or dramatic drops in quality. You see this in Figure 2, where I’m comparing capped CRF (red), a rudimentary per-title approach, with another per-title technology (green). Though the average score is close, the multiple downward red spikes potentially foretell quality issues that would degrade QoE. I would be particularly concerned about this for shot-based or frame-based per-title technologies.
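If your quality tool can export per-frame scores, a rough way to flag transient problems is to look for frames that fall well below the clip's average. The sketch below is one simple heuristic, not how either tool works; the function name and the 10-point default are assumptions you should tune for your own content.

```python
def find_quality_dips(frame_scores, drop=10.0):
    """Return (frame_index, score) pairs scoring more than `drop` points
    below the clip's mean score."""
    if not frame_scores:
        return []
    mean = sum(frame_scores) / len(frame_scores)
    return [
        (index, score)
        for index, score in enumerate(frame_scores)
        if score < mean - drop
    ]


# Hypothetical per-frame VMAF scores with one sharp downward spike.
print(find_quality_dips([95, 96, 94, 70, 95]))
```

Two encodes with nearly identical average scores can produce very different lists here, which is the point of checking beyond the average.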

Figure 2. Analyzing the videos for transient quality issues.

What about the other rungs of the encoding ladder? When I analyze per-title technologies for the technology developer, I examine each rung as shown in Figure 4 to ensure optimal quality and decision making for technologies that can change the number of rungs and their resolution. For most companies comparing two technologies, however, that’s simply too much work. For them, I would disqualify any system that measures complexity substantially differently than CRF23 and VMAF 93 or exhibits the quality issues shown in Figure 2, and then choose among remaining systems based upon features and price.

Streaming Learning Center Where Streaming Professionals Learn to Excel
