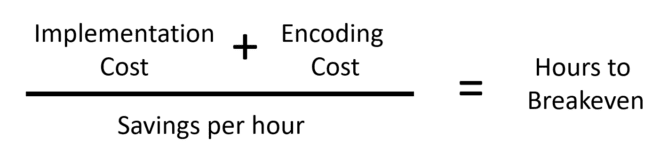

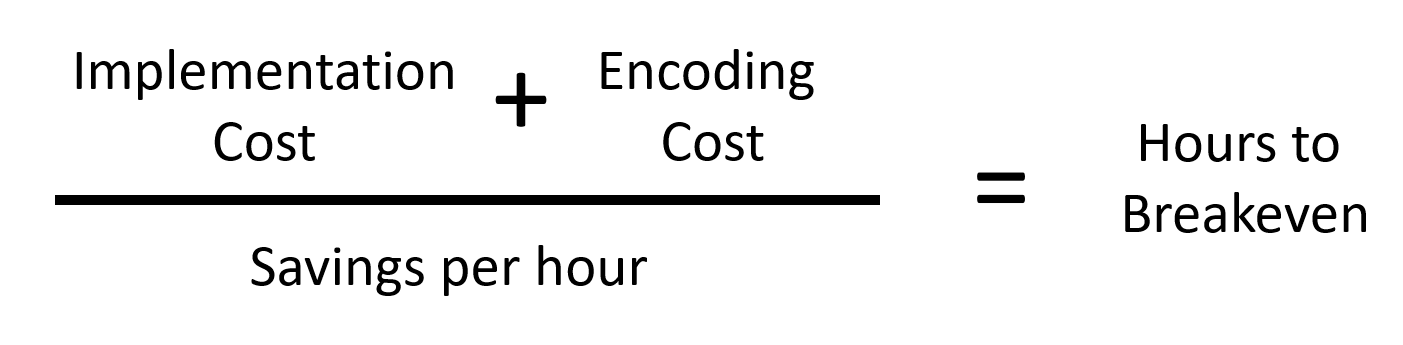

To a great degree, video codec adoption is driven by the simple break-even formula presented above. You put your costs on top, your savings per hour on the bottom, and come up with the number of hours of video you have to distribute to recoup your costs and start hitting the plus column.

If you’re in a TL;DR frame of mind, jump to the end of this article for a Google Sheet that can help with that analysis. I’ll also talk about exceptions to this formula, and what it means for VVC, AV1, and LCEVC.

For those who value the journey as well as the destination, let’s start with a historical view.

The last great streaming codec migration was from VP6 to H.264, which was driven by Adobe’s integration of H.264 decode into Flash in 2007.

What did the numerator look like?

Well, implementation cost, which covers player development and testing, was minimal because Adobe was in charge of making H.264 work in Flash in the handful of browsers available. Since most large producers were either already supporting H.264 for mobile or about to, for many companies encoding costs actually dropped when they stopped producing VP6.

What about the cost savings side?

In 2010, I estimated that H.264 was about 15% more efficient than VP6 when it came to compression performance. In the same year, Dan Rayburn pegged CDN pricing at as low as $0.015/GB for very large producers.

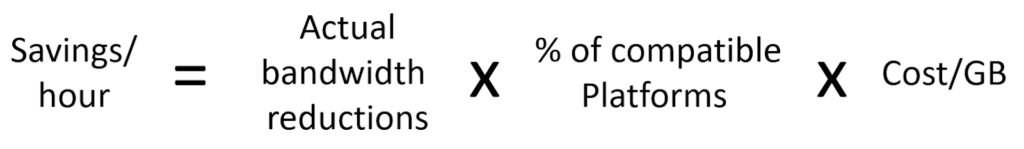

So large producers would save about $0.00225/GB on streams to viewers who could watch H.264, which was almost all viewers watching via browsers. At that time, this was most viewers for many services, so producers could harvest these savings on almost all streams that they delivered.
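The arithmetic behind that per-GB figure can be sketched in a few lines of Python. The function name is made up for illustration; the inputs are the 2010 CDN price and the 15% efficiency estimate quoted above.

```python
def savings_per_gb(cdn_price_per_gb: float, efficiency_gain: float) -> float:
    """Dollars saved for each GB that the older codec would have delivered."""
    return cdn_price_per_gb * efficiency_gain


# 2010 figures from the text: $0.015/GB CDN price, H.264 about 15% more efficient than VP6.
saving_2010 = savings_per_gb(cdn_price_per_gb=0.015, efficiency_gain=0.15)
print(f"${saving_2010:.5f}/GB")
```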

So, while producers didn’t save huge dollars switching from VP6 to H.264, since the costs of switching were so low, they broke even almost immediately.

Skyrocketing Implementation Costs

What’s happened since 2010? Both elements of cost, implementation and encoding, have skyrocketed while bandwidth savings have plummeted. This means that the number of streaming hours it takes to break even from adopting a new codec has increased tremendously.

Why the boost in implementation cost? Markets have fragmented and there’s no Adobe to point a finger at if playback doesn’t work. Every device you target for the new codec has to be tested, which is time-consuming and expensive.

Encoding costs themselves have also increased with the complexity of the algorithms. AWS Elemental MediaConvert charges $0.042/minute of H.264 1080p (two-pass) video, $0.336/minute (8x H.264) for HEVC, and $1.728/minute (41x H.264) for AV1.
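To see how those per-minute rates scale, here is a small Python sketch that converts them to a cost per hour and a multiple of the H.264 price. It uses only the three rates quoted above, treats each as the price of a single 1080p output, and the helper name is hypothetical.

```python
# Per-minute prices quoted in the text for a single 1080p output.
PRICE_PER_MINUTE = {"H.264": 0.042, "HEVC": 0.336, "AV1": 1.728}


def summarize(prices: dict) -> dict:
    """Return {codec: (cost per hour in dollars, multiple of the H.264 price)}."""
    base = prices["H.264"]
    return {
        codec: (round(price * 60, 2), round(price / base, 1))
        for codec, price in prices.items()
    }


summary = summarize(PRICE_PER_MINUTE)
for codec, (per_hour, multiple) in summary.items():
    print(f"{codec}: ${per_hour:.2f}/hour ({multiple}x H.264)")
```

A full encoding ladder multiplies these figures by the number and resolution of the rungs, which is why a complete 4K ladder costs so much more than a single 1080p output.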

If you encoded the 4K HEVC SDR ladder in the Apple HLS Authoring Specifications using MediaConvert, it would cost over $250 for one hour of video.

While these prices are top of the market, if it made economic sense to encode all videos to AV1 YouTube would be doing it. Instead, only the most popular videos get encoded with AV1; the vast majority still use H.264 or VP9.

So for the cost side of the equation–the numerator–the increase in the number of platforms supported and the boosts in encoding costs combine to dramatically increase the costs that must be recouped before breakeven.

Plummeting Savings

At the same time, bandwidth savings are plummeting as bandwidth costs continue to shrink, making it difficult to recoup the previously discussed costs. According to this May 2020 article, large-volume publishers were paying as low as $0.0006/GB, roughly four percent of the $0.015/GB cited above for 2010.

The fragmentation discussed above also limits the markets that you can target with the new codec. HEVC doesn’t play in most browsers and likely never will, so producers can’t spread encoding costs over streams distributed to these targets.

AV1 plays in most browsers, but in very few TVs (though many 2021 models), and mobile deployments appear to be pushed out to 2026-2027 (see David Ronca’s interview with John Porterfield here, about 31 minutes in). So, producers can’t spread the cost of encoding over devices in these markets.

Finally, though all three codecs deliver impressive efficiencies, remember that only top of ladder savings deliver bandwidth savings – the rest deliver improved QoS (see here).

That is, if your H.264 ladder has a top rung of 6 Mbps and your VVC ladder a top rung of 4 Mbps, you save about 33% on every top-rung stream that you deliver. But if you’re serving a 2 Mbps stream to a mobile viewer with an H.264 ladder, when you switch to VVC they still retrieve a 2 Mbps stream; there is no saved bandwidth. For rungs delivered below the top rung, there are quality improvements but minimal savings.
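A rough Python sketch of this effect follows. The split of viewing hours across rungs is invented for illustration, and the model is a simplification: it assumes that any viewer who was retrieving less than the new top rung keeps retrieving the same bitrate, so only traffic above the new top rung shrinks.

```python
def ladder_bandwidth_savings(viewing, new_top_mbps):
    """Fraction of total delivered bandwidth saved across the whole ladder.

    viewing: list of (mbps delivered with the old codec, share of viewing hours).
    Simplifying assumption: streams at or below new_top_mbps stay at the same bitrate.
    """
    old_total = sum(mbps * share for mbps, share in viewing)
    new_total = sum(min(mbps, new_top_mbps) * share for mbps, share in viewing)
    return 1 - new_total / old_total


# Hypothetical split of viewing hours across an H.264 ladder (not from the article).
h264_viewing = [(6.0, 0.5), (3.0, 0.3), (2.0, 0.2)]

top_rung_savings = 1 - 4.0 / 6.0  # 6 Mbps top rung replaced by a 4 Mbps top rung
overall_savings = ladder_bandwidth_savings(h264_viewing, new_top_mbps=4.0)
print(f"top rung: {top_rung_savings:.0%}, whole ladder: {overall_savings:.0%}")
```

With these made-up shares, the whole-ladder figure comes out noticeably lower than the top-rung figure, and it drops further as more viewing shifts to the lower rungs.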

I don’t mean to minimize the benefits of quality improvements in low ladder rungs; if you’ve got substantial viewers there, improved quality will increase viewing time and reduce churn. Still, these numbers are hard to compute as compared to actual bandwidth savings.

So, you’ve got dramatically lower savings per GB delivered, and they apply to only a fraction of your target viewers. Beyond that, unless your video distribution is top rung only, your actual bandwidth savings will be a fraction of the BD-Rate savings that codec developers claim. As these savings get smaller, the number of hours to break even gets larger.

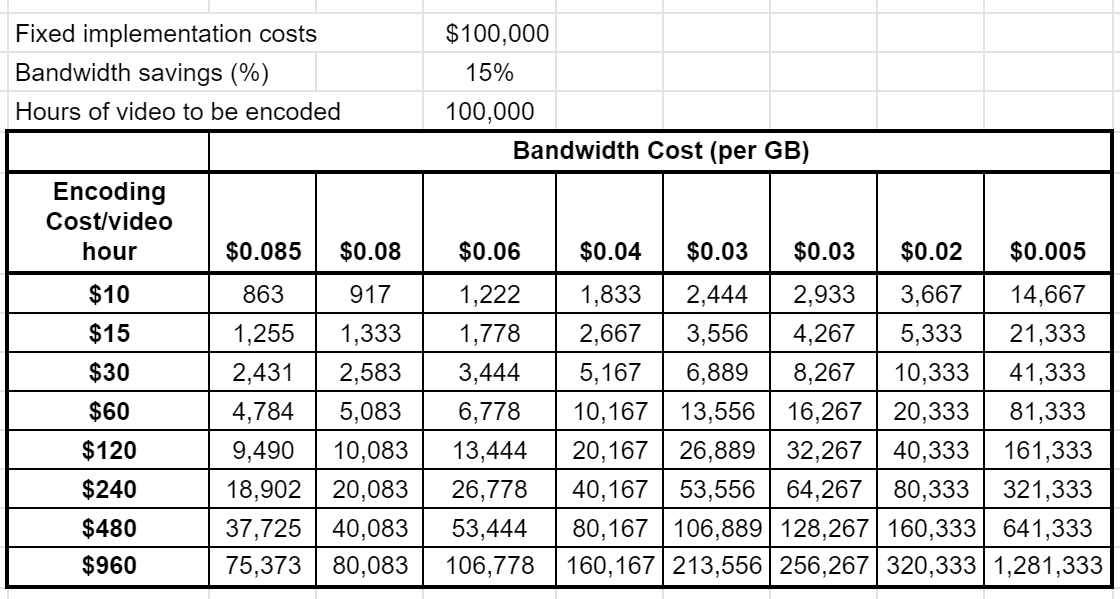

To help put numbers to these concepts I’ve created a shared Google Sheet that you can access here. You can’t change the numbers but you can download the spreadsheet to Excel and edit that. To drive the spreadsheet, you:

- Insert fixed implementation costs.

- Insert bandwidth savings percentage, or the savings the codec will deliver over the full encoding ladder (i.e., not BD-Rate). To learn how to compute this, click here.

- Insert the number of hours of video to be encoded with the new codec (to spread implementation costs over all videos).

- If desired, change encoding cost and bandwidth cost per GB.

The result in the table is the number of hours of viewing to break even on encoding and allocation of fixed costs.
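For readers who prefer code to spreadsheets, the same kind of calculation can be sketched in Python. This is not a copy of the Google Sheet: the function names, the baseline bitrate input, and the example values for fixed cost, hours encoded, and savings percentage are all assumptions chosen for illustration. The encoding and CDN prices are figures quoted earlier in the article.

```python
def gb_per_hour(mbps: float) -> float:
    """Convert a stream bitrate in Mbps to GB delivered per viewing hour."""
    return mbps * 3600 / 8 / 1000


def break_even_viewing_hours(fixed_cost, hours_encoded, encode_cost_per_hour,
                             baseline_mbps, savings_fraction, cdn_price_per_gb):
    """Viewing hours needed per hour of encoded video to recoup its costs."""
    # Numerator: encoding cost plus this hour's share of the fixed implementation cost.
    cost_per_video_hour = encode_cost_per_hour + fixed_cost / hours_encoded
    # Denominator: dollars saved for each hour that a viewer watches.
    savings_per_viewing_hour = (
        gb_per_hour(baseline_mbps) * savings_fraction * cdn_price_per_gb
    )
    if savings_per_viewing_hour <= 0:
        raise ValueError("savings per viewing hour must be positive")
    return cost_per_video_hour / savings_per_viewing_hour


# Hypothetical inputs: $100,000 implementation cost spread over 10,000 encoded hours,
# AV1 at $1.728/minute ($103.68/hour), a 6 Mbps baseline, 25% ladder-wide savings,
# and the $0.0006/GB CDN price cited above.
hours = break_even_viewing_hours(
    fixed_cost=100_000, hours_encoded=10_000, encode_cost_per_hour=103.68,
    baseline_mbps=6.0, savings_fraction=0.25, cdn_price_per_gb=0.0006,
)
print(f"{hours:,.0f} viewing hours per encoded hour to break even")
```

Raising `hours_encoded` shrinks the fixed-cost share of the numerator, so the per-hour encoding cost dominates the result once the library is large.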

After running the numbers, two interesting conclusions become evident. First, implementation costs, while a scary number, get amortized over all videos that you encode to that format. So, if you’ll be encoding significant hours with the new codec, the breakeven is only minimally impacted. Second, while the hours of video to achieve breakeven are out of reach for most casual streamers, they’re certainly not for large OTT shops and many live linear services.

What are the Exceptions?

Over the years, different versions of this analysis have led me to conclude that only the largest content distributors adopt a new codec for bandwidth savings. Other producers typically only add a codec when it opens up new markets. HEVC now dominates the living room because of 4K and HDR, not because of bandwidth savings over H.264.

What’s this mean for the upcoming crop of codecs?

On the cost side, VVC was about 50% more complex than AV1 in my tests so an encoding cost of over $2.00/minute seems assured. On the savings side, VVC may never play in browsers and is 2-3 years away from appearing in mobile devices and the living room in relevant quantities.

I think 8K will follow 4K pretty naturally though there’s general agreement that 8K TVs won’t improve the actual viewing experience over 4K. Will VVC enable any new applications that drive adoption?

Will AV1 adoption in the living room negate the need for VVC? I have no idea, but if I’m a publisher I’m deferring any VVC deployment thoughts until 2023 or so.

AV1 plays in most browsers already but won’t be available at critical mass in the living room for at least a couple of years and mobile later than that. So your ability to spread encoding costs over multiple platforms will take a while. Clearly, only the highest volume producers should be considering AV1 at this point.

This leaves us with LCEVC, or Low Complexity Enhancement Video Coding, a.k.a. the V-Nova codec. LCEVC flips the script because it’s actually cheaper to encode than H.264, and because the streams should be backward compatible in most H.264 players. LCEVC also has a very publisher-friendly royalty policy that encourages adoption, so the lack of direct browser support won’t be a significant deployment issue.

LCEVC is also very lightweight on the decoding side and should play on most computers and mobile devices, and some STBs, OTT devices, and smart TVs without the need for hardware acceleration. The only thing LCEVC lacks at this point is a technology-confirming adoption from one of the behemoths.

Streaming Learning Center Where Streaming Professionals Learn to Excel

Thanks Jan, love your articles.

Regarding specifically LCEVC, I’ve been doing some research and reading articles and watching your videos about it, and I’m very interested in its gains on the lower complexity, higher quality, and lower bitrate fronts, something rarely (or never?) seen with new CODECS.

It seems too good to be true, but reading the white papers on it, I suppose it makes sense, at least from this layman’s interpretation. I was curious if you would be willing to delve into the fundamentals of this CODEC, and explain how it can achieve all those improvements with seemingly few negatives. I’m fascinated by the completely different approach it takes by encoding at smaller resolution, and then upscaling. Why does this method achieve an overall better result than encoding at higher resolutions to begin with? Why aren’t more big players hopping on this bandwagon?

And then finally, I do question LCEVC’s claim of backward compatibility, however. Sure, you have the tiny base-encoded video that can be played back on any player, but won’t that look terrible because it will only be at 270p for example (1/4 of 1080p)? Can you really claim a 1080p stream played back at 270p is backwards compatible without a big asterisk?

I’ll post a similar comment on one of your Youtube videos just in case that will get better views. Your knowledge is too valuable to go unnoticed 😀

Ok thanks!

Hey Farfle:

Thanks for the kind words. On LCEVC, I’ve done the testing but I’m not an expert on how the codec works. V-Nova has put out lots of technical articles. I’m not sure why it’s not been adopted yet; my tests were favorable as were MPEG’s. Fingers crossed that this will change soon.

Good point on backwards compatibility, though if you think about it, it’s not that different from how ABR encoding ladders work. Are you saying that HLS should have an asterisk because at low bandwidth, you might get a low rez video? (I’m having a slow day today, but I’m thinking that 1/4 of 1080p is 960×540, which looks a whole lot better than 270p.)

Please don’t post your questions anywhere else – I just want to answer them once.

Cheers, thanks for posting, and have a great weekend.

Jan