So a potential client called and said, “We’ve reached encoding capacity and we’re about to buy another encoding station. Can you look at our presets and see if we can improve throughput and delay the purchase?” “Sure,” I responded. “Send me your encoding presets and I’ll have a look.”

Once the presets arrived, I noticed that the encoder was x264-based, and that the client's encoding presets all used the x264 Veryslow preset. I called and asked about this, and the client explained that they had tweaked the default presets a few months before to improve quality.

Tweaking presets like this isn’t uncommon, and there’s lots of support for making these adjustments. For example, in their recent blog post, More Efficient Mobile Encodes for Netflix Downloads, Netflix described deploying “more optimal encoder settings,” with a “larger motion search range” and “more exhaustive mode evaluation.” These types of adjustments are those you get with more advanced profiles, which improve quality at the cost of encoding time. The obvious questions are, how much quality and how much encoding time?

How Much Quality and How Much Cost?

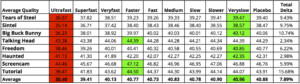

Fortunately for this client, I was in a great position to share data on both points, having completed the substantial research that went into my latest book, Encoding by the Numbers. For example, in Chapter 10, I analyze the quality impact of the various x264 presets, shown below in Table 1. Briefly, I tested eight 720p videos using identical parameters except for the preset, and measured the PSNR quality of all outputs.

As expected, the fastest preset delivered the lowest quality video (red is bad), while Veryslow proved highest on average (green is good). However, the total difference in quality from low to high was only 7.89%, and the difference between the default Medium preset and Veryslow preset is only .13 dB, or an unnoticeable .3%. So while Veryslow was best overall, it wasn’t that much better.

Interestingly, the three easiest videos to encode, a talking head, a Camtasia-based screencam, and a PowerPoint-based tutorial, actually peaked in quality using the Faster preset. If you encode lots of training videos, you may be able to improve quality and increase throughput and capacity by using the Faster preset.

Table 1. The impact of x264 preset on PSNR quality.

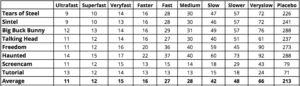

How much time difference between the presets? Table 2 shows the encoding time in seconds for the files encoded on an HP Z840 40-core workstation. For perspective, all files are 2 minutes long, again at 720p. As you can see, jumping from Medium to Veryslow more than doubles the encoding time.

Table 2. The impact of x264 preset on encoding time.

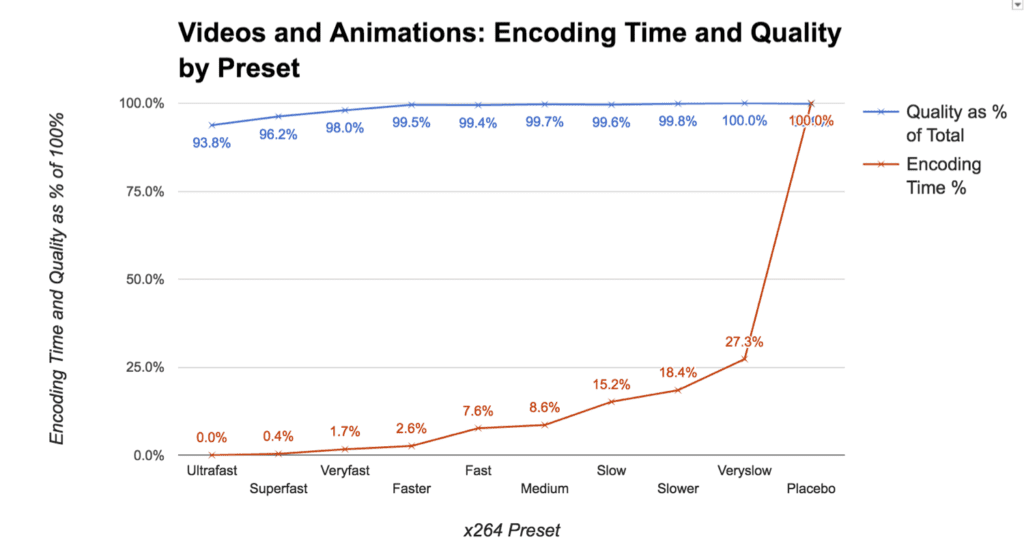

Figure 1 synthesizes this data, showing the percentage of total quality and encoding time for each preset. This makes it easy to see that using the Faster preset, which is what I recommended to the client, you harvest 99.5% of total available quality as measured by PSNR. More to the point, you drop encoding time from 66 seconds for the Veryslow preset to 16 for the Faster preset, improving your throughput by a factor of four. Total cost in quality? The difference between Veryslow (40.96 dB) and Faster (40.77 dB), or less than .5%.
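For readers who want to check the arithmetic, here is a minimal Python sketch that uses only the four numbers quoted above. The function names are my own for illustration, and expressing "quality retained" as one PSNR value divided by the other is an assumption about how the percentage is derived.

```python
# Figures quoted in the article: average PSNR in dB and encoding time in
# seconds for the 2-minute 720p test clips.
VERYSLOW = {"psnr_db": 40.96, "encode_secs": 66}
FASTER = {"psnr_db": 40.77, "encode_secs": 16}


def speedup(slow_secs, fast_secs):
    """How many times more encodes fit in the same wall-clock time."""
    return slow_secs / fast_secs


def quality_retained_pct(reference_psnr, candidate_psnr):
    """Candidate PSNR expressed as a percentage of the reference PSNR."""
    return candidate_psnr / reference_psnr * 100


def quality_loss_pct(reference_psnr, candidate_psnr):
    """PSNR given up by the candidate, as a percentage of the reference."""
    return 100 - quality_retained_pct(reference_psnr, candidate_psnr)


if __name__ == "__main__":
    gain = speedup(VERYSLOW["encode_secs"], FASTER["encode_secs"])
    kept = quality_retained_pct(VERYSLOW["psnr_db"], FASTER["psnr_db"])
    lost = quality_loss_pct(VERYSLOW["psnr_db"], FASTER["psnr_db"])
    print(f"Throughput gain: {gain:.1f}x")
    print(f"Quality retained: {kept:.1f}%")
    print(f"Quality given up: {lost:.2f}%")
```

The throughput gain works out to a little over 4x, and the quality given up stays under half a percent, which matches the figures in the text.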

Figure 1. Encoding time and quality by x264 preset.

For my client, not only did this enable her to avoid buying a new encoding station, it accelerated her day-to-day encoding and freed up plenty of spare capacity. The bottom line is that while it’s important to know which parameters deliver the absolute best quality, it’s also important to know how much better the quality actually is, and how much extra time/cost it takes to get there.

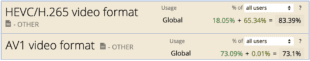

As with all things, time is money, and if the qualitative difference is truly negligible, it makes better economic sense to settle for lower quality at a dramatically reduced cost. This only gets more important when integrating VP9, HEVC, and ultimately AV1 into your workflows, since encoding times can easily triple or quadruple as compared to H.264.

As a final note, all of this analysis should be considered within the perspective of the quantity of video that you deliver. Coupled with other encoding gains, the improvements identified above allowed Netflix to drop the data rate of their x264-encoded video by 15% while maintaining identical quality to their standard encode. When a file is downloaded or streamed millions of times, these bandwidth savings far outweigh the additional encoding cost. For many other producers, especially those staring at a $25,000 CAPEX hit, taking an imperceptible drop in quality in exchange for greater capacity makes a whole lot more sense.
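To see where the balance tips between encoding cost and bandwidth savings, here is a rough Python sketch of the break-even view count. Only the 15% data rate reduction comes from the Netflix example above. The file size, delivery cost per GB, and extra encoding cost are hypothetical placeholders that you should replace with your own numbers.

```python
def bandwidth_saving(views, gb_per_view, cost_per_gb, bitrate_reduction):
    """Delivery cost saved by serving a smaller file `views` times."""
    return views * gb_per_view * cost_per_gb * bitrate_reduction


def break_even_views(extra_encode_cost, gb_per_view, cost_per_gb, bitrate_reduction):
    """Views needed before the bandwidth saving repays the slower encode."""
    saving_per_view = gb_per_view * cost_per_gb * bitrate_reduction
    return extra_encode_cost / saving_per_view


if __name__ == "__main__":
    # HYPOTHETICAL inputs for illustration only; not data from the article.
    gb_per_view = 1.0         # size of the delivered file in GB
    cost_per_gb = 0.02        # delivery cost in dollars per GB
    extra_encode_cost = 5.00  # added cost of the slower encode, per title
    reduction = 0.15          # the 15% data rate drop cited for Netflix

    needed = break_even_views(extra_encode_cost, gb_per_view, cost_per_gb, reduction)
    print(f"Break-even at roughly {needed:,.0f} views")
    for views in (100, 1_000_000):
        net = bandwidth_saving(views, gb_per_view, cost_per_gb, reduction) - extra_encode_cost
        print(f"{views:>9,} views: net ${net:,.2f}")
```

With inputs like these, a title viewed a few hundred times never repays the slower encode, while one viewed millions of times repays it many times over.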

Of course, from a business perspective, this entire exercise converted a potential $5,000 consulting gig into 30 minutes at my hourly rate and a book sale. As my 16-year-old daughter would say, “I’m down with that.” Just don’t tell my wife.

About the Book

To offset the revenue loss, please indulge my shameless plug and a few words about the book. What I tried to do in the book was to quantify all encoding decisions with quality metrics, and occasionally encoding time. No opinion, all data-driven recommendations. That’s why I called it Encoding by the Numbers. This approach allows readers to make informed decisions about their encoding parameters, and when it’s worth going for maximum quality and when it’s not.

For those interested in doing their own testing, the book also details how to create your own test files, and how to measure quality with the Moscow University Video Quality Measurement Tool and the SSIMplus Quality of Experience Monitor. That way, you can measure the qualitative and temporal aspects of all encoding decisions with your own test clips.
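If you don't have either of those tools, a rough first pass is possible with FFmpeg alone. The sketch below is not the workflow from the book. It assumes an `ffmpeg` build with libx264 on your path, uses FFmpeg's `psnr` filter in place of the tools named above, and picks a 3000 kbps target and `test_<preset>.mp4` output names purely as placeholders. It encodes one source clip at the same bitrate with each preset, times each encode, and builds the command that compares the result against the source.

```python
import subprocess
import time

# x264's named presets from fastest to slowest ("placebo" omitted here).
X264_PRESETS = [
    "ultrafast", "superfast", "veryfast", "faster", "fast",
    "medium", "slow", "slower", "veryslow",
]


def encode_command(source, preset, bitrate_kbps, output):
    """Build an ffmpeg command that varies only the x264 preset."""
    return [
        "ffmpeg", "-y", "-i", source,
        "-c:v", "libx264", "-preset", preset,
        "-b:v", f"{bitrate_kbps}k",
        "-an",  # drop audio so only video encoding is timed
        output,
    ]


def psnr_command(encoded, source):
    """Build an ffmpeg command that logs PSNR of `encoded` against `source`."""
    return ["ffmpeg", "-i", encoded, "-i", source, "-lavfi", "psnr", "-f", "null", "-"]


def timed_encode(source, preset, bitrate_kbps=3000):
    """Run one encode and return (output_path, seconds elapsed)."""
    output = f"test_{preset}.mp4"  # placeholder naming scheme
    start = time.perf_counter()
    subprocess.run(encode_command(source, preset, bitrate_kbps, output),
                   check=True, capture_output=True)
    return output, time.perf_counter() - start


def run_all(source):
    """Encode `source` with every preset; PSNR appears in ffmpeg's log output."""
    for preset in X264_PRESETS:
        output, seconds = timed_encode(source, preset)
        print(f"{preset}: {seconds:.1f} s")
        subprocess.run(psnr_command(output, source), check=True)
```

Calling `run_all("your_clip.mp4")` prints the encoding time for each preset, followed by FFmpeg's own PSNR summary for that encode. Encoding times depend heavily on the machine, so compare presets only against each other on the same system.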

The book costs $49.94 on Amazon, and is available in PDF format for $39.99 from a service called SendOwl.

Streaming Learning Center Where Streaming Professionals Learn to Excel
