Welcome to this introduction to live streaming, which details what live streaming is, how it works, and the technology alternatives for implementing it, from third-party service providers to DIY (do it yourself).

What is Live Streaming?

Live streaming is a one-to-many technology that:

- Inputs a single video stream from a camera or video mixer

- Transcodes that stream into multiple streams to deliver to viewers watching on different devices over different connections

- Packages the streams for delivery via an adaptive bitrate (ABR) technology

- Delivers the streams to the viewers

- Enables playback on their devices

Typical applications for live streaming include sports, performing arts, religious services, governmental meetings, and many other sources of content. Most live events that you can watch over the internet in real time, while the event is occurring, are produced via live streaming.

Live streaming is different from conferencing, where multiple participants send relatively low-quality video streams to each other. It’s also different from webinars, which typically involve a single, relatively low-quality video stream that is not delivered via ABR technologies.

How Live Streaming Works

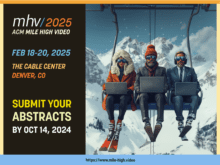

Live streaming involves the five steps shown in Figure 1. First is capture, where the feed from the camera or video mixer is encoded and uploaded to a service in the cloud. There the video is transcoded into multiple streams to deliver to different viewing platforms connecting at different speeds. Next, the video is packaged to meet the precise requirements of the adaptive bitrate formats deployed.

Figure 1. The five processes involved in live streaming.

At this point, the video is ready for delivery, so it’s transferred to a content delivery network, or CDN, for delivery to the individual viewers. To watch the video, each viewing platform needs a video player that runs on that platform.

Let’s take a quick look at the individual steps.

Capture

During a live event, a single camera or multiple cameras capture the action. With single-camera productions, capture involves connecting the camera to a capture device that can input the video, compress the video for more efficient transfer to the cloud, and then upload the stream to a service in the cloud for further processing. You see a simple capture scenario in Figure 2, where a single camera is attached to the LiveU Solo, which encodes the stream and delivers it to a cloud service.

Figure 2. Capturing a single camera shoot with the LiveU Solo.

When multiple cameras are involved, the video is processed through a video mixer that switches between the cameras and provides functions like titles, graphics, instant replay and others. Some video mixers can compress the output and transmit the streams to a cloud service for further processing. Others can’t, so again you’ll need a separate device to capture the video, compress it, and deliver it to a cloud service.

There are many, many forms of capture devices, from $30 dongles that connect to a computer USB port to $50,000 boxes that can process multiple inputs simultaneously. There are also many types of video mixers, from free open-source software programs to mixing appliances that cost well into six figures. From our perspective, all we care about is that the device or software program can encode the video and deliver it to the cloud.

Transcoding

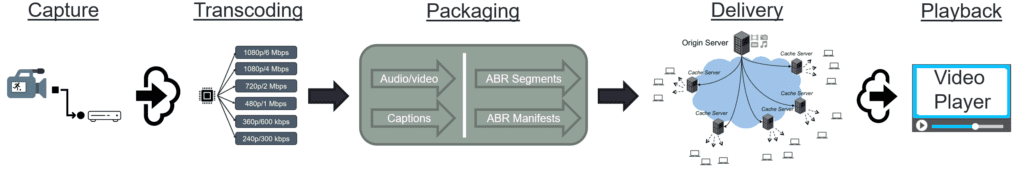

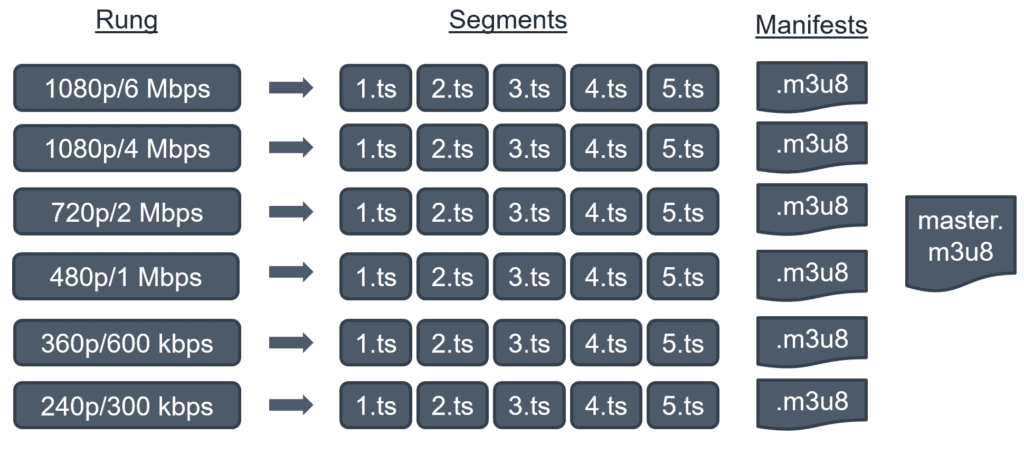

All live streaming products and services deliver to their viewers using adaptive bitrate (ABR) technologies like Apple’s HTTP Live Streaming (HLS) or the Dynamic Adaptive Streaming over HTTP (DASH) standard. All ABR technologies use what’s called an encoding ladder to deliver to different viewing platforms connecting at different speeds. During live streaming, the stream delivered by the capture device to the cloud is transcoded into multiple streams as shown in Figure 3.

If you’re not familiar with the technical lingo, 1080p means a full-resolution HD stream, while 720p, 480p, 360p, and 240p are smaller, lower-resolution streams. Mbps is megabits per second and kbps is kilobits per second; higher values deliver better quality. Accordingly, the 1080p/6 Mbps stream is the highest quality, while the 240p/300 kbps stream is the lowest.
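To make the units concrete, here’s a minimal Python sketch of an encoding ladder like the one in Figure 3, plus the arithmetic for how much data one segment at each rung contains. Only the 1080p/6 Mbps and 240p/300 kbps rungs come from the figure description; the widths, the middle bitrates, and the 6-second segment length are illustrative assumptions.

```python
# Encoding ladder as (label, width, height, video bitrate in kbps).
# Assumption: only the 1080p/6 Mbps and 240p/300 kbps rungs come from Figure 3;
# the widths and the middle bitrates are illustrative placeholders.
LADDER = [
    ("1080p", 1920, 1080, 6000),
    ("720p", 1280, 720, 3000),
    ("480p", 854, 480, 1500),
    ("360p", 640, 360, 800),
    ("240p", 426, 240, 300),
]


def kbps_to_mbps(kbps: float) -> float:
    """Streaming bitrates use decimal prefixes: 1 Mbps = 1,000 kbps."""
    return kbps / 1000


def segment_megabytes(kbps: float, seconds: float) -> float:
    """Approximate size of one segment: bits per second x seconds, / 8 bits per byte."""
    return kbps * 1000 * seconds / 8 / 1_000_000


if __name__ == "__main__":
    for label, width, height, kbps in LADDER:
        print(f"{label} ({width}x{height}): {kbps_to_mbps(kbps):g} Mbps, "
              f"about {segment_megabytes(kbps, 6):.2f} MB per 6-second segment")
```

At 6 Mbps, each 6-second segment carries about 4.5 MB, versus roughly 0.23 MB at 300 kbps, which is why viewers on slow connections need the lower rungs.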

In essence, ABR technologies reward viewers watching on powerful platforms via fast connections with top quality video. In Figure 3, the top two streams are delivered to smart TVs and other devices connected via cable modems or other broadband connections. Those watching on less capable devices via slower connections still get to watch, but the quality is degraded.

This scheme also allows the ABR technology to change streams when bandwidth drops. Even if you’re watching via a cable connection, another viewer in your home or office could start playing another video file or start other activities that reduce your bandwidth. Rather than simply stopping playback when the top-quality file can’t be retrieved, all ABR technologies drop to a lower-quality file that can be retrieved and continue playback. You might have noticed sudden quality drops during playback of Netflix or Amazon Prime videos, which occur when the ABR technology drops to a lower quality file.
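One simple way to express that switching decision is a throughput-based rule: pick the highest rung whose bitrate fits within the bandwidth the player is currently measuring, and fall back to the bottom rung rather than stopping. This is a simplified illustration, not how any particular player works; the ladder values and the 80% safety margin are assumptions.

```python
# Hypothetical ladder bitrates in kbps, top rung first. Only 6000 and 300 come from Figure 3.
LADDER_KBPS = [6000, 3000, 1500, 800, 300]


def choose_rung(measured_kbps: float, ladder=LADDER_KBPS, safety: float = 0.8) -> int:
    """Return the bitrate of the highest rung that fits within `safety` x the measured
    throughput. If nothing fits, return the lowest rung so playback continues."""
    budget = measured_kbps * safety
    for bitrate in sorted(ladder, reverse=True):
        if bitrate <= budget:
            return bitrate
    return min(ladder)


if __name__ == "__main__":
    # A viewer whose available bandwidth drops during the stream.
    for throughput in (10_000, 5_000, 2_000, 200):
        print(throughput, "kbps available ->", choose_rung(throughput), "kbps rung")
```

The safety margin leaves room for bandwidth fluctuations, so the player isn’t forced to switch down again on the very next segment.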

Figure 3. An encoding ladder for ABR distribution.

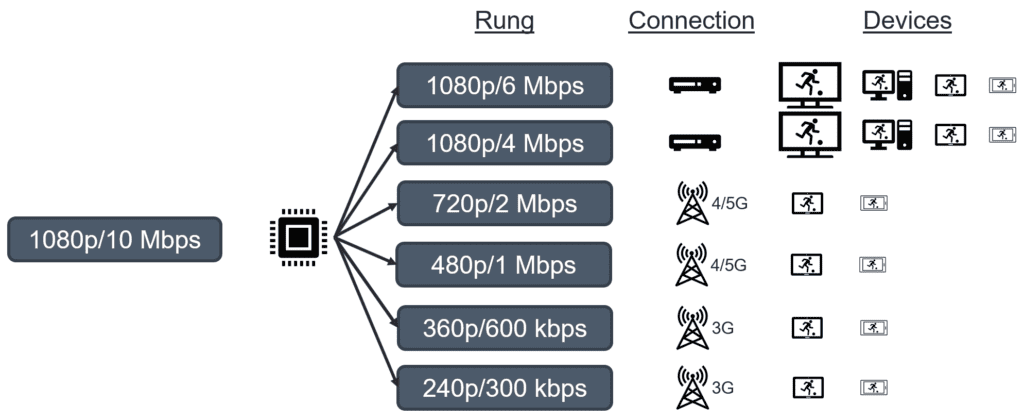

After the individual files are created, they must be packaged for ABR distribution.

Packaging

During the packaging phase, each rung is divided into multiple smaller segments, usually 2 to 10 seconds long. In addition, manifest files are created that tell the player where to retrieve those segments. The master manifest shown in Figure 4 is the first file the player retrieves; it lists each rung’s resolution, bitrate, and the codec used to compress it, which lets the player choose the optimal rung. The player then retrieves the manifest file for that rung and starts playback.
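For HLS, both kinds of manifest are plain-text .m3u8 playlists (DASH uses an XML manifest instead). The sketch below generates a master playlist and a live media playlist for one rung. The folder layout, segment names, and codec strings are hypothetical, and a production packager writes many more tags than these.

```python
import math
from dataclasses import dataclass


@dataclass
class Rung:
    name: str            # hypothetical folder name for the rung, e.g. "720p"
    width: int
    height: int
    bandwidth_bps: int   # HLS BANDWIDTH is the peak bitrate in bits per second
    codecs: str          # RFC 6381 codec string, e.g. "avc1.640028,mp4a.40.2"


def master_playlist(rungs: list[Rung]) -> str:
    """Master manifest: one EXT-X-STREAM-INF entry per rung, followed by the URI
    of that rung's media playlist. The player reads this first to pick a rung."""
    lines = ["#EXTM3U"]
    for r in rungs:
        lines.append(f"#EXT-X-STREAM-INF:BANDWIDTH={r.bandwidth_bps},"
                     f'RESOLUTION={r.width}x{r.height},CODECS="{r.codecs}"')
        lines.append(f"{r.name}/index.m3u8")
    return "\n".join(lines) + "\n"


def media_playlist(first_seq: int, durations: list[float]) -> str:
    """Live media playlist for one rung: a sliding window of recent segments.
    No #EXT-X-ENDLIST tag, so the player keeps reloading it during the event."""
    # TARGETDURATION must be an integer no smaller than any segment duration
    # (rounded to the nearest integer); ceil() of the longest segment satisfies that.
    target = math.ceil(max(durations))
    lines = ["#EXTM3U", "#EXT-X-VERSION:3",
             f"#EXT-X-TARGETDURATION:{target}",
             f"#EXT-X-MEDIA-SEQUENCE:{first_seq}"]
    for i, duration in enumerate(durations):
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(f"segment_{first_seq + i}.ts")   # hypothetical naming scheme
    return "\n".join(lines) + "\n"
```

As new segments are produced during the event, the packager drops the oldest entries and increments EXT-X-MEDIA-SEQUENCE, so the player always sees a short window of the most recent segments.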

Figure 4. Packaging for ABR delivery.

The smaller segments allow the ABR technology to quickly change rungs when bandwidth conditions worsen or improve. When the player notices that its buffer is running low, a sign that it can’t retrieve segments from the current rung fast enough, it refers back to the master manifest for a lower-quality rung and grabs the next segment from that rung to continue playback.
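A buffer-based version of that decision might look like the following sketch, which steps down one rung when the buffer drops below a low-water mark and back up when it is comfortably full. The player would then look up the chosen rung’s manifest in the master manifest and request its next segment. The thresholds and ladder are illustrative assumptions; production players typically use more elaborate rules.

```python
# Hypothetical ladder bitrates in kbps; index 0 is the top rung.
LADDER_KBPS = [6000, 3000, 1500, 800, 300]


def next_rung(current: int, buffer_s: float,
              low_s: float = 5.0, high_s: float = 20.0) -> int:
    """Pick the ladder index for the next segment from the buffer level (seconds).
    The 5 s / 20 s thresholds are illustrative assumptions."""
    if buffer_s < low_s and current < len(LADDER_KBPS) - 1:
        return current + 1   # buffer draining: step down one rung
    if buffer_s > high_s and current > 0:
        return current - 1   # plenty buffered: try one rung higher
    return current           # otherwise stay on the current rung


if __name__ == "__main__":
    rung = 1
    for buffered in (25, 12, 4, 3, 8, 22):
        rung = next_rung(rung, buffered)
        print(f"buffer {buffered:>2} s -> {LADDER_KBPS[rung]} kbps")
```

Moving one rung at a time avoids jarring jumps from the top of the ladder straight to the bottom.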

If your video has multiple audio renditions or captions, these are also packaged during this phase. If you’re protecting your content with digital rights management (DRM), encryption occurs as the final stage of packaging.

I should mention here that many very large and established broadcasting companies use on-premises systems for transcoding and packaging and then deliver the finished streams to the cloud. This scheme has two disadvantages: first, on-premises encoders/packagers are extremely expensive; second, the outbound bandwidth required to deliver the packaged output to the cloud is very costly. Unless you’re a very big-budget 24/7 operation, sending a single stream into the cloud and transcoding and packaging there is almost always the most efficient and economical workflow.

Delivery via Content Delivery Network

Once the rungs are created and packaged, they are delivered to a content delivery network, or CDN, which is a specialized service designed to efficiently deliver high bandwidth video files. Without using a CDN, a typical website would likely only be able to deliver a few dozen simultaneous streams before exhausting its capacity and degrading delivery and quality of experience to all viewers.
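The back-of-the-envelope arithmetic behind that limit is simple: divide the server’s usable upstream bandwidth by the bitrate each viewer pulls. The 100 Mbps uplink and 80% headroom below are assumptions chosen for illustration.

```python
def max_concurrent_streams(uplink_mbps: float, stream_mbps: float,
                           headroom: float = 0.8) -> int:
    """Rough ceiling on simultaneous viewers one server can feed: usable uplink
    divided by the per-viewer bitrate. `headroom` reserves capacity for protocol
    overhead and other site traffic (an assumed 80% here)."""
    return int(uplink_mbps * headroom // stream_mbps)


if __name__ == "__main__":
    # Hypothetical web server with a 100 Mbps uplink.
    for label, mbps in (("1080p", 6.0), ("720p", 3.0)):
        print(label, max_concurrent_streams(100, mbps), "viewers")
```

Under those assumptions the server runs out of capacity at 13 viewers of the 6 Mbps rung or 26 viewers of a 3 Mbps rung; a CDN removes that ceiling by serving viewers from its own servers.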

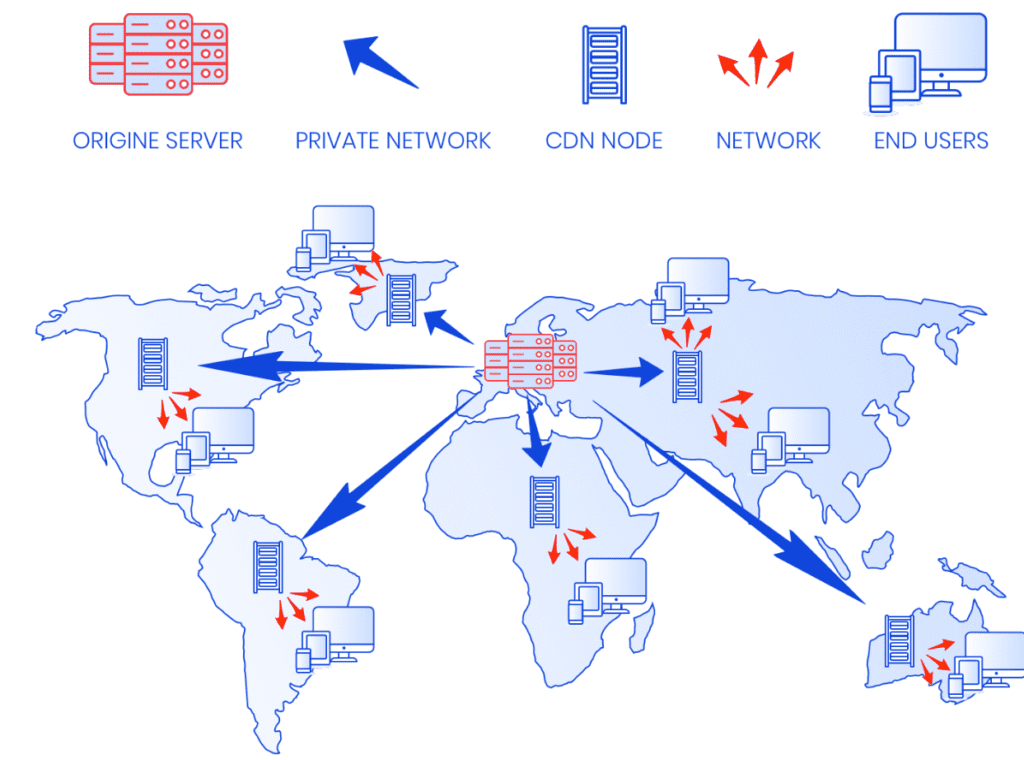

Figure 5 shows a typical CDN architecture and operation for a CDN based in Europe. Essentially, a CDN is a number of servers connected via a very fast private network. In a live streaming scenario, the private network delivers the live streams to regional servers for delivery to viewers in that region.

Imagine a soccer match in Europe delivered without a CDN. If a viewer in Australia clicked to watch the match, the video segments would be delivered over the regular internet, which involves lots of “hops” between disparate networks. Each viewer in Australia would retrieve a unique set of video segments.

With a CDN, the segments would be delivered to the Australian server via the private network, which is more efficient, and the same segments would be sent to all viewers watching in Australia. Using the private network to serve multiple local viewers around the world is faster, cheaper, and improves the experience for all viewers. For this reason, almost all live streams are delivered via CDNs.
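The savings come down to how many requests reach the origin. This toy Python model, which ignores cache expiration, manifest refreshes, and the private network itself, counts origin requests for one region with and without a single edge server. The class and function names are hypothetical.

```python
class EdgeServer:
    """Toy regional CDN edge: the first request for a segment is fetched from the
    origin; later requests for the same segment are served from the local cache.
    Real CDNs also expire cached objects and handle live manifests specially."""

    def __init__(self, fetch_from_origin):
        self.fetch_from_origin = fetch_from_origin
        self.cache = {}
        self.origin_requests = 0

    def get(self, segment_name: str):
        if segment_name not in self.cache:
            self.origin_requests += 1
            self.cache[segment_name] = self.fetch_from_origin(segment_name)
        return self.cache[segment_name]


def compare_origin_load(viewers: int, segments: int) -> tuple[int, int]:
    """Origin requests for one region, without a CDN and with one edge server."""
    edge = EdgeServer(lambda name: f"<bytes of {name}>")
    for _ in range(viewers):
        for seq in range(segments):
            edge.get(f"segment_{seq}.ts")
    without_cdn = viewers * segments   # every viewer fetches every segment from the origin
    return without_cdn, edge.origin_requests


if __name__ == "__main__":
    print(compare_origin_load(viewers=1000, segments=10))   # (10000, 10)
```

With 1,000 Australian viewers watching 10 segments, the origin handles 10,000 requests without a CDN but only 10 with one, since the edge fetches each segment once and serves the copies locally.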

Figure 5. A content delivery network architecture (from http://bit.ly/cdn_arch).

Player

Once delivered, in order for your video to play on any device, you have to supply a player to retrieve the video, decode the compressed segments, and pass them along to your graphics card for playback. The player also handles the ABR playback logic with all the stream switching described above and provides an interface for displaying captions and alternative language audio streams. If you’re protecting your content with DRM, the player has to communicate with the DRM servers to obtain the necessary decryption key to play the video.

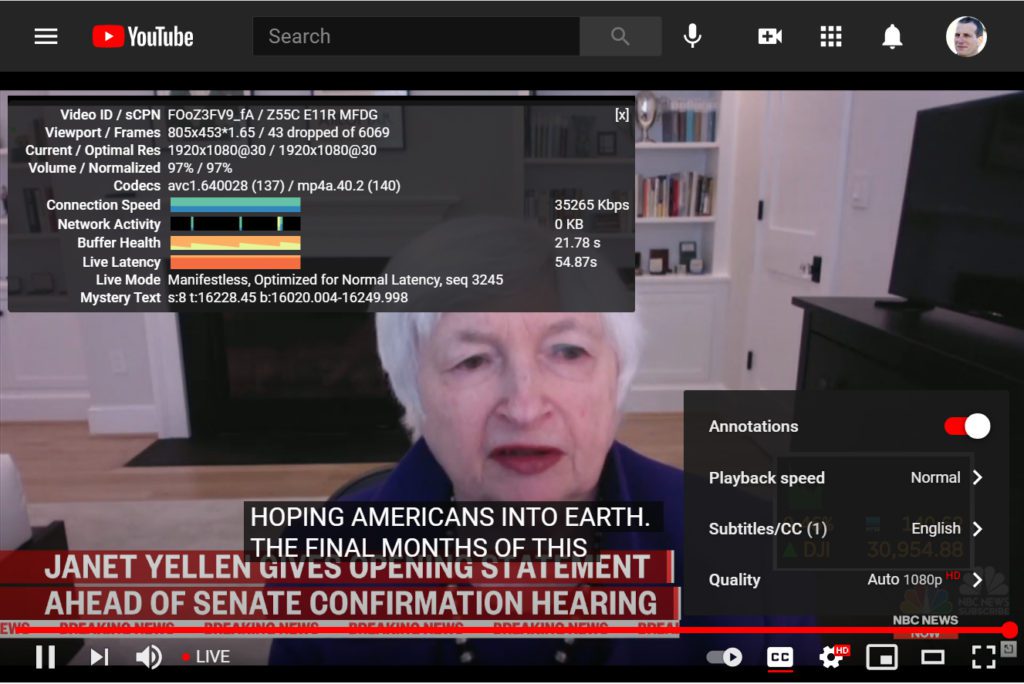

Figure 6. YouTube’s highly featured player includes closed caption support, adjustments to quality and playback speed, and the always illuminating Stats for Nerds.

The player also determines the features that you support. For example, if you want viewers to be able to choose a specific rung to watch or to speed up or slow down video playback, you have to design those features into your player. If you want to match the feature set provided by YouTube (Figure 6), you’ve got a lot of work in front of you.

Other Technologies

Note that the five functions shown (capture, transcoding, packaging, delivery, and player) are the bare-bones requirements for any live streaming ecosystem. You’ll probably want to convert the live feed to on-demand files for later viewing; this means you need a media asset manager to handle the conversion and make the video available to viewers.

You’ll want playback analytics that tell you how many viewers watched and for how long. This takes an analytics package. You may want to identify problems that your viewers experienced and which rungs of the ladders they watched. This requires a quality of experience (QoE) monitor and more analytics. You may want to track the performance of your delivery ecosystem, essentially how the CDNs that you deployed performed during the broadcast. This requires quality of service (QoS) monitoring and even more analytics.
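As a taste of what the analytics layer computes, here is a sketch that turns player beacons into a viewer count, average watch time, and a rebuffering ratio (time spent stalled as a share of session time), a common QoE metric. The beacon format is a hypothetical simplification; commercial analytics and QoE products define their own schemas and metrics.

```python
from collections import defaultdict

# Hypothetical player beacon format: (session_id, state, seconds), where state is
# "playing" (video on screen) or "stalled" (rebuffering while waiting on the network).


def summarize(events):
    playing = defaultdict(float)
    stalled = defaultdict(float)
    for session, state, seconds in events:
        if state == "playing":
            playing[session] += seconds
        elif state == "stalled":
            stalled[session] += seconds
    sessions = set(playing) | set(stalled)
    play_total = sum(playing.values())
    stall_total = sum(stalled.values())
    watched = play_total + stall_total
    return {
        "viewers": len(sessions),
        # Average minutes of video actually on screen per viewer.
        "avg_watch_minutes": play_total / 60 / len(sessions) if sessions else 0.0,
        # Rebuffering ratio: share of total session time spent stalled.
        "rebuffer_ratio": stall_total / watched if watched else 0.0,
    }
```

Tracking which rung each session played, or which CDN served it, would mean adding those fields to the beacon and grouping on them in the same way.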

I mention these to highlight just how complex and expensive developing your own fully-featured live streaming ecosystem would be. Most newbie live event producers are much better off choosing one of the more turnkey approaches discussed next.

Technology Alternatives

OK, now you know the basics required. How do you get them? Let’s go through the various alternatives, which include social media platforms, live streaming service providers, best-of-breed technology selection, and DIY (do it all yourself).

Social Media

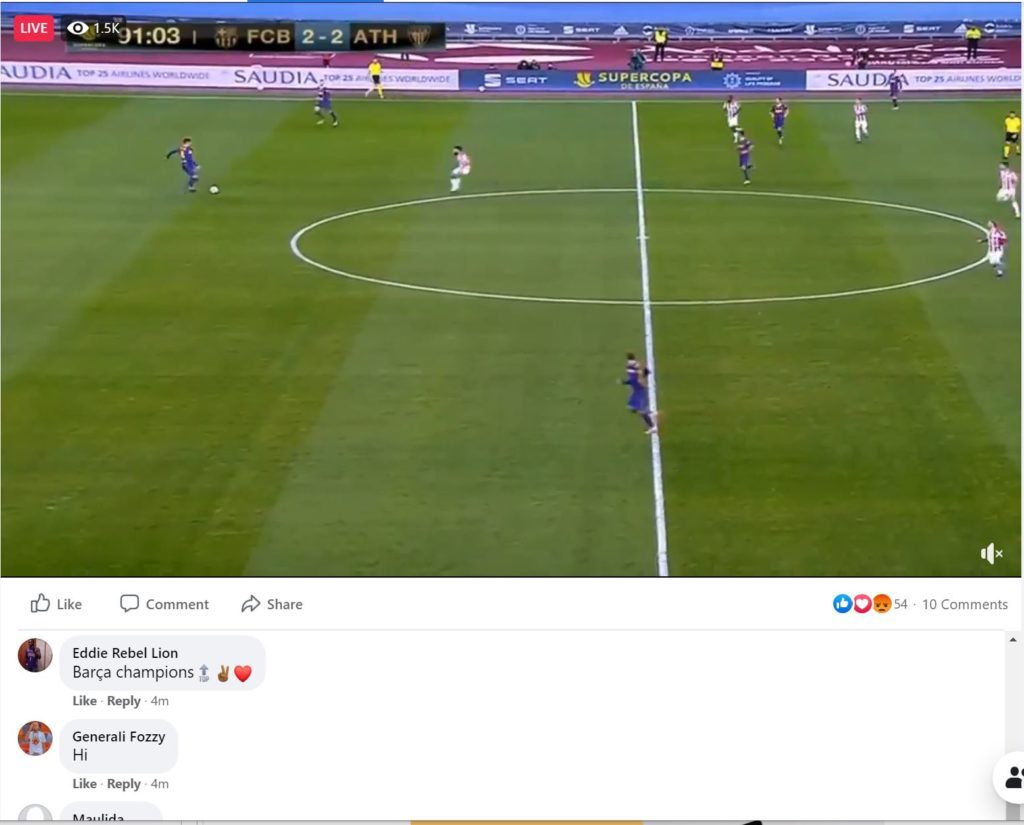

Social media platforms like YouTube and Facebook provide all the basic infrastructure described above at no charge to users, a definite plus. They also provide very engaging players that allow viewers to comment, like, and otherwise interact with the producers and other viewers.

Figure 7. Facebook gets your live videos in front of your most ardent viewers.

What they don’t provide is technical support, so if your broadcast is mission-critical, you’re in big trouble if the stream goes down. You also can’t present the stream with your own branding or control what types of videos surround yours, either live or later when it’s available on demand.

For this reason, rather than solely streaming via social media channels, many companies choose another alternative to live streaming that allows them to also send the live stream to social media platforms for viewing there. This form of syndication is almost ubiquitous in the next category of providers called live streaming service providers.

Live Streaming Service Providers

Live streaming service providers offer all the basic functions detailed above, plus much, much more. As I wrote in the TechRadar article Live Streaming Comparison Guide, service providers targeting different types of customers offer features like scoreboards (for high school sports), donations (for houses of worship), pay-per-view (for those seeking to monetize their live events), single sign-on and town hall functions (for internal enterprise broadcasts), recommendation engines (for marketers), advertising services, digital rights management, and 24/7 network operation centers (for regional or national broadcasters).

I list a number of service providers in the TechRadar article, including DaCast, Streamshark, Vimeo, Wowza Streaming Cloud, StreamingChurch.tv, SermonCast, Sunday Streams, ChurchStreaming.tv, Worship Channel, Brightcove, JWPlayer, Kaltura, IBM Video Streaming, Microsoft Stream, MediaPlatform, Panopto, Qumu, Vidizmo, iStreamPlanet, Akamai, and Limelight.

These types of service providers are ideal for producers who would rather leverage existing technology platforms than try to build their own. One downside of this approach is design flexibility; if you need a custom application or workflow for your live streams, you may not be able to achieve it through a service. Another downside of this approach is cost; if you have the technical resources and scale, you may be able to assemble a best of breed solution using technologies and services available from a number of companies. That’s the next category.

Best of Breed Integrated Solution

If a live streaming service provider doesn’t work for you, your next option is to assemble a best-of-breed solution from third-party developers and service providers. This allows you to choose the technologies that you want to use, though in some cases you’ll need to buy/lease, install, and operate them and in all instances you’ll have to integrate them.

Transcoding/Packaging

You can obtain transcoding and packaging services in multiple ways. Some service providers accept your live stream, transcode and package it, and deliver the files to the CDN of your choice. Vendors here include companies like Bitmovin, Mux, and StreamGuys.

Alternatively, you can buy or lease streaming servers that transcode and package your live streams. Companies here include Ant Media (Ant Media Server), Infrared5 (Red5 Pro), Softvelum (Nimble Streamer), and Wowza (Wowza Streaming Engine).

There are also cloud products that you can deploy for transcoding, including ATEME Titan Live, AWS Elemental MediaLive, and Harmonic VOS360. Both the ATEME and Harmonic products can also package for ABR delivery, while AWS Elemental MediaPackage performs a similar function for MediaLive.

Delivery

Content delivery networks also fall into two categories: standalone CDNs like Akamai, Limelight, and Fastly, and CDNs available as a component of a cloud platform, like Amazon CloudFront and the CDN service in Microsoft Azure, Microsoft’s cloud platform. You can read more about what to look for in a CDN in my article 6 Best CDNs for Live Streaming.

The availability of CDN services as a component of a greater cloud platform brings up two important points. First, most media companies will need a cloud provider for storage, and most cloud platforms offer a full range of media services, including transcoding, CDN services, and even a video player. Similarly, most larger CDNs also offer a range of media services, including storage, transcoding/packaging, and a video player. In both cases, choosing one or more services from the same vendor will likely simplify the integration and perhaps reduce operating costs.

In this regard, if you’re hosting on the AWS platform, it makes sense to consider AWS Elemental products for encoding and packaging and Amazon CloudFront as your CDN. If you choose Akamai as your CDN, you should consider Akamai for all live streaming-related services.

Player

Players are also available from two basic sources: open-source players like dash.js, MediaElement.js, Plyr, Shaka Player, and Video.js, and commercially available players from companies like Bitmovin, Flowplayer, JWPlayer, and THEOPlayer.

Do It Yourself (DIY)

Most of the top-of-the-pyramid live streaming producers like Facebook, Netflix, and YouTube have developed their own live streaming ecosystems, from transcoding to delivery and player, in many cases using the same building blocks used by third-party service providers. For example, the open-source encoding tool FFmpeg is used in virtually all transcoding facilities, typically paired with an open-source packager like Bento4 or MP4Box. There are also for-fee packagers that offer more features and better support, like Unified Streaming’s Unified Origin.
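To show how these open-source building blocks fit together, here’s a Python sketch that assembles a single FFmpeg command to transcode one live input into a five-rung ladder and write HLS segments plus a master playlist. Note that it substitutes FFmpeg’s built-in HLS muxer for the separate packager (Bento4, MP4Box, or Unified Origin) described above, to keep everything in one tool. It assumes an FFmpeg build with libx264; the ingest URL, ladder values, 128 kbps audio, and 6-second segments are all illustrative. The function only builds the argument list; passing it to subprocess.run would start the encode.

```python
import shlex

# Hypothetical ladder: (output height, video kbps). Only 1080p/6000 and 240p/300
# come from Figure 3; the rest are placeholders.
LADDER = [(1080, 6000), (720, 3000), (480, 1500), (360, 800), (240, 300)]


def ffmpeg_hls_args(input_url: str, ladder=LADDER, segment_s: int = 6) -> list[str]:
    """Build (but do not run) one FFmpeg command that transcodes a single live input
    into every rung and packages the result as HLS with a master playlist."""
    n = len(ladder)
    # Split the decoded video into n copies and scale each one; -2 keeps the
    # aspect ratio while forcing an even width, which libx264 needs.
    split = f"[0:v]split={n}" + "".join(f"[v{i}]" for i in range(n))
    scales = ";".join(f"[v{i}]scale=-2:{h}[v{i}out]" for i, (h, _) in enumerate(ladder))
    args = ["ffmpeg", "-i", input_url, "-filter_complex", f"{split};{scales}"]
    for i, (_, kbps) in enumerate(ladder):
        args += ["-map", f"[v{i}out]", f"-c:v:{i}", "libx264",
                 f"-b:v:{i}", f"{kbps}k", f"-maxrate:v:{i}", f"{kbps}k",
                 f"-bufsize:v:{i}", f"{2 * kbps}k"]
        # Each rung gets its own copy of the first audio track.
        args += ["-map", "0:a:0", f"-c:a:{i}", "aac", f"-b:a:{i}", "128k"]
    # Keyframes at every segment boundary so all rungs stay aligned.
    args += ["-force_key_frames", f"expr:gte(t,n_forced*{segment_s})"]
    var_map = " ".join(f"v:{i},a:{i}" for i in range(n))
    args += ["-f", "hls", "-hls_time", str(segment_s), "-hls_list_size", "10",
             "-hls_flags", "delete_segments", "-master_pl_name", "master.m3u8",
             "-var_stream_map", var_map,
             "-hls_segment_filename", "stream_%v_%05d.ts", "stream_%v.m3u8"]
    return args


if __name__ == "__main__":
    print(shlex.join(ffmpeg_hls_args("rtmp://example.invalid/live/streamkey")))
```

Forcing keyframes at each segment boundary keeps the segments of every rung aligned, which is what lets the player switch rungs cleanly at any segment boundary.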

Building your own CDN is beyond the scope of this paper, but if you Google “how to build your own CDN” you’ll be rewarded with several hundred resources. Finally, building your own player would typically involve one of the open-source players discussed above.

As stated earlier, most newbie live streamers are best served by streaming via a social media site or live streaming service provider. Those working in established companies should also be aware of the other technological alternatives.

Streaming Learning Center Where Streaming Professionals Learn to Excel