The mandate for streaming producers hasn’t changed since we delivered RealVideo streams targeted at 28.8 modems; that is, we must produce the absolute best quality video at the lowest possible bandwidth. With cost control top of mind for many streaming producers, let’s explore five codec-related options to cut bandwidth costs while maintaining quality.

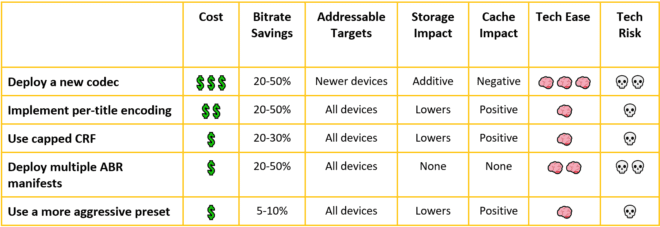

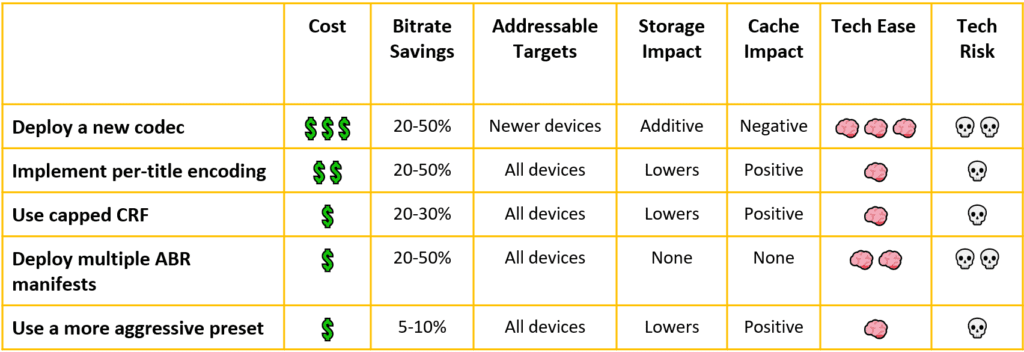

For each, I’ll consider the factors summarized in the table above and below: cost, potential bitrate savings, the addressable targets, the impact on storage and caching, the ease of implementation, and technology risk. Some comments on these factors:

The rating system is necessarily subjective, and reasonable minds can differ. If you strongly disagree with a rating, send me a note at janozer@gmail.com or leave a comment on the article or one of the social media posts related to it.

Assessing the potential bitrate savings is challenging. If you’re distributing all streams to the top of your encoding ladder, changing from H.264 to AV1 should drop your bitrate costs by 50%. If you’re primarily delivering to the middle of the ladder, your bandwidth savings will be minimal, though QoE should improve. I’m assuming the former in these estimates.
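To see why your position on the ladder matters so much, here’s a minimal sketch. It assumes a hypothetical four-rung H.264 ladder, a simplistic player that always picks the highest rung that fits its throughput, and a new codec that matches H.264 quality at half the bitrate on every rung. The ladder values, viewer throughputs, and function names are all illustrative, not measurements.

```python
def pick_rung(ladder_kbps, throughput_kbps):
    """Highest rung that fits the viewer's throughput (lowest rung as fallback)."""
    fitting = [r for r in ladder_kbps if r <= throughput_kbps]
    return max(fitting) if fitting else min(ladder_kbps)


def average_delivered_kbps(ladder_kbps, viewer_throughputs_kbps):
    """Average bitrate actually delivered across a set of viewers."""
    picks = [pick_rung(ladder_kbps, t) for t in viewer_throughputs_kbps]
    return sum(picks) / len(picks)


def savings(old_ladder, new_ladder, viewers):
    """Fractional bandwidth savings from switching ladders for these viewers."""
    old = average_delivered_kbps(old_ladder, viewers)
    new = average_delivered_kbps(new_ladder, viewers)
    return 1 - new / old


# Hypothetical ladders: the new codec delivers each quality level at half the bitrate.
H264_LADDER = [1000, 2000, 4000, 8000]
NEW_CODEC_LADDER = [r // 2 for r in H264_LADDER]

# Hypothetical audiences, described by available throughput in kbps.
TOP_HEAVY_VIEWERS = [20000, 25000, 50000]   # everyone can pull the top rung
MID_LADDER_VIEWERS = [1200, 2500, 4500]     # everyone is throughput-limited

print(f"top-heavy savings:  {savings(H264_LADDER, NEW_CODEC_LADDER, TOP_HEAVY_VIEWERS):.0%}")
print(f"mid-ladder savings: {savings(H264_LADDER, NEW_CODEC_LADDER, MID_LADDER_VIEWERS):.0%}")
```

In this toy model, the throughput-limited viewers pull the same number of bits after the switch; they simply land on a rung that carries higher quality, which is the QoE gain without the bandwidth savings.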

Cache impact refers to how the technique impacts your cache. Adding a new codec reduces the effectiveness of your cache because you’ll be splitting it over two sets of files. Most of the others reduce the bitrate of your video so you can fit more streams in the same cache, which has a positive impact.

Tech ease measures the difficulty of implementing the technique, including necessary testing to ensure reliability. Technology risk assesses the likelihood that you’ll break something despite your testing.

On cost, I hate to be the bearer of bad news, but the Avanci Video pool covers “the latest video technologies, AV1, H.265 (HEVC), H.266 (VVC), MPEG-DASH, and VP9.” This means H.264, which comes off patent starting in 2023, is safe, but later codecs aren’t. I have no idea what the costs are or even if this pool will ultimately succeed. At this point, however, if you’re considering supplementing your H.264 encodes with a newer codec, you have to consider the potential for content royalties.


1. Deploying a New Codec

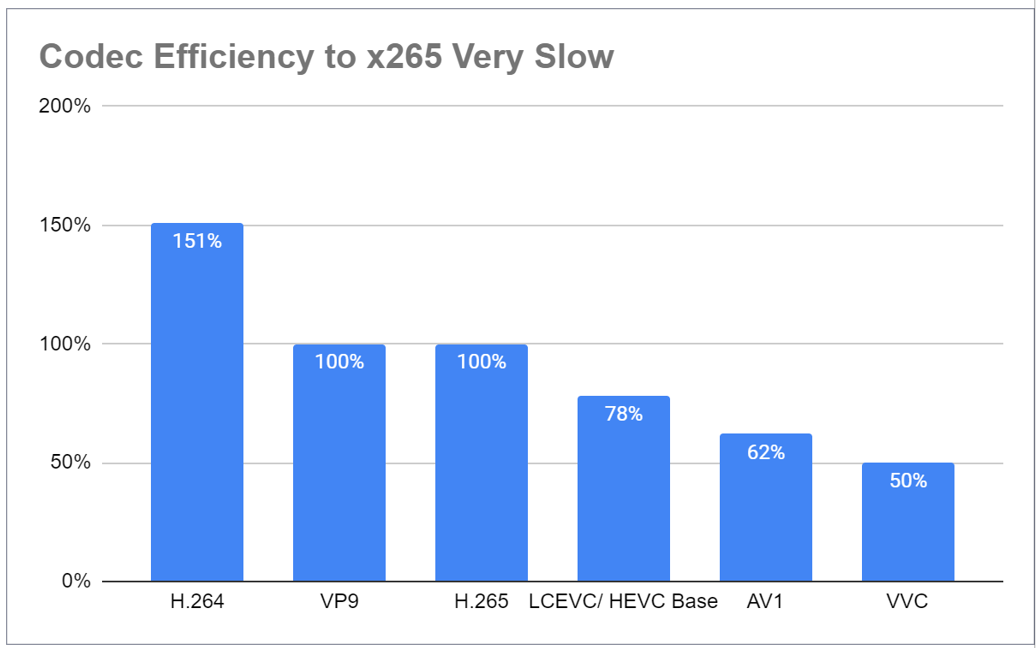

Opting for a codec like HEVC or AV1 can dramatically reduce file sizes, minimizing storage and bandwidth expenses. The chart below is from a presentation that I gave at Streaming Media East in May 2023 (download the handout here). Borrowing a technique started by the great folks at Moscow State University, I normalized quality on H.265 at 100%.

Some notes to reduce blood pressure among the readers: the AV1 and H.265 results are open-source versions of the codecs, and there are optimized proprietary versions that deliver better quality. LCEVC performance will depend on the base layer that you choose, while VVC was the Fraunhofer version, which is capable, but higher quality versions also exist (see the MSU reports here). There are also other quality observations in the presentation download.

All that said, if you’re still encoding with H.264 (x264), you can shave about 33% for files encoded with HEVC (x265) and 58% with AV1 (libaom). Again, the benefit is mostly in the top rung; most lower rungs will deliver improved QoE but not bandwidth savings.

Looking at the table, the cost is the highest of all alternatives. That’s because:

- You’ll have to learn how to encode with a completely new codec and integrate that into your encoding infrastructure.

- All encoding costs will be additive to H.264, since not all target players are compatible with these new codecs.

- Player and related testing will be extensive and expensive.

Moving through the table, adding a codec adds to your storage expenses because you will still be producing and storing H.264. The new codec will also have a negative impact on your CDN caching because the cache must now be split between H.264 and HEVC files. This means either a higher caching cost if you expand your cache, or higher bandwidth costs and potentially lower QoE because less data is cached at the edge.

As discussed, this alternative involves a lot of work; hence, the three brains and moderate technology risk that you obviously can mitigate with rigorous testing. Don’t get me wrong; hundreds of companies now produce in HEVC and AV1 and made it through unscathed; just don’t minimize the required effort. There’s also a difference between implementing a new codec yourself and flipping a switch in your Brightcove or JWPlayer console to add a new codec, with the end-to-end transcoding to playback already tested by earlier pioneers.

All that said, I’ll share these observations:

- By far, most new codec adoption has been to address new markets, primarily high dynamic range in the living room. This made HEVC table stakes for premium content producers.

- Most other advanced codec adoption is by companies at the tippy top of the pyramid, like YouTube, Meta, Netflix (VP9/AV1), and Tencent (VVC), who have the scale to really leverage the bandwidth savings and the in-house expertise to minimize the risks and costs.

Most smaller companies, not distributing premium content, still stream H.264, either predominantly or exclusively. We love to talk about new codecs, but we seem to loathe adding them to our encoding mix to achieve bandwidth savings.

2. Per-Title Encoding: Customizing Your Bitrate Ladder

Per-title encoding, also called content-adaptive encoding (and many other things), analyzes individual video content to generate custom bitrate ladders for each. You can get a good overview of the technology in this StreamingMedia article: The Past, Present, and Future of Per-Title Encoding.

Unless you’re Netflix-sized, you’re probably best off either licensing per-title technology from a third party or using a per-title feature supplied by your cloud encoding vendor (we’ll explore a DIY option next).

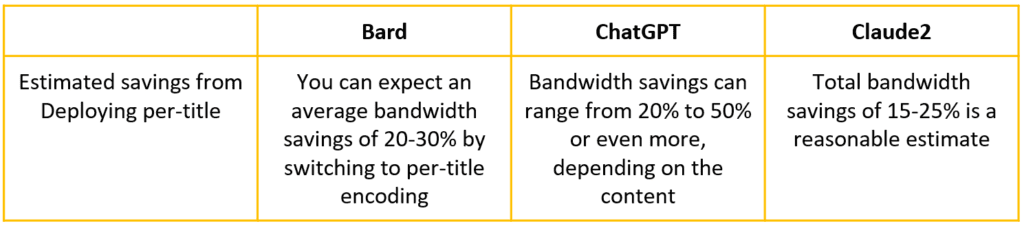

How much bandwidth will a per-title technology save? Like you, I’ve experimented and tested various ways to integrate AI into my writing, with mixed results. I do trust AI services to have a better grasp of overall available data than I do, so I present the following responses to the question, “What’s the approximate bandwidth savings that streaming publishers can expect by switching to per-title (or content adaptive) encoding?”

You see the results in Table 2. Take them with however much salt you’re giving AI today; I’m sure they incorporated far more data than any of my guesses could.

Otherwise, Bitmovin claims up to 72% savings here, while Netflix claims a 20% savings for the top rung here.

In August 2022, I published two reports analyzing the per-title encoding features of five service providers. At a high level, I created a per-title encoding ladder for 21 test files using x264/x265 and the slow preset and compared the results to per-title H.264/HEVC output produced by AWS, Azure, Bitmovin, Brightcove, and Tencent. Azure has since exited the market.

I compared each service to the x264/x265 ladders but not to a fixed encoding, so I have no number to compare to Table 2. I analyzed the bandwidth savings produced by each service using H.264 and HEVC for three distribution patterns: top-heavy (viewers watched mostly top rungs but some middle and lower rungs), mobile (mostly middle rungs), and IPTV (viewers watched only the top two rungs). You see the recommendations for HEVC.

How does per-title stack up to the other alternatives? Looking at our table, it will cost a bit more since per-title is a premium option for all service providers. You see the bitrate savings, which will vary with your distribution pattern.

Since you’re not adding a new codec, the addressable market is all existing targets for that codec, which is good, and a lower storage cost because the bitrates will be lower. These lower bitrates have a positive impact on caches since you can fit more files in a fixed cache size and satisfy more viewers.

It’s very simple to implement technically, particularly if you’re already using a service like Bitmovin or AWS. Just flip a switch, and you’re outputting in per-title format. The technology risk is also minimal because you’re using the same codec that you’ve always used.

I’ve been a huge advocate of per-title since Netflix debuted it in 2015, and if it’s not a technology that you’ve explored to date, you’re behind the curve.

3. Deploying Capped Constant Rate Factor Transcoding

Capped CRF is an eminently DIY per-title method that is also unquestionably less effective than the techniques discussed in the previous section. Still, it’s an encoding mode that can shave serious bandwidth costs off your top rung and can be very simple and risk-free to implement.

As explained here, capped CRF is an encoding technique available for most open-source codecs, including x264, x265, SVT-AV1, libaom, and many others, including all codecs deployed in the NETINT Quadra. With capped CRF, you choose a quality level via CRF commands and a cap, and the relevant commands might look like this:

ffmpeg -i input_file -c:v libx264 -crf 23 -maxrate 6750k -bufsize 6750k output_file

In the command string, I’m encoding with the x264 codec and choosing a quality level of 23, which generally hits around 93 VMAF points. I’m also setting a cap of 6.75 Mbps. During easy-to-encode segments in a video, the x264 codec can achieve the CRF quality level far below the cap, generating bandwidth savings. During high-motion sequences, the cap controls, and you’re no worse off than you would have been using VBR or CBR. You produce capped CRF output in a single pass, a slight savings over 2-pass encoding, and you can use the technique for live video as well as VOD.
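If you’re scripting your encodes, the same command extends naturally to a ladder. The sketch below builds the argument list shown above and, optionally, adds a scale filter per rung. Only the 6750k cap and CRF 23 come from the command above; the lower-rung caps, file names, and the helper name `capped_crf_command` are hypothetical placeholders that you’d replace with values from your own testing.

```python
import shlex


def capped_crf_command(src, dst, crf=23, cap_kbps=6750, height=None):
    """Return an ffmpeg argument list for a single-pass capped CRF x264 encode."""
    args = [
        "ffmpeg", "-i", src,
        "-c:v", "libx264",
        "-crf", str(crf),              # target quality level
        "-maxrate", f"{cap_kbps}k",    # the cap
        "-bufsize", f"{cap_kbps}k",    # VBV buffer, set equal to the cap as above
    ]
    if height is not None:
        # Scale to the rung height; -2 keeps the aspect ratio with an even width.
        args += ["-vf", f"scale=-2:{height}"]
    args.append(dst)
    return args


# Hypothetical ladder of (height, cap in kbps); only the top cap is from the article.
LADDER = [(1080, 6750), (720, 3500), (540, 2000)]

for height, cap in LADDER:
    cmd = capped_crf_command("input.mp4", f"out_{height}p.mp4", cap_kbps=cap, height=height)
    print(shlex.join(cmd))  # pass cmd to subprocess.run(cmd, check=True) to encode
```

Building an argument list rather than a single string avoids shell quoting problems when file names contain spaces.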

Looking at the chart, capped CRF is inexpensive to deploy but won’t save as much as more sophisticated per-title techniques (see here for why). You’re not changing your codec, so you can address all existing targets, and it will lower your storage requirements and improve cache efficiency. Implementation difficulty is low, and the only tech risk is a slightly greater risk of transient quality issues, particularly in high-motion footage. It’s a very solid technique used by more top-tier streaming publishers than you might think.

4. Different Ladders for Different Targets: Mobile Matters

It doesn’t take as high a quality video stream to look good on your mobile phone as it does to look good on your 70” smart TV. So, you shouldn’t make the same streams available to mobile viewers as you do for desktop viewers. You can do this by creating separate manifests for mobile and smart TV devices and identifying the device before you supply the manifest.
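As a rough illustration of the separate-manifest approach, the sketch below takes an HLS master playlist and drops every variant taller than a chosen height before handing it to a mobile device. It assumes HLS delivery, variants that declare a `RESOLUTION` attribute, and a deliberately crude user-agent check; the playlist contents, the 720p cap, and the function names are all hypothetical.

```python
import re

RESOLUTION = re.compile(r"RESOLUTION=(\d+)x(\d+)")

# Hypothetical master playlist for illustration.
SAMPLE_MASTER = """#EXTM3U
#EXT-X-STREAM-INF:BANDWIDTH=6750000,RESOLUTION=1920x1080
1080p.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=3500000,RESOLUTION=1280x720
720p.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=2000000,RESOLUTION=960x540
540p.m3u8
"""


def cap_master_playlist(master_text, max_height):
    """Drop variant streams taller than max_height from an HLS master playlist."""
    kept = []
    skip_next_uri = False
    for line in master_text.splitlines():
        if line.startswith("#EXT-X-STREAM-INF"):
            match = RESOLUTION.search(line)
            if match and int(match.group(2)) > max_height:
                skip_next_uri = True   # also drop the URI line that follows
                continue
        elif skip_next_uri and line and not line.startswith("#"):
            skip_next_uri = False
            continue
        kept.append(line)
    return "\n".join(kept) + "\n"


def is_mobile(user_agent):
    """Crude device check (assumption); production systems use richer detection."""
    return "Mobile" in user_agent


def manifest_for(user_agent, master_text, mobile_max_height=720):
    """Serve the capped manifest to phones and the full ladder to everyone else."""
    if is_mobile(user_agent):
        return cap_master_playlist(master_text, mobile_max_height)
    return master_text


print(manifest_for("Mozilla/5.0 (iPhone) Mobile/15E148", SAMPLE_MASTER))
```

Because the media files themselves don’t change, this only touches the manifest step, which is why storage and cache are largely unaffected.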

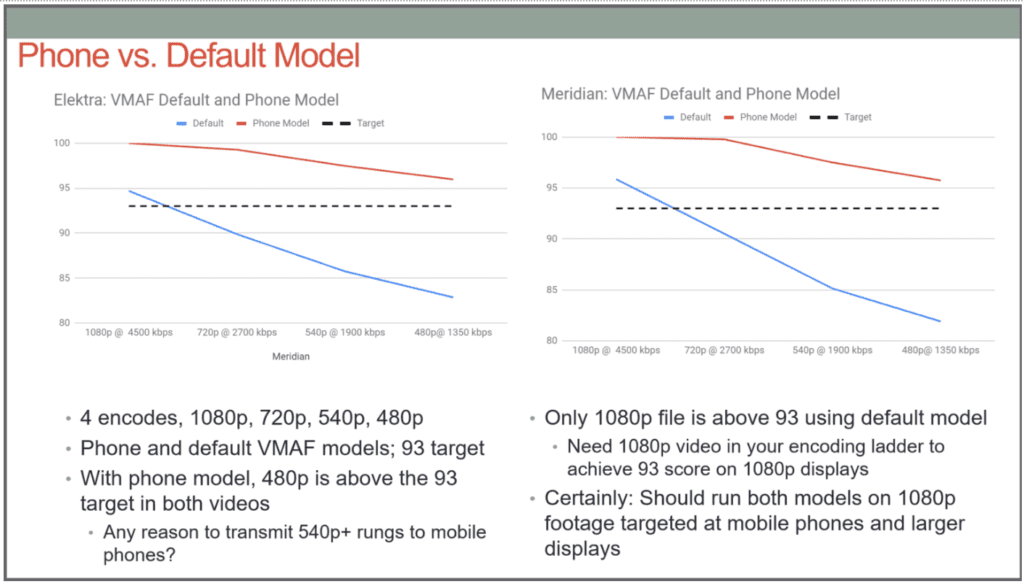

You see two examples of this in Figure 2, which is from an article appropriately entitled You Are Almost Certainly Wasting Bandwidth Streaming to Mobile Phones. To produce the two charts, I encoded each movie twice at 1080p, 720p, 540p, and 480p and measured VMAF quality using both the default (in blue) and phone model (in red).

As you can see, using the phone model, all four videos are above the 93 VMAF target many publishers use for top rung quality, and the two top rungs are at or close to 100 VMAF points. Multiple studies have proven that few viewers can discern any quality differential above 93-95, making the bandwidth associated with these higher-quality files a total waste.
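One way to turn that observation into a rule is to cap each device class at the lowest rung that still meets your quality target. The sketch below does this for a single title. The VMAF scores are hypothetical placeholders shaped like the pattern described above, not the measurements from the figure, and the function name is my own.

```python
def lowest_rung_meeting_target(scores_by_height, target=93.0):
    """Return the smallest rung height whose VMAF score meets the target.

    Falls back to the tallest rung when nothing reaches the target.
    """
    qualifying = [h for h, score in scores_by_height.items() if score >= target]
    return min(qualifying) if qualifying else max(scores_by_height)


# Hypothetical per-rung VMAF scores for one title (rung height -> score).
PHONE_MODEL_SCORES = {1080: 99.5, 720: 98.0, 540: 95.5, 480: 93.5}
DEFAULT_MODEL_SCORES = {1080: 95.0, 720: 90.0, 540: 84.0, 480: 80.0}

print("mobile top rung: ", lowest_rung_meeting_target(PHONE_MODEL_SCORES))
print("default top rung:", lowest_rung_meeting_target(DEFAULT_MODEL_SCORES))
```

With numbers like these, the phone ladder could stop well below 1080p, while the living room ladder still needs the full-resolution rung to hit the same target.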

Looking at our scoring in Table 1, this approach should be inexpensive to implement and could save significant bandwidth. It works on all devices that you’re currently serving, with no impact on storage since you’re creating the same ladder, just not sending the top rung to mobile viewers. It has minimal impact on the cache because you’re distributing the same files. I rate the tech ease and tech risk as slightly higher than capped CRF, but this is a well-known technique used by many larger streaming shops.

5. Use a Higher Quality Preset

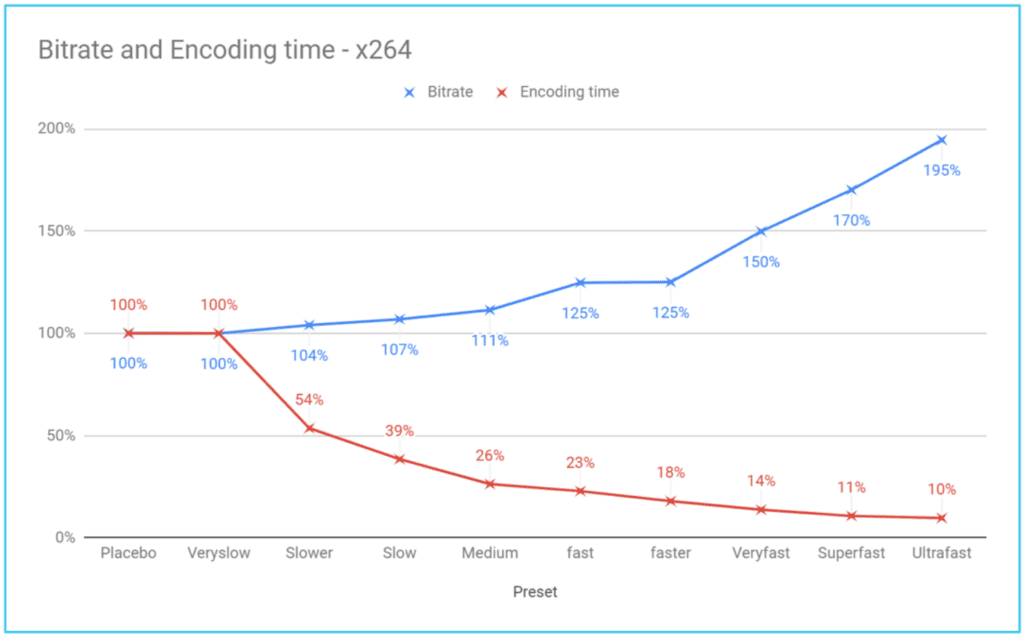

Most streaming producers associate preset with encoding cost, not bandwidth. As I explained in my article The Correct Way to Choose a Preset, bandwidth and encoding costs are simply two sides of the same coin. Figure 3 shows you why.

Specifically, the blue line in Figure 3 shows how much you have to boost your bitrate to match the quality produced by the very slow preset. The red line shows how much using the lower-quality preset saves in encoding time. If you use the default medium preset, you cut encoding time/cost by 74% as compared to very slow, which is great. But you have to boost your bitrate by 11% to achieve the same quality as the very slow preset. In this fashion, choosing the optimal preset involves balancing encoding costs against bandwidth costs.

As the Correct Way article explains, depending upon your bandwidth costs, if your files are viewed more than a few hundred times, you save money by encoding using the highest-quality preset. This is why YouTube started deploying AV1 even when encoding times were hundreds of times longer than H.264 or VP9. It’s why Netflix debuted their per-title encoding technique using a brute force convex hull-based encoding technique that was hideously expensive but delivered the highest possible quality at the lowest possible bitrate. When your files are watched tens or hundreds of millions of times, the encoding cost becomes irrelevant.

As you can read in the Correct Way article, if your bandwidth costs are $0.08 per GB (Amazon CloudFront’s highest rate), and your video is watched for only 50 hours, veryslow is the most cost-effective preset. So, if you’re using the medium preset or even the slower preset, check out the article and reconsider.
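The break-even arithmetic is simple enough to run on your own numbers. This sketch uses the 74% encoding savings and 11% bitrate penalty for medium versus very slow cited above, and reads the $0.08 rate as a per-GB price. Everything else is an assumption for illustration: that encoding cost scales linearly with encoding time, the $1.00 very slow encoding cost, and the 5 Mbps stream. It is not the model from the Correct Way article.

```python
def gb_per_viewing_hour(bitrate_mbps):
    """Gigabytes delivered for one hour of viewing at the given bitrate."""
    return bitrate_mbps * 3600 / 8 / 1000  # Mbps -> megabits/hour -> MB -> GB


def break_even_viewing_hours(
    veryslow_encode_cost,      # assumed cost to encode the title with very slow
    bitrate_mbps,              # bitrate needed with very slow to hit target quality
    price_per_gb=0.08,         # bandwidth price, read as dollars per GB
    encode_cost_saved=0.74,    # medium cuts encoding time/cost by 74%
    bitrate_penalty=0.11,      # medium needs 11% more bitrate for the same quality
):
    """Viewing hours at which very slow's extra encoding cost is paid back."""
    extra_encode_cost = veryslow_encode_cost * encode_cost_saved
    extra_bandwidth_cost_per_hour = (
        gb_per_viewing_hour(bitrate_mbps) * bitrate_penalty * price_per_gb
    )
    return extra_encode_cost / extra_bandwidth_cost_per_hour


hours = break_even_viewing_hours(veryslow_encode_cost=1.00, bitrate_mbps=5.0)
print(f"very slow pays for itself after about {hours:.1f} viewing hours")
```

Past the break-even point, every additional viewing hour on the medium preset costs you more in bandwidth than you saved on encoding.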

Obviously, changing your preset is very inexpensive to implement, benefits all existing targets, lowers your storage costs, improves cache efficiency, is easy to implement, and has a negligible tech risk. Unfortunately, it also offers the least potential savings.

That’s it; if you’re already doing most of these, congrats, you’re on top of your game. If not, hopefully, you’ve got some new ideas about how to reduce your bandwidth costs (and you see this before your boss does).

Streaming Learning Center Where Streaming Professionals Learn to Excel
