This post provides an introduction to video codecs. It’s an entry-level article designed to help streaming newbies understand what codecs are, what they do, how to compare them, and why they succeed or fail.

Video codecs are compression technologies that reduce the bitrate of digital video to manageable sizes. They are central to streaming and are also integral to digital camcorders, video editing, contribution, and broadcast. If you’re watching a video on a digital device, it’s almost certainly been compressed using a video codec, and it has likely been compressed and decompressed multiple times with different video codecs during recording and production.

What does codec mean?

Codec is a contraction of enCOde/DECode. That’s because codecs work by encoding the video for distribution or storage and decoding it for playback or other use. Note that while this article discusses video codecs only, audio is typically encoded for distribution using a separate set of audio codecs.

Where do codecs come from?

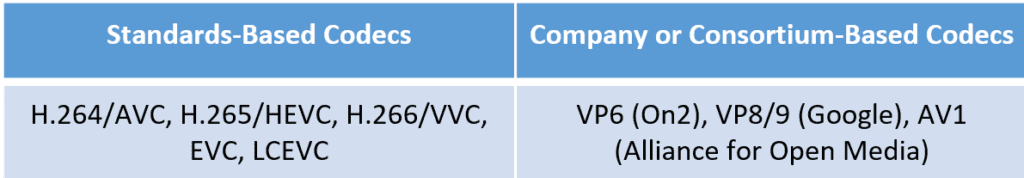

Codecs come from two sources, as shown in Table 1. Standards-based codecs are created by standard-setting organizations like the Moving Picture Experts Group (MPEG) and the International Telecommunication Union (ITU). In fact, the H.264/AVC, H.265/HEVC, and H.266/VVC codecs have two names because they were created in concert by MPEG, which called them AVC, HEVC, and VVC, and the ITU, which called them H.264, H.265, and H.266.

Table 1. The two sources of video codecs.

Private companies and consortiums also develop codecs. A company named On2 developed the VP6 codec, which was very popular with Flash video until it was replaced by H.264. Google bought On2 in 2009 and later open-sourced VP8 and VP9 as part of the WebM project.

Google was far along with the development of VP10 before becoming a founding member of the Alliance for Open Media (AOMedia), which created the AV1 codec using VP10 and codec technology contributed by Cisco, Mozilla, Microsoft, and other founding members. Like VP8 and VP9, AV1 is available as open source.

What the heck do all these initials mean?

Sorry. Here you go.

AVC – Advanced Video Coding

HEVC – High-Efficiency Video Coding

VVC – Versatile Video Coding

EVC – Essential Video Coding

LCEVC – Low Complexity Enhancement Video Coding

AV1 – AOMedia Video 1

Otherwise, VP6, VP8, VP9, and VP10 are codec names and not acronyms.

How much do codecs cost and who pays?

All standards-based codecs shown in Table 1 are subject to royalties, though these are typically paid by companies that deploy the codecs in their products, like mobile phone and television manufacturers. Some codecs, like H.264, have royalties on pay-per-view or subscription content, but the numbers are typically small, with large de minimis exceptions; for example, the first 100,000 subscribers are free. No standards-based codec charges royalties on video distributed for free over the internet, though the HEVC patent pool run by Velos Media hasn’t ruled this out.

Open-source means that any developer can use any of these codecs without paying Google or AOMedia license fees. However, it does not mean that these codecs are royalty-free. Patent-pool administrator Sisvel has launched patent pools for VP9 and AV1, claiming that the codecs utilize techniques protected by patents owned by the pool members. Not surprisingly, AOMedia disagrees.

Why are there multiple codecs from different developers?

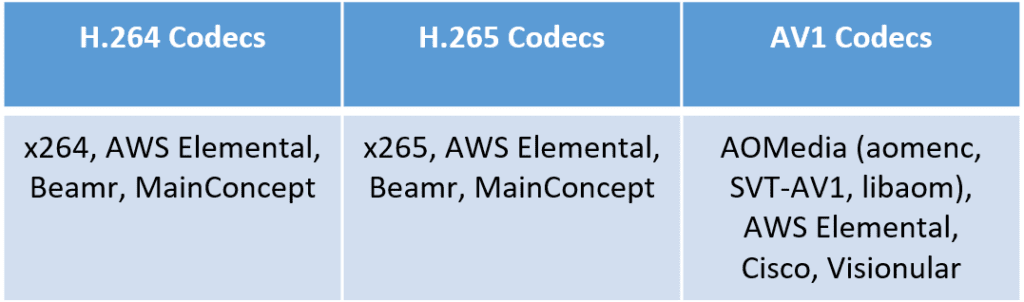

Whether standards-based or designed by a company or consortium, codecs are defined in detailed specifications and multiple companies can develop their own implementation so long as it meets that specification. Companies either license these codecs to third parties for their use or deploy them in their own encoding products.

As shown in Table 2, there are multiple H.264 codecs. These include x264, the H.264 codec in the open-source encoder FFmpeg; codecs from Beamr and MainConcept, which these companies license to different users; and the AWS Elemental codec, which AWS Elemental uses in encoding products like MediaConvert. You see the same pattern in H.265 and AV1.

Table 2. Some of the developers of the most commonly used codecs.

As you would expect, the different codec implementations have different performance characteristics. For example, you can read a comparison of four AV1 codecs here and a comparison of multiple HEVC codecs here. The vast majority of streaming producers simply use the codec included in the encoding tool or service that they use; relatively few companies license codecs directly.

Which codecs are essential and why?

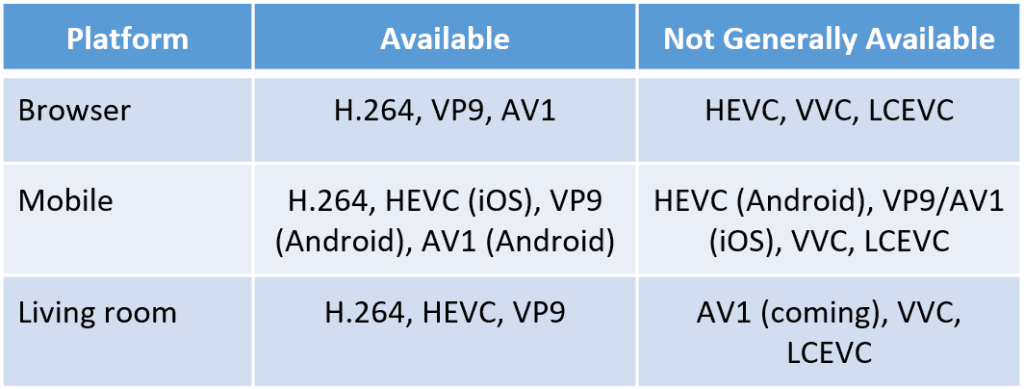

It’s often said in sports that availability is the best ability. With codecs, the most relevant ability is playability. That is, streaming producers use the codecs that are available on the platforms that they target, which typically fall into the three markets shown in Table 3. You’ll notice that H.264 plays in all three markets: browsers, mobile, and the living room, both via OTT devices like Roku and Apple TV and directly in smart TVs. This means that a streaming publisher can encode their source files once using the H.264 codec and distribute those files to every target platform.

Table 3. The most important codec ability is playability; here’s where the various codecs play.

HEVC on the Android platform is worth some additional discussion. Specifically, if you check www.caniuse.com, you’ll see that neither the Android browser nor Chrome on Android supports HEVC playback, even though the Android OS includes a software HEVC decoder, albeit a pretty anemic one. However, most recently shipped premium Android devices likely have HEVC hardware decode, if only to compete with Apple, which included HEVC hardware on all devices starting in 2017.

If you’re distributing video to Android devices for playback in the browser, HEVC is not a simple option. On the other hand, if you’re distributing for playback in an app, as most premium services do, you can access either the HEVC software decode in the Android OS, or more likely the hardware decode. So, if you’re distributing via an app, and are encoding to the HEVC format for the living room or iOS devices, you can probably send those streams to Android devices as well.

Why do publishers start supporting new codecs?

Good question. Because no single codec can replace H.264 on all target platforms, when a publisher starts to deploy a new codec, all encoding and other related costs will be in addition to H.264-related costs, and these can be substantial. First, the publisher must experiment to find the optimal encoding parameters for the new codec. Then, the publisher must add playback support for the new codec to all targeted players.

Whether the publisher encodes in-house or via a service, deploying a new codec adds encoding costs, which are often higher than those for H.264 because the newer codecs use more complex encoding techniques and take longer to encode. Costs become a particular concern when publishers decide to re-encode their existing libraries with a new codec. After encoding, you also have to store the additional encoded files, which is a further cost.

For this reason, most streaming publishers add a new codec only when doing so enables entry into a new market. For example, many streaming publishers started encoding with HEVC to distribute 4K movies into the living room, particularly those distributed in high dynamic range formats like Dolby Vision. That’s because H.264 is too inefficient for use on this content.

Top-of-the-pyramid publishers like YouTube, Netflix, Amazon, Facebook, Hulu, and some others also deploy new codecs to reduce bandwidth costs or improve the quality of experience on existing platforms, but this is much less common. More on the impact of encoding cost below.

How are codecs compared?

We’ve covered two comparison factors already: royalty cost and playability. Quality, encoding time, and decoding requirements are also important. I’ll cover each in turn.

How is codec quality measured?

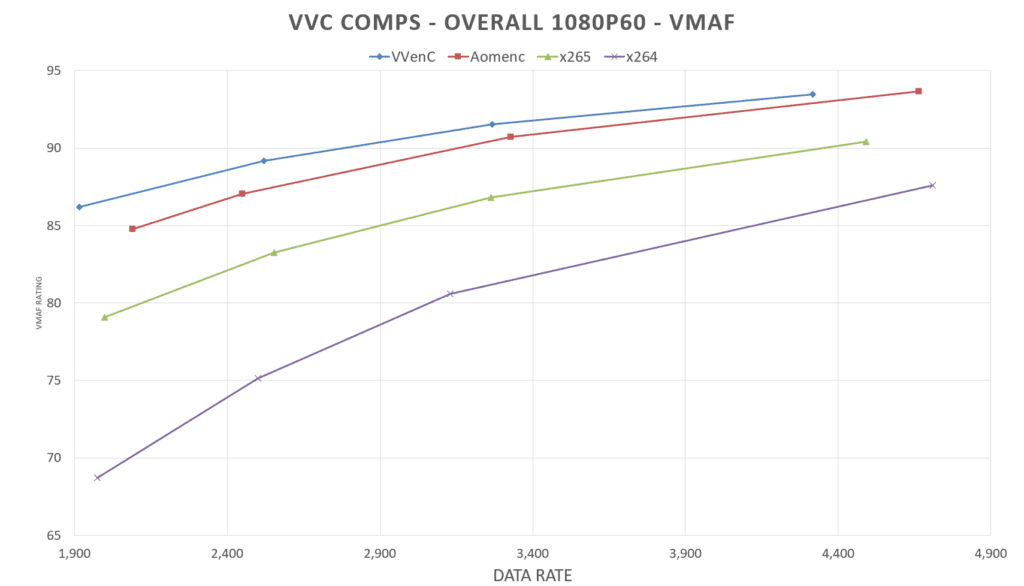

Codec quality is typically measured two ways: graphically via rate-distortion curves, and numerically via BD-Rate comparisons. Figure 1 shows a rate-distortion curve graph. To create the chart, you encode to four different data points with each tested codec, which in the chart are VVenC (Fraunhofer’s VVC codec), aomenc (the Alliance for Open Media’s AV1 codec), x265 (an open-source HEVC codec), and x264 (an open-source H.264 codec). I’ll be using VVC as an example frequently in this article as it’s a high-profile new codec and I have relatively new data to share.

Rate-distortion charts display the file’s data rate on the horizontal axis and file quality on the vertical axis. In Figure 1, quality is measured by the VMAF quality metric, though you can use any metric or even subjective human-eye comparisons. Whatever the metric, the higher the series, the better the quality. In the chart, you see that VVenC is the highest series, closely followed by aomenc, then x265, with x264 trailing badly.

Figure 1. A rate-distortion chart comparing implementations of VVC, AV1, HEVC, and H.264.

Rate-distortion charts are great for understanding how codec quality compares at a glance. However, what you specifically want to know is how much bandwidth a different codec (or codec implementation) will save you. That’s where BD-Rate figures come in.

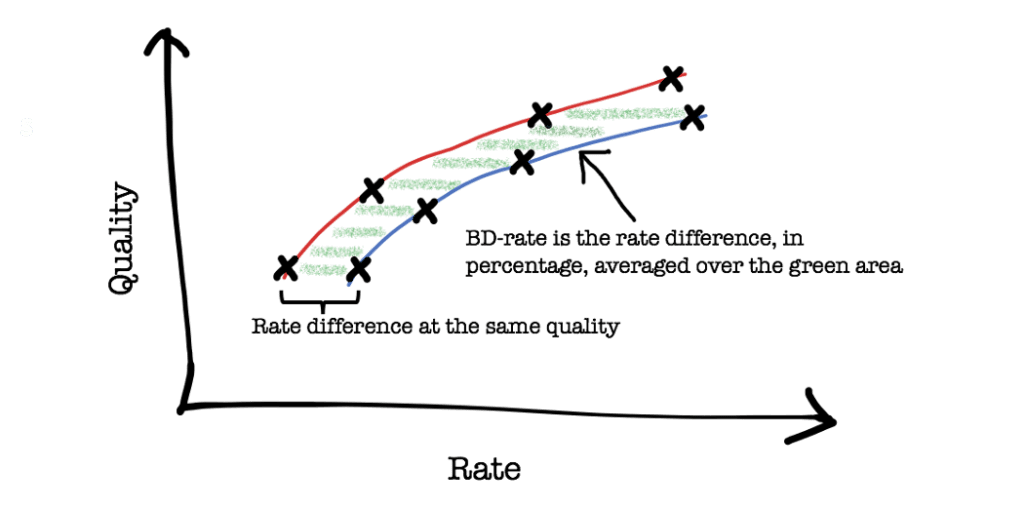

Figure 2. Definition of BD-Rate from the Netflix blog.

BD-Rate stands for Bjontegaard Delta Rate, and it’s a “measure of the average percentage bandwidth savings for equivalent quality level over the range of quality levels common to both curves in the plot.” You can see what I mean in the Netflix drawing shown in Figure 2. BD-Rate data is often presented in a table like Table 4, which shows the BD-Rate computations for the rate-distortion curves shown in Figure 1.

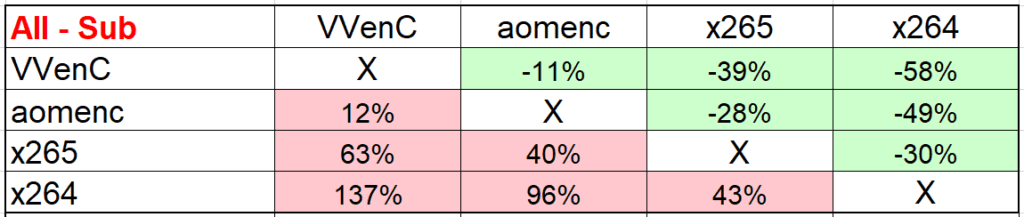

Table 4. BD-Rate comparisons for the same codecs as shown in Figure 1.

You read the data in Table 4 by choosing a line and moving through the columns. Negative numbers, in green, indicate the savings that the codec will deliver over those shown in the columns. In Table 4, VVenC can deliver the same quality as aomenc (AV1) at an 11% lower data rate, the same quality as x265 (HEVC) at a 39% lower data rate, and the same quality as x264 (H.264) at a 58% lower data rate.

Conversely, positive numbers, in red, represent the increase in the data rate necessary to deliver the same quality as the codec shown in the column. So, x264 needs to boost the data rate by 137% to deliver the same quality as VVenC, 96% to deliver the same quality as aomenc (AV1), and 43% to deliver the same quality as x265 (HEVC).
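The negative and positive figures for a pair of codecs describe the same gap from opposite directions. As a minimal sketch (the function names here are my own, not part of any BD-Rate tool), a saving of s corresponds to a required increase of 1 / (1 − s) − 1, and the reverse conversion is 1 − 1 / (1 + increase):

```python
def savings_to_increase(savings):
    """Convert a bitrate saving (0.58 means 58% lower) into the increase
    the other codec needs to reach the same quality."""
    if not 0 <= savings < 1:
        raise ValueError("savings must be in the range [0, 1)")
    return 1 / (1 - savings) - 1


def increase_to_savings(increase):
    """Convert a required bitrate increase (1.37 means 137% higher) into
    the saving seen from the more efficient codec's side."""
    if increase < 0:
        raise ValueError("increase must be non-negative")
    return 1 - 1 / (1 + increase)


# VVenC vs. x264, using the rounded figures quoted from Table 4.
print(f"{savings_to_increase(0.58):.0%}")  # 138%
print(f"{increase_to_savings(1.37):.0%}")  # 58%
```

The first result is 138% rather than the 137% in Table 4 because the input is the rounded 58% figure; the table was presumably computed from unrounded values.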

As a caution, recognize that just because BD-Rate figures show that VVenC can produce the same quality as x264 at a 58% lower data rate doesn’t mean that switching to VVC will save you 58% of bandwidth costs. Results will vary depending upon your encoding parameters and the typical bandwidths your viewers use to watch your videos. You can learn more about how to compute actual savings from a new codec here.
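If you’re curious how a BD-Rate figure is derived from two rate-distortion curves, here is a simplified sketch. The standard Bjontegaard calculation fits a smooth curve through each codec’s data points; this version swaps in straight-line interpolation between points to stay short, so treat it as an approximation rather than a reference implementation. The function names and the sample data are hypothetical.

```python
import math


def _interp(x, xs, ys):
    """Straight-line interpolation of ys at x; xs must be strictly ascending."""
    for i in range(len(xs) - 1):
        if xs[i] <= x <= xs[i + 1]:
            frac = (x - xs[i]) / (xs[i + 1] - xs[i])
            return ys[i] + frac * (ys[i + 1] - ys[i])
    raise ValueError("x is outside the curve's quality range")


def bd_rate(anchor, test, samples=1000):
    """Approximate BD-Rate of `test` relative to `anchor`.

    Each curve is a list of (bitrate_kbps, quality_score) points.
    Returns a fraction: -0.25 means `test` needs about 25% less bitrate
    for the same quality, averaged over the shared quality range.
    """
    a = sorted(anchor, key=lambda p: p[1])
    t = sorted(test, key=lambda p: p[1])
    a_q, a_log = [q for _, q in a], [math.log(r) for r, _ in a]
    t_q, t_log = [q for _, q in t], [math.log(r) for r, _ in t]

    # Only compare over the quality levels common to both curves.
    lo, hi = max(a_q[0], t_q[0]), min(a_q[-1], t_q[-1])
    if hi <= lo:
        raise ValueError("the curves share no quality range")

    # Average the gap in log-bitrate at evenly spaced quality levels.
    total = 0.0
    for i in range(samples):
        q = lo + (hi - lo) * (i + 0.5) / samples
        total += _interp(q, t_q, t_log) - _interp(q, a_q, a_log)
    return math.exp(total / samples) - 1


# Hypothetical four-point curves: (bitrate in kbps, VMAF score).
old_codec = [(1000, 70), (2000, 80), (4000, 88), (8000, 94)]
new_codec = [(600, 71), (1200, 81), (2400, 89), (4800, 95)]
print(f"BD-Rate: {bd_rate(old_codec, new_codec):+.1%}")
```

Working in log-bitrate is what makes the result an average percentage difference rather than an average difference in kbps.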

Why does encoding time matter?

Encoding requirements translate directly to encoding costs. For example, this article found that VVenC took about ten times longer to encode than x265. If you’re running your own encoding farm at capacity, this means that you’ll need ten times the infrastructure to produce VVC at the same speed as HEVC. Though no cloud encoding facilities are currently pricing VVC, AWS Elemental charges $0.864/minute for AV1 encoding (HD@30 fps), compared to $0.336/minute for HEVC and $0.042/minute for H.264. At those rates, AV1 costs about 20 times more than H.264.

As you may know, most video streamed over the internet is delivered via adaptive bitrate technologies that encode your source files into multiple files at different resolutions and bitrates to deliver to viewers watching on different platforms and connection speeds. These files are called an encoding ladder. So, when computing your encoding cost, you have to multiply that per-minute charge by the number of minutes in your file times the number of files in your encoding ladder, which adds up.
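To make that multiplication concrete, here is a rough sketch using the per-minute prices quoted above. The 90-minute duration and six-file ladder are hypothetical, and the sketch assumes every file in the ladder is billed at the same HD rate, which is a simplification.

```python
def ladder_encoding_cost(price_per_minute, duration_minutes, rungs):
    """Per-minute price x minutes of video x number of files in the ladder."""
    return price_per_minute * duration_minutes * rungs


# Per-minute prices quoted in this article (HD at 30 fps).
PRICES = {"AV1": 0.864, "HEVC": 0.336, "H.264": 0.042}

# Hypothetical job: a 90-minute movie encoded into a six-file ladder.
DURATION_MINUTES = 90
RUNGS = 6

for codec, price in PRICES.items():
    cost = ladder_encoding_cost(price, DURATION_MINUTES, RUNGS)
    print(f"{codec}: ${cost:,.2f}")
```

Remember that a new codec’s ladder is typically produced in addition to the H.264 ladder, not instead of it.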

If your customers are watching your videos in Netflix-style quantities, you can easily make up the encoding cost in bandwidth savings. However, if only a few thousand viewers watch your typical video, it’s much more challenging to recover the encoding cost. This is why YouTube encodes only the service’s most popular videos using the AV1 codec; the rest are delivered in H.264 and VP9.

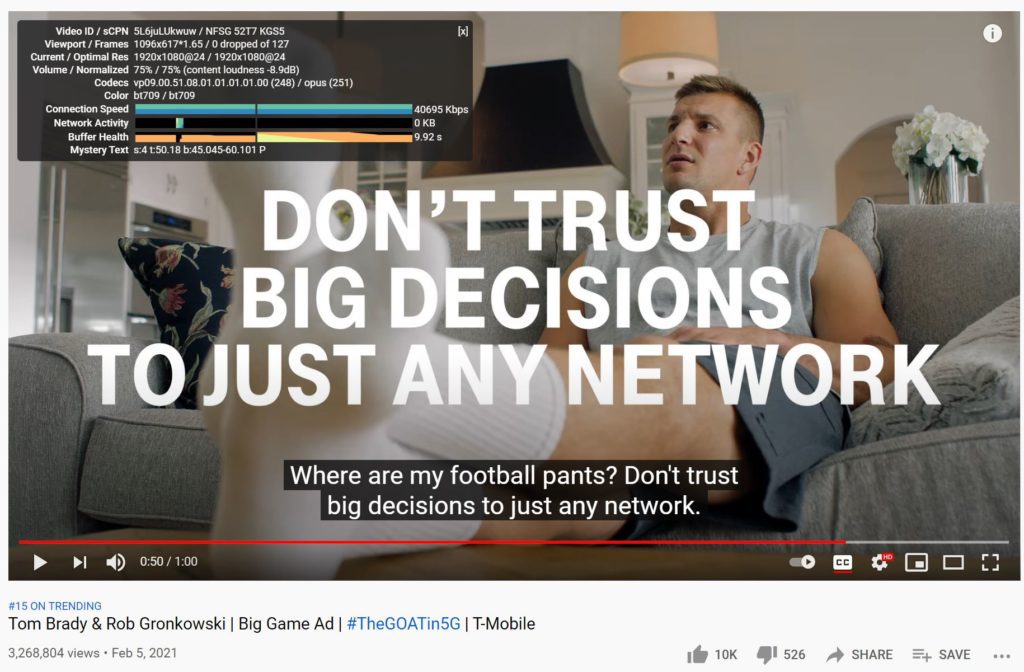

Figure 3. 3.3 million views in one day wasn’t enough to get this video encoded with AV1.

You see this in Figure 3, a Super Bowl advertisement on YouTube that garnered over 3.6 million views in a day but was encoded with VP9, not AV1. This Cardi B video had over 14 million views in a day but was also encoded in VP9, not AV1 (I couldn’t find a PG-13 frame in the Cardi B video, so I went with Brady and Gronk). I was surprised that YouTube didn’t use AV1 for these videos, as I’ve seen much less popular videos encoded with AV1 in the past. That said, YouTube encodes relatively few videos with AV1 because the encoding costs are significant. Unless your typical video is viewed by seven-figure audiences, it may be difficult to recoup AV1’s encoding costs with bandwidth savings.
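One way to frame the recoup question is as a break-even view count: the added encoding cost divided by the delivery cost saved per view. The sketch below is a back-of-the-envelope model, not a pricing tool. Every input in the example call is a hypothetical placeholder, and the saving you plug in should be your measured real-world saving, not a BD-Rate figure.

```python
def gb_per_view(avg_bitrate_mbps, duration_minutes):
    """Data delivered for one complete view, in gigabytes (1 GB = 1,000 MB)."""
    megabits = avg_bitrate_mbps * duration_minutes * 60
    return megabits / 8 / 1000


def break_even_views(added_encoding_cost, avg_bitrate_mbps, duration_minutes,
                     bandwidth_savings, delivery_cost_per_gb):
    """Views needed before bandwidth savings cover the added encoding cost.

    avg_bitrate_mbps is the average rate delivered with the existing codec;
    bandwidth_savings is the fraction saved with the new one (0.2 = 20%).
    """
    saved_per_view = (gb_per_view(avg_bitrate_mbps, duration_minutes)
                      * bandwidth_savings * delivery_cost_per_gb)
    if saved_per_view <= 0:
        raise ValueError("inputs must produce a positive saving per view")
    return added_encoding_cost / saved_per_view


# Hypothetical inputs: $25 of added encoding cost for a 5-minute video
# delivered at 3 Mbps, a 20% saving, and $0.005 per GB for delivery.
views = break_even_views(25.0, 3.0, 5, 0.20, 0.005)
print(f"Break-even at roughly {views:,.0f} views")
```

The result is very sensitive to what you pay for delivery and to the saving you actually achieve, so run it with your own numbers before drawing conclusions.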

Why do decoding requirements matter?

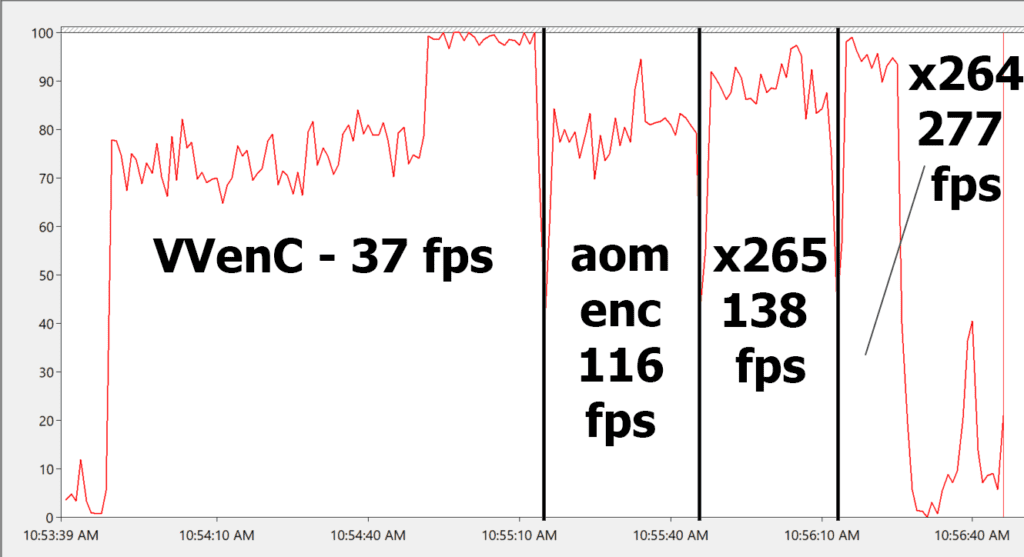

Figure 4 shows the decoding speed and CPU requirements for VVenC, aomenc, x265, and x264, all without hardware acceleration on an HP ZBook Studio G3 notebook with a 4-core (8-thread) Intel Xeon E3-1505M CPU and 32GB of RAM, running Windows 10. You see that VVenC was much slower and consumed much more CPU at peak decode than the other codecs.

If the notebook were running on battery, VVC playback would seriously degrade battery life, which is an obvious disincentive to use the codec. If this powerful notebook can only decode VVC at 37 fps, it’s doubtful that VVC will play at the full frame rate on a mobile phone or tablet without special VVC-related decoding hardware, another disincentive.

Figure 4. VVC decode testing was too slow for deployment without hardware acceleration.

This means that VVC won’t be deployable on phones, tablets, smart TVs, and OTT devices unless and until VVC-specific hardware decoding circuits are added to the device’s CPU, graphics processing unit (GPU), or system on a chip (SoC). The development of these chips and their integration into consumer electronics products typically takes about two years after the specification is finalized, which for VVC occurred in July 2020. This means that devices with VVC hardware decoding won’t appear on the market until mid-2022, and a critical mass of devices with VVC decoding hardware won’t accumulate until 2-3 years after that.

Another new MPEG-sponsored codec, LCEVC, encodes more efficiently than H.264 and is very efficient during playback as well, which means that it can potentially achieve market penetration much faster than VVC. You can read more about LCEVC here.

How do codecs get adopted?

In this article, How to predict codec success, I listed nine factors that impact codec success. We’ve already discussed many of them, like cost, comparative quality, and encoding and decoding requirements. Probably the most important factor that we haven’t discussed is the impact of the Alliance for Open Media.

By way of background, H.264 and HEVC launched when cable TV and satellite broadcast distribution were king and streaming markets were secondary. The success of these standards-based codecs in broadcast markets like cable, satellite, and smart TVs was assured. With H.264 adopted by Apple for iOS devices and Adobe for Flash, H.264’s success in streaming markets was assured as well.

From a royalty perspective, the launch of HEVC was totally botched (see here). This failure spurred Google, Microsoft, and other companies to found the Alliance for Open Media and launch the AV1 codec. In the interim, traditional markets like broadcasting started diminishing and IP-based streaming became paramount.

AOMedia members dominate multiple critical submarkets like desktop and mobile operating systems (Apple, Google, Microsoft), browsers (Apple, Google, Microsoft, Mozilla), phones and tablets (Apple, Google, Samsung), and OTT devices (Amazon, Apple, Google). As mentioned at the top, the most critical codec ability is playability. If AOMedia members decide not to deploy a codec, it’s unusable in that segment of the market, like HEVC in computer-based browser playback.

Conversely, when AOMedia members and publishers like Amazon, Google, Hulu, and Netflix deploy a new codec, it’s a powerful incentive for even non-member consumer electronic companies to support that codec on their phones/tablets, OTT devices, smart TVs, and other platforms. If these content publishers don’t support a new codec, it’s a disincentive for manufacturers to invest the development dollars and royalty cost to integrate that codec into a consumer product.

The bottom line is that the Alliance for Open Media has a tremendous influence on future codec adoption, and an incentive to promote AV1 over any standards-based codec. While the lack of AOMedia support doesn’t doom a codec, it does make it more challenging to deploy.

Another factor that impacts deployment is when a codec’s royalty cost becomes known. As mentioned above, HEVC’s royalty situation was badly managed, with one patent pool administered by Velos Media launched four years after the specification was finalized.

Many chip vendors and consumer electronics manufacturers started integrating H.264 and HEVC into their products before royalties were set because they assumed that the codec would succeed and that royalties would be reasonable. Given the HEVC royalty mess and the importance of AOMedia, neither of these assumptions seems reasonable at this point. Accordingly, it’s unclear how many chip or consumer electronics companies will start to support a new codec before the royalty costs are fully known. Typically, this takes a year or two after the codec is finalized, as companies owning patents related to the technology form patent pools and set royalty policies.

When do you need to care about new codecs?

If you’re a content producer, you need to care when a new codec becomes available on the target platforms that matter to you. If you’re a consumer electronics manufacturer, you need to care when the royalty terms are set or the IP picture is otherwise clear.

Resources

Codec-Related Articles on the Streaming Learning Center (covers most current codecs, including HEVC, AV1, VVC, EVC, and LCEVC)

What is AV1 – Streaming Media Magazine

What is VP9 – Streaming Media Magazine

What is HEVC – Streaming Media Magazine

What is H.264 – Streaming Media Magazine

Author’s note: If you’d like to learn more about the audio side of codecs, check out this article entitled What is an Audio Codec – Simple Explanation for Non-Audiophiles.

Streaming Learning Center Where Streaming Professionals Learn to Excel
