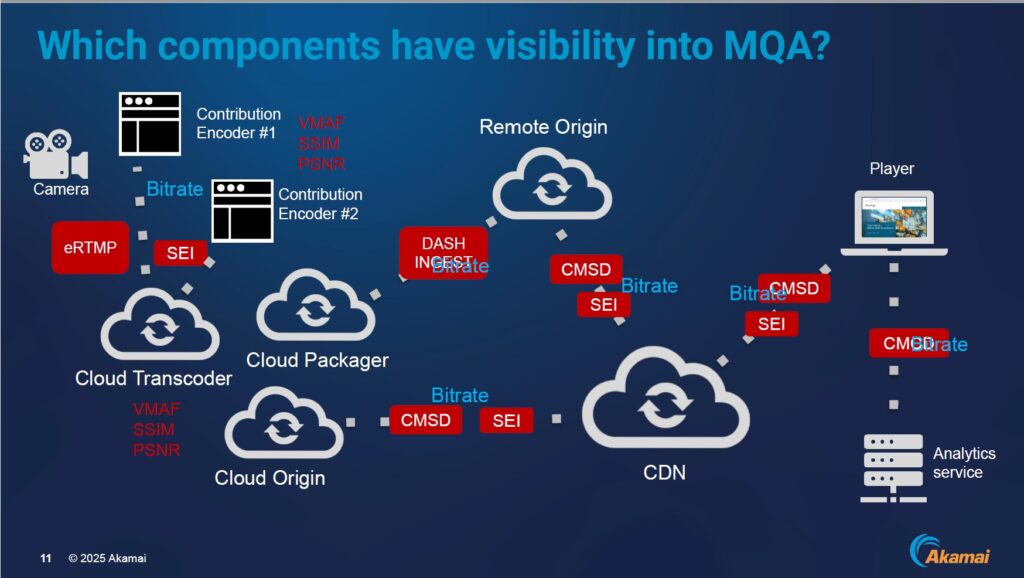

I’ve been tracking CMSD-MQA for a while now. Briefly, Common Media Server Data for Media Quality Assessment (CMSD-MQA) is a draft SVTA standard that defines how video quality scores such as VMAF, PSNR, and SSIM, generated at the encoder, can be carried downstream through the delivery chain as standardized metadata. That gives packagers, origin shields, and monitoring systems actionable quality information without requiring them to decode or re-analyze the content.

A December 2025 online workshop hosted by Alexander Leschinsky of G&L Systemhaus, featuring Will Law of Akamai, Adrian Roe of Norsk/id3as, and Brenton Ough of Touchstream, provided valuable insight into the emerging spec. Leschinsky organized the presentation around a three-part framework: See It, Score It, Switch It.

See It means surfacing quality information that already exists at the encoder rather than letting it disappear downstream. Score It means attaching standardized names and numeric values to those quality dimensions. Switch It means carrying those metrics downstream and letting components make switching decisions based on them.

This article is a summary of those discussions. The video is embedded below.

Introduction

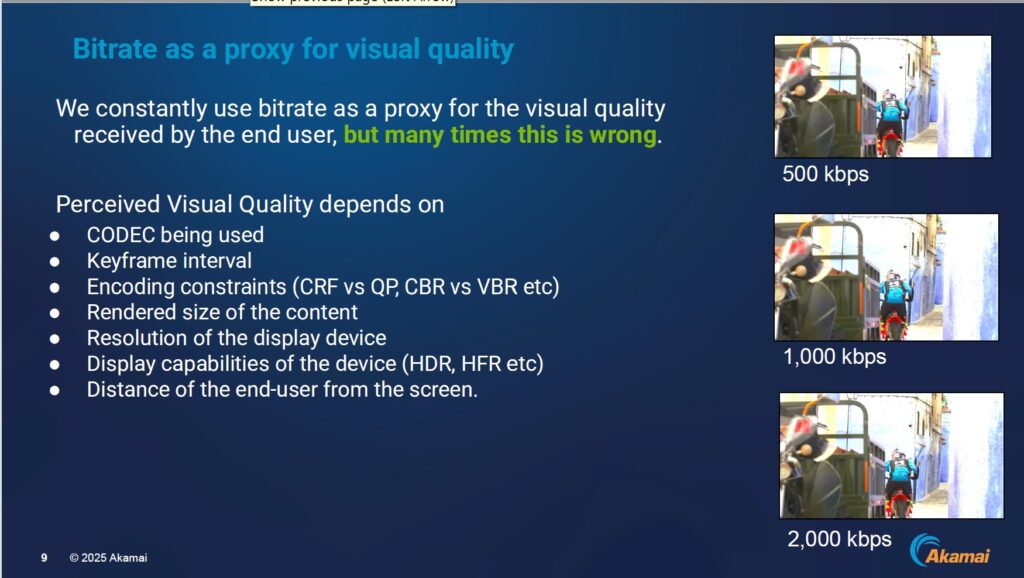

For years, live streaming operations teams have monitored bitrate as their primary indicator of signal health. It is a convenient proxy, but as Will Law, Chief Architect of the Cloud Technology Group at Akamai Technologies, framed the problem, it is often the wrong one.

“We constantly use bitrate as a proxy for the visual quality received by the end user,” Law said, “but many times this is wrong.”

The Problem: Quality Dies at the Encoder

As Law explained, perceived visual quality depends on the codec in use, keyframe interval, encoding constraints such as CRF versus QP, the rendered size of the content on the display, and the viewer’s distance from the screen. Bitrate captures none of this.

Established full-reference metrics such as PSNR (Peak Signal-to-Noise Ratio), SSIM (Structural Similarity Index), and VMAF (Video Multimethod Assessment Fusion) do capture it, but they require a copy of the source the content was encoded from. Because the encoder is the one component that holds both the source and its own encoded output, it is the only component capable of generating accurate quality scores.
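To make the full-reference requirement concrete, here is a minimal PSNR sketch. It is only meant to show that the calculation needs the source frame and the encoded frame side by side, which is exactly the pairing the encoder has and downstream components lack. Representing a frame as a flat list of 8-bit luma values is a simplifying assumption; real tools work on decoded YUV planes, and SSIM and VMAF are considerably more involved.

```python
import math
from typing import Sequence


def psnr(reference: Sequence[int], distorted: Sequence[int], max_value: int = 255) -> float:
    """Full-reference PSNR in dB between two same-sized 8-bit frames,
    given as flat sequences of pixel values."""
    if len(reference) != len(distorted) or not reference:
        raise ValueError("frames must be non-empty and the same size")
    # Mean squared error needs the original source pixels: no source, no score.
    mse = sum((r - d) ** 2 for r, d in zip(reference, distorted)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames: no measurable distortion
    return 10 * math.log10(max_value ** 2 / mse)
```

For example, `psnr([100, 100, 100, 100], [100, 100, 100, 104])` has an MSE of 4 and comes out at roughly 42.1 dB.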

“The encoder already knows a lot about quality,” Leschinsky explained. “Quality of the actual video content often needs to be rediscovered later down the signal chain, and this is extra decoding and tooling, which is generating costs and time and effort and can go wrong.”

Without that information, he noted, operations teams making decisions about switching or quality ratings fall back on whatever metrics are available: network connectivity between the packager and the encoder, ping latency, or simply bitrate. “That does not necessarily tell you something about the quality of the actual signal.”

The Standard: org.svta.mqa in CMSD Headers

Then Will Law explained the genesis of the spec. The Streaming Video Technology Alliance’s QoE Working Group began work on the Transmission of Media Quality Assessment (MQA) data project in January 2025, building on a patent submitted by Dr. Urvashi Pal at Akamai in October 2023 and granted in December 2025 as U.S. Patent No. 12,499,527. Use of the patent is royalty-free for the media industry.

MQA covers both video and audio. The project defines a standardized method for transmitting quality scores using Common Media Server Data (CMSD) response headers, as well as via SEI messages embedded in H.264 and H.265 streams, eRTMP, and DASH Live Ingest.
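CMSD headers such as CMSD-Static are built on HTTP Structured Field Values (RFC 8941), so carrying scores downstream comes down to serializing them into that syntax. The sketch below writes numeric scores as a Structured Field Dictionary. The key names used in the example (`mqa-video`, `psnr`) are hypothetical placeholders; the SVTA spec defines the actual keys and structure under `org.svta.mqa`.

```python
import math
import re
from typing import Mapping, Union

# RFC 8941 dictionary keys: start with a lowercase letter or '*', then
# lowercase letters, digits, '_', '-', '.', or '*'.
_SF_KEY = re.compile(r"[a-z*][a-z0-9_.*-]*\Z")


def serialize_sf_dictionary(members: Mapping[str, Union[int, float]]) -> str:
    """Serialize numeric quality scores as an RFC 8941 Structured Field
    Dictionary, the syntax family CMSD headers use."""
    parts = []
    for key, value in members.items():
        if not _SF_KEY.match(key):
            raise ValueError(f"invalid structured-field key: {key!r}")
        if isinstance(value, bool):
            raise TypeError(f"{key}: booleans are not numeric scores")
        if isinstance(value, int):
            parts.append(f"{key}={value}")  # sf-integer
        elif math.isfinite(value):
            parts.append(f"{key}={value:.3f}")  # sf-decimal: at most 3 fraction digits
        else:
            raise ValueError(f"{key}: scores must be finite")
    return ", ".join(parts)
```

For example, `serialize_sf_dictionary({"mqa-video": 54, "psnr": 47.25})` yields `mqa-video=54, psnr=47.250`, which a packager could place in a `CMSD-Static` response header. Note that uppercase names like `MQA-VIDEO` are not legal Structured Field keys, so the wire format necessarily differs from the prose field names.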

The spec recognizes metrics from multiple sources, including:

- VMAF in standard, mobile, HD, and UHD profiles;

- PSNR; SSIM; IMAX proprietary scores;

- Synamedia’s pVMAF; and

- AWS Elemental’s Media Quality Confidence Score.

It also defines two generic fields, MQA-VIDEO and MQA-AUDIO, which are composite scores designed to reflect actual perceptual quality rather than just encoding fidelity. How those scores are constructed, and why the distinction matters, is covered in the implementation section below.

For contribution workflows where CMSD headers are unavailable, quality data can be embedded directly in the H.264 or H.265 bitstream as SEI messages, with keys indicating whether the data applies at the frame level or is aggregated across a GOP. For live ingest where segments are POSTed rather than fetched, the spec uses CMCD (Common Media Client Data) request headers instead, with nearly identical syntax. The same CMCD approach applies when a player reports quality data back to an analytics service.

In low-latency configurations, response headers are sent before a segment has finished encoding, so per-GOP quality scores aren’t yet known. “To solve that, we invented a scheme where the data for Segment N can actually be communicated in Segment N plus one,” Law explained. “When you receive segment N plus one, it’ll carry the data for the prior segment.”
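The "segment N carries the data for segment N minus one" idea can be sketched as a small buffer on the packager side. The class and method names are hypothetical, and the sketch deliberately returns the score together with the segment number it belongs to rather than guessing at the on-the-wire labeling, which the spec defines.

```python
from typing import Dict, Optional, Tuple


class DeferredScoreCarrier:
    """Low-latency sketch (hypothetical names): the response for segment N
    carries the finished score of segment N-1, because segment N's own
    score is not yet known when its headers are sent."""

    def __init__(self) -> None:
        self._finished: Dict[int, float] = {}

    def segment_finished(self, seq: int, mqa_video: float) -> None:
        # Called once every frame of segment `seq` has been encoded and scored.
        self._finished[seq] = mqa_video

    def score_for_headers(self, seq: int) -> Optional[Tuple[int, float]]:
        # Called when headers for segment `seq` go out, before it is complete.
        # Returns (segment the score belongs to, score), or None if unavailable.
        prev = seq - 1
        if prev in self._finished:
            return prev, self._finished.pop(prev)
        return None
```

The first segment of a stream has nothing to carry, and each finished score is emitted exactly once, on the response that follows it.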

Encoder and Packager Implementation

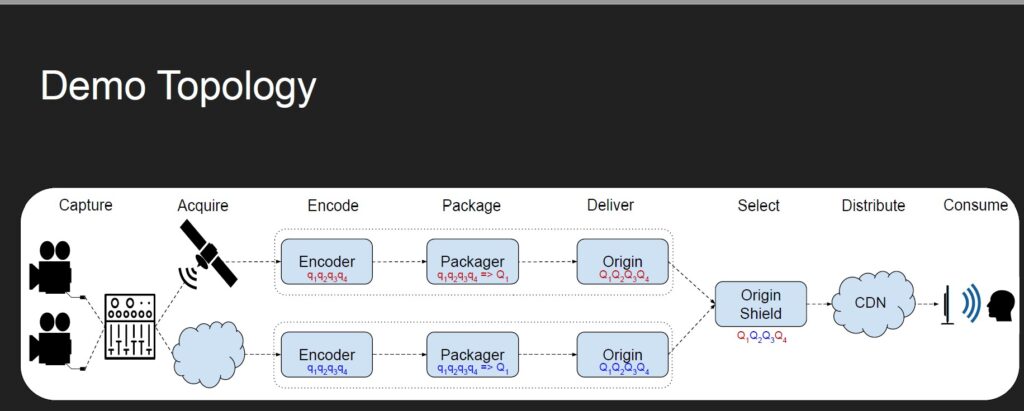

Next up was Norsk’s Adrian Roe, who discussed encoder and packager implementations. Using Norsk Studio, Roe ran a single-source signal through two independent encoders in separate regions. Each encoder tagged quality metrics at the frame level via SEI messages, the packagers aggregated them into per-segment CMSD headers, and those headers were delivered to a quality-aware origin and flowed through a CDN to an end-user player.

According to Roe, the architecture reflects standard industry practice: running redundant parallel pipelines so that if any component in one path fails, the other is already running and can take over instantly. That availability story has been well solved for years.

What CMSD-MQA adds is a second dimension: the origin can now compare quality scores arriving from both paths and switch to the better one even when both are technically healthy. Roe described a scenario where rain degraded one of the signals. As he explained, without MQA scores, the origin has no way to distinguish between them. With them, it sees the quality score on path A drop and automatically serves from path B instead.

Roe’s framing for why the approach matters centered on availability versus quality: “For quite some time, there has been a good story around high availability in a live context by using redundant signals. What CMSD-MQA brings to the table is a similar conversation about how you do that based on quality as well as availability.”

Fidelity Is Not Quality

The most counterintuitive point in Roe’s presentation was that PSNR and VMAF scores can actively mislead in exactly the scenario where quality monitoring matters most: rain fade on a satellite contribution link.

“What you observe with rain fade is that you get pixelated content,” Roe said. “If you simply look at the VMAF scores and the PSNR scores of that content, it’s counterintuitive, but actually you get higher scores, because pixelated content has less information in it. I’ve got the same amount of bandwidth to encode that less information, and so I get a higher fidelity score.”

His vocabulary adjustment: PSNR, VMAF, and SSIM are “fidelity scores and not necessarily quality scores.”

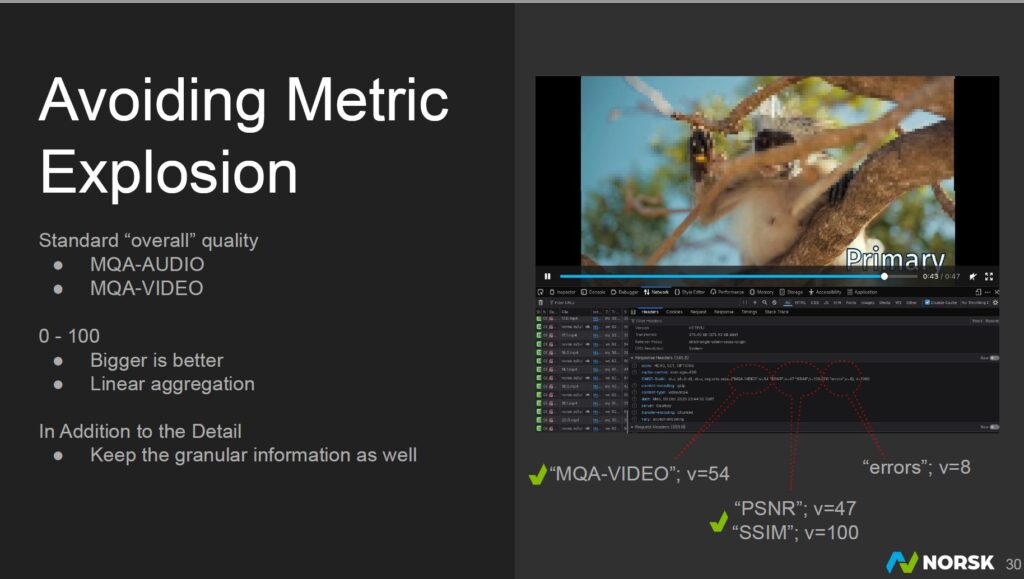

Norsk’s answer is to add filters. Alongside standard reference metrics, Norsk augments each stream with metadata from the transport stream layer (sequence continuity errors), the decoder step (dropped frames, invented or duplicated data), and audio-level analysis (loudness, clipping). Roe demonstrated a segment where MQA-VIDEO scored 54 despite a PSNR of 47 dB and an SSIM of 100, because a TS error count of 8 pulled the composite score down. As Roe put it, “You get both a well-defined standardized decision metric, and you get the granular detail behind it too.”
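To illustrate how error signals can pull a composite below a high fidelity score, here is a hypothetical composite: start from a 0-100 fidelity value and subtract fixed penalties per transport-stream error and per dropped frame. This is not Norsk’s formula, the penalty weights are arbitrary, and it is not intended to reproduce the score of 54 from the demo.

```python
from dataclasses import dataclass


@dataclass
class SegmentSignals:
    fidelity: float      # a fidelity score (e.g. VMAF-like) on a 0-100 scale
    ts_errors: int       # transport-stream continuity errors in the segment
    dropped_frames: int  # frames the decoder dropped or concealed


def composite_mqa_video(s: SegmentSignals,
                        ts_error_penalty: float = 5.0,
                        dropped_frame_penalty: float = 2.0) -> float:
    """Hypothetical composite: fidelity minus penalties for delivery errors,
    clamped to the 0-100 MQA range (larger is better)."""
    score = (s.fidelity
             - ts_error_penalty * s.ts_errors
             - dropped_frame_penalty * s.dropped_frames)
    return max(0.0, min(100.0, score))
```

With these weights, a clean segment with fidelity 95 keeps its 95, while the same fidelity with 8 TS errors drops to 55. The point is the shape of the calculation: a pixelated, error-ridden segment can no longer hide behind a high fidelity number.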

Avoiding Metric Explosion

Because a fully instrumented dual-path workflow can easily surface 50 or more individual metrics per segment, the spec mandates two composite fields, MQA-VIDEO and MQA-AUDIO. Both use a 0-to-100 scale where larger is better, and both are aggregated linearly. These give a packager or origin from a different vendor a single, interoperable number to act on, regardless of which underlying metrics the encoder vendor chose to compute.
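A sketch of rolling frame-level scores up into a segment score, assuming that "linear aggregation" means an arithmetic mean, optionally weighted by frame duration. The function name is hypothetical, and the spec may define the aggregation more precisely.

```python
from typing import Sequence


def aggregate_linear(frame_scores: Sequence[float],
                     frame_durations: Sequence[float] = ()) -> float:
    """Aggregate frame-level MQA scores into one segment score.
    Assumes 'linear aggregation' means a (duration-weighted) arithmetic mean."""
    if not frame_scores:
        raise ValueError("no frame scores to aggregate")
    if not frame_durations:
        return sum(frame_scores) / len(frame_scores)
    if len(frame_durations) != len(frame_scores):
        raise ValueError("need one duration per frame score")
    total = sum(frame_durations)
    if total <= 0:
        raise ValueError("frame durations must sum to a positive value")
    return sum(s * d for s, d in zip(frame_scores, frame_durations)) / total
```

Linearity is what makes the composite interoperable: a duration-weighted mean of segment scores equals the mean over all the underlying frames, so a packager from another vendor can roll scores up to segments, and an analytics system can roll segments up to sessions, without seeing the original frame data.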

“A packager from a different vendor than the encoder will see MQA scores at a frame level; they know exactly how to produce overall quality scores,” Roe said. “They know exactly how to do quality-based decisioning.”

Consistent Segmentation

Quality-based switching works best when the two redundant streams are segmented identically. If a switch is made mid-GOP, or if the incoming and outgoing streams are not aligned to the same frames, the viewer will experience either a repeated sequence or a skip, either of which is more disruptive than the brief quality degradation the switch was meant to avoid.

Norsk addresses this by injecting SEI timecodes at the source and propagating them through both encoder chains, giving each packager a common reference for where to start segments and I-frames. In Roe’s 24-hour test, a frame-by-frame comparison of the two encoder outputs showed a scene transition occurring at exactly the same frame in both chains.
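Here is a sketch of why a shared timecode keeps the two chains aligned: if every encoder decides segment boundaries from the injected source timecode rather than from its own start time, both cut at the same frames. It assumes a constant frame rate and a timecode expressed as an absolute frame count; real SEI timecodes are hours:minutes:seconds:frames and may use drop-frame counting, which this ignores. Function names and the 96-frame segment length are illustrative.

```python
from typing import List


def is_segment_start(timecode_frames: int, frames_per_segment: int) -> bool:
    """A frame opens a new segment (and should be coded as an I-frame) when
    its shared source timecode, counted in frames, falls on a boundary."""
    return timecode_frames % frames_per_segment == 0


def segment_starts(first_frame: int, last_frame: int, frames_per_segment: int) -> List[int]:
    """Boundary frames a chain would cut at within [first_frame, last_frame]."""
    return [tc for tc in range(first_frame, last_frame + 1)
            if is_segment_start(tc, frames_per_segment)]


# Two chains that came up 37 frames apart still cut at identical frames,
# because both derive boundaries from the same injected timecode.
chain_a = segment_starts(1000, 1300, 96)  # 96 frames = 4 s at 24 fps
chain_b = segment_starts(1037, 1300, 96)
```

Both lists come out as `[1056, 1152, 1248]`, so a switch at any segment boundary lands on the same frame in either stream.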

Monitoring and Switching

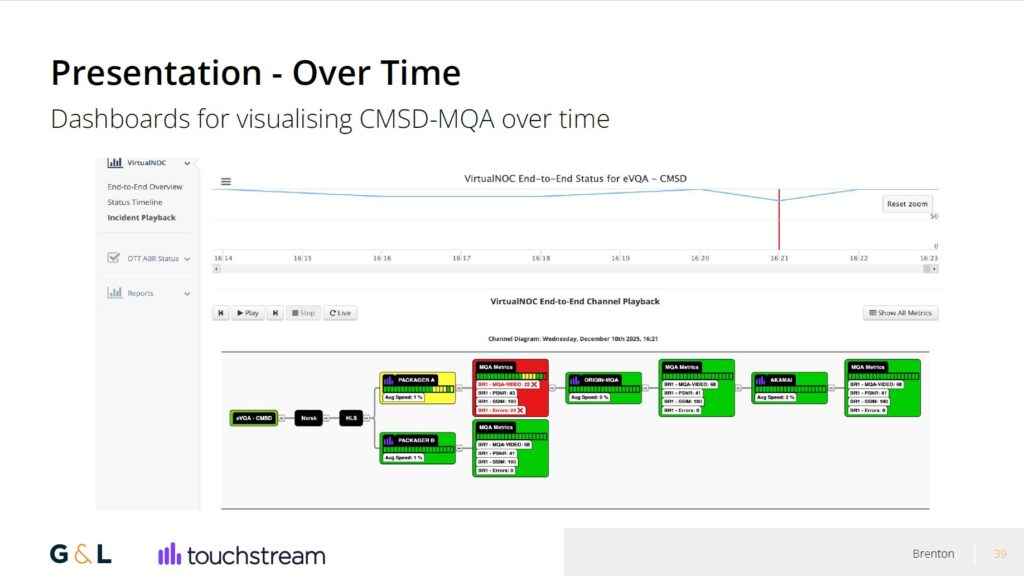

Touchstream’s Brenton Ough demonstrated how CMSD-MQA data surfaces in a monitoring environment. The CMSD-Static header carrying the quality scores is visible in browser developer tools and parseable by any HTTP-aware monitoring agent. Touchstream’s product, which it calls eVQA, decodes the structured header field into human-readable form and collects it from multiple points along the delivery chain simultaneously: both packagers in a redundant A/B configuration, the quality-aware origin, and the CDN.

The live dashboard displays each node in the workflow as a tile with its current MQA-VIDEO, PSNR, SSIM, and error counts. A time-series view plots all four metrics over a rolling window. When a packager’s MQA-VIDEO score drops below a configurable threshold, the tile turns red and an alert is sent to Slack or email, showing which node triggered and the scores at that moment.
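A monitoring agent along these lines can be sketched in two steps: parse the numeric members of a CMSD-Static value, then flag any node whose MQA-VIDEO falls below a threshold. This is not Touchstream’s implementation. The parser handles only simple `key=number` members, not the full RFC 8941 grammar (no parameters, strings, or inner lists), and the `mqa-video` key name is a hypothetical placeholder. The default threshold of 60 echoes the figure Ough mentions.

```python
from typing import Dict, List


def parse_numeric_sf_dictionary(header_value: str) -> Dict[str, float]:
    """Parse a simple Structured Field Dictionary whose members are all
    numbers, e.g. 'mqa-video=54, psnr=47.250'. Not a full RFC 8941 parser."""
    result: Dict[str, float] = {}
    for member in header_value.split(","):
        member = member.strip()
        if not member:
            continue
        key, sep, value = member.partition("=")
        if not sep:
            continue  # a bare key is boolean true in RFC 8941, not a score
        result[key.strip()] = float(value)
    return result


def nodes_below_threshold(readings: Dict[str, str], threshold: float = 60.0,
                          key: str = "mqa-video") -> List[str]:
    """Return alert messages for nodes whose score is below the threshold."""
    alerts = []
    for node, header_value in sorted(readings.items()):
        score = parse_numeric_sf_dictionary(header_value).get(key)
        if score is not None and score < threshold:
            alerts.append(f"{node}: {key}={score:g} below {threshold:g}")
    return alerts
```

Given readings from two packagers, `"mqa-video=42, ts-errors=14"` for A and `"mqa-video=93, ts-errors=0"` for B, only A produces an alert, which is the message that would be forwarded to Slack or email.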

In the demo, Ough introduced 2% packet loss into the Encoder A path to simulate rain fade. The quality-aware origin evaluates MQA-VIDEO scores on every incoming segment and switches delivery to the Encoder B path as soon as B outscores A. “It doesn’t take much to give a significant impact,” Ough noted. The Touchstream dashboard showed Packager A in red with an MQA-VIDEO score of 22 and 24 errors while the origin and CDN continued serving clean content from the B path. After the packet loss was removed, the system waited five segments before switching back to the primary, deliberately avoiding thrashing if quality fluctuates briefly around the threshold.
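The switching behavior described in the demo (fail over as soon as B outscores A, return to A only after a run of healthy segments) is a simple hysteresis rule. The sketch below assumes that "healthy" means A scores at least as well as B for five consecutive segments; the demo doesn’t spell out the exact recovery condition, and the class name is hypothetical.

```python
class QualityAwareSelector:
    """Pick path 'A' (primary) or 'B' per segment from MQA-VIDEO scores.

    Fails over as soon as B outscores A; returns to A only after A has
    matched or beaten B for `recovery_segments` consecutive segments."""

    def __init__(self, recovery_segments: int = 5) -> None:
        self.recovery_segments = recovery_segments
        self.active = "A"
        self._a_good_streak = 0

    def choose(self, score_a: float, score_b: float) -> str:
        if self.active == "A":
            if score_b > score_a:
                self.active = "B"  # immediate failover
                self._a_good_streak = 0
        elif score_a >= score_b:
            # On B: count consecutive segments where A has recovered.
            self._a_good_streak += 1
            if self._a_good_streak >= self.recovery_segments:
                self.active = "A"
                self._a_good_streak = 0
        else:
            self._a_good_streak = 0  # any relapse restarts the count
        return self.active
```

Failing over immediately but failing back slowly is the asymmetry that prevents thrashing: one bad segment is enough to leave a degraded path, but a single good reading is not enough to return to it.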

“You can see the score dropped below 60, down to 42, when the errors went up to 14,” Ough said. “We can track it, we can see exactly what time it happened, we can see if it’s repeated.”

Use Cases Beyond Fault Detection

Next, Law outlined five primary applications for MQA data, each addressing a different point in the delivery chain where quality decisions are currently made blindly.

At the cloud encoder or packager, MQA enables intelligent selection between two contribution sources that may be running at identical bitrates but delivering very different quality. This is exactly the rain fade scenario the demo illustrated. Without quality scores, the packager has no basis for choosing between them. With them, it can switch automatically to the cleaner signal.

At the packager, MQA provides the data needed to embed quality-based switching signals directly into HLS or DASH manifests, giving downstream components a standardized basis for routing decisions without having to independently assess the content.

At the origin shield, the caching layer that sits between the cloud origin and the CDN, MQA enables the same kind of intelligent selection between redundant origin sources. If two origins serve the same content at the same bitrate but one has a lower quality score due to an upstream issue, the origin shield can route around it.

At the player, the use case shifts from switching to auditing. This is where MQA becomes a tool for accountability. A subscriber paying for an HD tier should be receiving HD-quality content. When ads are inserted from third-party servers midstream, there is currently no standardized way to verify that the inserted ad matches the visual quality of the surrounding content, a mismatch that viewers may notice. MQA gives the player a per-segment quality record across the entire session, including ad breaks, so operators can verify what was actually rendered rather than just what bitrate was requested.
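A per-session audit on the player side might look like the sketch below: compare the average MQA-VIDEO of ad segments with that of the surrounding content, and measure how much of the session fell below a tier’s quality floor. It assumes the player can tell which segments are ads (for example, from manifest ad markers) and that per-segment scores were captured. The record type, function names, and any floor value are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class SegmentRecord:
    mqa_video: float  # score carried with the segment, 0-100
    is_ad: bool       # assumed known to the player, e.g. from ad markers


def ad_quality_gap(session: List[SegmentRecord]) -> float:
    """Mean MQA-VIDEO of content segments minus that of ad segments.
    Positive values mean the ads looked worse than the content around them."""
    content = [s.mqa_video for s in session if not s.is_ad]
    ads = [s.mqa_video for s in session if s.is_ad]
    if not content or not ads:
        return 0.0
    return mean(content) - mean(ads)


def share_below(session: List[SegmentRecord], floor: float) -> float:
    """Fraction of segments that fell below a tier's quality floor."""
    if not session:
        return 0.0
    return sum(1 for s in session if s.mqa_video < floor) / len(session)
```

For a session with content segments at 92 and 90 and ad segments at 70 and 72, the gap is 20 points, and half the session sits below a floor of 80. That is the kind of record an operator could use to hold an ad supplier or a subscription tier to account.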

At the analytics provider level, that same per-segment quality record can be aggregated across millions of sessions, giving operators a genuine quality-of-experience picture rather than a bitrate distribution. The difference matters: a session that downloaded consistently at 4 Mbps but suffered rain fade on the contribution side may have looked terrible even though its bitrate numbers looked fine.

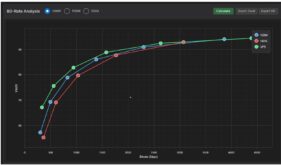

Roe added a longer-term angle: quality scores could eventually enable smarter ABR ladder management. With codecs like AV1 on efficient content, the perceptual difference between adjacent rungs on the bitrate ladder can be negligible. “If you’ve got relatively simple content, the quality difference between your eight-megabit stream and your one-megabit stream is negligible,” he said. “In which case, why send them all?” The implication is that quality-aware routing could allow operators to collapse redundant ladder rungs on the fly, reducing CDN egress costs without degrading viewer experience.
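One way to picture quality-aware ladder pruning is this hypothetical policy: walk the rungs from lowest to highest bitrate and keep a rung only if its score beats the best kept rung below it by some minimum gain. The 3-point default is an arbitrary illustration, not a perceptual threshold from the spec or the workshop.

```python
from typing import List, Tuple


def prune_ladder(rungs: List[Tuple[int, float]],
                 min_gain: float = 3.0) -> List[Tuple[int, float]]:
    """Hypothetical policy: keep a rung only if it adds at least `min_gain`
    MQA points over the highest-quality rung kept below it.

    `rungs` are (bitrate_kbps, mqa_video) pairs in any order."""
    kept: List[Tuple[int, float]] = []
    for bitrate, score in sorted(rungs):  # ascending by bitrate
        if not kept or score - kept[-1][1] >= min_gain:
            kept.append((bitrate, score))
    return kept
```

On simple content where the 1, 3, and 8 Mbps rungs score 93, 94.5, and 95.5, only the 1 Mbps rung survives; on complex content scoring 60, 78, and 92, all three are kept. That matches Roe’s point that whether the upper rungs are worth sending depends on the content, not on the bitrate alone.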

Adoption Status

At the time of the workshop, the SVTA technical publication had not yet been formally released. Norsk/id3as and AWS Elemental MediaLive/MediaPackage v2 were the identified early adopters. Akamai supports constructing arbitrary CMSD headers at the edge but does not automatically add MQA headers. Unified Streaming supports CMSD at the origin level.

Law was direct about the adoption question: “Ask it six months to 12 months after the spec has been published, and then we can try to judge the adoption rate.” His recommendation for operators evaluating vendors in the interim was straightforward: “Ask your encoding vendor when they are going to support it, and you can put that in RFPs as well.”

Leschinsky echoed the point: the standard is still early enough that requiring CMSD-MQA as a mandatory RFP criterion may be premature, but including it as a weighted evaluation factor is reasonable. “It’s definitely something that you want to know about and that you can attach a weight to in answering the quality of a certain RFP quote.” He also noted a broader problem with how quality is specified in tenders: “We often see CDN tenders where it says the quality has to be high all the time. You have to define what is high. Having just something like ‘high quality’ is not helpful. Looking into what you really need and defining that in objective terms that you can already measure is helpful.”

The SVTA technical publication and working group information are available at svta.org.

Streaming Learning Center Where Streaming Professionals Learn to Excel