Recently, video quality metrics have gotten a lot of hate, primarily from codec vendors. For example, yesterday on LinkedIn, respected Beamr CTO Dror Gill wrote, “everyone agrees that subjective (human) testing is the only accurate way to measure true perceptual quality.” After I pointed out industry support for using video quality metrics, Gill graciously changed this to “everyone agrees that subjective (human) testing is the most accurate way to measure true perceptual quality,” which is correct, but far from a hearty endorsement of objective quality metrics.

Why Codec Developers Hate Objective Metrics

While working at MulticoreWare, current Beamr VP of Strategy Tom Vaughan wrote an article entitled, “How to Compare Video Encoders,” which has since been taken down (the whole site, not just the article). So, I’m paraphrasing here, but one key point in the post was that objective quality metrics are not an accurate way to compare codecs or assess comparable codec performance. With this, I agree, at least for now.

Why? Because there’s a disconnect between how older video quality metrics work and how codecs optimize quality. That is, older metrics like Mean Square Error (MSE) and Peak Signal-to-Noise Ratio (PSNR) work by analyzing the mathematical differences between the source and the compressed file. The more differences, the lower the score.
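To make "mathematical differences" concrete, here is a minimal sketch of MSE and PSNR on toy data. The pixel values are made up, and real tools compute this per plane over whole frames, but the arithmetic is the same: every deviation from the source lowers the score, whether or not a viewer would notice it.

```python
import math

def mse(reference, distorted):
    """Mean squared error between two equally sized sequences of pixel values."""
    if len(reference) != len(distorted):
        raise ValueError("frames must have the same number of pixels")
    return sum((r - d) ** 2 for r, d in zip(reference, distorted)) / len(reference)

def psnr(reference, distorted, peak=255):
    """PSNR in dB for the given peak value; infinite when the frames are identical."""
    error = mse(reference, distorted)
    if error == 0:
        return math.inf
    return 10 * math.log10(peak ** 2 / error)

# Toy 8-bit "frames" flattened to lists of luma values (illustrative data only).
source = [52, 55, 61, 66, 70, 61, 64, 73]
light_compression = [52, 56, 61, 65, 70, 62, 64, 73]
heavy_compression = [48, 60, 55, 70, 75, 55, 60, 80]

print("light:", round(psnr(source, light_compression), 2), "dB")
print("heavy:", round(psnr(source, heavy_compression), 2), "dB")
```

Nothing in these two functions knows where in the frame the errors fall or how visible they are, which is the disconnect described above.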

Codec vendors don’t care about scores; they care about subjective quality. Accordingly, there are multiple classes of techniques that improve visual quality but can degrade metric scores because they increase the “differences” between the source and compressed file. One example is adaptive quantization (AQ), which allocates bits to different regions in the video to improve visual appearance.

In his book, Decode to Encode, author Avinash Ramachandran explains, “As we know, our eyes are more sensitive to flatter areas in the scene and are less sensitive to areas with finer details and higher textures. AQ algorithms leverage this to increase the quant offset in higher textured areas and decrease it in flatter areas. Thus, more bits are given to areas where the eyes are sensitive to visual quality impacts.” These types of allocations increase differences and lower metric scores.
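The idea in that quote can be sketched in a few lines. This is not any particular encoder's AQ implementation; the function name, the variance-based texture measure, and the `strength` parameter are all assumptions chosen for illustration. It assigns a positive quantizer offset (coarser quantization, fewer bits) to textured blocks and a negative one to flat blocks.

```python
import math
from statistics import mean, pvariance

def aq_offsets(blocks, strength=1.0):
    """Return one quantizer offset per block.

    Positive offset = coarser quantization (textured block).
    Negative offset = finer quantization (flat block).
    Texture is estimated from the log of the pixel variance (an assumption).
    """
    energy = [math.log2(pvariance(block) + 1.0) for block in blocks]
    average = mean(energy)
    return [strength * (e - average) for e in energy]

# Two toy blocks of luma values (illustrative data only).
flat_sky = [120, 121, 120, 122, 121, 120, 121, 120]
grass = [40, 95, 60, 130, 20, 110, 75, 150]

offsets = aq_offsets([flat_sky, grass])
print("flat block offset:", round(offsets[0], 2))
print("textured block offset:", round(offsets[1], 2))
```

Because the textured block is quantized more coarsely, its pixel-level error grows, and a difference-based metric such as PSNR counts that error in full even though the eye is unlikely to see it.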

Many codecs include tuning mechanisms for PSNR, SSIM, and/or VMAF that disable features like adaptive quantization when the video will be measured via objective metrics. But that negates the benefit of techniques that optimize visual quality. It’s like test-driving a Porsche in rush hour traffic in Manhattan – you really don’t get to test what makes the car special.

Why Codec Evaluators and Producers Love Metrics

Given this, why does MPEG still use metrics to evaluate codecs? Certainly, gross differences in excess of 10-15% accurately reflect codec quality, as tuning for metrics seldom makes more than a 3-5% difference. So, if codec A is 40% more efficient than codec B as measured by PSNR BD-Rate, it’s a much more efficient codec. If the difference is only 5-10%, the metric alone can’t tell you which codec is better.
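For readers who haven't computed a BD-Rate figure, here is a simplified sketch. The standard Bjøntegaard calculation fits a smooth curve (typically cubic) through each codec's rate-quality points; this version swaps in piecewise-linear interpolation to stay dependency-free, so its results will differ slightly from reference tools. The function names and the sample points are hypothetical, and each curve is assumed to have distinct quality values.

```python
import math

def _interp(x, xs, ys):
    """Linearly interpolate y at x; xs must be sorted ascending and distinct."""
    for i in range(len(xs) - 1):
        if xs[i] <= x <= xs[i + 1]:
            t = (x - xs[i]) / (xs[i + 1] - xs[i])
            return ys[i] + t * (ys[i + 1] - ys[i])
    raise ValueError("x is outside the curve's quality range")

def _mean_log_rate(points, lo, hi):
    """Average log10(bitrate) over the quality interval [lo, hi]."""
    pts = sorted(points, key=lambda p: p[1])           # sort by quality
    quality = [p[1] for p in pts]
    log_rate = [math.log10(p[0]) for p in pts]
    knots = sorted({lo, hi, *[q for q in quality if lo < q < hi]})
    area = 0.0
    for a, b in zip(knots, knots[1:]):                  # trapezoid rule
        area += (b - a) * (_interp(a, quality, log_rate) + _interp(b, quality, log_rate)) / 2
    return area / (hi - lo)

def bd_rate(anchor, test):
    """Percent bitrate change of `test` vs `anchor` at equal quality.

    Each curve is a list of (bitrate, quality) pairs. Negative means
    `test` needs less bitrate for the same score.
    """
    lo = max(min(p[1] for p in anchor), min(p[1] for p in test))
    hi = min(max(p[1] for p in anchor), max(p[1] for p in test))
    if hi <= lo:
        raise ValueError("curves do not overlap in quality")
    diff = _mean_log_rate(test, lo, hi) - _mean_log_rate(anchor, lo, hi)
    return (10 ** diff - 1) * 100

# Hypothetical (kbps, PSNR dB) points: codec A hits the same PSNR at 60% of B's bitrate.
codec_b = [(1000, 34.0), (2000, 37.0), (4000, 40.0), (8000, 42.0)]
codec_a = [(600, 34.0), (1200, 37.0), (2400, 40.0), (4800, 42.0)]

print(round(bd_rate(codec_b, codec_a), 1), "% bitrate vs codec B")
```

A result around -40% is the kind of gap that metric tuning cannot explain away; a result in the single digits is within the range that AQ and similar settings can move.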

Why do producers love metrics? Because they are getting better and delivering an increasingly higher correlation with subjective evaluations. I called out MSE and PSNR above because they are fixed algorithms that haven’t changed since they were created, and they don’t consider aspects of the human visual system that impact subjective ratings.

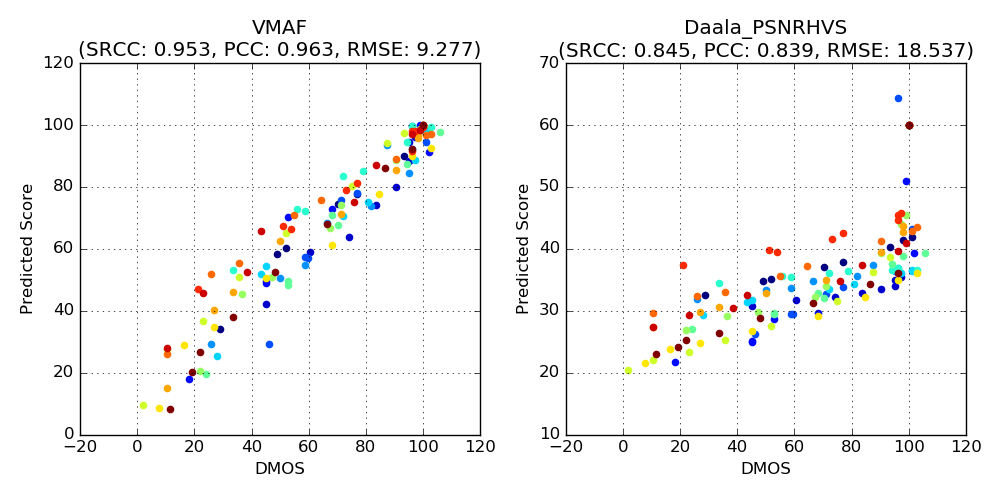

In contrast, VMAF is a trainable algorithm that already correlates well with subjective ratings and will improve over time. This is shown in Figure 1, which compares VMAF on the left to PSNR on the right. Both scatter graphs plot the objective metric scores (vertical axis) against the subjective ratings (horizontal axis) of a test file. A perfect correlation would be a straight line from the lower left to the upper right. VMAF shows a tighter concentration along this line, indicating a closer correlation between the objective scores and the subjective ratings than PSNR, which is all over the map.

Figure 1. VMAF scores (on the left) more accurately correlate with subjective ratings than PSNR.
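The "tightness" of a scatter plot like this is usually summarized with a correlation coefficient. The sketch below computes Pearson (linear) and Spearman (rank-order) correlation between subjective ratings and metric scores. All of the numbers are invented for illustration; they are not taken from Figure 1 or from any real VMAF or PSNR measurement.

```python
import math

def pearson(xs, ys):
    """Pearson linear correlation coefficient."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)

def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))

# Invented data: subjective ratings for six clips and two hypothetical metrics.
subjective = [1.2, 2.0, 2.8, 3.5, 4.1, 4.7]
metric_tight = [22, 38, 55, 68, 83, 94]   # tracks the ratings closely
metric_loose = [31, 29, 38, 33, 41, 36]   # scattered around the trend

print("tight metric:", round(spearman(subjective, metric_tight), 3))
print("loose metric:", round(spearman(subjective, metric_loose), 3))
```

A metric whose points hug the diagonal scores close to 1.0 on both measures; one that is "all over the map" scores noticeably lower.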

Are there outliers that don’t correlate? Certainly, and compressionist extraordinaire Fabio Sonnati wrote a fascinating blog post about one here. But when you’re evaluating millions of encoded segments a week, as Netflix does, it’s better to be close on most evaluations than to not try at all. Besides, Netflix isn’t comparing codecs or even encoding parameters; they’re comparing the same codec using the same encoding options at different resolutions and bitrates. Visual optimizations are enabled in all encodes, which tends to offset any related differential.

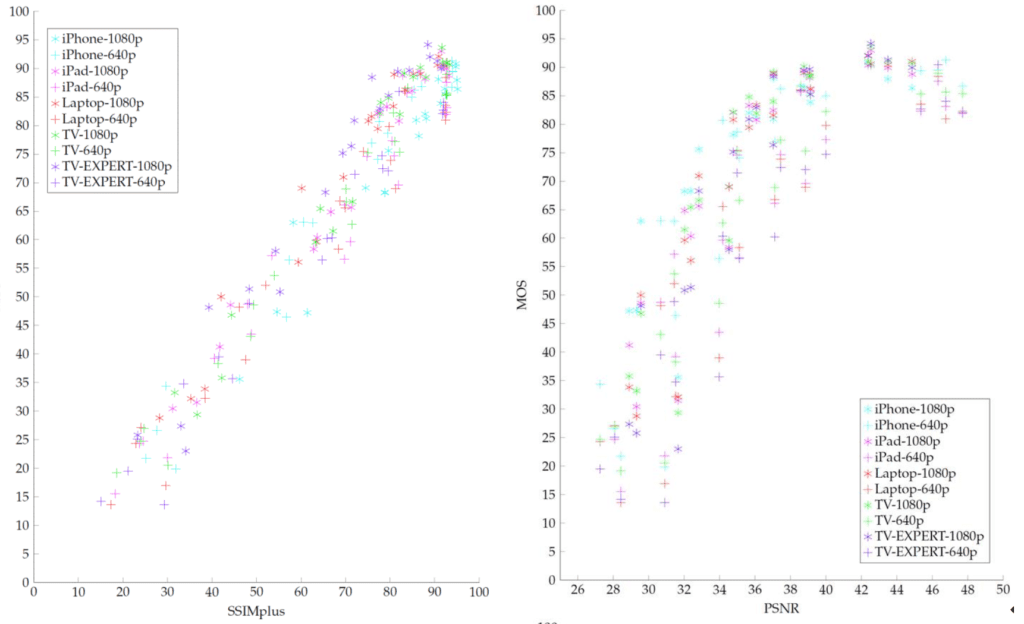

As shown in Figure 2, SSIMWAVE’s SSIMPLUS metric (on the left) enjoys a similar advantage over PSNR. Though SSIMPLUS doesn’t use formal machine learning, it’s continually being advanced by mathematicians on staff and is also continually improving.

Figure 2. SSIMPLUS on the left, PSNR on the right.

Beyond Netflix’s encoding farm, want to know another tool that relies on objective metrics? Beamr’s own video optimization tool. Here’s a snippet from a white paper you can download here (emphasis supplied).

During the process, the original compressed frames are decoded, and then re-encoded using more aggressive compression parameters, delivering a more compact frame. This frame is then decompressed, and compared to the original using Beamr’s Quality Measure Analyzer. This returns a score that numerically represents whether the frame is perceptually identical to the original frame…If the score is within a certain threshold, Beamr Video accepts the frame and moves to the next.

Is Beamr’s own metric 100% accurate? Unlikely, but the metric is comparing encodes using identical parameters save QP value, again negating the effect of AQ and similar settings. The correlation with subjective ratings is undoubtedly very high, proving that used the correct way, objective metrics provide unquestionable value.
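The general pattern in that white paper excerpt, re-encode more aggressively, score against the original, and accept if the score clears a threshold, can be sketched as a simple loop. This is not Beamr's algorithm or API. The function names, the toy quantizer standing in for an encoder, the toy quality score, and the rule of stopping at the first failing QP are all assumptions made for illustration.

```python
def optimize_frame(frame, encode, decode, quality, threshold, qp_candidates):
    """Return (qp, payload) for the most aggressive QP that still meets `threshold`.

    Assumes quality falls as QP rises, so the search stops at the first failure.
    Returns None if even the gentlest candidate fails.
    """
    best = None
    for qp in sorted(qp_candidates):
        payload = encode(frame, qp)
        score = quality(frame, decode(payload))
        if score < threshold:
            break
        best = (qp, payload)
    return best

# Toy stand-ins (hypothetical): a uniform quantizer and a 0-100 score.
def toy_encode(frame, qp):
    return (qp, [value // qp for value in frame])

def toy_decode(payload):
    qp, levels = payload
    return [level * qp + qp // 2 for level in levels]

def toy_quality(original, decoded):
    mean_abs_error = sum(abs(o - d) for o, d in zip(original, decoded)) / len(original)
    return 100 - mean_abs_error

frame = [10, 23, 37, 52, 64, 79, 91, 105]
result = optimize_frame(frame, toy_encode, toy_decode, toy_quality,
                        threshold=98, qp_candidates=[1, 2, 4, 8, 16])
print("accepted QP:", result[0])
```

Because only QP changes between candidates, the metric is comparing like with like, which is the narrow kind of test case where objective scores are most trustworthy.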

Beyond these current uses, understand that via machine learning and/or process improvements, video quality metrics will continue to get more accurate. It’s silly to think that we can trust machine learning to drive a car but not to assess subjective video quality at some point in the future.

How Do I Recommend Using Objective Metrics?

So, what’s the net/net? When comparing codecs, I use objective metrics to identify problem spots in the videos, but always supplement them with subjective ratings using a service called Subjectify.us. You can see an example of this in this article. The objective and subjective scores generally correlate but there are usually some differences.

When using metrics for production, perfect is the enemy of the good. No metric is correct 100% of the time, whether it’s VMAF in Netflix’s encoding farm or Beamr’s Quality Measure Analyzer in Beamr Optimizer. But both assess very limited test cases and both produce significant benefits.

When evaluating encoding parameters within the same codec, I use metrics as a guide, but always with subjective verification, usually with the Moscow State University Video Quality Measurement Tool. Basically, my procedure is this:

1. Compute the number.

2. View the results plot to identify any key differences or any transient issues (image below).

3. View the actual frames to determine if differences are noticeable.

4. Load significant regions as video files to determine if the typical viewer would notice them.
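Steps 1 and 2 of this procedure can be approximated in code when all you have is a list of per-frame scores. The sketch below computes the overall number and then flags runs of frames that fall well below it, which are the regions worth viewing in steps 3 and 4. The function name, the `drop` margin, and the sample scores are hypothetical, and this is not how the Moscow State University tool works internally.

```python
from statistics import mean

def find_problem_regions(frame_scores, drop=10.0):
    """Return (overall_mean, regions).

    `regions` is a list of (first_frame, last_frame) index pairs, inclusive,
    where the per-frame score is more than `drop` points below the mean.
    """
    overall = mean(frame_scores)
    cutoff = overall - drop
    regions, start = [], None
    for index, score in enumerate(frame_scores):
        if score < cutoff:
            if start is None:
                start = index                      # a low-scoring run begins
        elif start is not None:
            regions.append((start, index - 1))     # the run just ended
            start = None
    if start is not None:                          # run extends to the last frame
        regions.append((start, len(frame_scores) - 1))
    return overall, regions

# Hypothetical per-frame scores with one transient dip.
scores = [94, 95, 93, 94, 71, 68, 73, 94, 95, 93, 92, 94]
overall, regions = find_problem_regions(scores)
print("overall score:", overall)
print("frames to inspect:", regions)
```

The flagged ranges only tell you where to look. Whether the dip matters is still a judgment made by watching the frames and the video, as steps 3 and 4 describe.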

This video, from my course on Computing and Using Video Quality metrics, goes through the process.

When comparing codecs, I use metrics to identify transient problem areas in the videos and other regions of interest. I always recommend using subjective measurements to supplement these efforts.

Streaming Learning Center Where Streaming Professionals Learn to Excel
