Overview: When measuring video quality with metrics like VMAF you should measure and report consistency (transient quality and standard deviation) as well as mean quality. If you’re interested in the quality consistency of AV1 codecs and typical configuration options, you can attend a webinar ($149) or take a course on AV1 production that I will release by the end of September.

Most of the time, when you see a video quality metric like VMAF or PSNR cited in a white paper or codec comparison, you see a single score or multiple scores over a test range defined by QP values or bitrates. However, a single number can often obscure or misrepresent codec quality, particularly with 5- to 10-second test files.

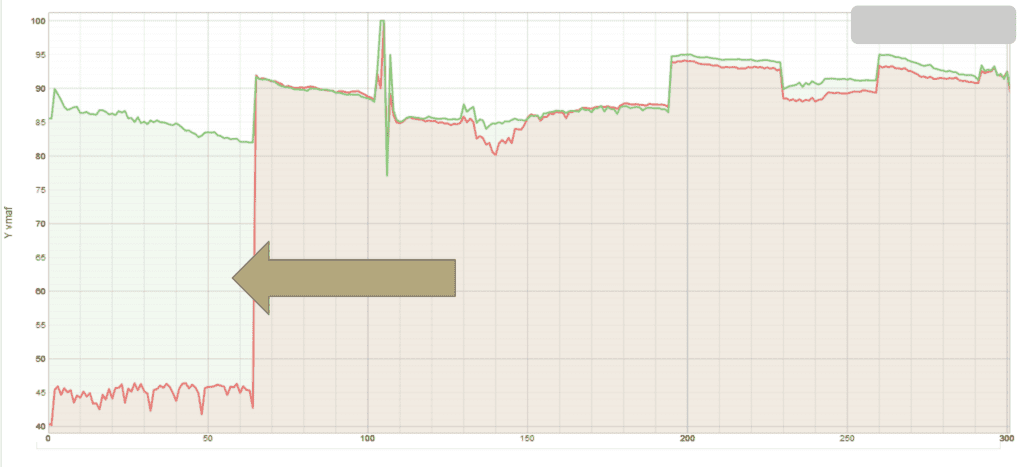

For example, during a recent comparison of AV1 codecs for Streaming Media Magazine, I noticed the pattern shown above with one of the codecs (this is from the Results Plot of the Moscow State University Video Quality Measurement Tool). With this codec, scores up to the first I-frame were abnormally depressed. In a 90-minute video, this quality deficit would be irrelevant, lost in the fade from black. In a 10-second clip, however, it can dramatically lower the overall score. Had I not checked this visualization, I wouldn’t have noticed the issue. Note that the Streaming Media article isn’t online yet; I’ll link to it once it is.

To address this in the article, I added four seconds to the start of each test clip, encoded as normal, and then trimmed those four seconds before measuring quality. The workaround took several days, but without it, the VMAF scores would have misrepresented real-world codec quality. Until the codec developer fixes this problem, any codec comparison that doesn’t account for it may misrepresent that codec's quality.
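The trimming step can be sketched as an FFmpeg command built in Python. This is a minimal sketch, not necessarily the process used for the article: it assumes the padded clip has already been encoded, that the padding equals round(seconds × fps) frames, and that a decoded .y4m file is an acceptable input for your quality tool. The `trim_command` helper and the file names are hypothetical; `trim` and `setpts` are standard FFmpeg filters.

```python
import shlex


def trim_command(padded_encode: str, output_y4m: str,
                 pad_seconds: float, fps: float) -> list[str]:
    """Build an ffmpeg command that decodes a padded encode and drops the padding."""
    pad_frames = round(pad_seconds * fps)  # e.g. 4 s at 30 fps -> 120 frames
    # Keep frames from pad_frames onward, then reset timestamps to start at zero.
    video_filter = f"trim=start_frame={pad_frames},setpts=PTS-STARTPTS"
    return [
        "ffmpeg", "-y",
        "-i", padded_encode,
        "-vf", video_filter,
        "-an",        # audio isn't needed for quality measurement
        output_y4m,   # .y4m extension -> uncompressed YUV4MPEG output
    ]


if __name__ == "__main__":
    cmd = trim_command("clip_padded_av1.mkv", "clip_trimmed.y4m", pad_seconds=4, fps=30)
    print(shlex.join(cmd))
```

The trimmed output would then be measured against the original, unpadded source clip, so both sides have the same frame count.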

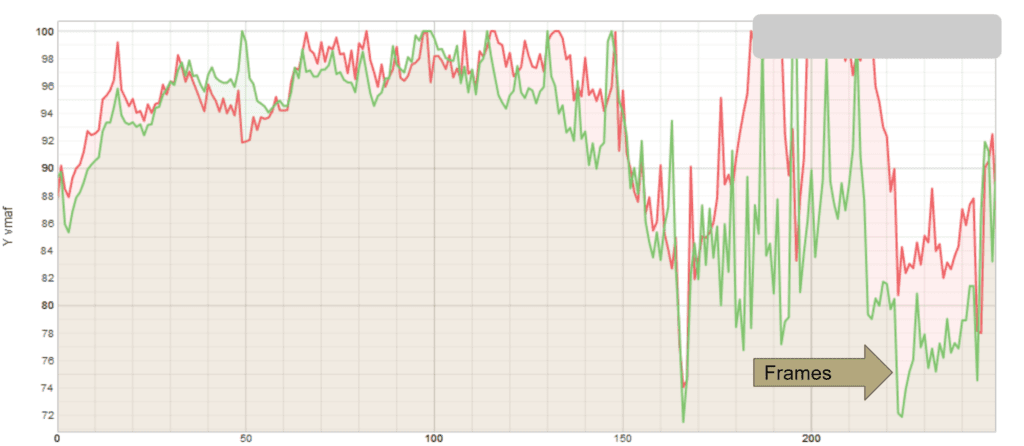

Of course, these transient issues can occur anywhere within a clip. Consider the graph shown above. Here, the overall quality difference between the codecs is about 2 VMAF points, which most of the time would be irrelevant. However, because of the significant quality drops within the clip, the codec represented by the green line could degrade the viewer’s quality of experience more than an overall 2-point VMAF difference would suggest. Again, a single score doesn’t adequately represent real-world quality.

How should a researcher or video quality metric practitioner account for these types of issues? By measuring and reporting quality consistency.

Measuring Quality Consistency by Codec

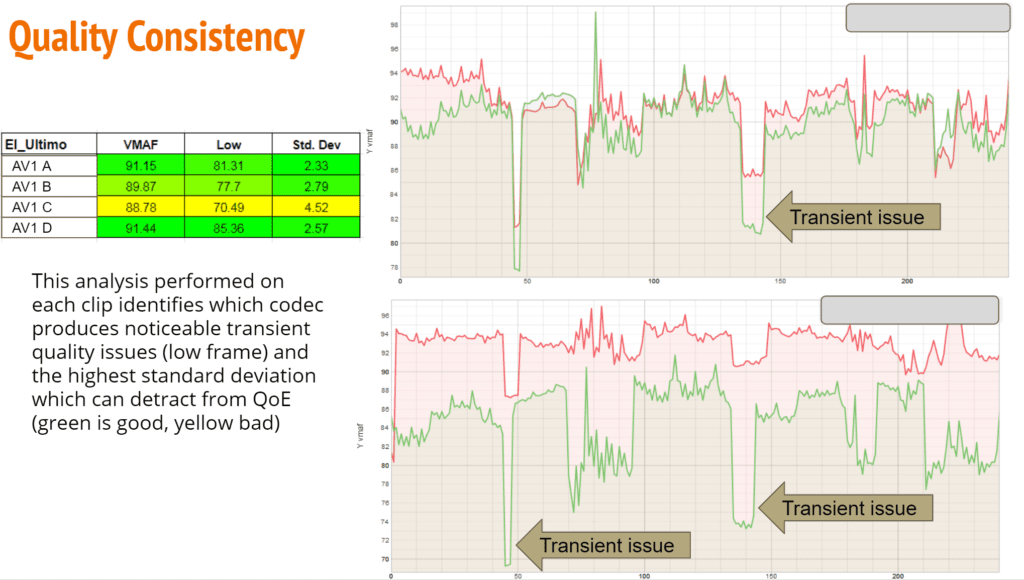

In the Streaming Media article, I compared four AV1 codecs and reported overall mean VMAF and SSIMPLUS scores for the sixteen test clips. Unfortunately, there wasn’t room for quality consistency data, which I measured with the following three data points:

- Mean VMAF

- Low-frame VMAF, or the lowest VMAF score for any frame, which is a measure of transient quality

- Standard deviation, which measures the quality variability in the stream
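Given per-frame scores, all three data points are simple to compute. The sketch below assumes the JSON log layout written by recent libvmaf versions (a `frames` list whose entries hold a `metrics` dictionary with a `vmaf` key); check your own log before relying on it. It uses the population standard deviation; some tools may use the sample version, so small differences from MSU or Hybrik figures are possible. The function names are hypothetical.

```python
import json
import statistics


def per_frame_vmaf(log_text: str) -> list[float]:
    """Pull per-frame VMAF scores out of a libvmaf JSON log (layout assumed)."""
    log = json.loads(log_text)
    return [frame["metrics"]["vmaf"] for frame in log["frames"]]


def consistency_stats(scores: list[float]) -> dict[str, float]:
    """Mean, low-frame (minimum), and standard deviation of per-frame scores."""
    return {
        "mean": statistics.fmean(scores),
        "low_frame": min(scores),              # transient quality
        "std_dev": statistics.pstdev(scores),  # variability across the stream
    }


if __name__ == "__main__":
    # Tiny hand-made log for illustration only, not measured data.
    sample_log = json.dumps({"frames": [
        {"frameNum": 0, "metrics": {"vmaf": 95.0}},
        {"frameNum": 1, "metrics": {"vmaf": 94.0}},
        {"frameNum": 2, "metrics": {"vmaf": 60.0}},
    ]})
    print(consistency_stats(per_frame_vmaf(sample_log)))
```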

These data points are all supplied by the MSU tool and by services like the Hybrik Video Quality Analyzer. As you can see above, for this particular clip codec C performs poorly, with the lowest mean and low-frame scores and the highest standard deviation. Comparing codecs A and D, D had a significantly higher low-frame value but trailed A slightly in standard deviation. The graphs augment these numbers with visual input.

Though these results didn’t make it into the Streaming Media article, I’ll present some of this data in a webinar I’m hosting on September 24 (the webinar costs $149). If you’re interested in the quality consistency of the four AV1 codecs and how it affects QoE, you can learn about it at that webinar, or later in a course on producing AV1 video that should be released by the end of September (see the Streaming Learning Center site for all courses).

Quality Consistency and AV1 Configuration Options

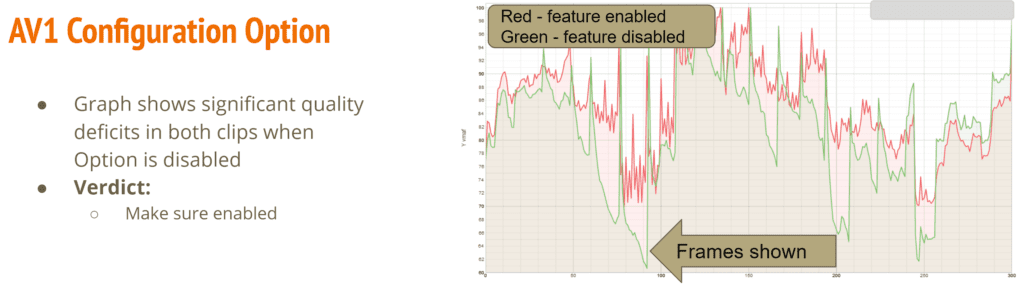

While producing the data for the Streaming Media article, I experimented with many of the configuration options available for each codec, and quality consistency played a major role in deciding which to enable or disable. Specifically, I tested each option by encoding two test clips with and without it enabled, or with different settings or strengths. Then I measured the impact on encoding time, overall quality, low-frame quality, and standard deviation.
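One way to record these with/without tests is to store the four measurements for each run and compute the change caused by enabling an option. This is a hypothetical helper, not part of any codec or tool; how you collect the timings and scores is up to your own workflow.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """Measurements from one encode of one test clip (hypothetical structure)."""
    encode_seconds: float
    mean_vmaf: float
    low_frame_vmaf: float
    std_dev: float


def option_deltas(without_opt: RunResult, with_opt: RunResult) -> dict[str, float]:
    """Change caused by enabling an option (with minus without).

    Higher is better for mean and low-frame VMAF; lower is better for
    encoding time and standard deviation.
    """
    time_change = with_opt.encode_seconds - without_opt.encode_seconds
    return {
        "encode_time_pct": 100.0 * time_change / without_opt.encode_seconds,
        "mean_vmaf": with_opt.mean_vmaf - without_opt.mean_vmaf,
        "low_frame_vmaf": with_opt.low_frame_vmaf - without_opt.low_frame_vmaf,
        "std_dev": with_opt.std_dev - without_opt.std_dev,
    }
```

Since each option was tested on two clips, you would compute these deltas per clip and compare them side by side; a near-zero mean delta paired with a large low-frame or standard-deviation delta is exactly the case a single score would hide.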

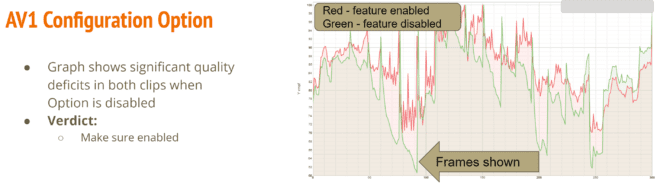

Again, while the overall scores were often close, including or excluding a particular feature often made a significant difference in quality consistency. One look at the graph above makes it clear that you definitely want this feature enabled. During the webinar, I’ll review common options for each codec and present a recommended command string for each.

The Bottom Line

It’s great that tools like FFmpeg can measure overall VMAF (or PSNR/SSIM) quality. In a production environment, where you’re adjusting known quantities like data rate or resolution to find the optimal output parameters, a single score works just fine. But when you’re comparing codecs or experimenting with encoding options, you should also explore quality consistency; otherwise, you’re getting an incomplete picture of how they might impact viewer QoE.
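FFmpeg can also write per-frame scores, which is what consistency measurements need. A minimal sketch, assuming an FFmpeg build with libvmaf enabled and distorted and reference files that share the same resolution and frame rate; `vmaf_log_command` and the file names are hypothetical. In FFmpeg's libvmaf filter, the first input is the distorted video and the second is the reference.

```python
import shlex


def vmaf_log_command(distorted: str, reference: str, log_path: str) -> list[str]:
    """Build an ffmpeg command that writes per-frame VMAF scores to a JSON log."""
    # First input = distorted (encoded) video, second input = reference.
    # Colons in log_path would need escaping inside the filter string.
    filter_graph = f"[0:v][1:v]libvmaf=log_path={log_path}:log_fmt=json"
    return [
        "ffmpeg",
        "-i", distorted,
        "-i", reference,
        "-lavfi", filter_graph,
        "-f", "null", "-",  # discard the video output; only the log matters
    ]


if __name__ == "__main__":
    cmd = vmaf_log_command("encoded.mp4", "source.y4m", "vmaf.json")
    print(shlex.join(cmd))
    # To execute: subprocess.run(cmd, check=True), with ffmpeg on your PATH.
```

The resulting JSON log contains one entry per frame, from which the mean, low-frame score, and standard deviation can be computed.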

Streaming Learning Center Where Streaming Professionals Learn to Excel