HLS just got really complicated.

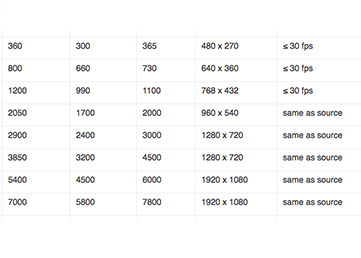

On September 9, 2017, Apple updated their HLS authoring requirements to include HDR and 4K recommendations. The updated ladder is shown below.

Here are some of the new/updated requirements for HEVC and HDR. There are more in the spec, but these are the ones I wanted to comment on.

1.6. Profile, Level, and Tier for HEVC MUST be less than or equal to Main10 Profile, Level 5.0, High Tier.

This makes sense. It means you can encode using either the Main or the Main10 profile.
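To make rule 1.6 concrete, here's a minimal Python sketch that checks an HEVC codec string, like those carried in an HLS CODECS attribute, against the Main10 / Level 5.0 / High Tier ceiling. It assumes the ISO/IEC 14496-15 string layout (for example, hvc1.2.4.L150.B0), where the second field is general_profile_idc (1 = Main, 2 = Main10), the L or H prefix of the fourth field is the tier (Main or High), and the number after it is general_level_idc, which is 30 times the level (150 = Level 5.0). The function name is hypothetical, and the sketch ignores non-zero profile spaces.

```python
ALLOWED_PROFILES = {1, 2}   # general_profile_idc: 1 = Main, 2 = Main10
MAX_LEVEL_IDC = 150         # general_level_idc is 30 x level, so 150 = Level 5.0
ALLOWED_TIERS = {"L", "H"}  # L = Main tier, H = High tier; both are <= High


def hevc_codec_within_apple_limit(codec: str) -> bool:
    """Return True if an HEVC codec string is at or below Main10, Level 5.0, High Tier.

    Assumes the ISO/IEC 14496-15 layout, e.g. "hvc1.2.4.L150.B0", with a
    profile space of 0 (no A/B/C prefix on the profile field).
    """
    parts = codec.split(".")
    if len(parts) < 4 or parts[0] not in ("hvc1", "hev1"):
        raise ValueError(f"not an HEVC codec string: {codec!r}")
    profile_idc = int(parts[1])
    tier, level_idc = parts[3][0], int(parts[3][1:])
    return (
        profile_idc in ALLOWED_PROFILES
        and tier in ALLOWED_TIERS
        and level_idc <= MAX_LEVEL_IDC
    )


print(hevc_codec_within_apple_limit("hvc1.2.4.L150.B0"))  # Main10, Level 5.0: True
print(hevc_codec_within_apple_limit("hvc1.2.4.L153.B0"))  # Level 5.1: False
```

Since a Main10 decoder can also decode Main, the check accepts both profiles and rejects anything else, such as the range extensions profiles.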

1.7. High Dynamic Range (HDR) HEVC video MUST be HDR10 or DolbyVision.

This also makes sense, since it means Apple's players are compatible with both formats.

1.20. For HDR content, frame rates less than or equal to 30 fps SHOULD be provided.

Apple seems to want to push 60 fps down our throats: another requirement dictates that live/linear video “SHOULD be 60 or 59.94 fps,” while another states, “You SHOULD de-interlace 30i content to 60p instead of 30p.” For HDR content, however, you should provide at least one set of streams at 30 fps or slower.

1.23. If HDR streams are provided, then they SHOULD be provided at all resolutions.

This is new and significant. Some producers have postulated that a mixed H.264/HEVC ladder would be the most efficient, say with H.264 at 720p and lower, and HEVC at 1080p and higher. Apple clearly doesn’t think so.

Note that in the sample presentations supplied by Apple in their HTTP Live Streaming Examples, the HEVC stream includes nine HEVC variants and nine H.264 variants. So if you’re going to provide HEVC with HLS the Apple way, you should probably do the same.
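Rules 1.20 and 1.23 are easy to check mechanically. The Python sketch below uses a hypothetical Variant record (frame height, frame rate, and whether the rung is HDR) and reports which of the two rules a ladder breaks. It reads 1.23 as "every resolution in the ladder is also offered in HDR," which is one interpretation rather than Apple's wording, and the example ladder is made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """Hypothetical description of one rung in an HLS ladder."""
    height: int   # e.g. 1080 for 1920x1080
    fps: float    # output frame rate
    hdr: bool     # True for HDR10/Dolby Vision, False for SDR


def check_hdr_rules(ladder: list[Variant]) -> list[str]:
    """Return violations of rules 1.20 and 1.23 (an empty list if none)."""
    hdr = [v for v in ladder if v.hdr]
    if not hdr:
        return []  # these rules only apply when HDR streams are provided
    problems = []
    # 1.20: at least one HDR variant at 30 fps or lower
    if not any(v.fps <= 30 for v in hdr):
        problems.append("1.20: no HDR variant at or below 30 fps")
    # 1.23: HDR offered at every resolution that appears in the ladder
    missing = {v.height for v in ladder} - {v.height for v in hdr}
    if missing:
        problems.append(f"1.23: no HDR variant at heights {sorted(missing)}")
    return problems


# Made-up ladder: SDR at four sizes, HDR only at the top two, all at 60 fps.
ladder = [Variant(h, 30, False) for h in (432, 720, 1080, 2160)]
ladder += [Variant(h, 60, True) for h in (1080, 2160)]
for problem in check_hdr_rules(ladder):
    print(problem)
```

Both rules fire on this ladder: the HDR rungs are all 60 fps, and there's no HDR at 432p or 720p, which is exactly the mixed ladder Apple appears to discourage.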

1.24. For backward compatibility, SDR streams MUST be provided. (See also 6.16)

So, if you’re going to supply HDR, you must supply SDR streams, though it’s not clear whether these must be HEVC or whether H.264 will suffice. The referenced section 6.16 states that “For backward compatibility SDR trick play streams MUST be provided.” Note that section 6.1 states, “I-frame playlists (EXT-X-I-FRAME-STREAM-INF) MUST be provided to support scrubbing and scanning UI.”

So, those supporting HDR should consider supplying three sets of streams (H.264 SDR, HEVC SDR, and HEVC HDR) and three matching sets of I-frame playlists.
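To show what three sets of streams plus three sets of I-frame playlists might look like, here's a Python sketch that writes a skeletal multivariant (master) playlist with one 1080p rung per set. The URIs and bandwidths are placeholders, not values from Apple's ladder; the codec strings assume H.264 High Profile Level 4.0 (avc1.640028) and HEVC Main10 Level 4.0 (hvc1.2.4.L120.B0); and VIDEO-RANGE=PQ marks the HDR10 set. The tags and attributes come from the HLS specification (RFC 8216, with VIDEO-RANGE added in its revised draft), so verify them against the current version before relying on this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StreamSet:
    """One of the three parallel sets of streams (placeholder values)."""
    name: str         # used to build placeholder URIs
    codecs: str       # RFC 6381 codec string
    video_range: str  # "SDR" or "PQ" (HDR10)
    bandwidth: int    # peak bits per second for the 1080p rung (placeholder)


SETS = [
    StreamSet("h264_sdr", "avc1.640028", "SDR", 8_000_000),
    StreamSet("hevc_sdr", "hvc1.2.4.L120.B0", "SDR", 6_000_000),
    StreamSet("hevc_hdr", "hvc1.2.4.L120.B0", "PQ", 6_000_000),
]


def master_playlist(sets: list[StreamSet]) -> str:
    lines = ["#EXTM3U", "#EXT-X-INDEPENDENT-SEGMENTS"]
    for s in sets:
        # Regular playback variant; 29.97 fps also satisfies the HDR <= 30 fps rule
        lines.append(
            f'#EXT-X-STREAM-INF:BANDWIDTH={s.bandwidth},CODECS="{s.codecs}",'
            f"RESOLUTION=1920x1080,FRAME-RATE=29.970,VIDEO-RANGE={s.video_range}"
        )
        lines.append(f"{s.name}/1080p/prog_index.m3u8")
    for s in sets:
        # Matching I-frame playlist for scrubbing and scanning;
        # bandwidth // 10 is an arbitrary placeholder
        lines.append(
            f"#EXT-X-I-FRAME-STREAM-INF:BANDWIDTH={s.bandwidth // 10},"
            f'CODECS="{s.codecs}",RESOLUTION=1920x1080,'
            f'VIDEO-RANGE={s.video_range},URI="{s.name}/1080p/iframe_index.m3u8"'
        )
    return "\n".join(lines) + "\n"


print(master_playlist(SETS), end="")
```

A full presentation would repeat each EXT-X-STREAM-INF and I-frame entry for every rung in the ladder, which is how you end up with the dozens of variants in Apple's examples.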

About the Encoding Ladder

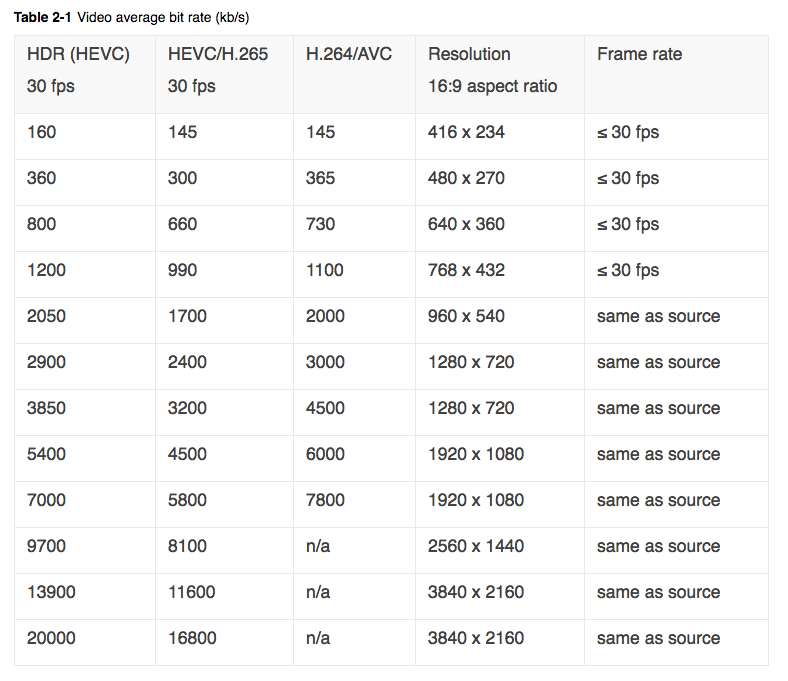

Here’s the updated encoding ladder.

Table 1. Apple’s updated HLS encoding ladder.


For the first time, Apple indicated that their data rate suggestions are not set in stone, stating in a note below the encoding ladder, “the above bitrates are initial encoding targets for typical content delivered via HLS. We recommend you evaluate them against your specific content and encoding workflow then adjust accordingly.” Another note states that “24fps HEVC content should use bit rates reduced by about 20% from the values above.” Both comments make perfect sense.
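Applying the 24 fps note is simple arithmetic: multiply each HEVC rung by roughly 0.8. Here's a minimal Python sketch; the function name and the 6,000 kbps input are hypothetical examples, not values from Table 1, and the 20% default is only Apple's "about 20%" approximation.

```python
def hevc_24fps_target(kbps: float, reduction: float = 0.20) -> int:
    """Scale an HEVC ladder bitrate (kbps) for 24 fps content.

    Apple's note says "about 20%", so the default reduction is an
    approximation you may want to tune per title.
    """
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return round(kbps * (1 - reduction))


print(hevc_24fps_target(6000))  # hypothetical 6,000 kbps rung -> 4800
```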

Finally, apropos of my comment above about Apple pushing 60p encoding down our throats, the specification states, “30i source content is considered to have a source framerate of 60 fps.”
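Apple's rule amounts to counting fields rather than frames: each interlaced frame carries two fields, and de-interlacing each field to a full frame doubles the rate. A minimal Python sketch, using exact fractions so 29.97i maps to 59.94p without rounding; the function and constant names are hypothetical.

```python
from fractions import Fraction

NTSC = Fraction(1000, 1001)  # 29.97 fps is 30 * 1000/1001


def source_fps(frames_per_second: Fraction, interlaced: bool) -> Fraction:
    """Effective source frame rate under the rule that 30i counts as 60 fps."""
    # Two fields per interlaced frame, each de-interlaced to a full frame
    return frames_per_second * 2 if interlaced else frames_per_second


print(source_fps(30 * NTSC, interlaced=True))       # 60000/1001, i.e. 59.94p
print(source_fps(Fraction(30), interlaced=False))   # 30
```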

I detail why I don’t like 60 fps streaming, with examples, in my blog post Apple Makes Sweeping Changes to HLS Encoding Recommendations. The CliffsNotes version is that 60 fps degrades image quality at some bitrates, as you can see in that post. Fortunately, few producers shoot at 30i any longer, and 30p is still 30p.

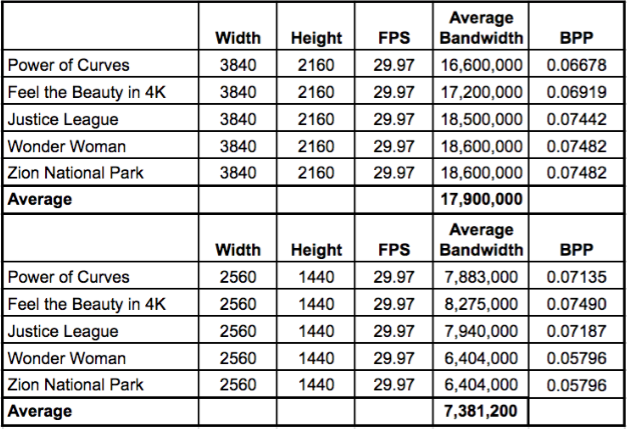

How do Apple’s 2K/4K recommendations look? Below is some comparative data from YouTube, all in VP9 format. Assuming similar quality between HEVC and VP9, Apple’s recommendations are comparable, though the 2160p data rate might be a bit high.

Table 2. Data rates for 2K/4K from YouTube.


Why are the HDR data rates so much higher than the SDR HEVC data rates in the Apple spec? That’s an interesting one. At first glance, you might think the increase relates to the move from 8-bit SDR HEVC to 10-bit HDR. However, in my article Netflix Talks Dolby Vision and HDR10, Netflix indicated that such a jump wasn’t needed. I asked:

Ozer: HDR is 10-bit. Does this mean to get the equivalent quality of 4K@16Mbps in 8-bit, you need to encode to 20Mbps?

Netflix: Our CE4 profiles are all 10-bit whether Rec. 709 or HDR, and our 4K bitrate maxes at 16Mbps. Moving from 8-bit to 10-bit does not significantly impact bitrate.

Nor, according to this exchange, is Apple’s data rate bump necessitated by the HDR metadata.

Ozer: What’s the bandwidth of the HDR10/DoVi metadata? Is that streamed along with the video?

Netflix: Both Dolby Vision and HDR10 have static metadata repeated at regular intervals. Dolby Vision also has dynamic metadata. The metadata is typically a very small part of the encoded bitstream such that it is not a factor.

If you’re encoding HEVC SDR using the Main10 profile, Apple’s HEVC data rates should work well. If you’re also distributing HDR, it would not seem necessary to boost the data rates to the HDR levels suggested in the Apple encoding ladder.

That said, I haven’t produced any HDR content, so if anyone has a different opinion on this, please let me know in a comment.

Streaming Learning Center Where Streaming Professionals Learn to Excel
