N2224 has long been considered the Rosetta Stone of

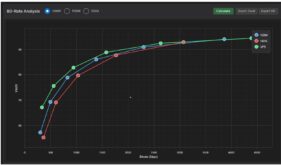

ABR encoding (image courtesy of Beamr).

Apple TN2224 was originally posted in March 2010 to provide direction for streaming producers encoding for delivery to iOS devices via HTTP Live Streaming (HLS). Because the document was so comprehensive and well thought out, and HLS became so successful, TN2224 has often been thought of as the Rosetta Stone of adaptive bitrate streaming.

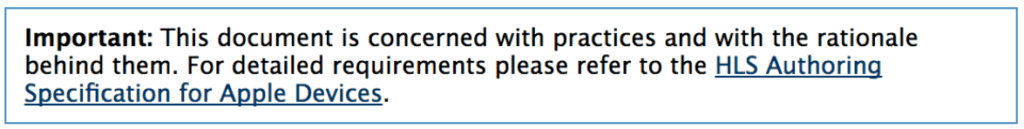

Over the last nine months or so, Apple has made sweeping changes to the venerable Tech Note, including (gasp!) deprecating the document in favor of another document called the HLS Authoring Specification for Apple Devices. In this post, I’ll present an overview of those changes.

I’ll start with how the documents interrelate. I first reported on the Apple Devices Spec back in March 2016, when it was called the Apple Specification for Apple TV. Later, Apple amended that title to Apple Devices and expanded the scope of the document to include all HLS production, but didn’t retire TN2224. Instead, Apple added this to TN2224.

In other words, where the two documents conflict, the Apple Devices spec is the controlling document.

Key Changes

In truth, some of these changes were in Apple TN2224 before Apple switched over to the Devices spec, but if you haven’t checked TN2224 in a while, here are the major overall changes.

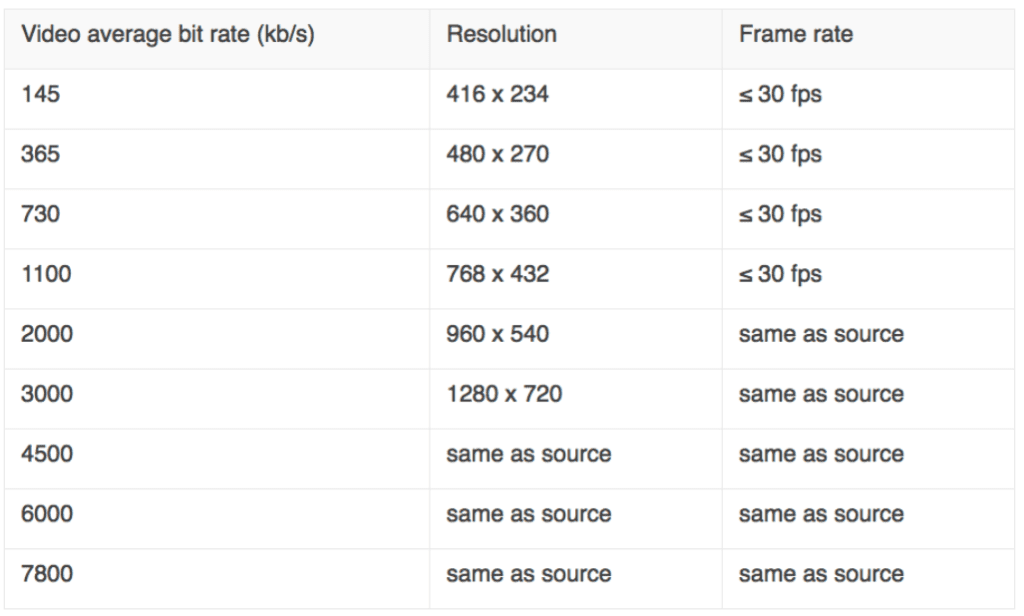

1. Completely new encoding ladder. The two documents present different ladders; since the Apple Devices spec controls, here’s the one from that document.

The recommended encoding ladder from the Apple Devices spec.

Here are some interesting observations about the two encoding ladders.

a. Up to 200% constrained VBR. TN2224 preserves the 110% constrained VBR requirement, while the Apple Devices spec says “1.19. For VOD content the peak bit rate SHOULD be no more than 200% of the average bit rate.” Since the latter document controls, you are free to use 200% constrained VBR, though the deliverability issues discussed here indicate that 110% constrained VBR may deliver a better quality of experience. The wording indicates that you’re not required to use 200% constrained VBR, but that you are free to do so.
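To make the difference between the two caps concrete, here is a minimal Python sketch of the arithmetic. The function name `peak_bitrate_kbps` is my own, and the 2000 kbps average is just an illustrative rung; neither Apple document supplies this code.

```python
def peak_bitrate_kbps(average_kbps: int, cap_percent: int) -> int:
    """Return the peak bitrate allowed for a constrained VBR encode.

    cap_percent is the peak expressed as a percentage of the average,
    e.g. 110 for 110% constrained VBR or 200 for the Devices spec limit.
    """
    if cap_percent < 100:
        raise ValueError("peak cannot be lower than the average")
    # Integer math avoids floating-point noise in the result.
    return average_kbps * cap_percent // 100


# Illustrative 2000 kbps variant under each rule.
tn2224_peak = peak_bitrate_kbps(2000, 110)   # 2200 kbps
devices_peak = peak_bitrate_kbps(2000, 200)  # 4000 kbps
```

In most encoders the result would be what you enter as the maximum bitrate for that rung, with the average as the target.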

b. Ignore Baseline and Main profiles. Both documents recommend the High profile. TN2224 says “You should also expect that all devices will be able to play content encoded using High Profile Level 4.1.” The Apple Devices spec says, “1.2. Profile and Level MUST be less than or equal to High Profile, Level 4.2. 1.3. You SHOULD use High Profile in preference to Main or Baseline Profile.” Apple TN2224 provides a table showing which devices the new recommendation obsoletes, which are basically iPhones and iPod touches from before 2013. Apple is pretty quick to ignore older products; I would check your server logs to determine how many of these older devices are still consuming your content before obsoleting them.
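If you want to audit existing streams against rule 1.2, one rough approach is to inspect the `avc1.PPCCLL` codec string that usually accompanies H.264 variants. The sketch below is a simplification under my own assumptions: the helper name `check_avc_codec` is hypothetical, it only knows the three profiles named above, and it ignores the constraint-flag byte in the middle of the string.

```python
# H.264 profile_idc values as they appear in an "avc1.PPCCLL" codec string.
PROFILE_NAMES = {0x42: "Baseline", 0x4D: "Main", 0x64: "High"}
MAX_LEVEL_IDC = 42  # Level 4.2, the ceiling quoted from rule 1.2


def check_avc_codec(codec: str):
    """Parse 'avc1.PPCCLL' and return (profile, level, within_spec)."""
    prefix, _, hex_part = codec.partition(".")
    if prefix != "avc1" or len(hex_part) != 6:
        raise ValueError(f"not an avc1 codec string: {codec!r}")
    profile_idc = int(hex_part[0:2], 16)  # PP: profile
    level_idc = int(hex_part[4:6], 16)    # LL: level times ten
    profile = PROFILE_NAMES.get(profile_idc, "Unknown")
    within_spec = profile != "Unknown" and level_idc <= MAX_LEVEL_IDC
    return profile, level_idc / 10, within_spec


print(check_avc_codec("avc1.64002a"))  # High profile, level 4.2
print(check_avc_codec("avc1.640033"))  # High profile, level 5.1
```

The first string passes the check and the second does not, since Level 5.1 exceeds the 4.2 ceiling.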

c. New keyframe interval/segment duration. Both documents agree on keyframes every two seconds and six-second segments. TN2224 (kindly) states, “Note: We used to recommend a ten-second target duration. We aren’t expecting you to suddenly re-segment all your content. But we do believe that going forward, six seconds makes for a better tradeoff.”
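As a quick sanity check on those numbers, the following sketch converts the two-second keyframe interval into a GOP size in frames and confirms that a six-second segment holds a whole number of GOPs, so every segment can start on a keyframe. The function name `gop_settings` and the rounding of fractional frame rates are my assumptions, not something either document prescribes.

```python
def gop_settings(fps: float, keyframe_seconds: int = 2, segment_seconds: int = 6):
    """Return (frames_per_gop, gops_per_segment) for the given cadence."""
    if segment_seconds % keyframe_seconds != 0:
        # Otherwise some segments could not begin with a keyframe.
        raise ValueError("segment duration must be a whole number of GOPs")
    # Round so that 29.97 fps maps to a 60-frame GOP rather than 59.94.
    frames_per_gop = round(fps * keyframe_seconds)
    gops_per_segment = segment_seconds // keyframe_seconds
    return frames_per_gop, gops_per_segment


print(gop_settings(29.97))  # 60-frame GOP, 3 GOPs per segment
print(gop_settings(59.94))  # 120-frame GOP, 3 GOPs per segment
```

The old ten-second target also divides evenly by two, so the keyframe cadence itself carries over if you do decide to re-segment.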

d. Byte-range addresses OK. TN2224 says, “In practice, you should expect that all devices will support HLS version 4 and most will support the latest version.” The Apple Devices spec doesn’t address this issue. Note that one key feature of HLS version 4 is the ability to use byte-range requests rather than discrete segments, which minimizes the administrative hassle of creating and distributing HLS streams.
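For readers who haven’t seen byte-range addressing, here is a minimal sketch that writes a VOD media playlist pointing into a single file with `EXT-X-BYTERANGE` tags. The file name `main.ts`, the segment sizes, and the helper `byterange_playlist` are all hypothetical; a real packager would take the lengths from the actual segment boundaries.

```python
def byterange_playlist(media_uri, segments, target_duration=6):
    """Build a VOD media playlist that addresses one file by byte range.

    segments is an ordered list of (duration_seconds, length_bytes) pairs.
    """
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:4",  # EXT-X-BYTERANGE needs protocol version 4 or later
        f"#EXT-X-TARGETDURATION:{target_duration}",
        "#EXT-X-PLAYLIST-TYPE:VOD",
    ]
    offset = 0
    for duration, length in segments:
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(f"#EXT-X-BYTERANGE:{length}@{offset}")  # length@offset
        lines.append(media_uri)  # every entry points at the same file
        offset += length
    lines.append("#EXT-X-ENDLIST")
    return "\n".join(lines) + "\n"


# Hypothetical single-file stream with two six-second segments.
playlist = byterange_playlist("main.ts", [(6.0, 500000), (6.0, 480000)])
print(playlist)
```

The payoff is on the file-management side: one media file per variant instead of hundreds of discrete segments.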

e. 2000 kbps variant first for Wi-Fi, 730 kbps for cellular. The first compatible variant in the master playlist file is the first video played by the HLS client, and this can have a dramatic impact on the initial quality of experience (see tests at the bottom of this post). In this regard, the Apple Devices Spec makes two recommendations in the iOS section (rather than general), stating, “1.21.a. For WiFi delivery, the default video variant(s) SHOULD be the 2000 kb/s variant. 1.21.b. For cellular delivery, the default video variant(s) SHOULD be the 730 kb/s variants.”
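Since the default is simply whichever variant is listed first, producing separate Wi-Fi and cellular master playlists is mostly a matter of reordering. A small sketch, assuming a hypothetical four-rung ladder and a helper I’ve named `order_variants`; sorting the remaining rungs in ascending order is my choice, not a requirement from the spec.

```python
def order_variants(bitrates_kbps, default_kbps):
    """Return the ladder with the default variant listed first."""
    if default_kbps not in bitrates_kbps:
        raise ValueError(f"{default_kbps} kbps is not in the ladder")
    # Remaining variants follow in ascending order (an arbitrary choice here).
    rest = sorted(b for b in bitrates_kbps if b != default_kbps)
    return [default_kbps] + rest


ladder = [365, 730, 2000, 4500]  # illustrative rungs only

wifi_order = order_variants(ladder, 2000)     # 2000 kbps plays first
cellular_order = order_variants(ladder, 730)  # 730 kbps plays first
print(wifi_order, cellular_order)
```

How you route each viewer to the right master playlist is a separate problem that this sketch doesn’t address.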

f. Use 60p rather than 30p for interlaced 29.97i content. Here, Apple states “1.15. You SHOULD deinterlace 30i content to 60p instead of 30p.” I know that this improves smoothness, particularly for sports videos, but also increases the bitrate necessary for frame quality that’s the equivalent of 30p. Note that Apple also states that “1.16. Live/Linear video from NTSC or ATSC source SHOULD be 60 or 59.94 fps.” AFAIK, while networks may be following this rule, it seems that most producers are still sending 30p to the cloud.
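The bitrate concern follows directly from the frame count. Here is a rough sketch of the arithmetic using exact NTSC rates; the function name `deinterlaced_rate` is hypothetical, and it only models the output frame rate, not the deinterlacing itself.

```python
from fractions import Fraction


def deinterlaced_rate(interlaced_fps: Fraction, one_frame_per_field: bool) -> Fraction:
    """Output frame rate after deinterlacing.

    Each interlaced frame carries two fields. Emitting one progressive
    frame per field doubles the rate (30i to 60p); merging each field
    pair into a single frame keeps it unchanged (30i to 30p).
    """
    return interlaced_fps * 2 if one_frame_per_field else interlaced_fps


ntsc_30i = Fraction(30000, 1001)  # the exact rate behind "29.97"

rate_60p = deinterlaced_rate(ntsc_30i, one_frame_per_field=True)
rate_30p = deinterlaced_rate(ntsc_30i, one_frame_per_field=False)
print(float(rate_60p), float(rate_30p))
```

Twice as many frames per second at the same data rate means roughly half the bits per frame, which is why matching 30p frame quality at 60p takes a higher bitrate.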

Other Changes

Beyond the issues discussed above, Apple also completely revamped the audio requirements in the Apple Devices spec, adding HE-AAC v2 plus Dolby Digital (AC-3) and Dolby Digital Plus (E-AC-3). Apple provides much more guidance regarding captions, subtitles, and advertising, as well as master and media playlists, even beyond that provided in the HLS specification itself. For example, where the latest draft of the HLS spec recommends including Resolution and Codecs tags in the playlist, the Devices Spec makes them mandatory.
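Because the Devices Spec treats Resolution and Codecs as mandatory, it can be worth scanning your master playlists for variants that omit them. The following is a rough sketch, not a full HLS parser: the helper `missing_attributes` and the sample playlist are hypothetical, and the simple pattern match assumes attribute names don’t appear inside quoted values. It would also flag audio-only variants, which have no resolution to declare.

```python
import re

REQUIRED = ("RESOLUTION", "CODECS")


def missing_attributes(master_playlist: str):
    """Return (line_number, missing_names) for each incomplete variant."""
    problems = []
    for number, line in enumerate(master_playlist.splitlines(), start=1):
        if not line.startswith("#EXT-X-STREAM-INF:"):
            continue
        attrs = line.split(":", 1)[1]
        # Collect attribute names, i.e. the tokens that precede "=".
        names = set(re.findall(r"([A-Z0-9-]+)=", attrs))
        missing = [name for name in REQUIRED if name not in names]
        if missing:
            problems.append((number, missing))
    return problems


SAMPLE = """#EXTM3U
#EXT-X-STREAM-INF:BANDWIDTH=2000000,RESOLUTION=960x540,CODECS="avc1.64001f,mp4a.40.2"
v2000/prog.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=730000
v730/prog.m3u8
"""

print(missing_attributes(SAMPLE))
```

In this sample the first variant is complete and the second, on line 4, is reported as missing both attributes.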

Apple also provides direction for those moving to the Common Media Application Format (CMAF) fragmented MP4 format. The bottom line is that if you’re an HLS publisher and you haven’t scrutinized the Apple Devices spec, you should do so ASAP.

References

HLS Authoring Specification for Apple Devices

About the Streaming Learning Center:

The Streaming Learning Center is a premier resource for companies seeking advice or training on cloud or on-premise encoder selection, preset creation, video quality optimization, adaptive bitrate distribution, and associated topics. Please contact Jan Ozer at jan@streaminglearningcenter.com for more information about these services.

Streaming Learning Center Where Streaming Professionals Learn to Excel