Here’s the webinar, with a description and an annotated transcript below. You can download the handout via a link below. Enjoy. If you’d like to be notified of future webinars, please join the mailing list on the upper left.

Who this free seminar is for: If your video looks ugly, or isn’t smoothly playing on your target computers or mobile devices, it’s likely that your video configuration isn’t optimal. This webinar is for video producers who want help identifying the optimal resolution, frame rate and data rate for their streaming video, whether encoding for delivery from their own website or to upload to an online video platform (OVP) or user generated content site (UGC).

What it covers:

– The seminar starts by defining video resolution, frame rate and data rate, and by showing the video configuration used by high volume broadcast sites like CNN, ESPN and corporate sites like Apple, Starbucks, Target and others.

– Then it defines bits per pixel, which is the best metric for quickly understanding the quality of your streaming video, and details how to use bits per pixel when configuring your own streaming files.

– The seminar concludes with guidelines for encoding your video for uploading to UGC and OVP sites.

What you will learn: You will learn how your current video configuration compares with prominent broadcast and corporate sites, how to use the bits per pixel value to help configure your videos, and how to configure your video for uploading to UGC and OVP sites.

Transcript

Here’s the transcript. I apologize for any rough edges; I cleaned up the transcript a bit, but didn’t rigorously edit or proofread.

Here’s the agenda.

Most readers will be familiar with some of these concepts, but some won’t be. So data rate, resolution, and frame rate, you’ve probably heard of.

So, we’ll spend a little bit of time on these concepts, and the bulk of our time on bits per pixel, which from my perspective, is the one metric you need to know about every video file that you’re streaming, whether it’s live or video on demand. Bits per pixel is critical because that tells you whether the quality is too low, or whether the data rate is too high and you’re wasting bandwidth and potentially not reaching viewers with slow connections who can’t retrieve the file in real time.

We’ll finish up with a look at encoding for re-encoding.

________________________________________________________________________________________

So what is data rate? It’s the amount of data per second of audio and video, often called bit rate, and usually expressed in kilobits per second (kbps) or megabits per second (Mbps). In most encoding tools you set audio and video data rates separately. This is the video data rate control for Telestream Episode.

Data rate is a fundamental encoding parameter. Every time you encode a file, whether it’s live or on demand, you’re going to enter the data rate of that file.

________________________________________________________________________________________

So why is data rate important? Number one, if you’re a high-volume producer, say CNN or ESPN, data rate controls your bandwidth costs, right? The more data you send with each file, the more you pay in bandwidth costs every month.

For most producers, though, bandwidth is perhaps an incidental expenditure, and data rate is important because it largely controls quality. So you probably heard the term lossy? Basically all video compression is lossy, which means the more you compress the more you lose. At a fixed resolution, if you increase the data rate, you’re going to get better quality, and if you decrease the data rate, you’re going to get worse quality. So data rate is the single most important factor in overall streaming video quality.

________________________________________________________________________________________

So what is resolution? Resolution is the width and height of the encoded video file in pixels. This is Sorenson Squeeze, where you see the width and the height.

This will produce a 640 x 360 file, which is the most widely used resolution on the Internet today. Like data rate, resolution is a parameter that you set every time you encode a file.

_______________________________________________________________________________________

Today, most producers shoot in HD, in either 720p or in 1080p. Then we scale the video down to a much lower resolution for streaming.

So you see the little video and the border on the bottom right? That’s a 640 x 360 window.

We shoot in HD because we like capturing the quality, maybe we’re going to use it for DVD or internal distribution, but we stream at the lower resolution.

Why is that?

________________________________________________________________________________________

Now, why do we scale to lower resolutions for streaming? If you shoot at 1080p, which is 1920 x 1080 per frame, that’s 2,073,600 pixels per frame, which is a lot of data. If you sample that down to 640 x 360, it’s 230,400 pixels per frame, a data reduction of close to 90%.

Most producers encode at a fixed target data rate. For example, 1.2 megabits per second is the data rate that CNN uses for their 640 x 360 file. File resolution is important because if CNN compressed their 1080p input down to 1.2 megabits per second, they’d have to apply roughly nine times the compression they’d need if they subsampled that down to 640 x 360 and then encoded the video. Since video compression is lossy, this extra compression would appear as a dramatic reduction in quality.
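To check the arithmetic behind that scaling argument, here is a minimal Python sketch. It uses only the two resolutions mentioned above; the function name is just for illustration.

```python
def pixels_per_frame(width: int, height: int) -> int:
    """Number of pixels in a single frame at the given resolution."""
    return width * height


source = pixels_per_frame(1920, 1080)  # 1080p source
target = pixels_per_frame(640, 360)    # common streaming resolution

reduction = 1 - target / source  # fraction of pixels removed by scaling
ratio = source / target          # how many source pixels per target pixel

print(f"{source:,} pixels per frame scaled to {target:,}")
print(f"reduction: {reduction:.1%}, ratio: {ratio:.0f} to 1")
```

At the same target data rate, the encoder has about one ninth as many pixels to describe per frame after scaling, which is why the scaled file is so much easier to compress.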

So why do we sample the HD video down to SD? Because it makes it easier to compress.

________________________________________________________________________________________

One question you hear a lot, what comes first, the data rate or the resolution, and that can happen either way. Sometimes the web person will say, “Hey, we’ve got a 640 x 360 window, what data rate do we need to make the video look good?” Sometimes you hear, “We can afford 1.2 megabits per second per stream, what resolution will look good at that data rate?”

The key point is you always have to consider them together. You can’t really talk about data rate without knowing the resolution, because 1.2 megabits per second is going to look great at 640 x 360 and awful at 1080p, or 1920 x 1080. So never consider the two configuration options separately.

________________________________________________________________________________________

Now, what about frame rate? Back in the early days of streaming, a lot of producers reset the frame rate to 15 frames per second to improve quality and you still see that technique used in very low bit rate files, particularly for streams targeting mobile devices in a group of adaptive files.

However, reducing the frame rate is hardly ever used for higher quality streams, which is the focus of our discussion here. So I’m going to ignore the lowest quality file and focus on the mid-range of the adaptive group and higher. For these, I recommend always producing at full frame rate, and you’re going to see a lot of examples of that in both broadcast and in corporate coming up in a moment.

________________________________________________________________________________________

Okay. So we talked about resolution. We talked about frame rate. We talked about data rate. So what combination of the three produces the optimal quality file?

This is where bits per pixel is relevant.

________________________________________________________________________________________

So we’ve got two video files here from two different accounting firms. One is from Deloitte that’s on the right, and one is from, I think, Accenture on the left.

Now, which of these firms would you take compression-related advice from? Just looking at these images, it’s a bit tough to compare, right? I mean, the video files both look like reasonably similar quality.

________________________________________________________________________________________

Now suppose I told you that the Accenture video was 320 x 180, so it’s definitely a smaller video, and it was encoded at a data rate of 1.5 megabits per second. You’d say, “That seems pretty high. It’s a small video,” but it’s really hard to understand how that compares to the Deloitte video, which has a much larger resolution and an even lower data rate.

________________________________________________________________________________________

So how do these two files truly compare? That’s where the concept of bits per pixel really becomes valuable because when we include that in the information, we see that Accenture is encoding their video on a bits-per-pixel basis almost seven times higher than Deloitte.

So you’re probably thinking, “Oh my goodness,” do they need all that data? Well, not if the Deloitte video looks good, and it looks just fine. So let’s look at how to calculate bits per pixel and then we’ll come back and look at some examples of the bits per pixel used by certain broadcasters and large corporations.

________________________________________________________________________________________

So the formula for bits per pixel, and I’ve got tons of spreadsheets to calculate this automatically, is your per-second data rate divided by the number of pixels in each second of video. To calculate the pixels per second, multiply your frames per second times the width times the height.
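As a rough sketch of that formula in Python: the function name is illustrative, and the example assumes 1 Mbps means 1,000,000 bits per second and uses the 1080p, 5 Mbps, 29.97 fps figures that come up later in the transcript.

```python
def bits_per_pixel(data_rate_bps: float, width: int, height: int, fps: float) -> float:
    """Video data rate (bits per second) divided by pixels per second."""
    pixels_per_second = width * height * fps
    return data_rate_bps / pixels_per_second


# 1080p video at 5 Mbps and 29.97 frames per second
# (assumes 1 Mbps = 1,000,000 bits per second).
bpp_1080p = bits_per_pixel(5_000_000, 1920, 1080, 29.97)
print(f"{bpp_1080p:.3f}")
```

This works out to roughly 0.08 bits per pixel. Use the video data rate only, not the combined audio and video rate.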

Or, you can download a free program named MediaInfo, which is available on the Mac, Windows and Unix, and will provide the bits per pixel of every file you load into it. It’s easy enough to calculate, but it’s always nice to have a tool like MediaInfo that does it for you. Not only does it tell you bits per pixel, it tells you a lot of information about the file that’s going to be relevant for other reasons. It’s the one program that’s on every computer I have in my office.

________________________________________________________________________________________

So what’s the general rule for bits per pixel? Well, at 640 x 360, values above 0.2 are almost certainly a waste. CNN is at 0.121 for low motion video and ESPN is at 0.201, but that’s for sports. As you’ll see, the required values drop as the videos get larger because codecs work more efficiently at higher resolutions.

________________________________________________________________________________________

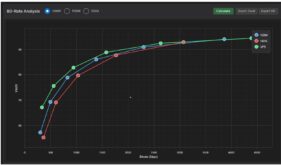

So what’s the magic number? Here we see a table of files produced by different media companies, grouped by resolution. The top group contains all videos smaller than 640 x 360 resolution. The second group is 640 x 360 resolution, and it’s the most commonly used resolution in broadcast today. Below that are files slightly larger at 768 x 432, and larger still is the 720p group, at 1280 x 712 or 1280 x 720.

If you look all the way to the right, you see that the bits per pixel is 0.133 for the very, very small files. It goes up a little bit to 0.159 for slightly larger files and then it starts to drop. For the 768 x 432, it’s 0.134 and the 720p files average 0.056. So again, this is compression getting more efficient at higher resolutions.

Now, while we’re here, let’s take a look at the frame rate. So that’s the FPS column on the right, and as I said before, none of the media companies are dropping the frame rate. They’re all producing at the native frame rate, either 24, 29.97 or 30 frames per second. So most media companies, if not all media companies, encode at the native frame rate. They adjust resolution and data rate to get the quality that they need, not frame rate.

________________________________________________________________________________________

So, codecs get more efficient at higher resolutions, but how much more efficient? That’s where the Power of .75 Rule comes in.

Ben Waggoner is a well-known compressionist, formerly with Microsoft and now with Amazon. I don’t know if he created this rule, or if he just shared it with me. Basically, it’s a mathematical formula that lets you calculate the data rate necessary in a larger resolution file to produce the same quality as a lower resolution file.

If you know how to work with fractional exponents, have at it, but in a few slides you’ll see a table with recommendations for the most commonly used video resolutions.
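For those who do want to try the math, here is a minimal Python sketch. The transcript doesn't spell out the formula, so this assumes the commonly quoted form of the rule: multiply the known data rate by the ratio of pixel counts raised to the power of 0.75. The function name and the 1,200 kbps starting point are illustrative.

```python
def scaled_data_rate_kbps(base_rate_kbps: float,
                          base_width: int, base_height: int,
                          new_width: int, new_height: int,
                          exponent: float = 0.75) -> float:
    """Estimate the data rate for similar quality at a new resolution.

    Assumed form of the Power of .75 Rule:
    new_rate = base_rate * (new_pixels / base_pixels) ** 0.75
    """
    pixel_ratio = (new_width * new_height) / (base_width * base_height)
    return base_rate_kbps * pixel_ratio ** exponent


# Starting from 640 x 360 at 1,200 kbps, estimate the rate for 1280 x 720.
rate_720p = scaled_data_rate_kbps(1200, 640, 360, 1280, 720)
print(f"{rate_720p:.0f} kbps")
```

Under this assumption, four times the pixels needs only about 2.8 times the data rate (roughly 3,400 kbps rather than 4,800 kbps), which is another way of saying the bits-per-pixel value drops as resolution grows.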

________________________________________________________________________________________

Okay, I wanted to show this chart again so you could apply the rule you just learned. Again, bits per pixel starts at 0.133 on the right for the very, very small files. I think it’s an anomaly that it goes up to 0.159, and then you start to see it go down to 0.134 and to 0.056. Now let’s see how corporations are using this metric.

Before we go, note that the broadcast space is almost exclusively using H.264, with only two holdovers using VP6. We’ll see a lot more of that legacy codec in the corporate space, as well as files encoded at 15 fps.

________________________________________________________________________________________

So let’s look at some corporate examples. We’ll see four tables and then a summary table. This first table is comprised of very, very low resolution producers. A couple of points before we get to bits per pixel.

First, only 33% of these producers are encoding with H.264, with Mayer Hoffman encoding with H.263, which is three generations old. As you recall, only two media companies were using VP6, none using H.263. If you haven’t yet transitioned to H.264, it’s well past the time to be doing that. You’re also seeing the frame rates dropping to 15 or 18 fps, which we didn’t see at all in the media companies.

In terms of bits per pixel, we’re seeing a couple of companies that are exceedingly high, dragging the average way beyond anything we saw with media companies.

________________________________________________________________________________________

This group includes producers at larger than 400 pixels wide and below full SD (640 x 360 or 640 x 480). Here we see bits-per-pixel values at a fairly normal level, very close to what we saw for broadcast, and a much greater share of the H.264 codec, which is what I recommend.

Again, though, some companies encode at 15 frames per second, which no broadcasters did, and which I don’t recommend.

________________________________________________________________________________________

So here we go to companies who are using 640 x 360 or very close to 640 x 360, and what we see is these are some pretty tony companies. You’ve got Cisco, General Motors, Jeep, Kroger, Porsche, Apple, and Dell.

While H.263 is still there (I don’t know what to say about BMW on that score), you’re seeing no companies encoding at lower than the nominal frame rates. Everybody is at 24, 25 or 29, and you’re seeing the bits per pixel averaging 0.140, right in line with what you’re seeing with broadcast sites.

_______________________________________________________________________________________

This is the larger than 640 x 360 category, and again we’re seeing, I guess, a surprising representation of codecs (I don’t know what Aon is thinking, either). However, no companies are subsampling down to lower frame rates. Everybody is at either 24, 25, or 30 or around those numbers and we’re seeing bits per pixel values averaging 0.117, which, at these resolutions, is pretty close to where they should be.

If you look at the bottom, you see that 720p is used by the last three. In terms of bits per pixel, you see Interactive Intelligence at 0.06. You see HP, who produces a lot of high quality streaming video, at 0.076. You see Kurt Salmon, a big consulting firm in Atlanta, at 0.088. So again we’re seeing that drop in bits-per-pixel value that Ben Waggoner’s Power of .75 Rule predicts.

________________________________________________________________________________________

And here’s the corporate summary. In terms of bits-per-pixel value, the 0.589 in the very tiny category is way too high. In the sub full-SD category, the value of 0.133 is about right, with a slight increase at full SD, which is an anomaly. Then it goes down for the greater than SD, as it should.

________________________________________________________________________________________

So how to use all this information? This is a table from my book, Producing Streaming Video for Multiple Screen Delivery. This shows common video resolutions on the left and different bits per pixel values on the right starting at 0.08 to 0.1, 0.125, 0.15 and then 0.175. These red circles would be the starting point for data rate at the respective resolutions and at full frame rate – this table is for 29.97 (the book also has tables for 25 and 24 fps).

So, if you’re producing 1080p video, you should encode at around 5 megabits per second and target 0.08 for the bits-per-pixel value. At 720p, encode at around 2.7 megabits per second and target about 0.1 for the bits-per-pixel value. And so on.

Note that these numbers will be conservative for many videos; if the video looks good, you could try a lower data rate to see how that looks. But I certainly wouldn’t exceed these numbers unless the video didn’t look good at them.
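To reproduce starting points like these yourself, the bits-per-pixel formula can be turned around to give a data rate. This is a minimal Python sketch, not the table from the book; the function name is illustrative, and the 0.1 target for 720p is inferred from the 2.7 Mbps figure rather than stated outright.

```python
def target_data_rate_kbps(bpp: float, width: int, height: int, fps: float = 29.97) -> float:
    """Data rate in kbps that yields the given bits-per-pixel value."""
    return bpp * width * height * fps / 1000


# Starting points at 29.97 fps; the 720p target of 0.1 is an inferred value.
starting_points = {
    "1080p": target_data_rate_kbps(0.08, 1920, 1080),
    "720p": target_data_rate_kbps(0.1, 1280, 720),
}

for name, kbps in starting_points.items():
    print(f"{name}: about {kbps:.0f} kbps")
```

These come out near 5 Mbps for 1080p and 2.7 to 2.8 Mbps for 720p. Treat them as a ceiling to test downward from, not as a required value.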

________________________________________________________________________________________

What’s the bottom line? You should know the bits-per-pixel values for all videos. If it’s under 0.10 and your video looks bad, raise the data rate of your video or reduce the resolution. There’s no magic pill to make things better. There’s no compression technology that’s much better than anything else.

If your bits-per-pixel value is over 0.2, re-encode at lower data rate to see if the quality will suffer. Try it at 0.15, try it at 0.175 and see if the quality is still sufficient. Not only will you save bandwidth costs, you’ll increase the number of people who can smoothly stream the file online.

So back to your studios, get MediaInfo, and check the bits-per-pixel value for every one of your files, live and on demand.
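If a file comes in over 0.2, a small sketch like this gives you the data rates to try. It is only an illustration: the file described here is hypothetical, and the function names are not from any particular tool.

```python
def bits_per_pixel(data_rate_bps: float, width: int, height: int, fps: float) -> float:
    """Video data rate (bits per second) divided by pixels per second."""
    return data_rate_bps / (width * height * fps)


def trial_data_rates_kbps(width: int, height: int, fps: float,
                          trial_bpp=(0.175, 0.15)) -> list:
    """Data rates (kbps) to test when the current value is above 0.2."""
    return [round(bpp * width * height * fps / 1000) for bpp in trial_bpp]


# Hypothetical file: 640 x 360 at 30 fps, encoded at 1,800 kbps.
current_bpp = bits_per_pixel(1_800_000, 640, 360, 30)
trial_rates = trial_data_rates_kbps(640, 360, 30)

if current_bpp > 0.2:
    print(f"current: {current_bpp:.3f} bits per pixel, try {trial_rates} kbps")
```

Encode at each trial rate, compare against the original by eye, and keep the lowest rate that still looks good.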

________________________________________________________________________________________

So that’s bits per pixel, and let’s bridge over to encoding for upload to UGC and OVP. UGC is user generated content. OVP is online video platform. YouTube is obviously the most famous UGC site, and Vimeo is up there as well, along with MetaCafé. The poster children for OVPs are Brightcove, Ooyala and Kaltura.

How are these two groups similar? Both groups re-encode your uploaded file for delivery to your viewers. So when you’re producing for delivery from your own website, things like bits per pixel really matter because you’re trying to reach the optimal blend of quality and affordability or quality and streamability. But when you’re encoding for upload, your goal is to supply the highest quality master file to these companies because they’re going to re-encode it.

So the rules are changed. You don’t worry about bits per pixel. You worry about how to get the highest possible quality file up for re-encoding.

________________________________________________________________________________________

How to do this? I’m basically using YouTube’s recommendations as a general purpose recommendation for both UGC and OVP because YouTube encodes more videos than all other sites put together and what’s good for them is going to be good for online video platforms and other UGC sites. If you look at the reference on the bottom of the page, you’ll see that YouTube has two recommendations. One is standard quality and one is high quality uploads for creators with enterprise quality internet connections.

Everything’s a trade-off with video compression. If you encoded a file at 8 megabits per second and uploaded that to YouTube and if you encoded a file at 50 megabits per second and uploaded that to YouTube, you would notice a small difference in the quality between the files that YouTube produced. It’s not dramatic. It’s not even obvious, but if you compare them side by side, you would see that the 50 megabit per second file produces a higher quality result. I know because I’ve done the test.

So basically it comes down to how many files you’re producing, and how fast is your internet connection. I produce two or three files a month to upload to Brightcove for Streaming Media Magazine, each about ten minutes long. I encode them all at 720p at 30 megabits per second because I’m not limited by my upload speed. Two files a month I can handle at 30 megabits per second.

If I was encoding 30 files a day and uploading those to Brightcove, it would be a different story. Basically the high level message is encode at the highest possible data rate that you can and still get all your files uploaded on a timely basis. It will make a difference now and in the future because YouTube will keep those files that you send them and use those again and again and again as they move to new technologies like H.265 and beyond.
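To see how data rate trades off against upload time, here is a back-of-the-envelope Python sketch. The ten-minute, 30 Mbps file comes from the example above; the 20 Mbps uplink speed is a made-up number, and the math ignores audio and container overhead.

```python
def file_size_megabytes(data_rate_mbps: float, duration_seconds: float) -> float:
    """Approximate file size: megabits per second times seconds, divided by 8."""
    return data_rate_mbps * duration_seconds / 8


def upload_minutes(size_megabytes: float, uplink_mbps: float) -> float:
    """Approximate upload time in minutes at a sustained uplink speed."""
    return size_megabytes * 8 / uplink_mbps / 60


# Ten-minute video encoded at 30 Mbps.
size_mb = file_size_megabytes(30, 10 * 60)

# Hypothetical 20 Mbps uplink; substitute your own measured upload speed.
minutes = upload_minutes(size_mb, 20)

print(f"{size_mb:.0f} MB, about {minutes:.0f} minutes to upload")
```

Multiply that by the number of files you produce each day to see whether a given data rate is practical for your connection.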

________________________________________________________________________________________

So how do you do this? In general, you want to encode your video at its native resolution, or smaller; you never scale to higher resolutions during encoding. You want to encode at its native frame rate, unless it’s interlaced, like 60i, in which case you want to produce progressive mode video at 30p. Then you want to encode at the highest data rate possible, which we just covered.

What about your encoder? Most encoding tools will have YouTube templates. In this slide, Apple Compressor is on the top right, Adobe Media Encoder is on the bottom right. If you’re using these tools, or any other, find a template that matches the resolution of your video, if possible, or is smaller than your resolution. So you’ve got 1080p, find a 1080p template or 720p template. Don’t take an SD file and zoom it to 1080p for uploading because you won’t get any better quality. Find a template that matches the resolution of your source video or is smaller. Find a template that matches the frame rate because, other than converting interlaced footage to progressive, you don’t want to change the frame rate when you upload it.

In terms of data rate, most templates are pretty conservative. For example, the Adobe Media Encoder YouTube 720p 29.97 template uses 5 Mbps. I would boost that to the maximum data rate possible, which is 17.5 Mbps.

To boil this down into a cohesive message, find a template that’s either the same resolution as your source or smaller, find one that matches the frame rate, and boost the data rate as high as you comfortably can and still get all your uploads done. Finally, remember that YouTube and some other UGC sites have duration limits, so don’t exceed those. If you’re working with an OVP, there shouldn’t be a duration limit.
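The template rules can also be written down as a short Python sketch. Everything here is hypothetical: the template names, the `Template` type and the `pick_template` helper are made up for illustration and don't correspond to any encoder’s actual preset list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Template:
    name: str      # hypothetical preset name
    height: int    # output height in pixels
    fps: float     # output frame rate (progressive)


def pick_template(templates, source_height: int, source_rate: float,
                  interlaced: bool = False) -> Optional[Template]:
    """Pick the largest template that doesn't upscale and matches frame rate.

    For interlaced sources, source_rate is the field rate (e.g. 59.94 for
    60i), and the target is progressive video at half that rate.
    """
    target_fps = source_rate / 2 if interlaced else source_rate
    candidates = [
        t for t in templates
        if t.height <= source_height and abs(t.fps - target_fps) < 0.01
    ]
    # Largest matching template, or None if nothing fits.
    return max(candidates, key=lambda t: t.height) if candidates else None


templates = [
    Template("1080p 29.97", 1080, 29.97),
    Template("720p 29.97", 720, 29.97),
    Template("480p 29.97", 480, 29.97),
]

hd_choice = pick_template(templates, 720, 29.97)
sd_choice = pick_template(templates, 480, 59.94, interlaced=True)
print(hd_choice.name, "|", sd_choice.name)
```

Once a template is picked, the remaining step is the one covered above: raise its data rate as far as your upload schedule allows.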

Streaming Learning Center Where Streaming Professionals Learn to Excel