Author’s notes:

1. VMAF is now my metric of choice.

2. Since first publishing this article, I converted the SSIM scores to decibel form using this formula from Video Codec Testing and Quality Measurement:

-10 * log10(1 - SSIM)

As it turns out, the results are the same (no comparisons in red) but now they should be correct.
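As a rough illustration of the conversion above, here is a minimal Python sketch. The function name `ssim_to_db` and the sample scores are my own placeholders, not values from the table, and the sketch assumes SSIM scores below 1.0 (a perfect score of 1.0 has no finite decibel value).

```python
import math


def ssim_to_db(ssim: float) -> float:
    """Convert a raw SSIM score to decibels using -10 * log10(1 - SSIM)."""
    if ssim >= 1.0:
        # 1 - SSIM would be zero (or negative), so the logarithm is undefined.
        raise ValueError("SSIM must be below 1.0 to convert to decibels")
    return -10.0 * math.log10(1.0 - ssim)


# Hypothetical sample scores, chosen only to show how the scale stretches.
for score in (0.90, 0.99, 0.999):
    print(f"SSIM {score} -> {ssim_to_db(score):.2f} dB")
```

The decibel form spreads out scores that sit close to 1.0: each additional "nine" in the raw SSIM score adds roughly 10 dB.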

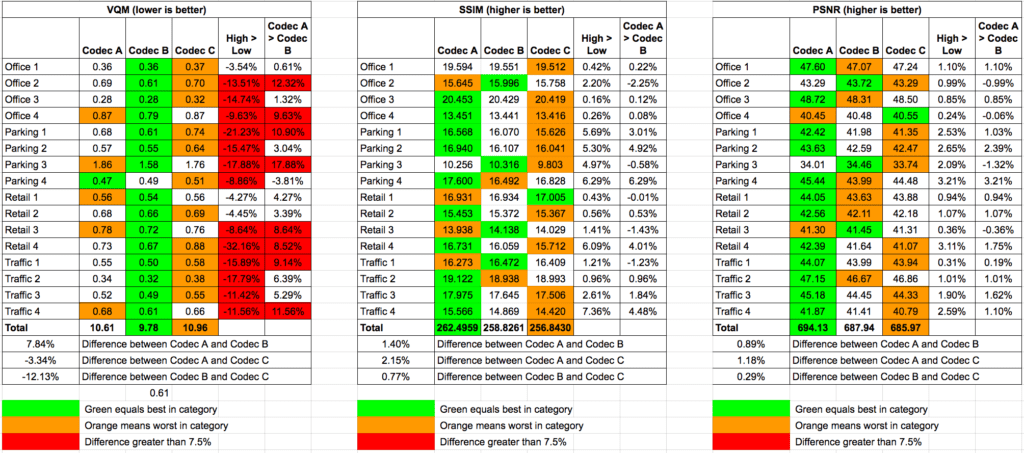

Objective video quality benchmarks serve a valuable role in evaluating the quality of encoded video. There are multiple algorithms, and multiple tools to measure them. Though Peak Signal-to-Noise Ratio (PSNR) and the Structural Similarity Index (SSIM) have been around longer and are much more widely used, I prefer the VQM metric as applied in the Moscow University Video Quality Measurement Tool (you can read my review of the tool here). A brief glance at the table, which you can see at full resolution by clicking it, explains why.

By way of background, in the summer of 2014 I had a consulting project that involved comparing three HEVC codecs. I purchased the Moscow University tool to apply the objective benchmarks. I worked with PSNR and SSIM initially, but they provided little differentiation between the contenders. You can see this in the SSIM and PSNR tables in the screen grab.

The High versus Low column shows the difference between the highest and lowest scores in each clip test. Cells marked in red show a difference of greater than 7.5%. As you can see in the SSIM and PSNR tables, none of the tests crossed that threshold, or really even came close.
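To make the high versus low comparison concrete, here is a minimal Python sketch of one way to compute it. The article does not say exactly how the percentage is calculated, so this assumes the gap is measured relative to the lowest score; the function names and the sample scores are hypothetical and are not taken from the table.

```python
THRESHOLD_PCT = 7.5  # gaps above this value were marked in red in the table


def high_low_spread(scores):
    """Percent gap between the highest and lowest score, relative to the lowest.

    Assumption: the article does not specify the denominator; the lowest
    score is used here.
    """
    high, low = max(scores), min(scores)
    if low <= 0:
        raise ValueError("scores must be positive to compute a relative gap")
    return (high - low) / low * 100.0


def flag_clips(results, threshold=THRESHOLD_PCT):
    """Return the names of clips whose high-versus-low gap exceeds the threshold."""
    return [name for name, scores in results.items()
            if high_low_spread(scores) > threshold]


# Placeholder scores for three codecs per clip; NOT taken from the article's table.
example = {
    "clip_a": [38.1, 38.4, 38.9],
    "clip_b": [1.10, 1.25, 1.32],
}
for name, scores in example.items():
    print(f"{name}: {high_low_spread(scores):.1f}% gap")
print("flagged:", flag_clips(example))
```

With these placeholder numbers, only the second clip crosses the 7.5% threshold. Scores that cluster tightly, as the PSNR and SSIM scores did in my tests, never get flagged.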

In contrast, the VQM test showed 13 instances where the best quality option was more than 7.5% better than the lowest quality option. More importantly, in all those instances, the differentials accurately presaged true visual distinctions between the contenders. Even better, as you can see in the video below, the Moscow University tool also makes it easy to visualize the actual differences after identifying them.

When you’re choosing an objective quality algorithm, you want one that identifies significant differences between the technologies you’re analyzing, and an analysis tool that makes it easy to see and verify those differences. That’s why I like the VQM metric and the Moscow University tool. Another up-and-coming algorithm/tool is the SSIMWave Quality of Experience Monitor, which I recently reviewed for Streaming Media (you can read the review here). I like that tool, and its SSIMplus algorithm, which is a significant advance over plain-Jane SSIM.

Dr. Zhou Wang, a co-inventor of SSIM and co-founder of SSIMWave, recently won an Emmy Award for the SSIM algorithm (along with his co-inventors), and I certainly don’t mean to detract from that accomplishment in any way. However, in my use, VQM is a much better canary in a coal mine for actual differences between compared files, and I’m sure at this point Dr. Wang would say that SSIMplus is a significant advance over SSIM as well.

Here’s the video showing the Moscow University Tool in operation.

Streaming Learning Center Where Streaming Professionals Learn to Excel
