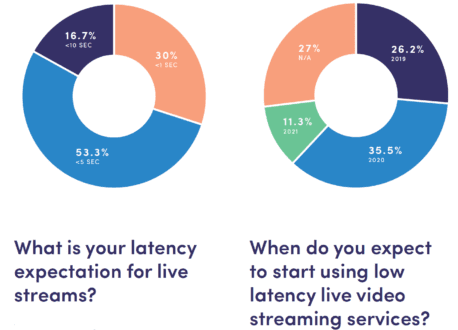

According to Bitmovin’s “Video Developer Report 2019,” latency was a concern for 54% of its survey participants. Digging into the numbers, subsequent questions revealed that almost 50% of survey participants planned to implement a low-latency technology over the next 1–2 years, with over 50% seeking latency of under 5 seconds and 30% seeking latency of under 1 second (see above).

All of this bodes well for an article on low-latency options, don’t you think? I’ll start with a list of things to know about low-latency technologies, then provide a list of considerations for choosing one.


WebRTC

Most low-latency solutions use one of three technologies: WebRTC, HTTP Adaptive Streaming (HAS), or WebSockets. Per the WebRTC.org FAQ page, “WebRTC is an open framework for the web that enables Real-Time Communications in the browser.” WebRTC reached the Candidate Recommendation stage in the World Wide Web Consortium (W3C) standards organization but has not received final approval. Still, according to Wikipedia, WebRTC is supported by all major desktop browsers, as well as on Android, iOS, Chrome OS, Firefox OS, Tizen 3.0, and BlackBerry 10. This means that it should run without downloads on any of these platforms.

By design, WebRTC was formulated for browser-to-browser communications. As its name suggests, it is a protocol for delivering live streams to each viewer, either peer to peer or server to peer. In contrast, HAS-based solutions divide the stream into multiple chunks for the client to download and play. As adopted for large-scale streaming, WebRTC is typically the engine for an integrated package that includes the encoder, player, and delivery infrastructure.

Examples of WebRTC-based large-scale streaming solutions include Real Time from Phenix, Limelight Realtime Streaming, and Millicast from CoSMo Software and Influxis. You can also access WebRTC technology in tools like the Wowza Streaming Engine or those from CoSMo Software, although you’d have to create a scalable distribution system for large-scale applications. Claimed latencies for technologies in this class range from 0.5 seconds for 71% of streams (Phenix Real Time) to under 1 second (Limelight Realtime Streaming).

HAS-Based Solutions

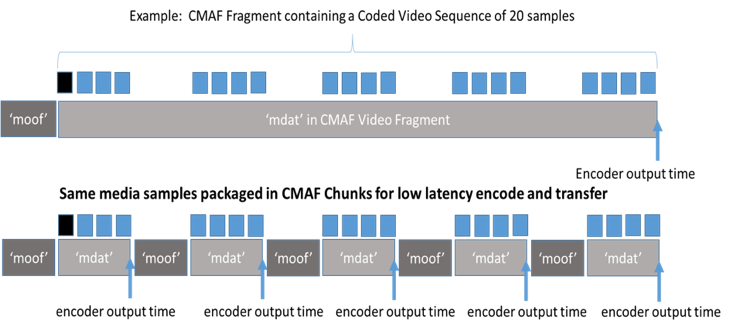

There are multiple HAS-based solutions from multiple vendors, and they operate in many different ways. All of them, however, deploy a form of chunked encoding that breaks the typical 2–6-second segment into chunks that can be downloaded without waiting for the rest of the segment to finish encoding. These chunks are shown at the bottom of Figure 1, which was taken from an Akamai blog post by Will Law.
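To see why chunking matters, here’s a simplified model in Python. It assumes a 25 fps stream (40 ms per frame), a hypothetical 200 ms chunk duration, and zero encoding and network time. A segment (or chunk) can only be requested once its last frame is encoded, so each frame waits until the end of the unit that contains it.

```python
def availability_delay_ms(capture_ms: int, unit_ms: int) -> int:
    """Milliseconds from a frame's capture until the unit (segment or chunk) holding it
    is complete and downloadable. Simplified: a unit is published the instant its
    last frame is encoded, and encoding itself takes no time."""
    unit_end = (capture_ms // unit_ms + 1) * unit_ms
    return unit_end - capture_ms


def mean_delay_ms(unit_ms: int, frame_ms: int = 40) -> float:
    """Average availability delay across all frames captured during one unit."""
    captures = range(0, unit_ms, frame_ms)
    return sum(availability_delay_ms(t, unit_ms) for t in captures) / len(captures)


for label, unit_ms in (("6-second segment", 6000), ("2-second segment", 2000), ("200 ms chunk", 200)):
    print(f"{label}: frames wait {mean_delay_ms(unit_ms):.0f} ms on average before download")
```

In this model a 6-second segment adds about 3 seconds of average waiting before the player can even request the data, while 200 ms chunks cut that to roughly a tenth of a second.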

Besides chunked encoding, these systems adjust the manifest file to signal the availability of the chunks, which are pushed to the origin server via HTTP 1.1 chunked-transfer encoding. In terms of expected latency, Law’s post states, “If distribution is happening over the open internet (especially over a last-mile mobile network where rapid throughput fluctuations are the norm), current proofs-of-concept show more sustainable Quality of Experience (QoE) with a glass-to-glass latency in the 3s range, of which 1.5s-2s resides in the player buffer.”
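Chunked-transfer encoding is what lets a packager start sending a segment before it knows the segment’s final size. The Python sketch below shows the HTTP/1.1 framing: each piece of data (standing in here for a freshly encoded chunk) is sent as its length in hexadecimal, a CRLF, the bytes, and another CRLF, and a zero-length chunk ends the body. Chunk extensions and trailers are omitted.

```python
def encode_chunked(pieces: list[bytes]) -> bytes:
    """Frame byte strings using HTTP/1.1 chunked transfer coding."""
    out = bytearray()
    for piece in pieces:
        if not piece:
            continue  # a zero-length chunk would terminate the body early
        out += f"{len(piece):X}\r\n".encode("ascii")  # chunk size in hex
        out += piece
        out += b"\r\n"
    out += b"0\r\n\r\n"  # last-chunk marker followed by an empty trailer section
    return bytes(out)


def decode_chunked(body: bytes) -> list[bytes]:
    """Inverse of encode_chunked (no chunk extensions or trailers)."""
    pieces = []
    pos = 0
    while True:
        line_end = body.index(b"\r\n", pos)
        size = int(body[pos:line_end], 16)
        pos = line_end + 2
        if size == 0:
            return pieces
        pieces.append(body[pos:pos + size])
        pos += size + 2  # skip the data and its trailing CRLF


print(encode_chunked([b"first CMAF chunk", b"second CMAF chunk"]))
```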

There are many joint-development efforts around these schemes, as well as some standards beyond chunked encoding and HTTP 1.1 chunked-transfer encoding, both of which have long been standardized. One cluster of development was around Low-Latency HLS and the hls.js open source player, which includes contributions from Mux, JW Player, AWS Elemental, and MistServer. There’s also a Digital Video Broadcasting (DVB) specification for Low Latency DASH, and there are low-latency guidelines from the DASH Industry Forum. Obviously, each spec applies only to its designated technology, so you have to implement both to deliver low latency to both HTTP Live Streaming (HLS) and DASH clients.

There are also Common Media Application Format (CMAF) chunk-encoded solutions that allow delivery to both HLS and DASH clients from a single set of files. There are many advantages to low-latency CMAF-based approaches, including legacy player support. That is, if the player isn’t capable of low latency, it will simply retrieve and play the segments with normal latency. In addition, since the file format is standards-based, current techniques for DRM, captioning, and advertising insertion should work normally, and the HTTP segments should be cacheable and present no problem for firewalls.
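A chunk-encoded CMAF segment is still an ordinary fragmented MP4 file; it simply contains several movie fragment (`moof`) and media data (`mdat`) box pairs instead of one. A low-latency player can fetch and decode each pair as it arrives, while a legacy player just downloads the finished segment. The Python sketch below walks the top-level ISO BMFF boxes and counts those pairs. It is not a full parser, and the demonstration segment it builds uses placeholder payloads.

```python
import struct
from collections.abc import Iterator


def iter_boxes(data: bytes) -> Iterator[tuple[str, bytes]]:
    """Yield (box_type, payload) for each top-level ISO BMFF box."""
    pos = 0
    while pos + 8 <= len(data):
        size, box_type = struct.unpack_from(">I4s", data, pos)
        header = 8
        if size == 1:  # a 64-bit "largesize" follows the type field
            size = struct.unpack_from(">Q", data, pos + 8)[0]
            header = 16
        elif size == 0:  # the box extends to the end of the data
            size = len(data) - pos
        if size < header or pos + size > len(data):
            raise ValueError(f"truncated or malformed box at offset {pos}")
        yield box_type.decode("ascii"), data[pos + header:pos + size]
        pos += size


def count_cmaf_chunks(segment: bytes) -> int:
    """Count moof+mdat pairs; each pair is one independently downloadable chunk."""
    chunks = 0
    pending_moof = False
    for box_type, _ in iter_boxes(segment):
        if box_type == "moof":
            pending_moof = True
        elif box_type == "mdat" and pending_moof:
            chunks += 1
            pending_moof = False
    return chunks


def make_box(box_type: str, payload: bytes = b"") -> bytes:
    """Build a minimal box with a 32-bit size field (for demonstration only)."""
    return struct.pack(">I4s", 8 + len(payload), box_type.encode("ascii")) + payload


# Placeholder segment: a styp box followed by four moof/mdat chunks.
demo_segment = make_box("styp", b"cmfc") + (make_box("moof", bytes(16)) + make_box("mdat", bytes(100))) * 4
print([box_type for box_type, _ in iter_boxes(demo_segment)][:5])
print(count_cmaf_chunks(demo_segment))
```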

For the most part, standards-based approaches like these provide the most ecosystem flexibility, allowing you to choose an encoder, packager, CDN, and player just as you would for normal-latency transmissions.

WebSockets-Based Approaches

The third approach is typically based on a real-time protocol like WebSockets, which creates and maintains a persistent connection between a server and client that either party can use to transmit data. This connection can carry both video and other communications, which is convenient if your application needs interactivity.
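Because one WebSockets connection carries everything, the application has to tell video data apart from other messages. The sketch below assumes a hypothetical one-byte type prefix (0x01 for video, 0x02 for JSON chat); real services define their own message formats, and the socket itself is left out so the example runs without a WebSockets library.

```python
import json
from typing import Any, Callable

VIDEO = 0x01  # hypothetical application-level type tags, not part of the WebSockets protocol
CHAT = 0x02


def pack_video(payload: bytes) -> bytes:
    return bytes([VIDEO]) + payload


def pack_chat(user: str, text: str) -> bytes:
    return bytes([CHAT]) + json.dumps({"user": user, "text": text}).encode("utf-8")


def dispatch(message: bytes,
             on_video: Callable[[bytes], None],
             on_chat: Callable[[dict[str, Any]], None]) -> None:
    """Route one binary message received on the shared connection by its type byte."""
    if not message:
        raise ValueError("empty message")
    kind, body = message[0], message[1:]
    if kind == VIDEO:
        on_video(body)
    elif kind == CHAT:
        on_chat(json.loads(body))
    else:
        raise ValueError(f"unknown message type {kind:#04x}")


video_frames: list[bytes] = []
chat_log: list[dict[str, Any]] = []
for msg in (pack_video(b"\x00\x00\x00\x01frame"), pack_chat("bidder7", "I'll raise"), pack_video(b"next")):
    dispatch(msg, video_frames.append, chat_log.append)
print(len(video_frames), chat_log)
```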

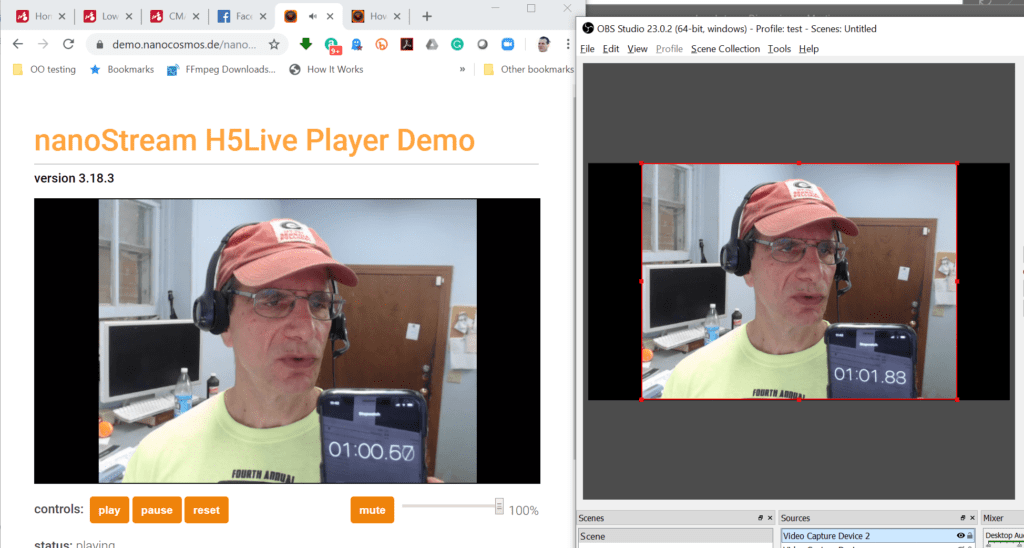

Like WebRTC implementations, those that use WebSockets are typically offered as a service that includes a player and CDN, and you can use any encoder that can transport streams to the server via RTMP or WebRTC. Examples include Nanocosmos’s NanoStream Cloud and Wowza’s Streaming Cloud With Ultra Low Latency. Wowza claims sub-3-second latency for its solution, while Nanocosmos claims around 1 second, glass to glass.

Figure 2 shows a test of NanoStream Cloud in which I’m encoding via OBS on my HP notebook (right window), sending the stream to the Nanocosmos server, and playing it in the H5Live Player. I’m holding up an iPhone with the timer running, and latency is in the 1.3-second range. Note that iPhones can’t natively play WebSockets-delivered video in the browser (Safari on iPhone lacks the Media Source Extensions that browser players typically use for such streams), so Nanocosmos created a unique Low-Latency HLS protocol to minimize latency.

Latency Targets Largely Dictate Choice of Technology

With live streaming, there are three factors to balance: quality, latency, and robustness. You can get any two for an event, but you can’t get all three. As an example, the reason out-of-the-box HLS has an approximately 18-second latency is that each segment is 6 seconds long and the Apple default player buffers three segments before starting playback. The benefit is that the viewer would have to suffer a sustained drop in bandwidth before seeing any degradation. If you cut latency down to 3 seconds via any means, it only takes a short bandwidth blip to stop the stream.
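The robustness side of that trade-off can be sketched with simple buffer arithmetic. As a simplification, assume the player’s buffer roughly equals its latency, and that during a bandwidth dip media arrives at bandwidth/bitrate seconds of video per second while playback consumes one second per second. The 5 Mbps bitrate and 2.5 Mbps dip are hypothetical, and the model ignores ABR down-switching, which in practice would let the player continue at lower quality.

```python
import math


def seconds_until_stall(buffer_s: float, bandwidth_kbps: float, bitrate_kbps: float) -> float:
    """How long playback survives a bandwidth dip before the buffer runs dry."""
    fill_rate = bandwidth_kbps / bitrate_kbps  # seconds of media downloaded per wall-clock second
    if fill_rate >= 1.0:
        return math.inf  # the download keeps pace with playback; the buffer never drains
    return buffer_s / (1.0 - fill_rate)


for buffer_s in (18.0, 3.0):
    survive_s = seconds_until_stall(buffer_s, bandwidth_kbps=2500, bitrate_kbps=5000)
    print(f"{buffer_s:.0f} s buffer survives {survive_s:.0f} s at half the needed bandwidth")
```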

From a technology-selection perspective, there are three levels of latency. The first is “doesn’t matter,” which covers one-to-many presentations with little interaction and no competing live television broadcast. Think of a church service, city council meeting, or even a remote concert. For applications like these, drop your segment size to 2–4 seconds, and you can reduce latency to about 6–12 seconds with very little risk, no development cost, and minimal testing cost. Lower latency isn’t always better if you don’t absolutely need it.
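The latency figures for standard HLS follow from simple multiplication: the player waits for a fixed number of full segments before it starts. Here’s that arithmetic in Python, assuming the three-segment buffer of Apple’s default player and ignoring encoding, packaging, and network delays, which add to real-world numbers.

```python
def approx_startup_latency(segment_s: float, buffered_segments: int = 3) -> float:
    """Rough latency when the player buffers N whole segments before starting playback.
    Encoding, packaging, and CDN delays are deliberately left out."""
    return segment_s * buffered_segments


for segment_s in (6, 4, 2):
    print(f"{segment_s}-second segments: about {approx_startup_latency(segment_s):.0f} seconds")
```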

The second level is “spoiler time,” or the proverbial enthusiast watching TV next door who starts shouting (and tweeting) about a touchdown 30 seconds before you see it. Most broadcast channels average about 5–10 seconds of latency; most of the latency estimates for HTTP-based technologies are in the 2–5-second range, which should comfortably meet this requirement, if not provide streaming with a noticeable advantage over broadcast.

The third level is “real-time,” as required by interactive applications like gambling and auctions in which even 2 seconds is too long. At least in the short term, HTTP-based technologies probably can’t deliver this at scale, so you’ll be looking at a WebRTC- or WebSockets-based solution.
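The three levels can be condensed into a rough decision rule. The cut-offs in this sketch (6 seconds and 2 seconds) are approximate readings of the ranges given above, not hard boundaries.

```python
def suggest_approach(target_latency_s: float) -> str:
    """Map a latency target to one of the three levels. Thresholds are approximate."""
    if target_latency_s >= 6:
        return "doesn't matter: standard HAS with 2-4 second segments"
    if target_latency_s >= 2:
        return "spoiler time: low-latency HAS (chunked CMAF, LL-HLS, low-latency DASH)"
    return "real-time: WebRTC- or WebSockets-based service"


for target in (10, 3, 0.8):
    print(f"{target} s target -> {suggest_approach(target)}")
```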

If you’re a broadcaster, while 1 second of latency sounds great, WebRTC- and WebSockets-based solutions may have several key limitations. First, you’ll likely need captions and advertising support, which few services deliver. Second, you may need DRM; although several non-HAS services offer content protection, and forensic watermarking may soon be available, it’s a totally different solution than the CENC-based DRMs used for DASH, HLS, or CMAF. Third, your video quality at a given bitrate may suffer compared to HAS due to encoding constraints that may be imposed on some, but not all, WebRTC encoders.

Finally, low-latency HAS services produce content that’s both backward-compatible with players that don’t support low latency and immediately available for DVR or video-on-demand (VOD) delivery. WebRTC- and WebSockets-based systems can make their streams available for VOD immediately after the live broadcast, but not in the traditional adaptive bitrate (ABR) format without transcoding. If you’re broadcasting a single hour a month, the cost to convert the stream for HAS-based VOD (if desired) is negligible; if you’re producing 200 channels of TV around the clock, it adds up pretty quickly.
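The scale argument is easy to quantify. Assuming the 200 channels run 24 hours a day over a 30-day month (an assumption for illustration), the hours of live content that would need re-encoding into an ABR ladder compare like this:

```python
def monthly_transcode_hours(channels: int, hours_per_day: float, days: int = 30) -> float:
    """Hours of live output per month that would need re-encoding for HAS-based VOD."""
    return channels * hours_per_day * days


occasional_hours = 1  # a single hour a month
network_hours = monthly_transcode_hours(channels=200, hours_per_day=24)
print(f"{network_hours:,} hours a month, {network_hours // occasional_hours:,}x the occasional broadcaster")
```

Whatever the per-hour transcoding price, it is multiplied by that factor.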

The HAS Market Is Rapidly Evolving

As previously described, there were multiple groups working toward DASH- or HLS-based low-latency solutions. Apple managed to surprise them all when it announced its Low-Latency HLS Preliminary Specification, generally called LL-HLS. The spec differs from previous efforts in two key ways. First, it supports both transport stream chunks and fragmented MP4 files, whereas DASH supports only the latter (technically, the DASH spec includes transport streams, although they’re seldom, if ever, used). If you use transport stream chunks to deliver low-latency video to HLS, you’ll need a separate stream using fragmented MP4 files to support DASH low-latency output.
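To make the container choice concrete, here’s a Python sketch that writes a simplified LL-HLS media playlist in which each segment is also advertised as a series of partial segments. Tag names follow the LL-HLS tags as later published in the HLS specification draft (EXT-X-PART, EXT-X-PART-INF, EXT-X-SERVER-CONTROL); the 2019 preliminary spec differed in some details, and the file names are hypothetical. Switching the container from ts to mp4 adds the EXT-X-MAP initialization segment that fragmented MP4 requires.

```python
import math


def ll_hls_playlist(first_msn: int, segments: int, parts_per_segment: int,
                    part_s: float, container: str = "mp4") -> str:
    """Build a simplified LL-HLS media playlist; container is "ts" or "mp4"."""
    if container not in ("ts", "mp4"):
        raise ValueError("container must be 'ts' or 'mp4'")
    segment_s = part_s * parts_per_segment
    lines = [
        "#EXTM3U",
        f"#EXT-X-TARGETDURATION:{math.ceil(segment_s)}",
        f"#EXT-X-PART-INF:PART-TARGET={part_s:.3f}",
        # PART-HOLD-BACK: how far behind the live edge players should start (3x the part target here).
        f"#EXT-X-SERVER-CONTROL:CAN-BLOCK-RELOAD=YES,PART-HOLD-BACK={3 * part_s:.3f}",
        f"#EXT-X-MEDIA-SEQUENCE:{first_msn}",
    ]
    if container == "mp4":
        lines.append('#EXT-X-MAP:URI="init.mp4"')  # fMP4 segments need an initialization section
    for msn in range(first_msn, first_msn + segments):
        for part in range(parts_per_segment):
            lines.append(f'#EXT-X-PART:DURATION={part_s:.3f},URI="seg{msn}.part{part}.{container}"')
        lines.append(f"#EXTINF:{segment_s:.3f},")
        lines.append(f"seg{msn}.{container}")
    return "\n".join(lines) + "\n"


print(ll_hls_playlist(first_msn=100, segments=2, parts_per_segment=4, part_s=0.5, container="ts"))
```

Production playlists usually list parts only for the last few segments near the live edge and carry additional tags; this sketch keeps just enough to show the structure.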

Thomas Kramer, VP of product management at codec vendor MainConcept, feels that Apple’s move is complicating the ABR ecosystem, which has been slowly migrating toward CMAF. “Apple’s introduction of LL-HLS with the first sample code snippets showing the use of MPEG Transport Stream instead of fMP4 for CMAF and other proprietary extensions for low-latency indicates to me that Apple was not listening enough to the already ongoing adoption of CMAF in the marketplace in favor of their own ecosystem and playback devices.”

That’s a fair comment; Apple abandoned the transport stream format for HEVC, so why not do the same for low latency? Of course, as a developer, you get to choose your approach: You can use transport stream chunks for HLS clients and fMP4 for DASH clients to achieve maximum compatibility, or use a single fMP4 stream for maximum efficiency and potentially lose some HLS low-latency viewers.

The second key difference between Apple’s scheme and earlier approaches is that it uses HTTP/2 rather than HTTP 1.1 chunked-transfer encoding. In his excellent blog post titled “The Community Gave Us Low-Latency Live Streaming. Then Apple Took It Away,” Mux’s Phil Cluff notes, “The choice of technologies (namely HTTP/2) Apple has selected is going to make it really hard for non-Apple devices to implement LL-HLS.”
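LL-HLS players make many small, frequent requests. In the published version of the spec, a player asks for its next playlist update with the `_HLS_msn` and `_HLS_part` query parameters, and the server holds the response until that media sequence number and part exist (a blocking reload); the 2019 preliminary version paired such requests with HTTP/2 push. The Python sketch below only computes the next request URL. The playlist URL is hypothetical and is assumed to carry no query string of its own.

```python
from urllib.parse import urlencode


def next_part(msn: int, part: int, parts_per_segment: int) -> tuple[int, int]:
    """The (media sequence number, part index) that follows the one just loaded."""
    if part + 1 < parts_per_segment:
        return msn, part + 1
    return msn + 1, 0  # last part of this segment; move to the next segment's first part


def blocking_reload_url(playlist_url: str, msn: int, part: int) -> str:
    """Playlist request asking the server to hold its response until (msn, part) exists."""
    return f"{playlist_url}?{urlencode({'_HLS_msn': msn, '_HLS_part': part})}"


msn, part = next_part(271, 3, parts_per_segment=4)
print(blocking_reload_url("https://example.com/live/media.m3u8", msn, part))
```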

As a counterpoint, Akamai’s Law states, “The HTTP/2 requirement does raise the bar for support, although mostly for CDNs and older clients. The majority of 2019+ client devices support a H2 stack and as always it will be the legacy devices which struggle with H2. Apple may make H2 optional for delivery in order to accommodate these older devices. I have a working MSE application that happily plays LL-HLS in Chrome/Firefox/Safari browser along with H2 push. From early testing I can say that H2 push certainly helps with stability and I would be hesitant to go to wide-scale LL-HLS distribution without it.”

I circled back with Cluff, who noted that Apple’s approach complicated bandwidth estimation for non-Apple developers, but added, “Apple [has] been more helpful than I’ve ever seen on this project. I, and many others in the industry, have met with them several times, and they’ve already made changes to improve things, and there’s hopefully more coming.” In this regard, Apple released reference LL-HLS software for CMAF output on Sept. 11, 2019. So that’s encouraging.

On the encoding and packaging side, Apple’s new spec is already getting a lot of traction. Regarding encoding, Nikos Kyriopoulos, Media Excel’s VP of product and business development, noted that his company’s Hero encoder currently supports Ultra-Low Latency DASH (based on CMAF) for ingest into Akamai and other delivery systems and that the company is working with partners to also support end-to-end Low-Latency HLS. According to Barry Owen, Wowza’s VP of solutions engineering, his company is prioritizing Apple’s low-latency schema first and then DASH “because we see a much larger percentage of HLS than DASH, whether it’s standard streaming or low latency streaming.”

On the user side, I spoke to several large OTT services about their low-latency plans. The consistent message was that they weren’t going to support multiple low-latency technologies and that they would delay their implementation plans until the competing technologies sort themselves out.

In this case, Apple seems to be working with the community to simplify the implementation of LL-HLS. Still, every new and different approach adds confusion to the market, which will take months to sort out.

Most of What You ‘Know’ About WebRTC Is Wrong

WebRTC started life as the technology underlying the initial, somewhat feeble, iterations of Google Hangouts, which left many with bad memories of very low-quality video. As a result, the common fictions about WebRTC are that it doesn’t scale, that ABR isn’t available, and that video is limited to low resolution and low quality.

The fact that WebRTC needs a persistent connection between the server and player does complicate scalability, but it’s a challenge large-scale streaming shops have solved within their own delivery infrastructure. That’s why, to reach tens of thousands of viewers, you’ll need to use their CDN infrastructure or deploy their technology on your own CDN. According to Alexandre Gouaillard from CoSMo Software, the WebRTC-based Millicast system has served as many as 50,000 concurrent users for a single Sotheby’s auction. Oliver Lietz of Nanocosmos claimed more than 100,000 viewers via WebSockets in one of the company’s recent events. So consider scalability a potential cost issue, not a capability issue.
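One way to see why scale becomes a cost question is to count servers in a simple fan-out tree, where each server can hold only so many persistent connections. The per-server limit used here is a hypothetical round number, not a vendor figure, and real deployments add redundancy on top.

```python
import math


def fanout_tiers(viewers: int, connections_per_server: int) -> list[int]:
    """Servers needed per tier, from the edge tier back to a single origin, when each
    server can hold at most connections_per_server persistent downstream connections."""
    if viewers <= 0:
        return []
    if connections_per_server < 2:
        raise ValueError("each server must feed at least two downstream connections")
    tiers = []
    downstream = viewers
    while True:
        servers = math.ceil(downstream / connections_per_server)
        tiers.append(servers)
        if servers == 1:
            return tiers
        downstream = servers  # each server in this tier is itself a client of the tier above


for viewers in (50_000, 100_000):
    tiers = fanout_tiers(viewers, connections_per_server=2_000)
    print(f"{viewers:,} viewers -> servers per tier {tiers}, total {sum(tiers)}")
```

The server count grows roughly linearly with the audience, which is why the question is what scale costs rather than whether it is possible.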

Ditto for ABR and stream quality. The WebRTC spec supports sending multiple streams in parallel, although not all WebRTC implementations do so. Some browsers limit resolution or stream bandwidth, but these aren’t absolute limitations of WebRTC.
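As a sketch of how ABR can work with parallel WebRTC streams (often called simulcast), the sender encodes several renditions and a media server forwards each viewer the best one that fits that viewer’s measured bandwidth. The layer ladder and the 80% headroom factor below are hypothetical, and the selection rule is deliberately simplified.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    kbps: int


def pick_layer(layers: list[Layer], available_kbps: float, headroom: float = 0.8) -> Layer:
    """Highest-bitrate layer that fits within headroom * available bandwidth;
    falls back to the lowest layer when nothing fits."""
    if not layers:
        raise ValueError("no layers to choose from")
    budget = available_kbps * headroom
    fitting = [layer for layer in layers if layer.kbps <= budget]
    if fitting:
        return max(fitting, key=lambda layer: layer.kbps)
    return min(layers, key=lambda layer: layer.kbps)


ladder = [Layer("1080p", 4500), Layer("720p", 2500), Layer("360p", 800)]
for bandwidth in (6000, 4000, 500):
    print(bandwidth, "kbps ->", pick_layer(ladder, bandwidth).name)
```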

Not all WebRTC-based services may offer all of these features, so it’s definitely something to check with each candidate service, but scalability, ABR, and quality aren’t absolute bars to selecting WebRTC. Whether they come at a cost equivalent to HAS systems is another matter altogether.

There Are Some Interesting New Approaches

Beyond the three categories of products noted, there are some pockets of innovation from various companies in the streaming ecosystem. One of the more interesting is THEO Technologies’ High Efficiency Streaming Protocol (HESP), a new HTTP-based adaptive streaming protocol. According to THEO Technologies’ website, it delivers sub-second latency, a time to first frame of around 100 milliseconds, and viewer bandwidth optimizations of up to 20%. It is also compatible with HTTP CDNs.

While proprietary technologies certainly have their challenges, HESP appears to provide the latency of WebRTC with the scalability of HAS solutions. If you’re looking for sub-second latency, HESP is worth checking out.

You’re Choosing a Service Provider, Not an Acronym

According to Nanocosmos’s Lietz, his customers don’t ask which technology to use; they’re seeking a well-featured and affordable end-to-end platform with the lowest possible latency. So I’ll close with some questions to ask to sort this out. Thanks to Bill Wishon, chief product officer at Phenix, for identifying most of the points on this list, which obviously don’t all apply to all use cases.

Questions to Ask About Low-Latency Technologies:

- Is the technology adaptive? If so, how many streams, and are there any relevant bitrate or resolution limitations?

- What are the quality limitations, if any? Baseline profile only? No B-frames or look-ahead buffer?

- Is there a download required? (Most systems don’t require this, but it’s worth asking.)

- Can the system synchronize all viewers to the same point in the stream? (Streams can drift over locations and devices, and without this capability, users on a fast connection may have an advantage for auctions or gambling.)

- Can it get through firewalls? (HTTP-based systems use HTTP protocols, which are firewall-friendly. Others employ User Datagram Protocol (UDP), which may not be.) If it uses UDP, is there a fallback path to deliver to viewers whose firewalls block it?

- What content protection is available?

- Can the system scale to meet your target viewer numbers? Is the CDN infrastructure private, and if so, can it deliver to all relevant viewers in all relevant markets?

- Can you use your own player, or do you have to use the system’s player? If your own, what changes are required, and how much will that cost?

- What’s needed for mobile playback? Will it play in the browser, or is an app required?

- What additional platforms are supported (set-top boxes, dongles, OTT devices, smart TVs)?

- What’s the latency achievable at a scale relevant to your broadcast?

- What’s the overall cost of the event?

- Can the content be reused for VOD, or will re-encoding be required?

- What are the redundancy options?

- Are captions available?

- What about advertising insertion?

- What about DVR?

- Which encoders can the system use?
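On the synchronization question, one common technique is to have every player measure its own latency against a shared clock and gently speed up or slow down playback until it reaches the same target. The sketch below assumes each player can measure that latency (for example, from server timestamps) and uses a simple clamped proportional rule; the gain, tolerance, and 10% speed limit are illustrative assumptions.

```python
def sync_playback_rate(current_latency_s: float, target_latency_s: float,
                       gain: float = 0.5, tolerance_s: float = 0.1,
                       max_change: float = 0.1) -> float:
    """Playback speed that steers this player toward the shared target latency.
    Players behind the target speed up; players ahead of it slow down."""
    error = current_latency_s - target_latency_s  # positive means this viewer is behind
    if abs(error) <= tolerance_s:
        return 1.0
    change = max(-max_change, min(max_change, gain * error))
    return 1.0 + change


for latency in (3.0, 2.05, 1.5):
    print(f"latency {latency:.2f} s -> playback rate {sync_playback_rate(latency, 2.0):.2f}")
```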

Streaming Learning Center Where Streaming Professionals Learn to Excel
