A consulting client sent the following command string for a 1080p file they were encoding for upload to Vimeo, their online video platform.

crf=20:vbv-maxrate=10000:vbv-bufsize=9500

What’s wrong with these parameters?

First, some theory. One distinction to understand when encoding files is the difference between producing a mezzanine file to use for subsequent transcoding and encoding for distribution. A mezzanine file is one you upload to a service like Vimeo, which transcodes it into an encoding ladder for distribution to end users. Video being a garbage-in/garbage-out medium, your primary consideration is delivering a file with sufficient quality to serve as a master for those transcodes.

When encoding for distribution, you want to deliver a high-quality experience, but you need to be able to actually deliver the file to the viewer without interruption. This means you have to be concerned with both the overall data rate and how the data rate varies within the file.

Looking at the command string, it’s not particularly well suited for either role.


Unpacking the Command String

Basically, the command string is telling FFmpeg to:

- Encode at a quality level of 20 CRF

- Cap the bitrate at 10000 kbps

- Maintain a sub-one-second buffer size.
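The "sub-one-second" figure comes from dividing the buffer size by the bitrate cap. Here is a minimal sketch of that arithmetic, assuming both values are in kilobits as x264 interprets them; the helper name `buffer_seconds` is made up for illustration.

```shell
#!/bin/sh
# Buffer duration in seconds = VBV buffer size (kbit) / VBV max rate (kbit/s).
# Arguments: $1 = maxrate in kbps, $2 = bufsize in kbit.
buffer_seconds() {
    awk -v rate="$1" -v buf="$2" 'BEGIN { printf "%.2f\n", buf / rate }'
}

maxrate_kbps=10000   # vbv-maxrate from the client's string
bufsize_kbit=9500    # vbv-bufsize from the client's string

echo "Buffer holds $(buffer_seconds "$maxrate_kbps" "$bufsize_kbit") seconds of video at the cap"
```

For the client's string this works out to 0.95 seconds, so the encoder has slightly less than one second of leeway to absorb bitrate swings.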

For those not familiar with CRF, it stands for constant rate factor, and it’s an encoding mode supported by FFmpeg and other tools when encoding with x264 and x265 or to codecs like VP9 and AV1. Instead of varying the quality to achieve a target bitrate, as bitrate-driven encoding does, CRF varies the bitrate to deliver constant quality. Quality increases as CRF values get lower, so CRF 15 delivers better quality than CRF 20.

When you use CRF with a bitrate cap, as shown in the command string, it’s called capped CRF, and it’s a simple form of per-title encoding (for more on Capped CRF, see here). Encode a 24 fps 1080p animation with the suggested command string, and you might end up with a 2 Mbps file that never comes near the 10 Mbps cap. Encode a 60 fps 1080p soccer match and the bitrate might be flatlined at 10 Mbps. So, you save bitrate on easy-to-encode files and still deliver a good experience with hard-to-encode files.

Encoding for Transcoding with CRF

CRF is a fabulous mode for creating mezzanine files because your overarching consideration is quality, not overall bitrate or variations in the bitrate. So, looking at our command string, you really don’t need the cap or the buffer restriction. You don’t care with a mezzanine file.

You also would use a lower value than 20 to produce a higher-quality file to enable higher-quality transcoding. You don’t need to go crazy, but a value of 10 – 15 CRF should be fine (see here to read about the impact of mezzanine file bitrate on output quality). In fact, if you check Vimeo’s recommended upload settings, they state “If you have the ability to set the CRF (constant rate factor), we recommend setting it to 18 or below.”

So, you might use this configuration to output a mezzanine file.

crf=15

A few additional points about producing mezzanine files.

- Always check the requirements of the service that you’re uploading to. Follow their requirements, not what’s listed here.

- You don’t need to set a short GOP size or keyframe interval (the same thing). Unless specified otherwise, a GOP size of 10 seconds should be fine (x264’s default in FFmpeg, if you don’t specify one, is 250 frames, or roughly 8 to 10 seconds at 30 to 24 fps). Note, however, that YouTube recommends “Closed GOP. GOP of half the frame rate.” I’m not sure why, and your file won’t be rejected if you don’t follow these guidelines, but at a high enough bitrate, the quality difference between a GOP size of 10 seconds and 0.5 seconds will be negligible.

- Use a more efficient codec like HEVC to cut upload time (if accepted by the service), though it might extend encoding time.

- Be sure not to change the frame rate when encoding, either in a GUI or command script.
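FFmpeg's `-g` option takes the GOP size in frames, not seconds, so a 10-second GOP has to be converted using the source frame rate. The sketch below shows one way to do that and prints, rather than runs, a mezzanine command. The helper names and the file names `source.mov` and `mezzanine.mp4` are placeholders, and audio and preset options are left out.

```shell
#!/bin/sh
# Convert a GOP length in seconds to the frame count that FFmpeg's -g expects.
# $1 = frame rate as a decimal (24) or a fraction (30000/1001), $2 = seconds.
gop_frames() {
    awk -v rate="$1" -v secs="$2" 'BEGIN {
        n = split(rate, part, "/")
        fps = (n == 2) ? part[1] / part[2] : part[1]
        printf "%d\n", fps * secs + 0.5   # round to the nearest whole frame
    }'
}

# Print a mezzanine encode: CRF 15, no VBV cap, 10-second GOP.
# No -r option appears, so the source frame rate is left unchanged.
mezzanine_cmd() {
    printf '%s\n' "ffmpeg -i source.mov -c:v libx264 -crf 15 -g $(gop_frames "$1" 10) mezzanine.mp4"
}

mezzanine_cmd 24
```

For a 24 fps source this prints a command with `-g 240`. The same helper gives 60 frames for a 2-second GOP at 30 fps, which is the kind of value you would use for adaptive bitrate distribution.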

Producing Files for Delivery

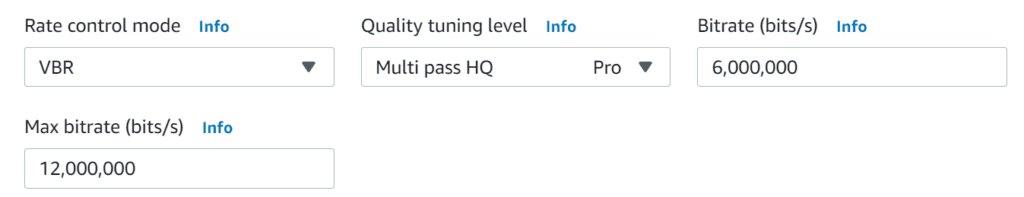

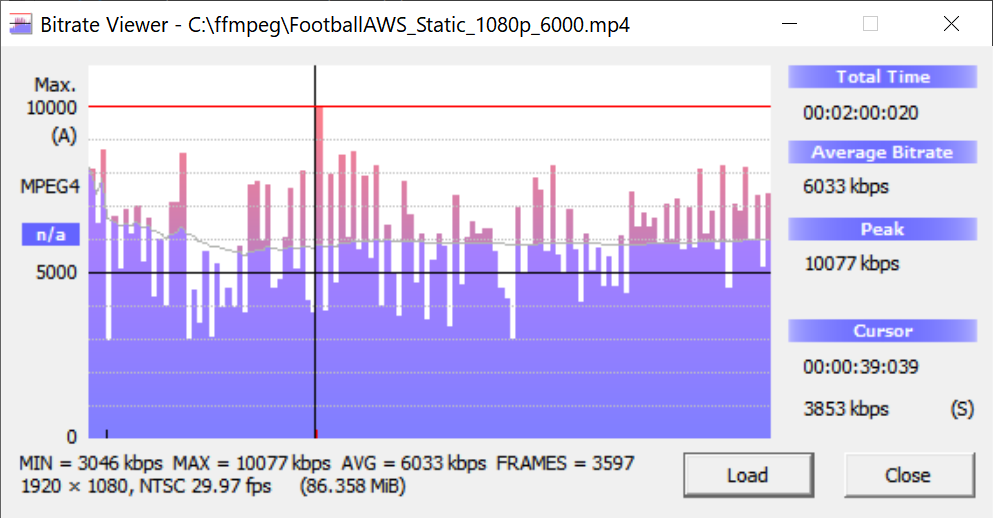

When producing files to actually deliver to viewers, you care about quality, the average bitrate, and spikes in the bitrate that might interrupt smooth retrieval and playback, which can be an issue with smart TVs and other relatively low CPU power devices. If you were using traditional bitrate control with an encoder like AWS Elemental MediaConvert, you might implement what’s called 200% constrained VBR, where you choose variable bitrate control (VBR), a 6 Mbps target, and a 12 Mbps max bitrate. Obviously, the 200% comes from the ratio between the target and the max.
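The example above uses MediaConvert, but the same ratio can be expressed with FFmpeg's bitrate options. This is only a rough sketch of an equivalent: the helper name is hypothetical, and the buffer size is an assumption because the MediaConvert buffer setting isn't stated here.

```shell
#!/bin/sh
# Derive the max bitrate for constrained VBR from a target and a percentage.
# $1 = target in kbps, $2 = constraint as a percentage (200 means 2x the target).
vbr_maxrate_kbps() {
    echo $(( $1 * $2 / 100 ))
}

target_kbps=6000
max_kbps=$(vbr_maxrate_kbps "$target_kbps" 200)

# Assumption: bufsize is set equal to maxrate purely for illustration.
# x264 needs a bufsize alongside maxrate for the cap to take effect.
printf '%s\n' "-c:v libx264 -b:v ${target_kbps}k -maxrate ${max_kbps}k -bufsize ${max_kbps}k"
```

With a 6000 kbps target and a 200% constraint, the printed options carry a 12000 kbps maxrate, matching the 6 Mbps and 12 Mbps figures above.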

These settings produced the file shown in Bitrate Viewer in Figure 2, which has an average bitrate of 6033 kbps and a maximum bitrate of 10077 kbps. This file should be high quality and easy to deliver. Mission accomplished, MediaConvert.

With capped CRF, you set the maxrate as the target maximum average bitrate and control data rate variability, and to some degree overall bitrate, with the buffer size.

For example, I used the following strings to encode two files to H.264.

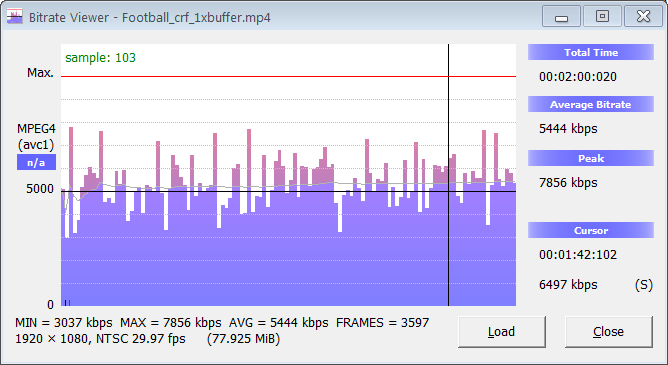

ffmpeg -i Football.mp4 -c:v libx264 -crf 22 -maxrate 6000k -bufsize 6000k crf_1xbuf.mp4

ffmpeg -i Football.mp4 -c:v libx264 -crf 22 -maxrate 6000k -bufsize 12000k crf_2xbuf.mp4

I used CRF 22 rather than 20 for reasons discussed here.

Here’s the first file in Bitrate Viewer, showing an average bitrate of 5444 kbps and a max bitrate of 7856 kbps. The faint blue line showing the average bitrate varies very little.

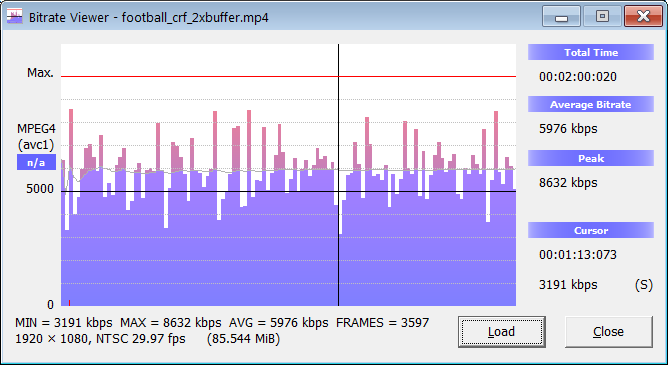

Here’s the second file, with a slightly higher bitrate of 5976 kbps and a max bitrate of 8632 kbps. Though the spikes are a bit more pronounced, and the average bitrate higher, it’s still pretty consistent.
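One quick way to compare how spiky these files are is the ratio of maximum to average bitrate. The sketch below runs that arithmetic on the Bitrate Viewer readings quoted in this article; the helper name `peak_ratio` is made up for illustration.

```shell
#!/bin/sh
# Peak-to-average ratio = maximum bitrate / average bitrate (both in kbps).
# $1 = average kbps, $2 = maximum kbps.
peak_ratio() {
    awk -v avg="$1" -v max="$2" 'BEGIN { printf "%.2f\n", max / avg }'
}

# Figures are the Bitrate Viewer readings quoted in the article.
echo "200% constrained VBR:  $(peak_ratio 6033 10077)"
echo "Capped CRF, 1x buffer: $(peak_ratio 5444 7856)"
echo "Capped CRF, 2x buffer: $(peak_ratio 5976 8632)"
```

By this measure the constrained VBR file peaks at about 1.67 times its average, while both capped CRF files come in at about 1.44, so the larger buffer mainly raised the average here rather than the relative size of the spikes.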

Buffer Size and Quality

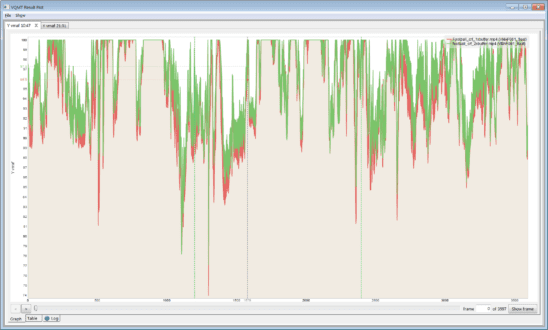

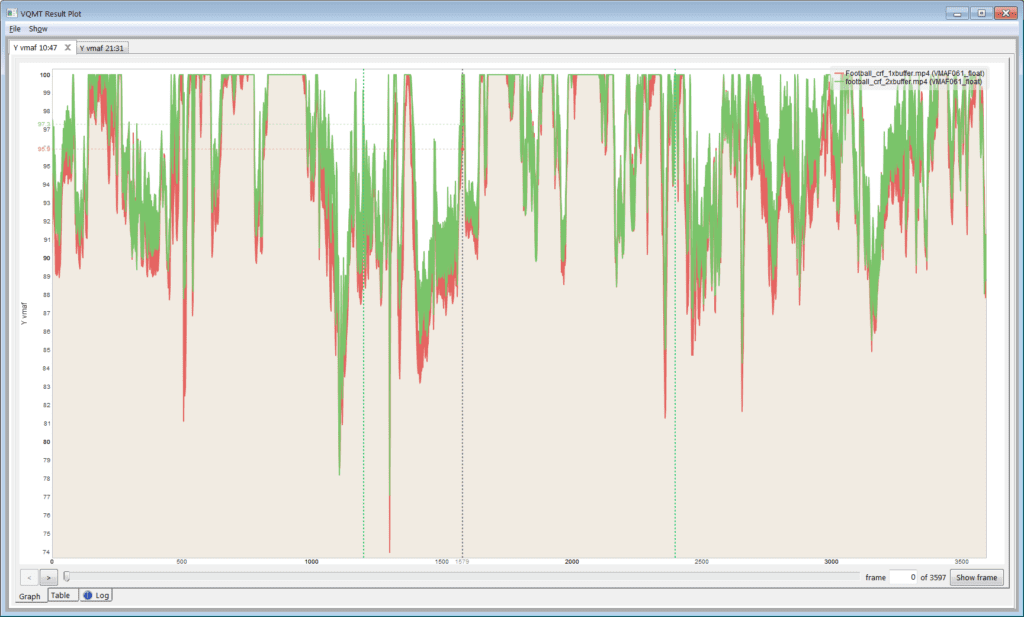

How does buffer impact quality? The graph below, from the Moscow State University Video Quality Measurement Tool (VQMT), compares the VMAF scores over the duration of the two files shown above. The first file, in red, has the 1x buffer, and the file in green has the 2x buffer.

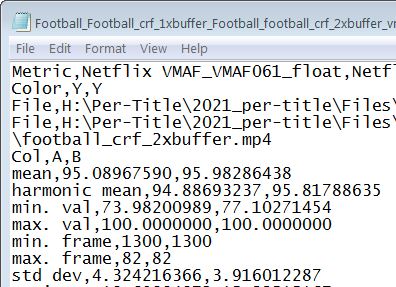

VQMT reports the harmonic mean, arithmetic mean, minimum and maximum frame values, and standard deviation. As you can read about here, harmonic mean incorporates quality variations into the score so is the preferred measure.
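To see why the harmonic mean penalizes quality drops more than the arithmetic mean does, consider this small sketch. It uses the textbook harmonic mean formula, which may differ in detail from what VQMT computes, and the per-frame scores are hypothetical rather than taken from the test files.

```shell
#!/bin/sh
# Print the arithmetic and harmonic means of the per-frame scores passed as arguments.
# Scores must be greater than zero, since the harmonic mean divides by each one.
score_means() {
    printf '%s\n' "$@" | awk '
        { sum += $1; inv += 1 / $1; n++ }
        END { printf "arithmetic=%.2f harmonic=%.2f\n", sum / n, n / inv }'
}

# Hypothetical per-frame VMAF scores: three good frames and one transient drop.
score_means 95 95 95 50
```

With these made-up scores the arithmetic mean is 83.75 but the harmonic mean falls to 77.55, because the single low frame counts for more. When every frame scores the same, the two means are identical.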

Again, the first file has the 1x buffer, and the second the 2x buffer. The file with the 2x buffer has a harmonic mean score that’s roughly 1 VMAF point higher, which isn’t significant. It also has a roughly 3 points higher min. val. score, which means that the lowest quality frame in the file is 3 points higher than the lowest quality frame in the 1x buffer file. Probably not something that a viewer would notice, but you’d definitely prefer the higher score.

What’s truly scary about the file with 1x buffer, in red in Figure 4, are the frequent transient drops in quality. This occurs whenever you stress the codec unnecessarily, and you often see a similar result with files encoded with constant bitrate encoding (CBR). This is why you would use the 2x buffer rather than 1x, and 200% constrained VBR rather than CBR.

Many people, myself included, have written complete books about encoding for distribution. Still, to be consistent with the points above, here are a few additional points about producing for distribution.

- You do need a shorter GOP size for adaptive bitrate distribution, usually about 2 seconds.

- You have to choose a codec compatible with your target viewer. That means H.264 for everyone, VP9 for browser or living room, and HEVC for HDR in the living room or mobile (but not browser).

- Typically, you want to produce at the native frame rate (see more on frame rate here).

Summary

When producing a mezzanine file, you care about quality and not (within reason) deliverability. When producing for distribution, you care about quality and deliverability. You should customize your CRF or more traditional encodes accordingly.

Streaming Learning Center Where Streaming Professionals Learn to Excel
