Your encoding ladder should include lower-resolution rungs even if higher-resolution rungs deliver better quality. This post explains why.

Several lessons in the online course Streaming Media 101: Technical Onboarding for Streaming Media Professionals focus on creating and configuring encoding ladders, including the Convex Hull technique discussed below. With advanced codecs like AV1 and HEVC, however, this analysis leads to a ladder with no lower-resolution rungs. Does a 1080p ladder through and through make sense?

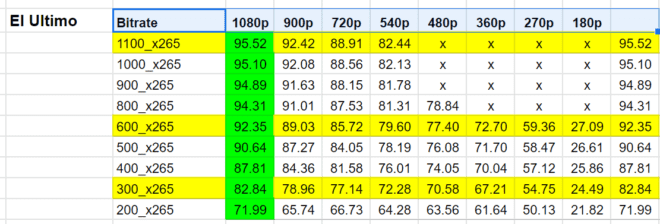

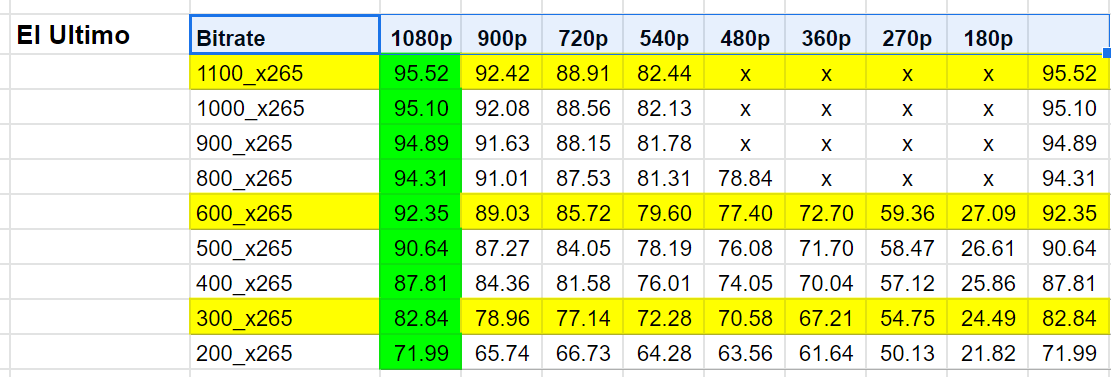

I recently asked for feedback on whether an encoding ladder needs lower-resolution rungs even when higher-resolution rungs deliver better quality. You can read the original post for full context, but the bottom-line question was this: when an analysis like the one in the table shows that the highest-quality resolution for every data rate rung is 1080p (green background), is there any need to include rungs at lower resolutions?
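To make the question concrete, here is a minimal Python sketch of the per-rung comparison: for each bitrate, pick the resolution with the highest quality score. It is a simplified stand-in for the comparison, not the full Convex Hull procedure. The scores are invented placeholders rather than data from my table, and the function name `best_resolution_per_rung` is hypothetical.

```python
from typing import Dict

# Hypothetical quality scores (e.g. VMAF) for test encodes, keyed by
# bitrate in kbps and then by resolution height. Values are made up
# for illustration only.
SCORES: Dict[int, Dict[int, float]] = {
    500:  {360: 62.0, 720: 64.5, 1080: 66.0},
    1500: {360: 74.0, 720: 83.0, 1080: 86.5},
    4000: {360: 78.0, 720: 91.0, 1080: 96.0},
}


def best_resolution_per_rung(scores: Dict[int, Dict[int, float]]) -> Dict[int, int]:
    """Return {bitrate: height}, choosing the highest-scoring height per bitrate."""
    return {
        bitrate: max(by_height, key=by_height.get)
        for bitrate, by_height in scores.items()
    }


ladder = best_resolution_per_rung(SCORES)
print(ladder)
```

With these placeholder numbers every rung comes out at 1080p, which is exactly the situation that prompted the question: a selection driven only by the metric never produces a lower-resolution rung.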

Four practitioners offered the great advice shared below.

Alex Zambelli, Technical Product Manager at Discovery (and formerly with Hulu, iStreamPlanet, and Microsoft), set the stage with the following comment: “I think your article raises a great question. It’s a good reminder that per-title encoding results shouldn’t always be taken at face value – it’s important to also constrain them and root them in reality.”

The practitioners raised three general concerns: performance, DRM, and the need for conservatism when interpreting video quality metrics.

Performance Considerations

David Ronca, Director, Video Encoding at Facebook, and formerly Director, Encoding Technologies at Netflix (with multiple Emmys to his credit), stated, “It depends on your use-case. For example, if many of your users do not have 1080p display or hardware decoder that can support level 4.1 then the lower resolution is necessary.”

David and his teams have always been gracious in sharing the fruits of their research with posts like this on per-title encoding and this on high-quality video encoding at scale.

David’s performance concerns were echoed by Fabio Sonnati, Media Architect, Encoding and Streaming Specialist at NTT Data, who stated, “even if rare, there’s still the possibility that older computers and mobile phones may struggle in decoding 1080p. Often we take our decisions based on averages but it’s important to analyze the statistical distributions. So even if the average capabilities are well above the necessary to decode 1080 you still could have a 1% of clients with low capabilities that will not be able to decode properly even the lowest rendition. For a company like Youtube, that 1% is huge.”
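Fabio's point about averages versus distributions can be sketched in a few lines of Python. The client population below is synthetic and the function name `share_unable_to_play` is hypothetical; the only purpose is to show how a healthy average can hide a tail of clients that cannot play the lowest rung.

```python
from statistics import mean

# Hypothetical maximum height (in pixels) each client can decode smoothly.
# 1,000 synthetic clients: 990 capable of 2160p, 10 limited to 480p.
client_max_height = [2160] * 990 + [480] * 10


def share_unable_to_play(capabilities, lowest_rung_height):
    """Fraction of clients whose decode limit is below the lowest rung."""
    blocked = sum(1 for h in capabilities if h < lowest_rung_height)
    return blocked / len(capabilities)


# The average capability sits far above 1080p ...
print(mean(client_max_height))
# ... yet 1% of clients cannot play a ladder whose lowest rung is 1080p,
print(share_unable_to_play(client_max_height, 1080))
# while a 360p bottom rung leaves nobody out.
print(share_unable_to_play(client_max_height, 360))
```

In practice the capability data would come from player analytics rather than a hard-coded list, but the check itself is the same: look at the tail, not the mean.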

Fabio’s fabulous blog, which includes many foundational articles, is here.

Zambelli, who besides recent stints with Discovery and Hulu has plentiful experience streaming ultra-large-scale events such as several Olympics, Sunday Night Football (NBC), and NASCAR RaceBuddy 3D (TNT), also weighed in:

Not all devices can play 1080p video smoothly. Even in 2021, there are still devices out there that can’t play 1080p video without dropping frames. Their decoders may even be certified for an appropriate 1080p level (e.g. H.264 level 4.1), but the moment you layer an application on top of that decoder the device is no longer *just* decoding video, it’s now also making HTTP API calls, downloading segments, parsing data, decrypting AES, compositing graphics, etc.

And unlike DVD players and TVs of the past which were built to do only a limited set of tasks, OTT app platforms today are completely open-ended (e.g. Amazon doesn’t tell Fire TV app developers which features their app should or shouldn’t have). As a consequence of that open-endedness it is much more difficult to guarantee that every 1080p video will play smoothly on every supported device (in multiple codecs, no less).

720p playback does appear to be a much safer target for playback performance, but to be completely on the safe side I think including something <= 720×480@30p or 720×576@25p in an encoding ladder is a good idea.

You can read other Zambelli contributions at his blog, here.

DRM Requirements for Your Encoding Ladder

I’m not familiar with DRM at a day-to-day level, and DRM considerations came as a surprise to me. Again, Ronca raised the issue, stating, “If you are streaming studio content and the contract requires L1 DRM for HD then you will need lower resolutions. Netflix created ‘shadow bitrates’ to address these corner cases.”

Derek Prestegard, a video engineer with Disney, and previously at Encompass and Vudu, raised the same issue and explained it in more depth when I asked for detail:

Sure! Here’s the deal (and it may shock some). First off, the DRM implementation in popular browsers like Chrome and Firefox is typically Widevine Modular, which should be no surprise if you have any familiarity with the DRM space. On most platforms (Windows, macOS, Linux), this is implemented as “software DRM”, meaning the browser’s Content Decryption Module (CDM) does all of its cryptography on the host operating system using the CPU and RAM of the host.

It’s obfuscated for security, which is great (else there’d be no point), but hackers have historically had frequent success breaking into this and stealing content, either by dumping uncompressed video frames from memory or (worse) extracting the symmetric AES encryption keys from memory, allowing the DRMd assets to be decrypted offline.

Given that, we basically have to consider Widevine Level 3 (software DRM in this case) to be totally compromised. We don’t want HD or UHD content to be delivered to these endpoints because it can be easily stolen (and consistently is, just look at any torrent site with web rips etc). However, we still need to serve users on this platform!

The answer is to limit the resolution you deliver to software DRM endpoints. Typically we’d stream SD-only, or maybe 720p in certain cases. To make that useful, you need to encrypt those video tracks with a specific SD-only encryption key. This way even if hackers compromise the symmetric key used to encrypt the SD content, they can’t do anything with HD or higher content since it’s encrypted with unique keys.

This all requires that we actually encode and deliver at least some SD layers. In practice, most content still benefits from a few SD steps on the ladder (360p, 480p, 576p or whatever). That has the added benefit of being useful for people on really terrible or intermittently terrible connections 🙂
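To illustrate Prestegard's description, here is a minimal Python sketch of the idea: rungs are split into key tiers by resolution, and a software-DRM client only receives the SD tier. The 576p cutoff, the key IDs, and all names (`Rung`, `key_tier`, `key_id_for`, `playable_rungs`) are assumptions for illustration. Real limits come from the content license, and real key handling happens in the packager and license server, not in code like this.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Rung:
    height: int
    bitrate_kbps: int


# Illustrative policy values; real limits come from the content license.
SD_MAX_HEIGHT = 576
TIER_KEY_IDS = {"SD": "key-sd", "HD": "key-hd"}  # placeholder key IDs


def key_tier(rung: Rung) -> str:
    """Assign a rung to the SD or HD key tier by its height."""
    return "SD" if rung.height <= SD_MAX_HEIGHT else "HD"


def key_id_for(rung: Rung) -> str:
    """Look up the (placeholder) key ID used to encrypt this rung."""
    return TIER_KEY_IDS[key_tier(rung)]


def playable_rungs(ladder: List[Rung], hardware_drm: bool) -> List[Rung]:
    """Software-DRM clients only receive (and only get keys for) SD rungs."""
    if hardware_drm:
        return list(ladder)
    return [r for r in ladder if key_tier(r) == "SD"]


all_1080p = [Rung(1080, 1500), Rung(1080, 4000)]
mixed = [Rung(360, 500), Rung(576, 1200), Rung(1080, 4000)]

# An all-1080p ladder leaves a software-DRM client with nothing to play.
print(playable_rungs(all_1080p, hardware_drm=False))
# A ladder with SD rungs still serves that client.
print([r.height for r in playable_rungs(mixed, hardware_drm=False)])
```

The takeaway for ladder design is in the first `print`: if every rung is HD, the filtered list for software-DRM endpoints is empty.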

By way of background, Prestegard proved his DRM chops at Disney deploying multi-key 4K DRM solutions including PlayReady, Widevine, and FairPlay for DASH and HLS (while leveraging CMAF), one of the key technological achievements of the Disney+ platform. He’s also implemented cloud-native 4K HEVC encoding, including SDR, HDR10, and Dolby Vision for Movies Anywhere, bringing HDR to the masses.

Discovery’s Zambelli added the following:

As you know, DRM is still a requirement for pretty much all premium content, live and VOD. Most content licensing agreements stipulate not only the DRM systems that should be used (industry consensus is currently on Playready, Widevine, and Fairplay), but also the security levels required – and the level of playback quality permitted for each security level. For example, a common requirement in these agreements is that if a device implements only software-based DRM (e.g. Widevine L3 or PlayReady SL2000) and can’t guarantee that HDCP is enforced on HDMI outputs, then video playback quality must be restricted to SD only. Some contracts define SD as 480p, some as 576p, some are more liberal and maybe allow 720p – but in general, you’ll find some variation of this type of requirement in nearly all content licensing agreements.

What this means in practice is that if you want to maximize device reach and keep your users happy you need to make sure that even the least secure devices have something to play. Since the content licensing agreements tend to limit the least secure devices to SD, including at least 1 resolution that falls within that definition is a good idea.

Obviously, this is only a concern if you are protecting your content with DRM. From my perspective, however, since many consumers of the per-title comparison that I will be creating deploy with DRM, it was critical input for my planning.

Metrics Aren’t Perfect

Finally, Fabio Sonnati suggested that blindly trusting metrics like VMAF might not be the best idea. Here’s Fabio’s take:

It’s prudent to not blindly trust quality metrics or prediction algorithms in content-aware encoding and use some “seat-belts.” Quality metrics can have points of weakness, as seen yesterday when Netflix released CAMBI to compensate for VMAF’s inability to identify banding. Also, in general, a single score cannot account for local quality problems inside the same image, so when assessing at a very aggressive resolution/bitrate ratio I’d not trust VMAF or any other metric too much and lowering the resolution to work at a more comfortable operating point is IMHO more prudent.

Suppose you have undersea footage, that you know is quite difficult to encode at low bitrates. A number of small fishes move smoothly on a low energy background, the resolution is 1080p and the bitrate is very low because VMAF in a static, low contrast scene provides a higher score for 1080p even at a very low bitrate.

Probably the quality in the static part is more than sufficient, but the quality around the fish is not because the encoder is bitrate starving. The average score says that 1080p at such a low bitrate is good because the score is averaged between wide good parts and a relatively small bad part…but the final subjective MOS would not be good at all because the human eye easily spots the artifacts around the moving fishes. A more conservative approach would use a smaller resolution, i.e. 720p or 576p with more stable performance across different parts of the frame and across frames.

Indeed the road to a fully reliable and accurate quality assessment is still long, though the direction is right.
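Sonnati's fish example comes down to simple arithmetic, sketched below in Python. This is a deliberate simplification: the per-region scores and area shares are invented, and an area-weighted mean is only a stand-in for how a single pooled score can behave, not a description of how VMAF is actually computed.

```python
# Hypothetical per-region quality scores for one frame, with the share of
# the frame area each region covers. Numbers are invented for illustration.
regions = [
    {"name": "static background", "area": 0.92, "score": 95.0},
    {"name": "moving fish",       "area": 0.08, "score": 40.0},
]


def area_weighted_mean(regions):
    """Pool region scores into one number, weighted by frame area."""
    total_area = sum(r["area"] for r in regions)
    return sum(r["area"] * r["score"] for r in regions) / total_area


def worst_region(regions):
    """Return the region with the lowest score."""
    return min(regions, key=lambda r: r["score"])


pooled = area_weighted_mean(regions)
worst = worst_region(regions)
print(round(pooled, 1))
print(worst["name"], worst["score"])
```

With these numbers the pooled score is about 90.6 even though the region viewers actually watch scores 40. Reporting a minimum or low percentile alongside the mean is one way to add the kind of "seat-belt" Sonnati describes.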

As a result of this input, I changed my definition of the “ideal” encoding ladder to include 720p and 360p layers.

Thanks to all four respondents for taking the time to share their expertise with me and all of you readers. I owe you four an adult beverage next time we cross paths (hopefully soon).

Streaming Learning Center Where Streaming Professionals Learn to Excel
