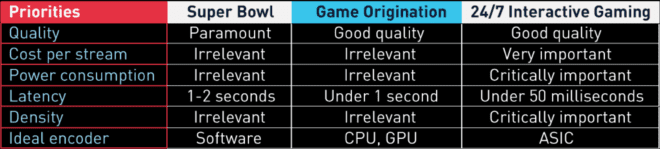

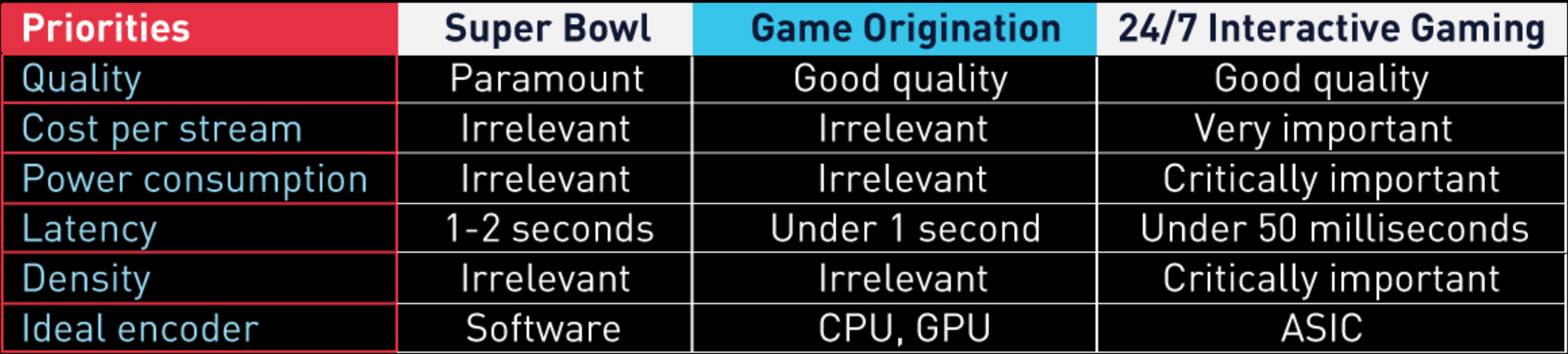

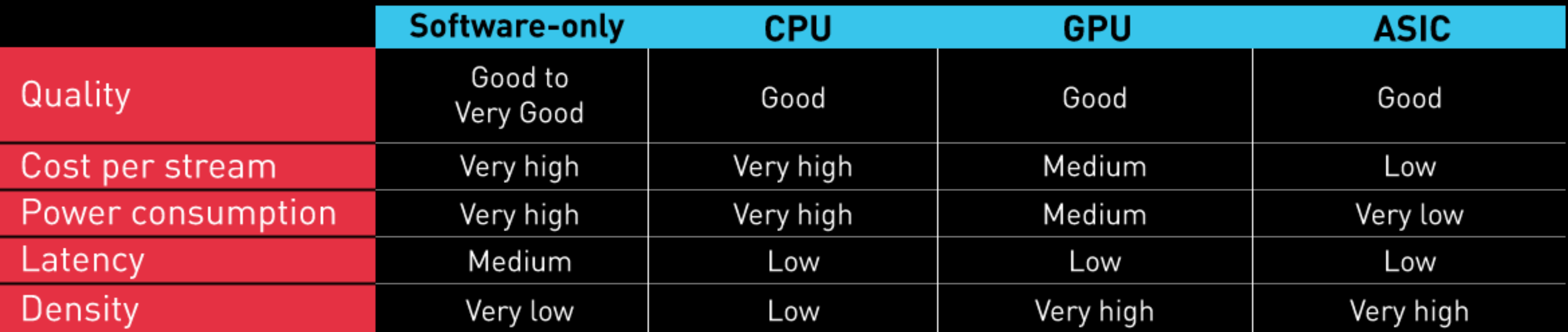

Four days on the show floor at IBC reinforced the idea that your encoding application dictates your choice of live encoder. In this article, I’ll review the types of encoders and the trade-offs associated with each, and identify the type that works best for a few selected encoding applications.

Types of Live Transcoders

There are generally four kinds of encoders: software-only encoding driven by the host CPU, and hardware encoding delivered by CPUs, GPUs, and ASICs. Unlike software-only encoders, the hardware encoders include codec-specific gates on the chip that perform the transcoding, relieving the host CPU of most transcoding chores.

All encoding approaches involve different trade-offs, and most of these are delineated in Table 1. As you can see in the table, the primary advantage of software encoding is quality. That is, with a software encoder running on a high-core-count computer, or in a distributed environment like the cloud, you can optimize quality beyond what hardware transcoders can attain.

The costs are just as clear. High CPU usage translates to increased cost, increased power consumption with the associated carbon emissions, and some increase in latency. A solution driven by high CPU count also isn’t very dense, so throughput is limited, particularly with more advanced codecs like HEVC or AV1.

In contrast, all hardware devices, whether CPU, GPU, or ASIC, deploy a limited set of encoding tools to deliver real-time throughput. This produces slightly lower quality than the best software-only solutions running on multiple-core systems, but it brings many operational and OPEX advantages, though these vary by device type.

CPUs are Central Processing Units, the chips that drive your computer system. They are relatively expensive devices, which boosts the cost per stream. CPUs are also very power-hungry; for example, the Intel Core i9-12900K CPU draws 150 watts, more than 21x the power of the NETINT T408 ASIC. Most computers have only one or two CPU slots, which limits overall throughput and translates to very low density.
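To make the power comparison above concrete, here is a minimal Python sketch of the arithmetic. It uses only the two figures quoted in the paragraph (150 watts and "more than 21x") to work out the ASIC draw they imply. The per-stream helper and the stream count are hypothetical illustrations, not measured values.

```python
# Figures quoted in the paragraph above.
CPU_WATTS = 150.0        # Intel Core i9-12900K draw, as stated in the article
MIN_POWER_RATIO = 21.0   # the CPU draws "more than 21x" the ASIC's power


def implied_max_asic_watts(cpu_watts: float, min_ratio: float) -> float:
    """Upper bound on the ASIC's draw implied by 'CPU draws more than min_ratio x'."""
    return cpu_watts / min_ratio


def watts_per_stream(device_watts: float, streams: int) -> float:
    """Power attributable to one stream when a device carries `streams` streams."""
    if streams <= 0:
        raise ValueError("streams must be positive")
    return device_watts / streams


asic_bound = implied_max_asic_watts(CPU_WATTS, MIN_POWER_RATIO)
print(f"Implied ASIC draw: under {asic_bound:.2f} W")

# HYPOTHETICAL stream count, for illustration only; substitute your own
# measured throughput for each device.
EXAMPLE_STREAMS = 4
cpu_per_stream = watts_per_stream(CPU_WATTS, EXAMPLE_STREAMS)
asic_per_stream = watts_per_stream(asic_bound, EXAMPLE_STREAMS)
print(f"CPU:  {cpu_per_stream:.2f} W per stream")
print(f"ASIC: under {asic_per_stream:.2f} W per stream")
```

With equal stream counts, the per-stream gap is simply the quoted device-level ratio. In practice the two devices would carry different numbers of streams, which is why density matters as much as raw wattage.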

Streaming Learning Center Where Streaming Professionals Learn to Excel
