I’m comparing cloud-based per-title VOD encoding technologies for a report I hope to publish soon. To create a level playing field, I set the following specifications:

- 2-second GOP

- 200% constrained VBR

- 2-second VBV buffer

It’s roughly equivalent to encoding with FFmpeg using these parameters:

`-b:v 2600k -maxrate 5200k -bufsize 5200k`
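For readers who script their own encodes, here’s a minimal Python sketch of how the three specifications map to FFmpeg rate-control options. It treats the 2-second VBV buffer as two seconds of the target bitrate, which is how 5200k follows from 2600k. It also assumes a 30 fps source and libx264, where `-g`, `-keyint_min`, and `-sc_threshold 0` are a common way to lock a fixed 2-second GOP. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ConstrainedVbrSpec:
    target_kbps: int       # average target bitrate (-b:v)
    constraint_pct: int    # peak as a percentage of target, e.g. 200 for 200% constrained VBR
    buffer_seconds: float  # VBV buffer length, measured at the *target* bitrate
    gop_seconds: float     # keyframe interval in seconds
    fps: float             # source frame rate (an assumption; the test specs don't state one)


def ffmpeg_rate_args(spec: ConstrainedVbrSpec) -> list:
    """Build FFmpeg (libx264) rate-control and GOP arguments for a constrained VBR encode."""
    maxrate_kbps = spec.target_kbps * spec.constraint_pct // 100
    bufsize_kbits = round(spec.target_kbps * spec.buffer_seconds)
    gop_frames = round(spec.gop_seconds * spec.fps)
    return [
        "-b:v", f"{spec.target_kbps}k",
        "-maxrate", f"{maxrate_kbps}k",
        "-bufsize", f"{bufsize_kbits}k",
        # Fixed GOP: a keyframe every gop_frames, no extra keyframes at scene changes
        "-g", str(gop_frames),
        "-keyint_min", str(gop_frames),
        "-sc_threshold", "0",
    ]


print(" ".join(ffmpeg_rate_args(ConstrainedVbrSpec(2600, 200, 2.0, 2.0, 30))))
```

With the same 2600k target, setting `constraint_pct` to 150 or 400 yields `-maxrate 3900k` or `-maxrate 10400k`.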

All of the cloud services let you specify these parameters when encoding with a predefined ladder, but several don’t let you set them with their per-title features; instead, they configure them automatically. Even the services that do let you set these parameters may exceed the target maximum bitrate, just as FFmpeg occasionally does.

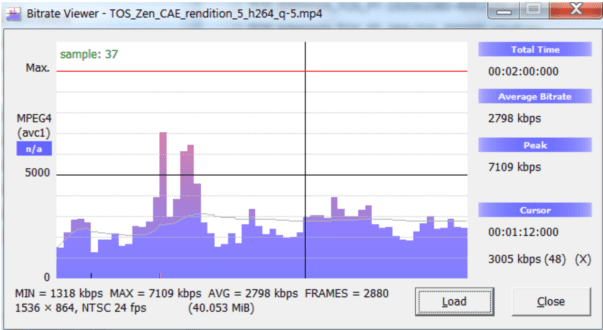

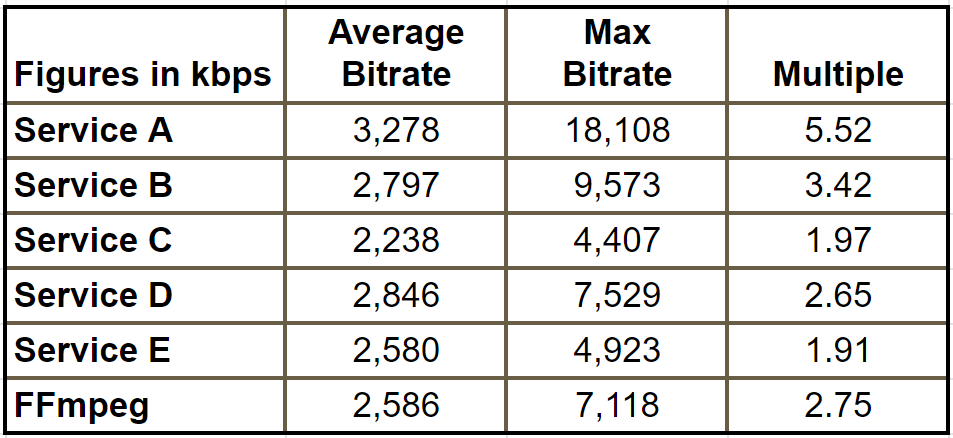

One particular file stressed the encoders more than the others, producing the results shown in Table 1. Services B and E let you specify the maximum bitrate for per-title encodes; E hit the target and B didn’t. FFmpeg, of course, also lets you set it, and it was well over the target.

Services A, C, and D don’t let you specify a maximum bitrate: Service A’s was very high, Service C’s was below the desired level, and Service D’s was a bit over. The bitrates all differ because these are per-title encodes and each service produces a unique encoding ladder.
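The article doesn’t say how the peak bitrates in Table 1 were measured, so what follows only illustrates one common approach: slide a fixed window across per-frame sizes and report the highest average bitrate. The 1-second window, the function names, and the idea of feeding it packet sizes (for example, the `size` values that `ffprobe -show_packets` reports) are all assumptions. Window length and packet ordering both change the number you get.

```python
def peak_window_kbps(frame_bytes: list, fps: float, window_s: float = 1.0) -> float:
    """Highest average bitrate (kbps) over any window of window_s seconds.

    Assumption: frame_bytes holds one compressed size per frame, in stream order.
    """
    n = max(1, round(window_s * fps))  # frames per window
    if len(frame_bytes) < n:
        raise ValueError("clip is shorter than one window")
    window = sum(frame_bytes[:n])
    best = window
    for i in range(n, len(frame_bytes)):
        window += frame_bytes[i] - frame_bytes[i - n]  # slide the window forward one frame
        best = max(best, window)
    return best * 8 / 1000 / (n / fps)  # bytes -> kilobits, divided by window duration


def meets_constraint(frame_bytes: list, fps: float, target_kbps: float,
                     constraint_pct: float, window_s: float = 1.0) -> bool:
    """True if the measured peak stays within constraint_pct of the target."""
    return peak_window_kbps(frame_bytes, fps, window_s) <= target_kbps * constraint_pct / 100


# Synthetic 30 fps clip: 1 s at about 2.4 Mbps followed by a 1 s spike at about 6 Mbps
frames = [10_000] * 30 + [25_000] * 30
print(peak_window_kbps(frames, 30))          # 6000.0
print(meets_constraint(frames, 30, 2600, 200))  # False
```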

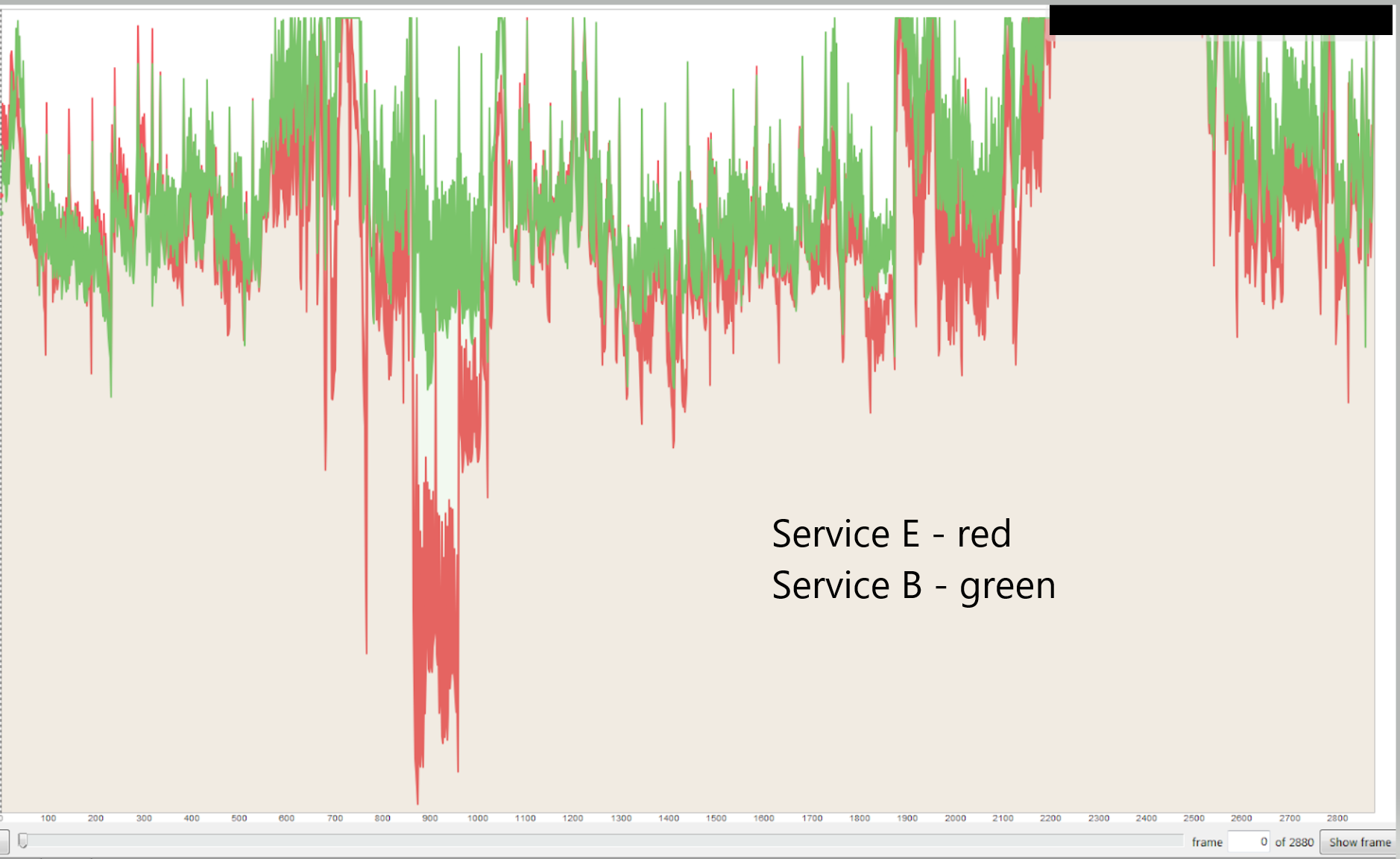

Service E met the 200% constraint, but its quality dropped in the challenging region. Service B exceeded the target, and its output quality was higher.

Back in the day, we deployed constrained VBR because it helped ensure smooth delivery over lower-performing connections. That may still be true for 4K/8K files encoded at 40 Mbps, but probably not for files in the 3-6 Mbps range delivered to typical target viewers.

If we no longer care about constraining the data rate, I should re-encode with Service E without any maximum bitrate limit. If we do, I should re-encode with Service B using increasingly constrained settings until it meets the limit or, if that’s not possible, caveat the quality scores of all services that didn’t meet the 200% target.
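Re-encoding Service B “with increasingly constrained settings” is essentially a search loop. Here’s a minimal sketch, assuming a hypothetical `encode_and_measure` callback that submits a job with a given maxrate setting and returns the measured peak bitrate. No real service API is implied, and the 10-point step is arbitrary.

```python
from typing import Callable, Optional, Tuple


def tighten_until_compliant(
    encode_and_measure: Callable[[int], float],  # hypothetical: encode at maxrate (kbps), return peak (kbps)
    target_kbps: int,
    constraint_pct: int,
    step_pct: int = 10,
    min_pct: int = 100,
) -> Optional[Tuple[int, float]]:
    """Lower the requested maxrate until the measured peak meets the constraint.

    Returns (maxrate_setting_kbps, measured_peak_kbps), or None if no setting
    down to min_pct of the target complies.
    """
    limit_kbps = target_kbps * constraint_pct / 100  # e.g. 5200 for 2600k at 200%
    pct = constraint_pct
    while pct >= min_pct:
        setting_kbps = target_kbps * pct // 100       # maxrate to request this round
        peak_kbps = encode_and_measure(setting_kbps)  # one full encode plus measurement
        if peak_kbps <= limit_kbps:
            return setting_kbps, peak_kbps
        pct -= step_pct  # tighten the request and try again
    return None  # nothing complied; caveat the quality scores instead


# Fake encoder for illustration: it overshoots any requested maxrate by 20%
print(tighten_until_compliant(lambda maxrate: maxrate * 1.2, 2600, 200))
```

Each iteration is a full cloud encode, so if peaks fall reliably as the setting falls, a bisection over the maxrate setting would need fewer jobs than a fixed step.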

Any thoughts? I’d appreciate answers to the following questions:

- Do you care about constrained data rates anymore?

- If so, what percentage constraint do you use? 150%? 200%? 400%?

- In a per-title service, how valuable is the ability to set a constraint level and have the service meet it?

You can answer as a comment or email me at janozer@gmail.com. All emailed answers will be kept confidential unless you agree otherwise. Thanks!

Streaming Learning Center Where Streaming Professionals Learn to Excel
