This article analyzes the codecs used by YouTube for 4K videos with millions of views, and the savings that AV1 and VP9 deliver over YouTube’s full encoding ladder.

This is the third in a series of articles written about which codecs YouTube uses. The first covers which codecs YouTube uses for high-volume 1080p videos. The second covers the codecs used by YouTube for 4K videos. This article details the codecs used by YouTube for 4K videos with millions of views, and the savings that AV1 and VP9 deliver over YouTube’s full encoding ladder.

Downloading File Lists with YouTube-dl.exe

When it comes to research you’re only as good as your research tools. The first two articles relied upon the YouTube Player feature Stats for Nerds to determine which codec YouTube used to encode a video. Then, in a LinkedIn comment on this post, Keunbaek Park, a video software engineer at Naver Corp in South Korea, mentioned a tool called youtube-dl.exe which I had never heard of before. Another poster, Raju Babannavar, provided a link to the tool and its documentation.

If you’re not familiar with youtube-dl.exe, here’s the description:

youtube-dl is a command-line program to download videos from YouTube.com and a few more sites. It requires the Python interpreter, version 2.6, 2.7, or 3.2+, and it is not platform-specific. It should work on your Unix box, on Windows or on macOS. It is released to the public domain, which means you can modify it, redistribute it or use it however you like.

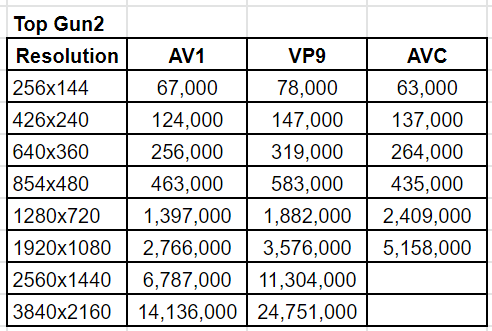

To collect the data described herein, I used youtube-dl to download a file that lists all files available for playback for each video along with codec, resolution, and data rate information. This is a link to a text file with this configuration information for the trailer for Top Gun 2 (we love you Goose). Table 1 summarizes this data.
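The article doesn't state the exact command used, but youtube-dl's `-F` (`--list-formats`) option prints this kind of per-video listing. As a rough sketch of the same idea in Python, the snippet below uses the `youtube_dl` package's `extract_info` call to fetch the format list and groups the video rungs by codec family. The helper names (`fetch_formats`, `codec_family`, `ladder_by_codec`) and the prefix-matching rules are illustrative assumptions, not the author's workflow.

```python
from collections import defaultdict


def fetch_formats(url):
    """Return the format dicts youtube-dl reports for a video (needs network access)."""
    import youtube_dl  # third-party package, imported lazily: pip install youtube_dl

    with youtube_dl.YoutubeDL({"quiet": True}) as ydl:
        info = ydl.extract_info(url, download=False)  # metadata only, no download
    return info.get("formats", [])


def codec_family(vcodec):
    """Map a vcodec string to AVC, VP9, or AV1 (assumed prefixes); None otherwise."""
    vcodec = (vcodec or "").lower()
    if vcodec.startswith("avc1"):
        return "AVC"
    if vcodec.startswith(("vp9", "vp09")):
        return "VP9"
    if vcodec.startswith("av01"):
        return "AV1"
    return None  # audio-only entries or codecs outside this comparison


def ladder_by_codec(formats):
    """Group (height, total bitrate in kbps) pairs by codec family, sorted by height."""
    ladder = defaultdict(list)
    for fmt in formats:
        family = codec_family(fmt.get("vcodec"))
        if family and fmt.get("height") and fmt.get("tbr"):
            ladder[family].append((fmt["height"], fmt["tbr"]))
    return {family: sorted(rungs) for family, rungs in ladder.items()}
```

Calling `ladder_by_codec(fetch_formats(url))` on a video URL should then give one list of rungs per codec, which is roughly the information summarized in Table 1.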

The big revelation here is that YouTube is producing AV1 output for high-volume 4K files, which I had guessed in the second codec article that it wasn’t doing. I checked ten 4K music videos with very high view counts, and every one of them had both VP9 and AV1 encodes throughout the encoding ladder. I also checked a 4K file with around 36,000 views and found only VP9 encodes. I didn’t check enough files to gauge when YouTube might start adding AV1 encodes, but all the ones with AV1 files had at least several million views.

The other surprise was that for some file iterations, AVC had the lowest data rate (compare at 480p). In the eleven files that I downloaded, this happened occasionally below 720p, but at 720p and 1080p AV1 was always the most efficient, followed by VP9 and AVC. To explain, YouTube uses per-title encoding when producing its files; I explained the schema used back in 2016 in this article. I have no idea how YouTube is deploying per-title with multiple codecs, but it’s not surprising that the technique produces unexpected results with some files.

I should note that I have no idea where YouTube is displaying these 4K AV1 files. I checked all the videos at 4K in both Chrome and Firefox on a Windows computer with Stats for Nerds, and all played VP9, not AV1. But that’s an issue for another day.

Bandwidth Savings by Codec

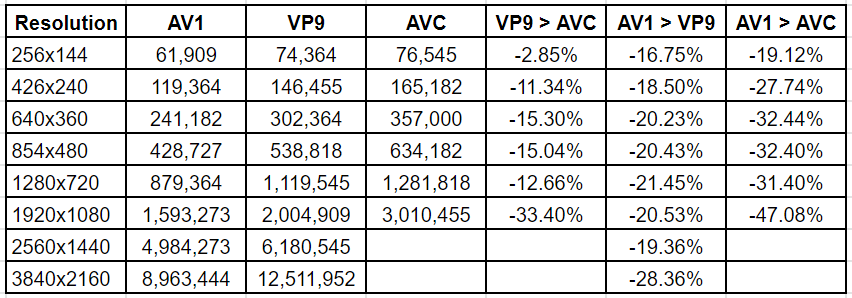

This Google sheet contains the file information for all 11 downloads plus the summary information and charts. If you spot any errors, please let me know at janozer@gmail.com. I also added columns that computed the following:

- VP9 savings as compared to AVC ((VP9-AVC)/AVC)

- AV1 savings as compared to VP9 ((AV1-VP9)/VP9)

- AV1 savings as compared to AVC ((AV1-AVC)/AVC)

Table 2 shows the results.
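To make the three savings columns concrete, here is a minimal sketch of the arithmetic, assuming each column divides the bitrate difference by the baseline codec's bitrate. The function name and the bitrates are hypothetical placeholders, not values from the sheet. With this convention a negative result means the newer codec used a lower bitrate.

```python
def relative_change(new_kbps, baseline_kbps):
    """Bitrate change of the newer codec relative to the baseline codec."""
    return (new_kbps - baseline_kbps) / baseline_kbps


# Hypothetical bitrates (kbps) for a single rung, for illustration only.
rung = {"AVC": 4000.0, "VP9": 2600.0, "AV1": 2000.0}

vp9_vs_avc = relative_change(rung["VP9"], rung["AVC"])
av1_vs_vp9 = relative_change(rung["AV1"], rung["VP9"])
av1_vs_avc = relative_change(rung["AV1"], rung["AVC"])

for label, value in [
    ("VP9 vs AVC", vp9_vs_avc),
    ("AV1 vs VP9", av1_vs_vp9),
    ("AV1 vs AVC", av1_vs_avc),
]:
    print(f"{label}: {value:+.1%}")
```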

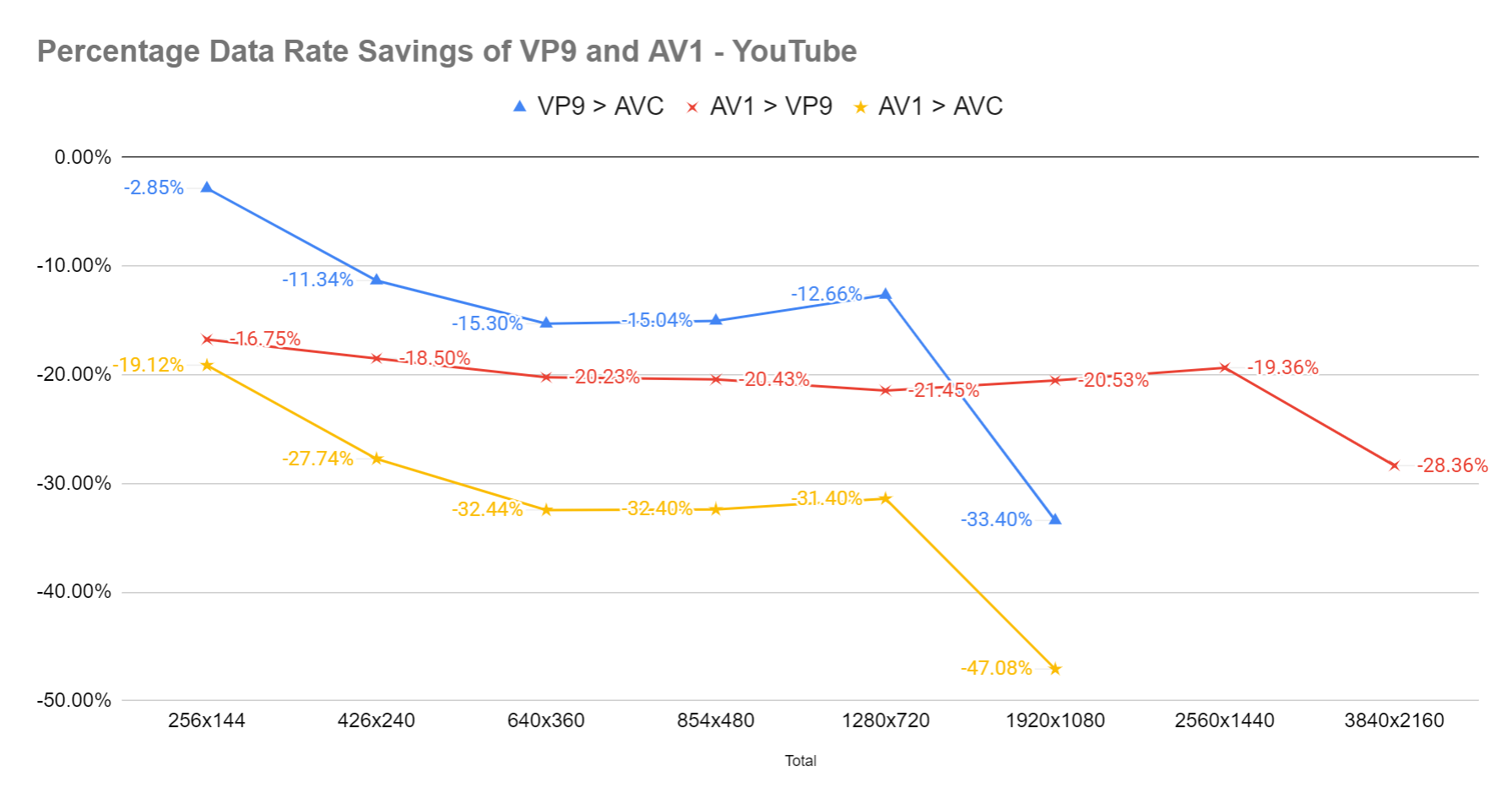

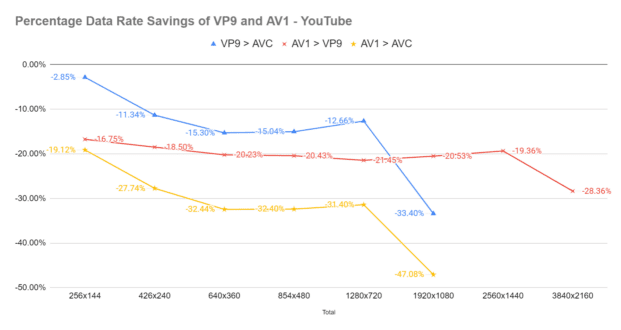

Figure 1 charts the comparative savings data. Here’s the color-coding.

- VP9 savings as compared to AVC ((VP9-AVC)/AVC) (Blue)

- AV1 savings as compared to VP9 ((AV1-VP9)/VP9) (Red)

- AV1 savings as compared to AVC ((AV1-AVC)/AVC) (Yellow)

Regarding the blue data (VP9 efficiency compared to AVC), the average results weren’t surprising at lower resolutions, given that AVC had a lower bitrate than VP9 at some lower rungs in some files. However, the savings over AVC really kicked in at 1080p, a rung that, at ~3.6 Mbps, is probably watched by a significant chunk of viewers.

Regarding the red data (AV1 efficiency compared to VP9), I’m reminded that the Alliance for Open Media delayed the release of AV1 until it delivered a 20% savings over VP9. The YouTube data tracks that figure pretty accurately through the encoding ladder, with a nice extra boost at 4K.

Regarding the yellow data (AV1 efficiency compared to AVC), this is the answer to the famous Clara Peller question, Where’s the Beef? If you’ve been looking for real-world data proving that AV1 can deliver substantial bandwidth savings over AVC, here it is.

A Bit About YouTube’s Per-Title Encoding

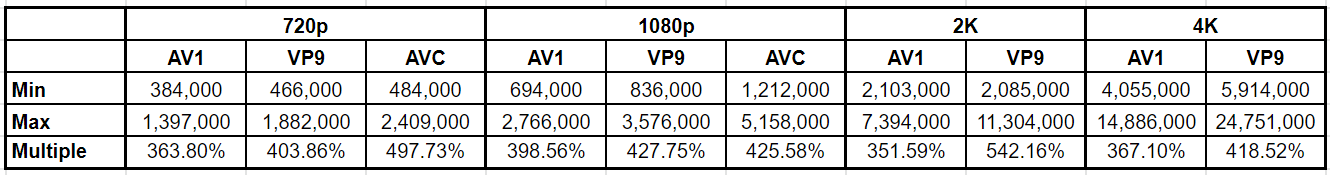

Let’s spend a moment on YouTube’s per-title encoding technology. First, have a look at Table 3, which shows the minimum and maximum data rates for all codecs deployed at 720p and above, plus the multiple from the minimum to the maximum value, which averages over 400%. Note that not all of the files were encoded at exactly these resolutions; many of the originals weren’t 16:9, so YouTube scaled to the target width and interpolated the height (so a 3840×1606 input converted to 1280×536).
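The two calculations in the paragraph above can be sketched as follows. Rounding the scaled height to the nearest even number is an assumption that happens to reproduce the 1280×536 example; YouTube's actual rounding rule isn't documented here. The function names are illustrative, and the bitrates in the usage line are hypothetical.

```python
def scaled_height(src_width, src_height, target_width):
    """Scale the height to match target_width, rounded to the nearest even number."""
    exact = src_height * target_width / src_width
    return 2 * round(exact / 2)  # assumed even-height rounding


def range_multiple_percent(min_kbps, max_kbps):
    """Express the maximum data rate as a percentage of the minimum."""
    return max_kbps / min_kbps * 100


# The non-16:9 example from the text: 3840x1606 scaled to a width of 1280.
print(scaled_height(3840, 1606, 1280))

# Hypothetical minimum and maximum bitrates (kbps) for one rung across files.
print(range_multiple_percent(1000.0, 4000.0))
```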

Still, the range in data rates should provide a nice reality check on your encoding ladder and presents a very convincing case that if you’re not currently using per-title encoding you definitely should be.

Second, I checked some encodes where the bitrate for H.264 was lower than VP9 and/or AV1; in essence, the man-bites-dog outcomes. In all cases, AVC clearly exhibited the lowest quality, though the differences were most clear at ~200+ magnification levels. You can see this in the PDF file available here, which identifies the file and then shows the frames from the AV1, VP9, and then AVC encodes.

Every AI-driven per-title schema is going to miss occasionally; as noted, YouTube’s algorithm produced some unexpected results at lower rungs, where the data rate difference was minimal, but seemed to hit its stride at 720p and above.

Streaming Learning Center Where Streaming Professionals Learn to Excel

Dear Jan, you mentioned

“I should note that I have no idea where YouTube is displaying these 4K AV1 files. I checked all the videos at 4K in both Chrome and Firefox on a Windows computer with Stats for Nerds, and all played VP9, not AV1. But that’s an issue for another day.”

I noticed I can see AV1 on my home’s new 4K Samsung Neo OLED TV. I can send you a screenshot photo if you are interested.

Alex – thanks for this – a screen shot will be great – I’ll add it to the article (assuming you don’t mind).

Please send to janozer@gmail.com.

Thanks!

Jan

Jan, not sure if you know about it, but Paul Pacifico’s donationware program Shutter Encoder, available for Linux, macOS, and Windows from , is an easy to use GUI front end for ffmpeg and yt-dlp, which is a fork of youtube-dl “with additional features and fixes”.

I’ve found the program to be quite useful and recommend it highly, especially for Windows users some of whom may not be especially conversant with the command line.

Frank – thanks for this – here’s a link to the program (which I haven’t tested but may in the future).

https://www.shutterencoder.com/en/

Jan