It’s a well-known and oft-repeated truism that codecs operate more efficiently at higher resolutions. The question is, is the truism really true? It turns out that it is, at least for the clip and codecs tested below.

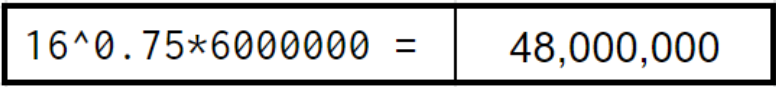

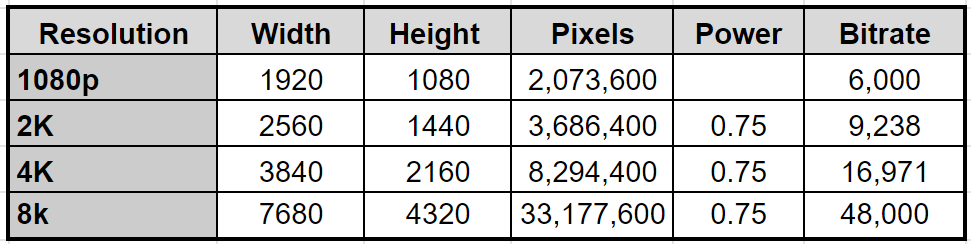

To lend numbers to our truism and focus the issue, the basic question is this. 8K video has 16 times the pixels of 1080p video. So, if you need to encode 1080p video at 6 Mbps for top-rung quality, do you need to encode 8K video at a bandwidth-busting 96 Mbps (16 × 6)?

The Power of .75 Rule

There’s actually a rule for this called the Power of .75 Rule, which I learned from Ben Waggoner, then with Microsoft, now the mad scientist at Amazon Prime who makes your videos look so good. Here’s what he said many years ago.

Using the old “power of 0.75” rule, content that looks good with 500 Kbps at 640×360 would need ((1280×720)/(640×360))^0.75 × 500 = 1414 Kbps at 1280×720 to achieve roughly the same quality.

Working with this formula, I modified the numbers for the 16x increase in pixels from 1080p to 8K, plugged this data into Google Sheets, and got this result. To achieve the same quality as a 6 Mbps 1080p file at 8K, you’d have to encode to 48 Mbps.

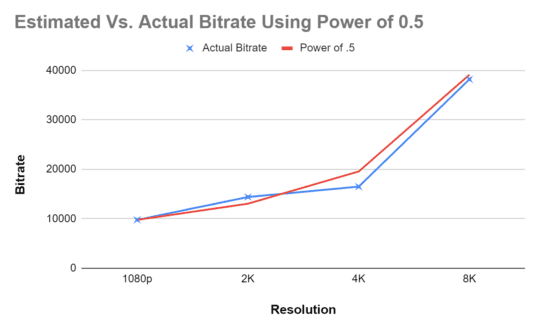
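
For readers who would rather script the rule than use a spreadsheet, here is a minimal Python sketch of the same arithmetic. The function name `power_rule_bitrate` and its parameters are illustrative choices, not anything from Waggoner's original post.

```python
def power_rule_bitrate(base_kbps, base_res, target_res, exponent=0.75):
    """Scale a known-good bitrate to a new resolution with the power rule.

    base_res and target_res are (width, height) tuples.
    """
    base_pixels = base_res[0] * base_res[1]
    target_pixels = target_res[0] * target_res[1]
    # Raise the pixel-count ratio to the exponent, then scale the bitrate.
    return base_kbps * (target_pixels / base_pixels) ** exponent


# Waggoner's example: 500 Kbps at 640x360 scaled up to 1280x720.
print(round(power_rule_bitrate(500, (640, 360), (1280, 720))))      # 1414

# The question in this article: 6 Mbps at 1080p scaled up to 8K.
print(round(power_rule_bitrate(6000, (1920, 1080), (7680, 4320))))  # 48000
```

With an exponent of 1.0 the same function returns the naive 16x answer (96 Mbps), which makes it easy to see how much the rule saves.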

However, Waggoner later commented that the more efficient the codec, the lower the power. To experiment with this and to set up the discussion to come, I created this chart, which predicts the bitrate required at 2K, 4K, and 8K to match the quality of 6 Mbps 1080p.
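
A chart like this is easy to regenerate. The sketch below is illustrative only: the exponents swept (0.75, 0.6, 0.5) are arbitrary picks rather than the values used in the chart, and it assumes that 2K means 2560×1440, which the text does not state.

```python
# Assumed frame sizes; the 2K entry in particular is a guess at what was tested.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "2K": (2560, 1440),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def predicted_ladder(base_kbps, exponent, base="1080p"):
    """Predict the bitrate at each resolution from a base-resolution bitrate."""
    base_w, base_h = RESOLUTIONS[base]
    base_pixels = base_w * base_h
    return {
        name: base_kbps * ((w * h) / base_pixels) ** exponent
        for name, (w, h) in RESOLUTIONS.items()
    }


# Sweep a few exponents, starting from 6 Mbps at 1080p.
for exponent in (0.75, 0.6, 0.5):
    row = predicted_ladder(6000, exponent)
    print(exponent, {name: round(kbps) for name, kbps in row.items()})
```

Lower exponents flatten the curve quickly: at 0.5, the predicted 8K bitrate is half of what the .75 rule predicts.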

Test Procedure

To test our theory, we first needed to set the quality of the 6 Mbps 1080p clip, which I arbitrarily targeted at 95 VMAF. Then I started encoding using a simple CRF-based string for x265 and immediately ran into an interesting technical issue.

To explain, the original thought was to create 1080p/2K/4K/8K outputs with 95 VMAF scores from the same source file and identify the bits per pixel for each resolution. As you probably know, bits per pixel measures the amount of data per pixel in the encoded file (see more here). If the bits per pixel for files A and B are 0.1 and 0.05, respectively, file B is applying half the bits per pixel of file A. If codecs operate more efficiently at higher resolutions, you would expect the data rate to increase at higher resolutions (of course), but the bits per pixel to decrease.
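
As a quick illustration, bits per pixel is commonly computed as the bitrate divided by the number of pixels delivered per second. The sketch below assumes that definition (width × height × frame rate in the denominator) and uses a hypothetical helper named `bits_per_pixel`; the bitrates are the round numbers from the opening question, not measured results.

```python
def bits_per_pixel(bitrate_kbps, width, height, fps):
    """Bits spent per pixel per frame, given a bitrate in kilobits per second."""
    return bitrate_kbps * 1000 / (width * height * fps)


# 6 Mbps at 1080p versus the 48 Mbps that the .75 rule predicts for 8K,
# both at 50 fps.
bpp_1080p = bits_per_pixel(6000, 1920, 1080, 50)
bpp_8k = bits_per_pixel(48000, 7680, 4320, 50)

print(f"1080p: {bpp_1080p:.4f} bpp")
print(f"8K:    {bpp_8k:.4f} bpp")
print(f"8K uses {bpp_8k / bpp_1080p:.0%} of the 1080p bits per pixel")
```

Because 8K has 16 times the pixels but the predicted bitrate is only 8 times higher, the 8K file gets half the bits per pixel.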

Here’s the problem that I ran into. When you create an encoding ladder from an 8K source file, you start with 8K and create lower resolution rungs. When you compute VMAF, you compare the lower-resolution rungs to the 8K source file according to this Netflix post. The consequence of this is that lower-resolution rungs can never achieve the quality of higher-resolution rungs because detail is lost when scaling to the lower resolution.

When you scale a lower-resolution rung back to the source resolution, the lost detail lowers your VMAF score, irrespective of the bitrate or bits per pixel. There’s absolutely no way to produce a 1080p file that achieves 95 VMAF when compared to an 8K source. The only way to get the same VMAF score for files encoded at different resolutions is to compare each encoded file to a source file at the same resolution.

In a perfect world, you’d have four cameras shooting the exact same content at different resolutions and encode from there. What I did was create 1080p/2K/4K/8K source files from the original source and produce the encoded files from those. That way, when I compared the encoded file to the source, I was comparing it to a source at the same resolution. I also created an 8K source to have an 8-bit file to encode and serve as the source for 8K VMAF comparisons.
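
One way to set up this kind of same-resolution comparison with FFmpeg is sketched below. It only builds and prints the command lines. Several details are assumptions rather than anything stated above: the Lanczos scaler, the lossless x264 settings for the intermediate source, the use of FFmpeg's `libvmaf` filter (which requires a build with libvmaf enabled), and every file name other than `rp_8K_source.mp4`.

```python
import shlex


def scale_source_cmd(src, dst, width, height,
                     codec_args=("-c:v", "libx264", "-crf", "0")):
    """Build an FFmpeg command that makes a lower-resolution source file.

    The lossless x264 settings are placeholders, not the settings used here.
    """
    vf = f"scale={width}:{height}:flags=lanczos"
    return ["ffmpeg", "-i", src, "-vf", vf, *codec_args, dst]


def vmaf_cmd(encoded, reference, log_path="vmaf.json"):
    """Build an FFmpeg command that scores an encode against its source.

    libvmaf takes the encoded file as the first input and the reference as
    the second. Both must share a resolution, so no scaling is applied.
    """
    graph = f"[0:v][1:v]libvmaf=log_fmt=json:log_path={log_path}"
    return ["ffmpeg", "-i", encoded, "-i", reference,
            "-lavfi", graph, "-f", "null", "-"]


# Hypothetical file names for the 1080p rung.
print(shlex.join(scale_source_cmd("rp_8K_source.mp4", "rp_1080p_source.mp4", 1920, 1080)))
print(shlex.join(vmaf_cmd("rp_1080p_crf30.mp4", "rp_1080p_source.mp4")))
```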

Test Clip

The test clip was an 8K HDR 50i soccer clip contributed by Harmonic. It contains lots of fine detail (the crowd) and high motion (the players) and proved a very challenging clip to encode.

I started by converting to SDR because VMAF hasn’t been tuned for HDR yet. Plus, I didn’t want to look at dull, washed-out frames on my SDR computer monitor. I converted from HDR to SDR in FFmpeg using the following command string.

ffmpeg -ss 00:00:10 -i RiverPlate.mp4 -vf zscale=t=linear:npl=100,format=gbrpf32le,zscale=p=bt709,tonemap=tonemap=hable:desat=0,zscale=t=bt709:m=bt709:r=tv,format=yuv420p -t 00:00:02 rp_8K_source.mp4

I can’t find the site I grabbed this from but if you Google “HDR to SDR FFmpeg” you’ll find multiple options. This was the second configuration that I tried, and it worked well (the first was too garish). I didn’t experiment with the options in the command string, but the documentation is here.

HEVC Results

I used this FFmpeg command string to encode with x265.

ffmpeg.exe -i rp_8K_source.mp4 -c:v libx265 -preset veryslow -crf 35 riverplate_SDR_8K_35crf.mp4
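
The CRF value that lands on 95 VMAF differs at each resolution, so it has to be found by trial and error. The article doesn't describe how the search was done; the sketch below shows one possible approach, a binary search over the CRF range. It assumes that VMAF never increases as CRF increases, and `measure_vmaf` is a hypothetical callback that you would implement to encode at a given CRF and return the resulting score.

```python
def find_crf_for_vmaf(measure_vmaf, target=95.0, lo=0, hi=51):
    """Return the highest CRF whose VMAF still meets the target, or None.

    measure_vmaf(crf) must return the VMAF score of an encode at that CRF.
    Assumes the score does not increase as CRF increases.
    """
    best = None
    while lo <= hi:
        mid = (lo + hi) // 2
        if measure_vmaf(mid) >= target:
            best = mid      # good enough; try a higher CRF (fewer bits)
            lo = mid + 1
        else:
            hi = mid - 1    # quality too low; try a lower CRF (more bits)
    return best


# Stand-in for a real encode-and-measure step, for demonstration only.
def fake_measure(crf):
    return 100 - 0.5 * crf


print(find_crf_for_vmaf(fake_measure))  # 10
```

Each probe costs a full encode plus a VMAF run, so a binary search over 0 to 51 needs at most six probes per resolution.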

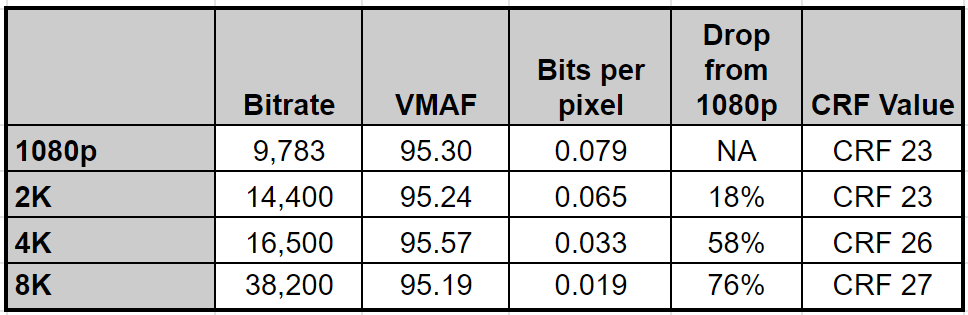

Table 3 shows the bitrates and bits-per-pixel values needed to maintain 95 VMAF at the resolutions shown. Notably, the bits-per-pixel values drop by 76% from 1080p to 8K.

The 1080p bitrate of 9,783 kbps is obviously pretty high. Three factors contribute to this:

- 95 VMAF is a very high quality target. If I dropped the target to 93 VMAF the bitrate would drop to a more reasonable 6,464 kbps.

- The soccer test clip contains lots of high motion and fine detail.

- The soccer test clip is 50 fps as opposed to 30 or 24.

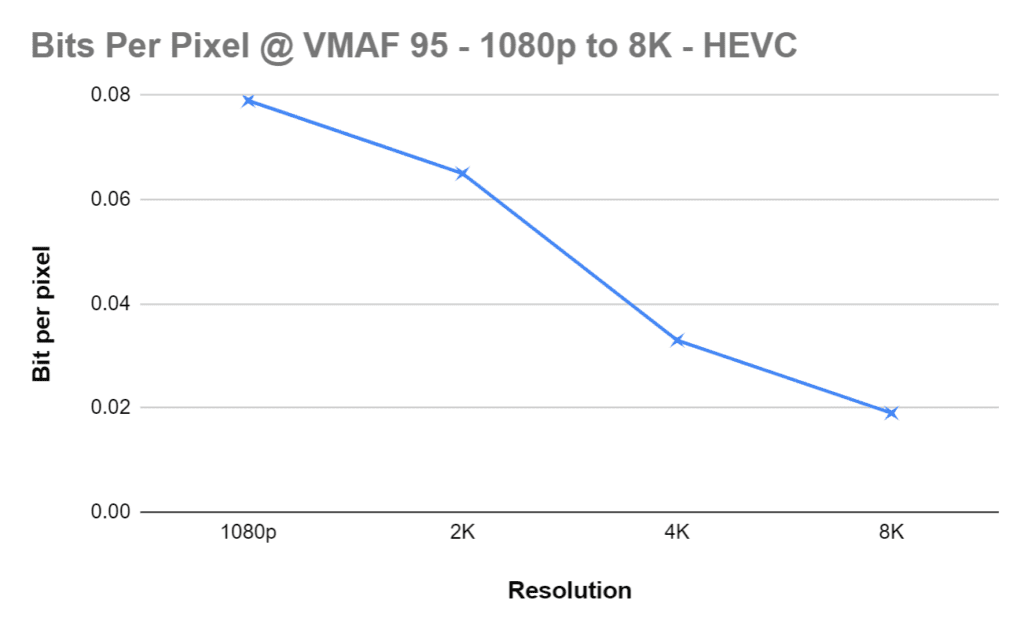

Figure 1 shows how the bits per pixel drops from 1080p to 8K, confirming the truism.

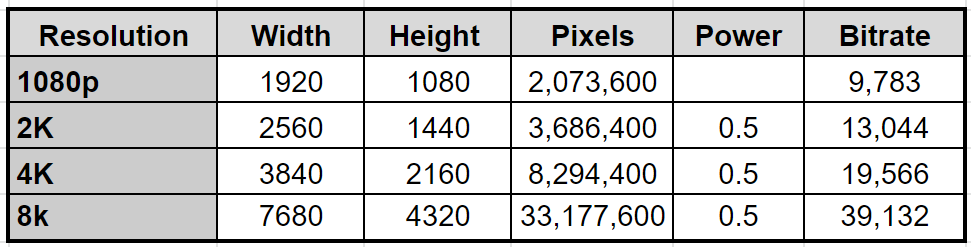

Let’s go back to our Power of .75 table, plug in the actual 1080p bitrate, and experiment with different power values to match the actual data. It looks like 0.5 is a good fit.

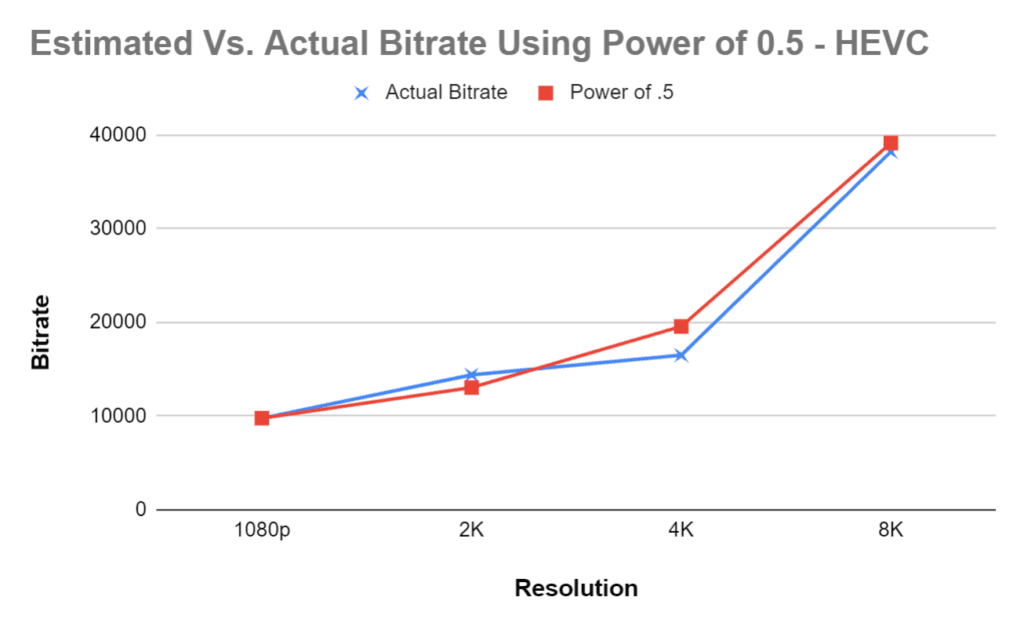

Figure 2 is a graph of the bitrate estimated with the Power of .5 Rule and the actual results, which align fairly closely.
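
As a rough cross-check on the fitted exponent, you can back one out from the 76% bits-per-pixel drop reported above. This is a two-point estimate using only the 1080p and 8K endpoints, not the fit across all four resolutions, and the function names are illustrative.

```python
import math


def fitted_exponent(bitrate_ratio, pixel_ratio):
    """Solve bitrate_ratio = pixel_ratio ** exponent for the exponent."""
    return math.log(bitrate_ratio) / math.log(pixel_ratio)


def exponent_from_bpp_drop(bpp_drop, pixel_ratio):
    """Derive the exponent from a fractional drop in bits per pixel.

    If bits per pixel falls by bpp_drop while the pixel count grows by
    pixel_ratio, the bitrate grows by pixel_ratio * (1 - bpp_drop).
    """
    bitrate_ratio = pixel_ratio * (1.0 - bpp_drop)
    return fitted_exponent(bitrate_ratio, pixel_ratio)


# 8K has 16 times the pixels of 1080p; bits per pixel dropped by 76%.
print(round(exponent_from_bpp_drop(0.76, 16), 2))  # 0.49
```

That lands very close to the 0.5 chosen by eye. Run in the other direction, an exponent of exactly 0.5 implies a 75% drop in bits per pixel over a 16x increase in pixels.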

AV1 Results

Let’s go through the same cycle with AV1. Here’s the command string.

ffmpeg.exe -i rp_8K_source.mp4 -c:v libaom-av1 -cpu-used 3 -crf 37 -b:v 0 riverplate_SDR_8K_37crf.mp4

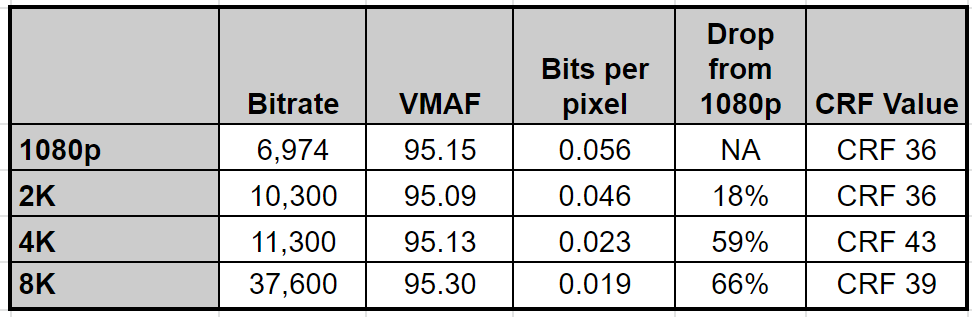

Table 5 shows the bitrates and bits-per-pixel values needed to achieve 95 VMAF at the tested resolutions, with another very significant drop in bits-per-pixel value from 1080p to 8K.

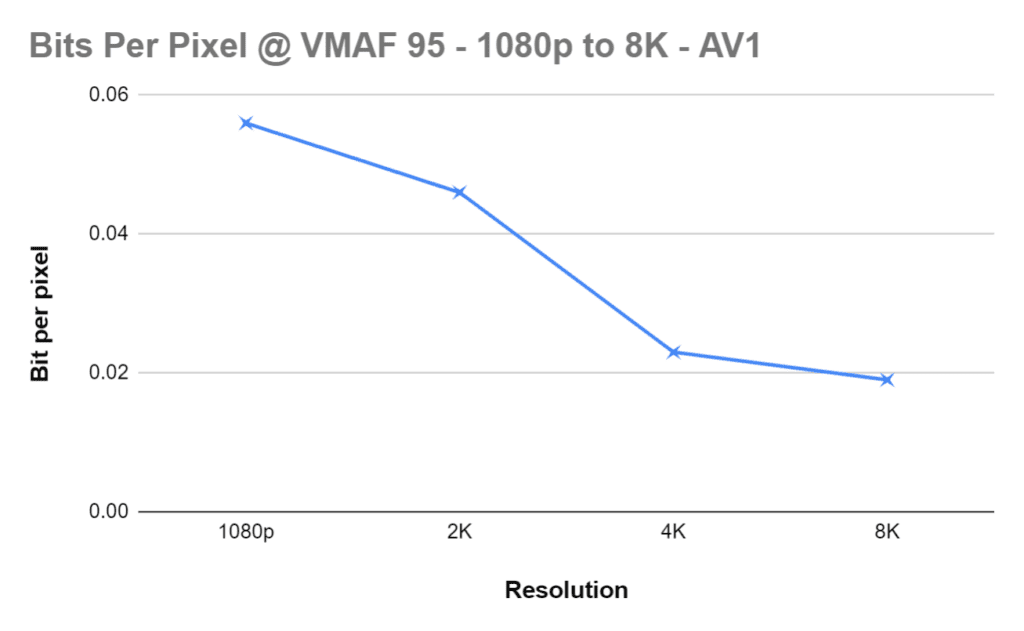

Figure 3 shows how the bits per pixel drops at higher resolutions, again confirming our opening truism.

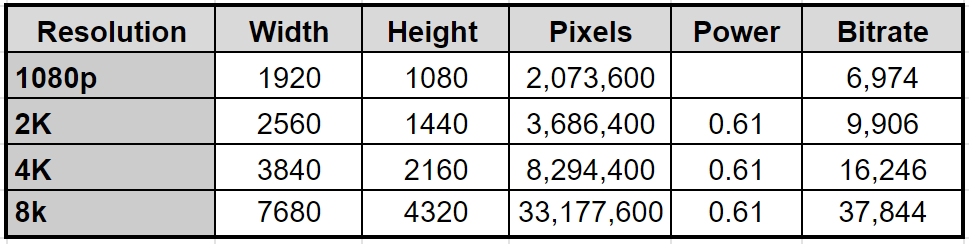

Now let’s recompute the “Rule” table with the new data. The Power of .61 seems the closest.

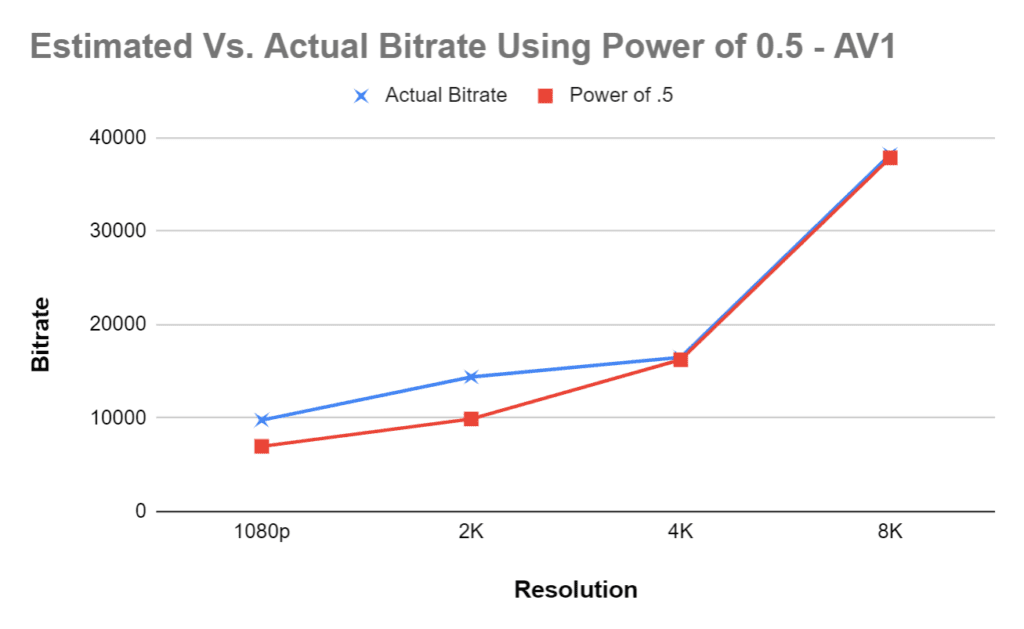

Figure 4 shows how the estimated and actual results compare. Again, they align reasonably closely, confirming the logic underlying the rule.

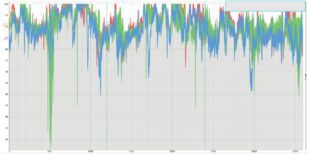

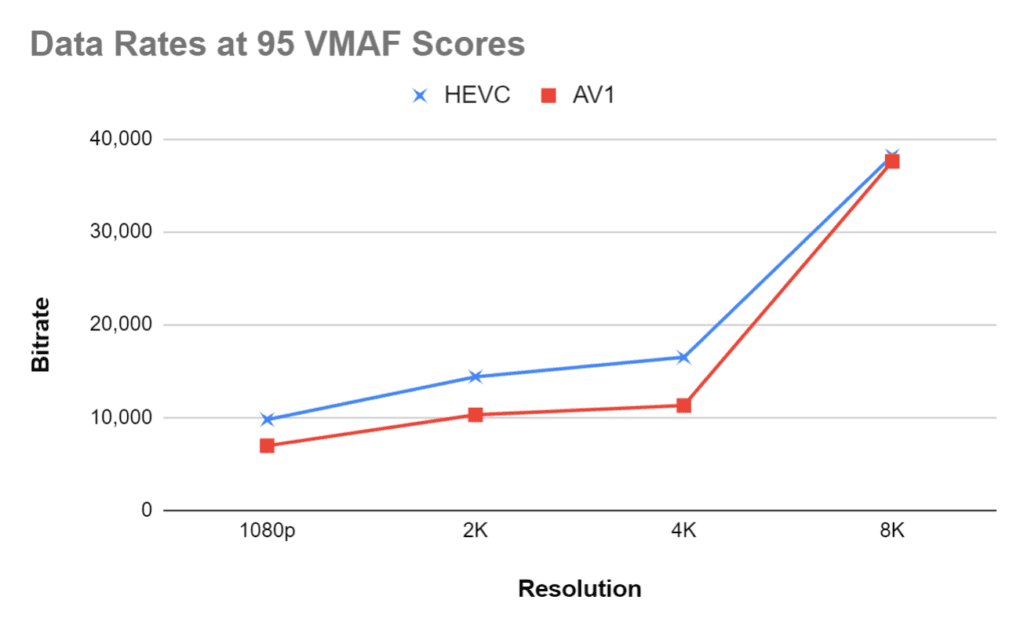

The only fly in the ointment is that you’d expect AV1 to have a lower power than HEVC because it’s a more powerful codec in most cases. That said, as seen in Figure 5, though AV1 is more efficient than HEVC at the first three resolutions, they end up surprisingly close at 8K.

Conclusions

Let’s not get off track and argue about which is the more powerful codec. Here are the conclusions we can reasonably draw from this data.

- The truism is true. Codecs operate more efficiently at higher resolutions, so the bits-per-pixel values needed to maintain the same quality level drop significantly from 1080p to 8K. You don’t need 16 times the data rate just because there are 16 times more pixels.

- For those seeking a rule of thumb, the power rule seems to work well, but the right exponent varies (0.5 fit the x265 results here and 0.61 fit AV1, rather than 0.75), and you’ll have to test many more files to find the right exponent for each codec.

Streaming Learning Center Where Streaming Professionals Learn to Excel
